diff --git a/notebooks/unit6/requirements-unit6.txt b/notebooks/unit6/requirements-unit6.txt

index 1c8ffaa..cf5db2d 100644

--- a/notebooks/unit6/requirements-unit6.txt

+++ b/notebooks/unit6/requirements-unit6.txt

@@ -1,4 +1,4 @@

-stable-baselines3[extra]

+stable-baselines3==2.0.0a4

huggingface_sb3

-panda_gym==2.0.0

-pyglet==1.5.1

+panda-gym

+huggingface_hub

\ No newline at end of file

diff --git a/units/en/_toctree.yml b/units/en/_toctree.yml

index e844292..26327a5 100644

--- a/units/en/_toctree.yml

+++ b/units/en/_toctree.yml

@@ -159,7 +159,7 @@

- local: unit6/advantage-actor-critic

title: Advantage Actor Critic (A2C)

- local: unit6/hands-on

- title: Advantage Actor Critic (A2C) using Robotics Simulations with PyBullet and Panda-Gym 🤖

+ title: Advantage Actor Critic (A2C) using Robotics Simulations with Panda-Gym 🤖

- local: unit6/conclusion

title: Conclusion

- local: unit6/additional-readings

diff --git a/units/en/unit6/hands-on.mdx b/units/en/unit6/hands-on.mdx

index b6c063b..d1035ae 100644

--- a/units/en/unit6/hands-on.mdx

+++ b/units/en/unit6/hands-on.mdx

@@ -1,4 +1,4 @@

-# Advantage Actor Critic (A2C) using Robotics Simulations with PyBullet and Panda-Gym 🤖 [[hands-on]]

+# Advantage Actor Critic (A2C) using Robotics Simulations with Panda-Gym 🤖 [[hands-on]]

-Now that you've studied the theory behind Advantage Actor Critic (A2C), **you're ready to train your A2C agent** using Stable-Baselines3 in robotic environments. And train two robots:

-

-- A spider 🕷️ to learn to move.

+Now that you've studied the theory behind Advantage Actor Critic (A2C), **you're ready to train your A2C agent** using Stable-Baselines3 in a robotic environment. You'll train:

- A robotic arm 🦾 to move to the correct position.

-We're going to use two Robotics environments:

-

-- [PyBullet](https://github.com/bulletphysics/bullet3)

+We're going to use:

- [panda-gym](https://github.com/qgallouedec/panda-gym)

- -

-

To validate this hands-on for the certification process, you need to push your two trained models to the Hub and get the following results:

-- `AntBulletEnv-v0` get a result of >= 650.

-- `PandaReachDense-v2` get a result of >= -3.5.

+- `PandaReachDense-v3` get a result of >= -3.5.

To find your result, [go to the leaderboard](https://huggingface.co/spaces/huggingface-projects/Deep-Reinforcement-Learning-Leaderboard) and find your model, **the result = mean_reward - std of reward**
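As a rough illustration of the formula above, the sketch below computes `mean_reward - std` from a list of episode returns and compares it with the `-3.5` threshold. The reward values are made up, and using the population standard deviation is an assumption (it matches NumPy's default `np.std`); the leaderboard's exact computation is not shown in this document.

```python
from statistics import fmean, pstdev


def leaderboard_result(episode_rewards):
    """Return the mean reward minus the standard deviation of the rewards."""
    mean_reward = fmean(episode_rewards)
    std_reward = pstdev(episode_rewards)  # assumption: population std
    return mean_reward - std_reward


# Hypothetical episode returns, for illustration only
rewards = [-0.25, -0.75, -0.25, -0.75]
result = leaderboard_result(rewards)
print(f"result = {result:.2f}, passes threshold: {result >= -3.5}")
```

A low standard deviation matters as much as a high mean here: an agent with erratic episodes is penalized even if its average reward looks good.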

-**If you don't find your model, go to the bottom of the page and click on the refresh button.**

-

For more information about the certification process, check this section 👉 https://huggingface.co/deep-rl-course/en/unit0/introduction#certification-process

**To start the hands-on click on Open In Colab button** 👇 :

@@ -37,11 +27,10 @@ For more information about the certification process, check this section 👉 ht

[](https://colab.research.google.com/github/huggingface/deep-rl-class/blob/master/notebooks/unit6/unit6.ipynb)

-# Unit 6: Advantage Actor Critic (A2C) using Robotics Simulations with PyBullet and Panda-Gym 🤖

+# Unit 6: Advantage Actor Critic (A2C) using Robotics Simulations with Panda-Gym 🤖

### 🎮 Environments:

-- [PyBullet](https://github.com/bulletphysics/bullet3)

- [Panda-Gym](https://github.com/qgallouedec/panda-gym)

### 📚 RL-Library:

@@ -54,12 +43,13 @@ We're constantly trying to improve our tutorials, so **if you find some issues i

At the end of the notebook, you will:

-- Be able to use the environment librairies **PyBullet** and **Panda-Gym**.

+- Be able to use **Panda-Gym**, the environment library.

- Be able to **train robots using A2C**.

- Understand why **we need to normalize the input**.

- Be able to **push your trained agent and the code to the Hub** with a nice video replay and an evaluation score 🔥.

## Prerequisites 🏗️

+

Before diving into the notebook, you need to:

🔲 📚 Study [Actor-Critic methods by reading Unit 6](https://huggingface.co/deep-rl-course/unit6/introduction) 🤗

@@ -99,30 +89,31 @@ virtual_display.start()

```

### Install dependencies 🔽

-The first step is to install the dependencies, we’ll install multiple ones:

-- `pybullet`: Contains the walking robots environments.

+We’ll install several dependencies:

+

+- `gymnasium`

- `panda-gym`: Contains the robotics arm environments.

-- `stable-baselines3[extra]`: The SB3 deep reinforcement learning library.

+- `stable-baselines3`: The SB3 deep reinforcement learning library.

- `huggingface_sb3`: Additional code for Stable-baselines3 to load and upload models from the Hugging Face 🤗 Hub.

- `huggingface_hub`: Library allowing anyone to work with the Hub repositories.

```bash

-!pip install stable-baselines3[extra]==1.8.0

-!pip install huggingface_sb3

-!pip install panda_gym==2.0.0

-!pip install pyglet==1.5.1

+!pip install stable-baselines3[extra]

+!pip install gymnasium

+!pip install git+https://github.com/huggingface/huggingface_sb3@gymnasium-v2

+!pip install huggingface_hub

+!pip install panda_gym

```
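Since the install commands above are unpinned, it can help to record which versions were actually installed. The following is a minimal sketch using only the standard library; the helper name `installed_versions` is hypothetical, and the distribution names are taken from the pip commands above.

```python
from importlib.metadata import PackageNotFoundError, version


def installed_versions(packages):
    """Map each distribution name to its installed version, or None if missing."""
    found = {}
    for name in packages:
        try:
            found[name] = version(name)
        except PackageNotFoundError:
            found[name] = None
    return found


# Distribution names as used in the pip commands above
names = ["stable-baselines3", "gymnasium", "huggingface_sb3", "huggingface_hub", "panda_gym"]
for name, ver in installed_versions(names).items():
    print(f"{name}: {ver or 'not installed'}")
```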

## Import the packages 📦

```python

-import pybullet_envs

-import panda_gym

-import gym

-

import os

+import gymnasium as gym

+import panda_gym

+

from huggingface_sb3 import load_from_hub, package_to_hub

from stable_baselines3 import A2C

@@ -133,45 +124,61 @@ from stable_baselines3.common.env_util import make_vec_env

from huggingface_hub import notebook_login

```

-## Environment 1: AntBulletEnv-v0 🕸

+## PandaReachDense-v3 🦾

+

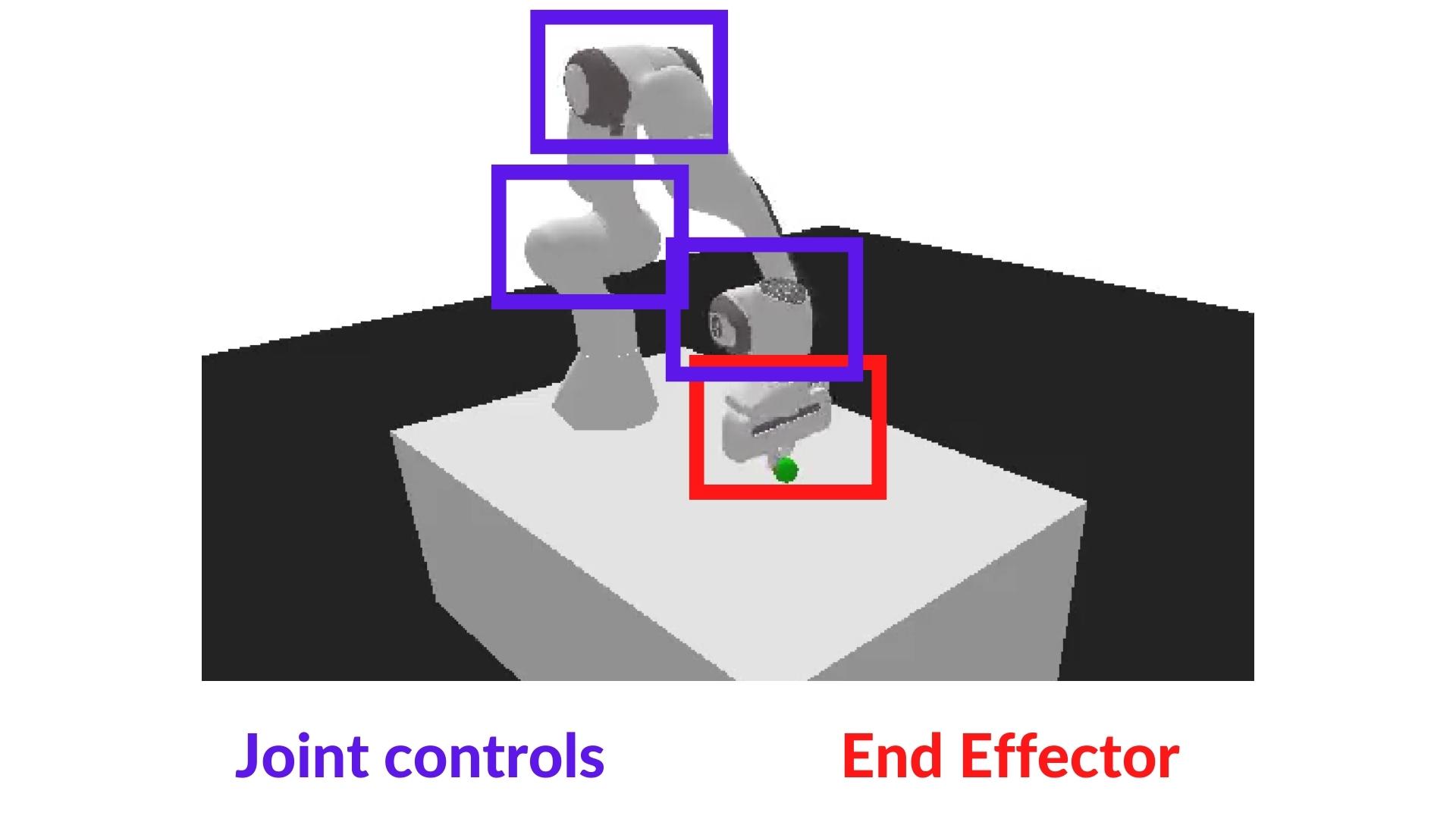

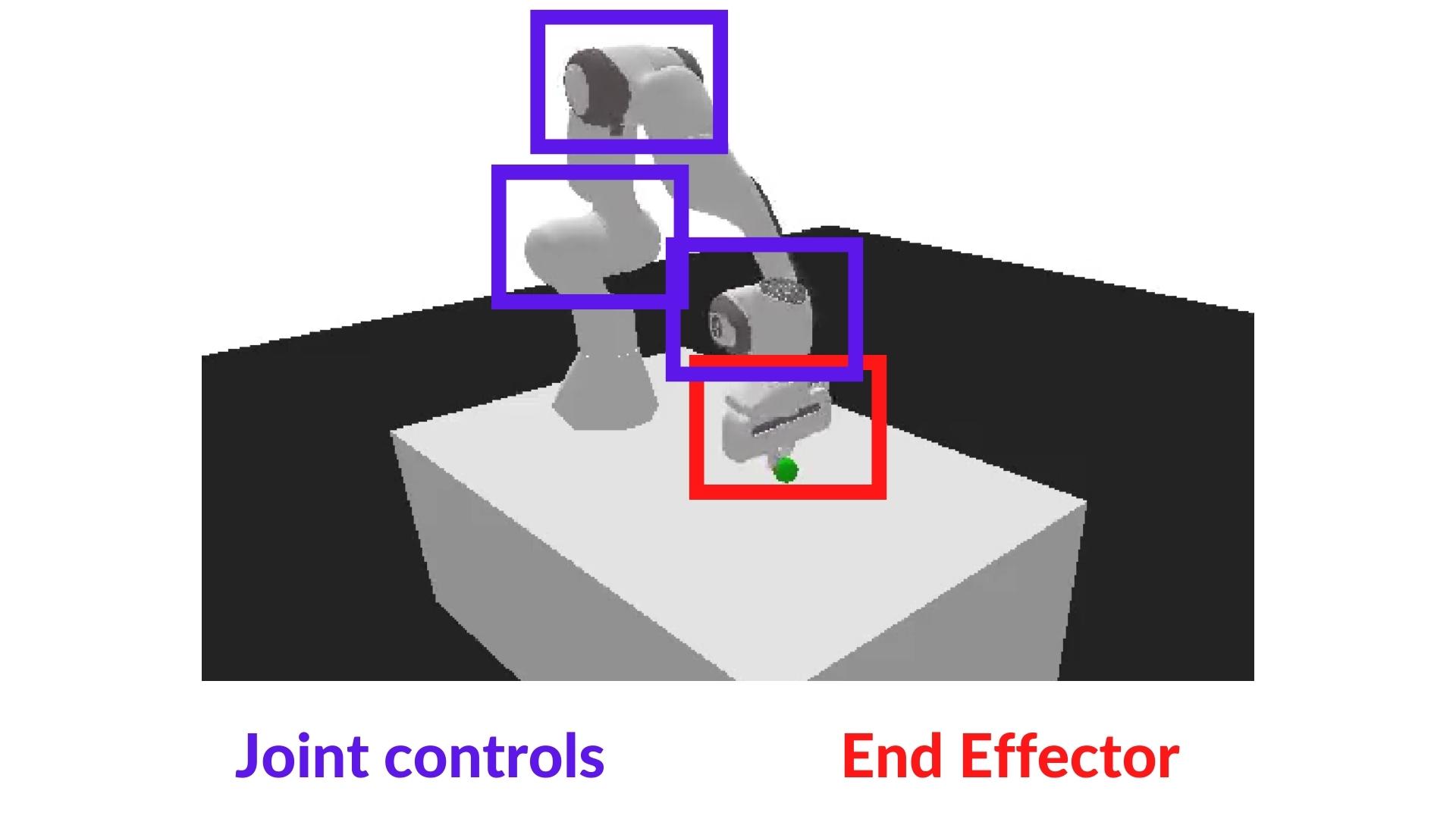

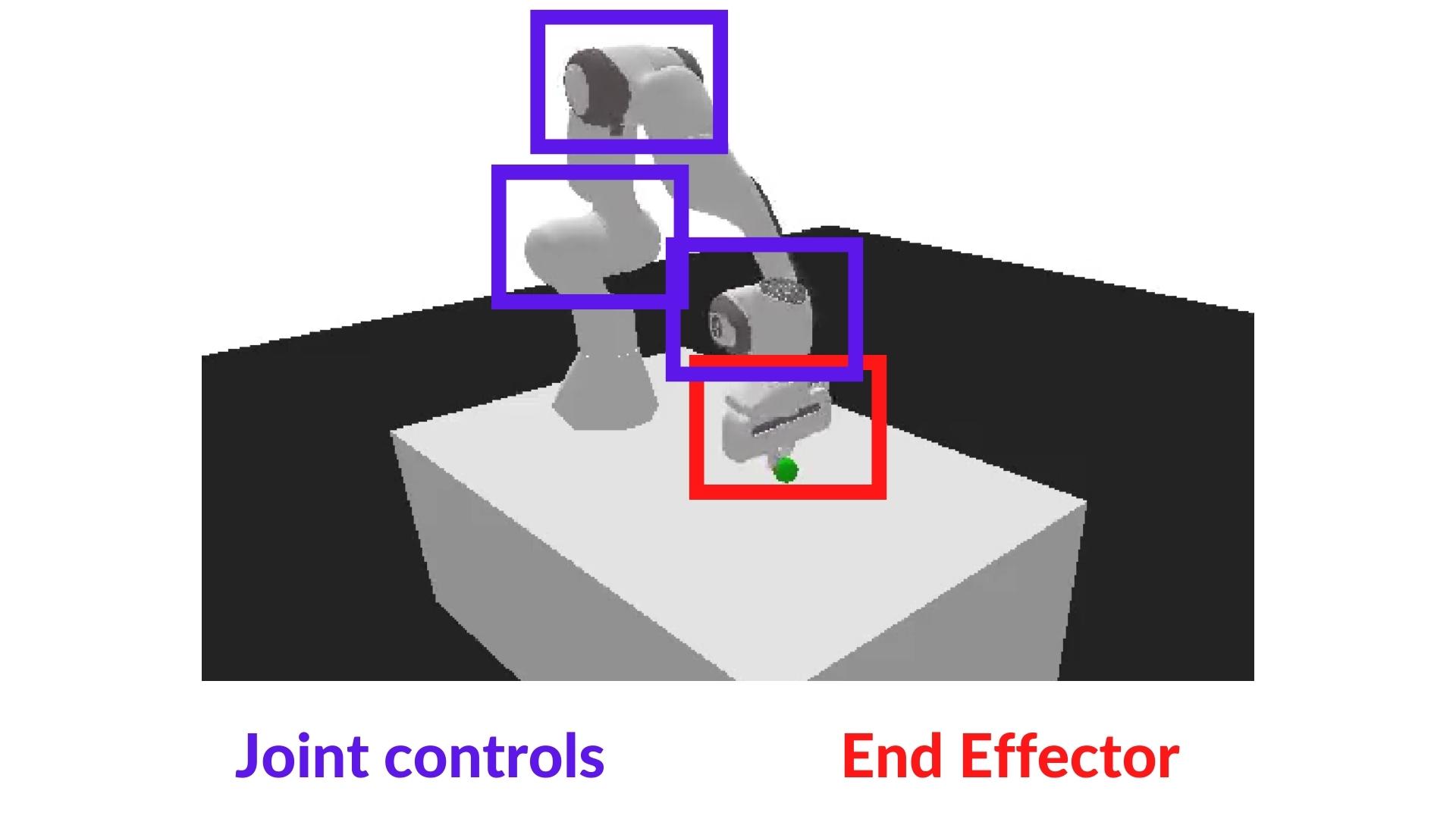

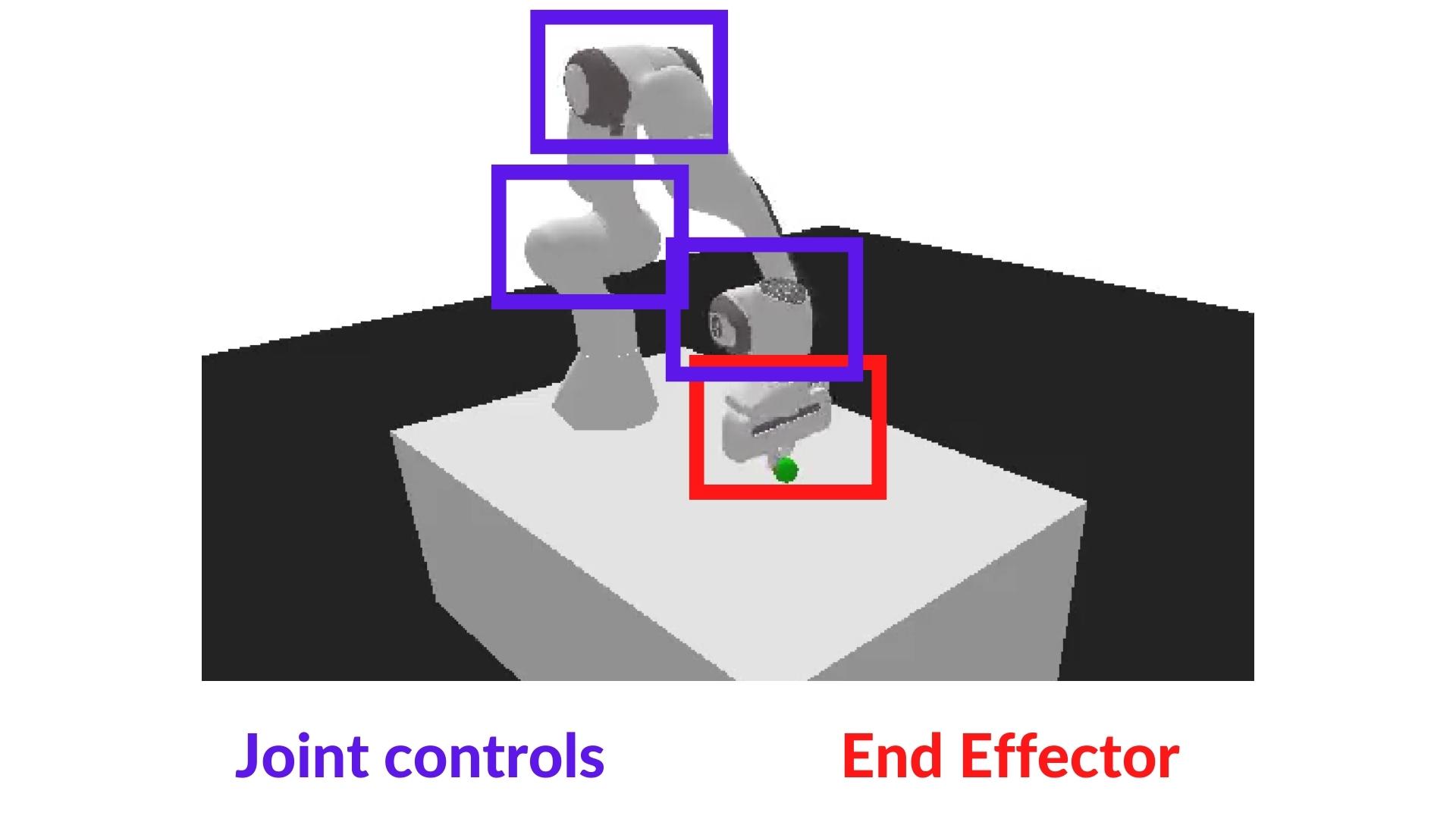

+The agent we're going to train is a robotic arm that must be controlled (moving the arm and using the end-effector).

+

+In robotics, the *end-effector* is the device at the end of a robotic arm designed to interact with the environment.

+

+In `PandaReach`, the robot must place its end-effector at a target position (green ball).

+

+We're going to use the dense version of this environment. This means we'll get a *dense reward function* that **will provide a reward at each timestep** (the closer the agent is to completing the task, the higher the reward). This is in contrast to a *sparse reward function*, where the environment **returns a reward if and only if the task is completed**.
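To make the difference concrete, here is a minimal sketch of the two reward styles for a reaching task. The reward values, the `threshold` parameter, and the function names are assumptions chosen for illustration; they are not taken from panda-gym's implementation.

```python
import math


def dense_reward(achieved, desired):
    """Reward at every timestep: the negative distance to the target."""
    return -math.dist(achieved, desired)


def sparse_reward(achieved, desired, threshold=0.05):
    """Reward only on success: 1.0 within `threshold` of the target, else 0.0."""
    return 1.0 if math.dist(achieved, desired) < threshold else 0.0


target = (0.1, 0.0, 0.2)
for position in [(0.4, 0.0, 0.2), (0.2, 0.0, 0.2), (0.11, 0.0, 0.2)]:
    print(position, dense_reward(position, target), sparse_reward(position, target))
```

With the dense version the agent sees its reward improve as it approaches the target, while the sparse version gives no signal until the task is essentially solved.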

+

+Also, we're going to use *end-effector displacement control*, which means the **action corresponds to the displacement of the end-effector**. We don't control the individual motion of each joint (joint control).

+

+


+This way **the training will be easier**.

+

+### Create the environment

-### Create the AntBulletEnv-v0

#### The environment 🎮

-In this environment, the agent needs to use its different joints correctly in order to walk.

-You can find a detailled explanation of this environment here: https://hackmd.io/@jeffreymo/SJJrSJh5_#PyBullet

+In `PandaReachDense-v3` the robotic arm must place its end-effector at a target position (green ball).

```python

-env_id = "AntBulletEnv-v0"

+env_id = "PandaReachDense-v3"

+

# Create the env

env = gym.make(env_id)

# Get the state space and action space

-s_size = env.observation_space.shape[0]

+s_size = env.observation_space.shape

a_size = env.action_space

```

```python

print("_____OBSERVATION SPACE_____ \n")

print("The State Space is: ", s_size)

-print("Sample observation", env.observation_space.sample()) # Get a random observation

+print("Sample observation", env.observation_space.sample()) # Get a random observation

```

-The observation Space (from [Jeffrey Y Mo](https://hackmd.io/@jeffreymo/SJJrSJh5_#PyBullet)):

-The difference is that our observation space is 28 not 29.

+The observation space **is a dictionary with 3 different elements**:


-

+- `achieved_goal`: (x,y,z) current position of the end-effector.

+- `desired_goal`: (x,y,z) position of the target (the goal).

+- `observation`: position (x,y,z) and velocity of the end-effector (vx, vy, vz).

+Because the observation is a dictionary, **we will need to use `MultiInputPolicy` instead of `MlpPolicy`**.
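To give a feel for why a dictionary observation needs a different policy class, here is a minimal sketch of the general idea: each entry is flattened and the pieces are concatenated into a single feature vector before reaching the network. This is a simplified illustration in plain Python, not Stable-Baselines3's actual code; the sample values and the key ordering are assumptions.

```python
def flatten_observation(obs):
    """Concatenate every entry of a dict observation into one flat list."""
    features = []
    for key in sorted(obs):  # assumption: a fixed, alphabetical key order
        features.extend(float(v) for v in obs[key])
    return features


# Hypothetical observation with the three keys described above
sample_obs = {
    "achieved_goal": [0.04, 0.0, 0.20],
    "desired_goal": [0.10, -0.05, 0.15],
    "observation": [0.04, 0.0, 0.20, 0.0, 0.0, 0.0],
}
features = flatten_observation(sample_obs)
print(len(features))
```

A plain MLP policy expects a single vector as input, which is why a dictionary has to go through a step like this first.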

```python

print("\n _____ACTION SPACE_____ \n")

print("The Action Space is: ", a_size)

-print("Action Space Sample", env.action_space.sample()) # Take a random action

+print("Action Space Sample", env.action_space.sample()) # Take a random action

```


-The action Space (from [Jeffrey Y Mo](https://hackmd.io/@jeffreymo/SJJrSJh5_#PyBullet)):

-

-

+The action space is a vector with 3 values:

+- Control x, y, z movement
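As a sketch of what end-effector displacement control means, the snippet below turns a 3-value action into a new target position. The clipping range of `[-1, 1]` and the `max_step` scale are assumptions for illustration, and `apply_displacement` is a hypothetical helper, not a panda-gym function.

```python
def apply_displacement(position, action, max_step=0.05):
    """Move the end-effector by a clipped, scaled (dx, dy, dz) action."""
    new_position = []
    for coord, a in zip(position, action):
        clipped = max(-1.0, min(1.0, a))  # keep each component in [-1, 1]
        new_position.append(coord + clipped * max_step)
    return new_position


# The second component is out of range and gets clipped to -1.0
print(apply_displacement([0.0, 0.0, 0.2], [1.0, -2.0, 0.5]))
```

The agent only decides where the end-effector should go; working out the joint motions needed to get there is left to the simulator.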

### Normalize observation and rewards

@@ -196,13 +203,11 @@ env = # TODO: Add the wrapper

```python

env = make_vec_env(env_id, n_envs=4)

-env = VecNormalize(env, norm_obs=True, norm_reward=True, clip_obs=10.0)

+env = VecNormalize(env, norm_obs=True, norm_reward=True, clip_obs=10.)

```
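To illustrate what a normalization wrapper does conceptually, here is a minimal scalar sketch of running-mean/variance normalization with clipping and a `training` switch. It is a simplification under stated assumptions: the real `VecNormalize` wrapper operates on arrays across vectorized environments, and the class name `RunningNormalizer` is hypothetical.

```python
import math


class RunningNormalizer:
    """Normalize scalars with a running mean/variance (Welford's algorithm)."""

    def __init__(self, clip=10.0, epsilon=1e-8):
        self.count = 0
        self.mean = 0.0
        self.m2 = 0.0  # running sum of squared deviations from the mean
        self.clip = clip
        self.epsilon = epsilon
        self.training = True  # set to False to freeze the statistics

    def update(self, x):
        self.count += 1
        delta = x - self.mean
        self.mean += delta / self.count
        self.m2 += delta * (x - self.mean)

    def normalize(self, x):
        if self.training:
            self.update(x)
        variance = self.m2 / self.count if self.count else 1.0
        z = (x - self.mean) / math.sqrt(variance + self.epsilon)
        return max(-self.clip, min(self.clip, z))


normalizer = RunningNormalizer(clip=10.0)
for value in [1.0, 2.0, 3.0]:
    normalizer.normalize(value)

normalizer.training = False  # evaluation: reuse statistics, do not update them
print(normalizer.mean, normalizer.normalize(1000.0))
```

Freezing the statistics is the same idea as saving `vec_normalize.pkl` after training and setting `training = False` on the evaluation environment.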

### Create the A2C Model 🤖

-In this case, because we have a vector of 28 values as input, we'll use an MLP (multi-layer perceptron) as policy.

-

For more information about A2C implementation with StableBaselines3 check: https://stable-baselines3.readthedocs.io/en/master/modules/a2c.html#notes

To find the best parameters I checked the [official trained agents by Stable-Baselines3 team](https://huggingface.co/sb3).

@@ -214,86 +219,71 @@ model = # Create the A2C model and try to find the best parameters

#### Solution

```python

-model = A2C(

- policy="MlpPolicy",

- env=env,

- gae_lambda=0.9,

- gamma=0.99,

- learning_rate=0.00096,

- max_grad_norm=0.5,

- n_steps=8,

- vf_coef=0.4,

- ent_coef=0.0,

- policy_kwargs=dict(log_std_init=-2, ortho_init=False),

- normalize_advantage=False,

- use_rms_prop=True,

- use_sde=True,

- verbose=1,

-)

+model = A2C(policy = "MultiInputPolicy",

+ env = env,

+ verbose=1)

```

### Train the A2C agent 🏃

-- Let's train our agent for 2,000,000 timesteps. Don't forget to use GPU on Colab. It will take approximately ~25-40min

+- Let's train our agent for 1,000,000 timesteps. Don't forget to use a GPU on Colab. Training takes approximately 25-40 minutes.

```python

-model.learn(2_000_000)

+model.learn(1_000_000)

```

```python

# Save the model and VecNormalize statistics when saving the agent

-model.save("a2c-AntBulletEnv-v0")

+model.save("a2c-PandaReachDense-v3")

env.save("vec_normalize.pkl")

```

### Evaluate the agent 📈

-- Now that our agent is trained, we need to **check its performance**.

+

+- Now that our agent is trained, we need to **check its performance**.

- Stable-Baselines3 provides a method to do that: `evaluate_policy`

-- In my case, I got a mean reward of `2371.90 +/- 16.50`

```python

from stable_baselines3.common.vec_env import DummyVecEnv, VecNormalize

# Load the saved statistics

-eval_env = DummyVecEnv([lambda: gym.make("AntBulletEnv-v0")])

+eval_env = DummyVecEnv([lambda: gym.make("PandaReachDense-v3")])

eval_env = VecNormalize.load("vec_normalize.pkl", eval_env)

+# We need to override the render_mode

+eval_env.render_mode = "rgb_array"

+

# do not update them at test time

eval_env.training = False

# reward normalization is not needed at test time

eval_env.norm_reward = False

# Load the agent

-model = A2C.load("a2c-AntBulletEnv-v0")

+model = A2C.load("a2c-PandaReachDense-v3")

-mean_reward, std_reward = evaluate_policy(model, env)

+mean_reward, std_reward = evaluate_policy(model, eval_env)

print(f"Mean reward = {mean_reward:.2f} +/- {std_reward:.2f}")

```

-

### Publish your trained model on the Hub 🔥

+

Now that we saw we got good results after the training, we can publish our trained model on the Hub with one line of code.

📚 The libraries documentation 👉 https://github.com/huggingface/huggingface_sb3/tree/main#hugging-face--x-stable-baselines3-v20

-Here's an example of a Model Card (with a PyBullet environment):


-

- -

By using `package_to_hub`, as we already mentionned in the former units, **you evaluate, record a replay, generate a model card of your agent and push it to the hub**.

This way:

- You can **showcase our work** 🔥

- You can **visualize your agent playing** 👀

-- You can **share an agent with the community that others can use** 💾

+- You can **share with the community an agent that others can use** 💾

- You can **access a leaderboard 🏆 to see how well your agent is performing compared to your classmates** 👉 https://huggingface.co/spaces/huggingface-projects/Deep-Reinforcement-Learning-Leaderboard

-

To be able to share your model with the community there are three more steps to follow:

1️⃣ (If it's not already done) create an account to HF ➡ https://huggingface.co/join

-2️⃣ Sign in and then you need to get your authentication token from the Hugging Face website.

+2️⃣ Sign in, then store your authentication token from the Hugging Face website.

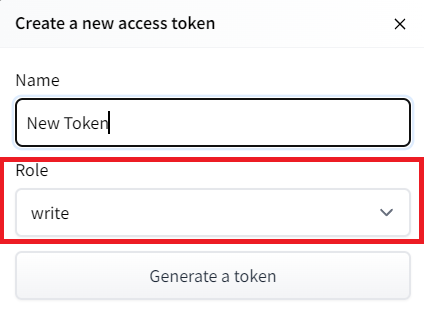

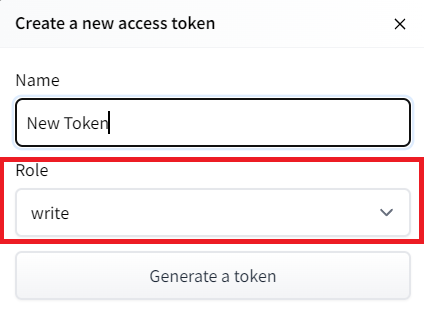

- Create a new token (https://huggingface.co/settings/tokens) **with write role**

-


@@ -305,116 +295,68 @@ To be able to share your model with the community there are three more steps to

notebook_login()

!git config --global credential.helper store

```

+If you don't want to use Google Colab or a Jupyter Notebook, you need to use this command instead: `huggingface-cli login`

-If you don't want to use Google Colab or a Jupyter Notebook, you need to use this command instead: `huggingface-cli login`

-

-3️⃣ We're now ready to push our trained agent to the 🤗 Hub 🔥 using `package_to_hub()` function

+3️⃣ We're now ready to push our trained agent to the 🤗 Hub 🔥 using `package_to_hub()` function.

+For this environment, **running this cell can take approximately 10 minutes**.

```python

+from huggingface_sb3 import package_to_hub

+

package_to_hub(

model=model,

model_name=f"a2c-{env_id}",

model_architecture="A2C",

env_id=env_id,

eval_env=eval_env,

- repo_id=f"ThomasSimonini/a2c-{env_id}", # Change the username

+ repo_id=f"ThomasSimonini/a2c-{env_id}", # Change the username

commit_message="Initial commit",

)

```

-## Take a coffee break ☕

-- You already trained your first robot that learned to move congratutlations 🥳!

-- It's **time to take a break**. Don't hesitate to **save this notebook** `File > Save a copy to Drive` to work on this second part later.

+## Some additional challenges 🏆

+The best way to learn **is to try things on your own**! Why not try `PandaPickAndPlace-v3`?

-## Environment 2: PandaReachDense-v2 🦾

+If you want to try more advanced tasks for panda-gym, check what was done using **TQC or SAC** (more sample-efficient algorithms suited for robotics tasks). In real robotics, you'll use a more sample-efficient algorithm for a simple reason: unlike in a simulation, **if you move your robotic arm too much, you risk breaking it**.

-The agent we're going to train is a robotic arm that needs to do controls (moving the arm and using the end-effector).

+PandaPickAndPlace-v1 (this model uses the v1 version of the environment): https://huggingface.co/sb3/tqc-PandaPickAndPlace-v1

-In robotics, the *end-effector* is the device at the end of a robotic arm designed to interact with the environment.

+And don't hesitate to check panda-gym documentation here: https://panda-gym.readthedocs.io/en/latest/usage/train_with_sb3.html

-In `PandaReach`, the robot must place its end-effector at a target position (green ball).

+Here are the steps to train another agent (optional):

-We're going to use the dense version of this environment. This means we'll get a *dense reward function* that **will provide a reward at each timestep** (the closer the agent is to completing the task, the higher the reward). This is in contrast to a *sparse reward function* where the environment **return a reward if and only if the task is completed**.

-

-Also, we're going to use the *End-effector displacement control*, which means the **action corresponds to the displacement of the end-effector**. We don't control the individual motion of each joint (joint control).

-

-


- -

-

-This way **the training will be easier**.

-

-

-

-In `PandaReachDense-v2`, the robotic arm must place its end-effector at a target position (green ball).

-

-

-

-```python

-import gym

-

-env_id = "PandaReachDense-v2"

-

-# Create the env

-env = gym.make(env_id)

-

-# Get the state space and action space

-s_size = env.observation_space.shape

-a_size = env.action_space

-```

-

-```python

-print("_____OBSERVATION SPACE_____ \n")

-print("The State Space is: ", s_size)

-print("Sample observation", env.observation_space.sample()) # Get a random observation

-```

-

-The observation space **is a dictionary with 3 different elements**:

-- `achieved_goal`: (x,y,z) position of the goal.

-- `desired_goal`: (x,y,z) distance between the goal position and the current object position.

-- `observation`: position (x,y,z) and velocity of the end-effector (vx, vy, vz).

-

-Given it's a dictionary as observation, **we will need to use a MultiInputPolicy policy instead of MlpPolicy**.

-

-```python

-print("\n _____ACTION SPACE_____ \n")

-print("The Action Space is: ", a_size)

-print("Action Space Sample", env.action_space.sample()) # Take a random action

-```

-

-The action space is a vector with 3 values:

-- Control x, y, z movement

-

-Now it's your turn:

-

-1. Define the environment called "PandaReachDense-v2".

-2. Make a vectorized environment.

+1. Define the environment called "PandaPickAndPlace-v3"

+2. Make a vectorized environment

3. Add a wrapper to normalize the observations and rewards. [Check the documentation](https://stable-baselines3.readthedocs.io/en/master/guide/vec_envs.html#vecnormalize)

4. Create the A2C Model (don't forget verbose=1 to print the training logs).

-5. Train it for 1M Timesteps.

-6. Save the model and VecNormalize statistics when saving the agent.

-7. Evaluate your agent.

-8. Publish your trained model on the Hub 🔥 with `package_to_hub`.

+5. Train it for 1M Timesteps

+6. Save the model and VecNormalize statistics when saving the agent

+7. Evaluate your agent

+8. Publish your trained model on the Hub 🔥 with `package_to_hub`

-### Solution (fill the todo)

+

+### Solution (optional)

```python

# 1 - 2

-env_id = "PandaReachDense-v2"

+env_id = "PandaPickAndPlace-v3"

env = make_vec_env(env_id, n_envs=4)

# 3

-env = VecNormalize(env, norm_obs=True, norm_reward=False, clip_obs=10.0)

+env = VecNormalize(env, norm_obs=True, norm_reward=True, clip_obs=10.)

# 4

-model = A2C(policy="MultiInputPolicy", env=env, verbose=1)

+model = A2C(policy="MultiInputPolicy",

+            env=env,

+            verbose=1)

# 5

model.learn(1_000_000)

```

```python

# 6

-model_name = "a2c-PandaReachDense-v2"

+model_name = "a2c-PandaPickAndPlace-v3"

model.save(model_name)

env.save("vec_normalize.pkl")

@@ -422,7 +364,7 @@ env.save("vec_normalize.pkl")

from stable_baselines3.common.vec_env import DummyVecEnv, VecNormalize

# Load the saved statistics

-eval_env = DummyVecEnv([lambda: gym.make("PandaReachDense-v2")])

+eval_env = DummyVecEnv([lambda: gym.make("PandaPickAndPlace-v3")])

eval_env = VecNormalize.load("vec_normalize.pkl", eval_env)

# do not update them at test time

@@ -433,7 +375,7 @@ eval_env.norm_reward = False

# Load the agent

model = A2C.load(model_name)

-mean_reward, std_reward = evaluate_policy(model, env)

+mean_reward, std_reward = evaluate_policy(model, eval_env)

print(f"Mean reward = {mean_reward:.2f} +/- {std_reward:.2f}")

@@ -444,26 +386,11 @@ package_to_hub(

model_architecture="A2C",

env_id=env_id,

eval_env=eval_env,

- repo_id=f"ThomasSimonini/a2c-{env_id}", # TODO: Change the username

+ repo_id=f"ThomasSimonini/a2c-{env_id}", # TODO: Change the username

commit_message="Initial commit",

)

```

-## Some additional challenges 🏆

-

-The best way to learn **is to try things on your own**! Why not try `HalfCheetahBulletEnv-v0` for PyBullet and `PandaPickAndPlace-v1` for Panda-Gym?

-

-If you want to try more advanced tasks for panda-gym, you need to check what was done using **TQC or SAC** (a more sample-efficient algorithm suited for robotics tasks). In real robotics, you'll use a more sample-efficient algorithm for a simple reason: contrary to a simulation **if you move your robotic arm too much, you have a risk of breaking it**.

-

-PandaPickAndPlace-v1: https://huggingface.co/sb3/tqc-PandaPickAndPlace-v1

-

-And don't hesitate to check panda-gym documentation here: https://panda-gym.readthedocs.io/en/latest/usage/train_with_sb3.html

-

-Here are some ideas to go further:

-* Train more steps

-* Try different hyperparameters by looking at what your classmates have done 👉 https://huggingface.co/models?other=https://huggingface.co/models?other=AntBulletEnv-v0

-* **Push your new trained model** on the Hub 🔥

-

-

See you on Unit 7! 🔥

+

## Keep learning, stay awesome 🤗

diff --git a/units/en/unit6/introduction.mdx b/units/en/unit6/introduction.mdx

index 4be735f..9d4c4ad 100644

--- a/units/en/unit6/introduction.mdx

+++ b/units/en/unit6/introduction.mdx

@@ -16,10 +16,7 @@ So today we'll study **Actor-Critic methods**, a hybrid architecture combining v

- *A Critic* that measures **how good the taken action is** (Value-Based method)

-We'll study one of these hybrid methods, Advantage Actor Critic (A2C), **and train our agent using Stable-Baselines3 in robotic environments**. We'll train two robots:

-- A spider 🕷️ to learn to move.

+We'll study one of these hybrid methods, Advantage Actor Critic (A2C), **and train our agent using Stable-Baselines3 in robotic environments**. We'll train:

- A robotic arm 🦾 to move to the correct position.

- -

Sound exciting? Let's get started!

-

-

To validate this hands-on for the certification process, you need to push your trained model to the Hub and get the following result:

-- `AntBulletEnv-v0` get a result of >= 650.

-- `PandaReachDense-v2` get a result of >= -3.5.

+- `PandaReachDense-v3` get a result of >= -3.5.

To find your result, [go to the leaderboard](https://huggingface.co/spaces/huggingface-projects/Deep-Reinforcement-Learning-Leaderboard) and find your model, **the result = mean_reward - std of reward**

-**If you don't find your model, go to the bottom of the page and click on the refresh button.**

-

For more information about the certification process, check this section 👉 https://huggingface.co/deep-rl-course/en/unit0/introduction#certification-process

**To start the hands-on click on Open In Colab button** 👇 :

@@ -37,11 +27,10 @@ For more information about the certification process, check this section 👉 ht

[](https://colab.research.google.com/github/huggingface/deep-rl-class/blob/master/notebooks/unit6/unit6.ipynb)

-# Unit 6: Advantage Actor Critic (A2C) using Robotics Simulations with PyBullet and Panda-Gym 🤖

+# Unit 6: Advantage Actor Critic (A2C) using Robotics Simulations with Panda-Gym 🤖

### 🎮 Environments:

-- [PyBullet](https://github.com/bulletphysics/bullet3)

- [Panda-Gym](https://github.com/qgallouedec/panda-gym)

### 📚 RL-Library:

@@ -54,12 +43,13 @@ We're constantly trying to improve our tutorials, so **if you find some issues i

At the end of the notebook, you will:

-- Be able to use the environment librairies **PyBullet** and **Panda-Gym**.

+- Be able to use **Panda-Gym**, the environment library.

- Be able to **train robots using A2C**.

- Understand why **we need to normalize the input**.

- Be able to **push your trained agent and the code to the Hub** with a nice video replay and an evaluation score 🔥.

## Prerequisites 🏗️

+

Before diving into the notebook, you need to:

🔲 📚 Study [Actor-Critic methods by reading Unit 6](https://huggingface.co/deep-rl-course/unit6/introduction) 🤗

@@ -99,30 +89,31 @@ virtual_display.start()

```

### Install dependencies 🔽

-The first step is to install the dependencies, we’ll install multiple ones:

-- `pybullet`: Contains the walking robots environments.

+We’ll install several dependencies:

+

+- `gymnasium`

- `panda-gym`: Contains the robotics arm environments.

-- `stable-baselines3[extra]`: The SB3 deep reinforcement learning library.

+- `stable-baselines3`: The SB3 deep reinforcement learning library.

- `huggingface_sb3`: Additional code for Stable-baselines3 to load and upload models from the Hugging Face 🤗 Hub.

- `huggingface_hub`: Library allowing anyone to work with the Hub repositories.

```bash

-!pip install stable-baselines3[extra]==1.8.0

-!pip install huggingface_sb3

-!pip install panda_gym==2.0.0

-!pip install pyglet==1.5.1

+!pip install stable-baselines3[extra]

+!pip install gymnasium

+!pip install git+https://github.com/huggingface/huggingface_sb3@gymnasium-v2

+!pip install huggingface_hub

+!pip install panda_gym

```

## Import the packages 📦

```python

-import pybullet_envs

-import panda_gym

-import gym

-

import os

+import gymnasium as gym

+import panda_gym

+

from huggingface_sb3 import load_from_hub, package_to_hub

from stable_baselines3 import A2C

@@ -133,45 +124,61 @@ from stable_baselines3.common.env_util import make_vec_env

from huggingface_hub import notebook_login

```

-## Environment 1: AntBulletEnv-v0 🕸

+## PandaReachDense-v3 🦾

+

+The agent we're going to train is a robotic arm that must perform control tasks (moving the arm and using the end-effector).

+

+In robotics, the *end-effector* is the device at the end of a robotic arm designed to interact with the environment.

+

+In `PandaReach`, the robot must place its end-effector at a target position (green ball).

+

+We're going to use the dense version of this environment. This means we'll get a *dense reward function* that **will provide a reward at each timestep** (the closer the agent is to completing the task, the higher the reward). This contrasts with a *sparse reward function*, where the environment **returns a reward if and only if the task is completed**.

+

+Also, we're going to use *end-effector displacement control*, which means the **action corresponds to the displacement of the end-effector**. We don't control the individual motion of each joint (joint control).

+

+This way **the training will be easier**.

+

+### Create the environment

-### Create the AntBulletEnv-v0

#### The environment 🎮

-In this environment, the agent needs to use its different joints correctly in order to walk.

-You can find a detailled explanation of this environment here: https://hackmd.io/@jeffreymo/SJJrSJh5_#PyBullet

+In `PandaReachDense-v3` the robotic arm must place its end-effector at a target position (green ball).

```python

-env_id = "AntBulletEnv-v0"

+env_id = "PandaReachDense-v3"

+

# Create the env

env = gym.make(env_id)

# Get the state space and action space

-s_size = env.observation_space.shape[0]

+s_size = env.observation_space  # Dict space, so `.shape` would be None

a_size = env.action_space

```

```python

print("_____OBSERVATION SPACE_____ \n")

print("The State Space is: ", s_size)

-print("Sample observation", env.observation_space.sample()) # Get a random observation

+print("Sample observation", env.observation_space.sample()) # Get a random observation

```

-The observation Space (from [Jeffrey Y Mo](https://hackmd.io/@jeffreymo/SJJrSJh5_#PyBullet)):

-The difference is that our observation space is 28 not 29.

+The observation space **is a dictionary with 3 different elements**:

+- `achieved_goal`: (x,y,z) position currently reached, here the position of the end-effector.

+- `desired_goal`: (x,y,z) position of the target to reach.

+- `observation`: position (x,y,z) and velocity of the end-effector (vx, vy, vz).

+Because the observation is a dictionary, **we will need to use `MultiInputPolicy` instead of `MlpPolicy`**.

```python

print("\n _____ACTION SPACE_____ \n")

print("The Action Space is: ", a_size)

-print("Action Space Sample", env.action_space.sample()) # Take a random action

+print("Action Space Sample", env.action_space.sample()) # Take a random action

```

-The action Space (from [Jeffrey Y Mo](https://hackmd.io/@jeffreymo/SJJrSJh5_#PyBullet)):

-

+The action space is a vector with 3 values:

+- Control x, y, z movement

### Normalize observation and rewards

@@ -196,13 +203,11 @@ env = # TODO: Add the wrapper

```python

env = make_vec_env(env_id, n_envs=4)

-env = VecNormalize(env, norm_obs=True, norm_reward=True, clip_obs=10.0)

+env = VecNormalize(env, norm_obs=True, norm_reward=True, clip_obs=10.)

```

### Create the A2C Model 🤖

-In this case, because we have a vector of 28 values as input, we'll use an MLP (multi-layer perceptron) as policy.

-

For more information about A2C implementation with StableBaselines3 check: https://stable-baselines3.readthedocs.io/en/master/modules/a2c.html#notes

To find the best parameters I checked the [official trained agents by Stable-Baselines3 team](https://huggingface.co/sb3).

@@ -214,86 +219,71 @@ model = # Create the A2C model and try to find the best parameters

#### Solution

```python

-model = A2C(

- policy="MlpPolicy",

- env=env,

- gae_lambda=0.9,

- gamma=0.99,

- learning_rate=0.00096,

- max_grad_norm=0.5,

- n_steps=8,

- vf_coef=0.4,

- ent_coef=0.0,

- policy_kwargs=dict(log_std_init=-2, ortho_init=False),

- normalize_advantage=False,

- use_rms_prop=True,

- use_sde=True,

- verbose=1,

-)

+model = A2C(policy = "MultiInputPolicy",

+ env = env,

+ verbose=1)

```

### Train the A2C agent 🏃

-- Let's train our agent for 2,000,000 timesteps. Don't forget to use GPU on Colab. It will take approximately ~25-40min

+- Let's train our agent for 1,000,000 timesteps. Don't forget to use a GPU on Colab. Training takes approximately 25-40 minutes.

```python

-model.learn(2_000_000)

+model.learn(1_000_000)

```

```python

# Save the model and VecNormalize statistics when saving the agent

-model.save("a2c-AntBulletEnv-v0")

+model.save("a2c-PandaReachDense-v3")

env.save("vec_normalize.pkl")

```

### Evaluate the agent 📈

-- Now that our agent is trained, we need to **check its performance**.

+

+- Now that our agent is trained, we need to **check its performance**.

- Stable-Baselines3 provides a method to do that: `evaluate_policy`

-- In my case, I got a mean reward of `2371.90 +/- 16.50`

```python

from stable_baselines3.common.vec_env import DummyVecEnv, VecNormalize

# Load the saved statistics

-eval_env = DummyVecEnv([lambda: gym.make("AntBulletEnv-v0")])

+eval_env = DummyVecEnv([lambda: gym.make("PandaReachDense-v3")])

eval_env = VecNormalize.load("vec_normalize.pkl", eval_env)

+# We need to override the render_mode

+eval_env.render_mode = "rgb_array"

+

# do not update them at test time

eval_env.training = False

# reward normalization is not needed at test time

eval_env.norm_reward = False

# Load the agent

-model = A2C.load("a2c-AntBulletEnv-v0")

+model = A2C.load("a2c-PandaReachDense-v3")

-mean_reward, std_reward = evaluate_policy(model, env)

+mean_reward, std_reward = evaluate_policy(model, eval_env)

print(f"Mean reward = {mean_reward:.2f} +/- {std_reward:.2f}")

```

-

### Publish your trained model on the Hub 🔥

+

Now that we saw we got good results after the training, we can publish our trained model on the Hub with one line of code.

📚 The libraries documentation 👉 https://github.com/huggingface/huggingface_sb3/tree/main#hugging-face--x-stable-baselines3-v20

-Here's an example of a Model Card (with a PyBullet environment):

-

- -

By using `package_to_hub`, as we already mentioned in the previous units, **you evaluate, record a replay, generate a model card of your agent and push it to the hub**.

This way:

- You can **showcase your work** 🔥

- You can **visualize your agent playing** 👀

-- You can **share an agent with the community that others can use** 💾

+- You can **share an agent that others in the community can use** 💾

- You can **access a leaderboard 🏆 to see how well your agent is performing compared to your classmates** 👉 https://huggingface.co/spaces/huggingface-projects/Deep-Reinforcement-Learning-Leaderboard

-

To be able to share your model with the community there are three more steps to follow:

1️⃣ (If it's not already done) create an account to HF ➡ https://huggingface.co/join

-2️⃣ Sign in and then you need to get your authentication token from the Hugging Face website.

+2️⃣ Sign in, then store your authentication token from the Hugging Face website.

- Create a new token (https://huggingface.co/settings/tokens) **with write role**

-

@@ -305,116 +295,68 @@ To be able to share your model with the community there are three more steps to

notebook_login()

!git config --global credential.helper store

```

+If you don't want to use a Google Colab or a Jupyter Notebook, you need to use this command instead: `huggingface-cli login`

-If you don't want to use Google Colab or a Jupyter Notebook, you need to use this command instead: `huggingface-cli login`

-

-3️⃣ We're now ready to push our trained agent to the 🤗 Hub 🔥 using `package_to_hub()` function

+3️⃣ We're now ready to push our trained agent to the 🤗 Hub 🔥 using `package_to_hub()` function.

+For this environment, **running this cell can take approximately 10min**

```python

+from huggingface_sb3 import package_to_hub

+

package_to_hub(

model=model,

model_name=f"a2c-{env_id}",

model_architecture="A2C",

env_id=env_id,

eval_env=eval_env,

- repo_id=f"ThomasSimonini/a2c-{env_id}", # Change the username

+ repo_id=f"ThomasSimonini/a2c-{env_id}", # Change the username

commit_message="Initial commit",

)

```

-## Take a coffee break ☕

-- You already trained your first robot that learned to move congratutlations 🥳!

-- It's **time to take a break**. Don't hesitate to **save this notebook** `File > Save a copy to Drive` to work on this second part later.

+## Some additional challenges 🏆

+The best way to learn **is to try things by your own**! Why not trying `PandaPickAndPlace-v3`?

-## Environment 2: PandaReachDense-v2 🦾

+If you want to try more advanced tasks for panda-gym, you need to check what was done using **TQC or SAC** (a more sample-efficient algorithm suited for robotics tasks). In real robotics, you'll use a more sample-efficient algorithm for a simple reason: contrary to a simulation **if you move your robotic arm too much, you have a risk of breaking it**.

-The agent we're going to train is a robotic arm that needs to do controls (moving the arm and using the end-effector).

+PandaPickAndPlace-v1 (this model uses the v1 version of the environment): https://huggingface.co/sb3/tqc-PandaPickAndPlace-v1

-In robotics, the *end-effector* is the device at the end of a robotic arm designed to interact with the environment.

+And don't hesitate to check the panda-gym documentation here: https://panda-gym.readthedocs.io/en/latest/usage/train_with_sb3.html

+Here are the steps to train another agent (optional):

-We're going to use the dense version of this environment. This means we'll get a *dense reward function* that **will provide a reward at each timestep** (the closer the agent is to completing the task, the higher the reward). This is in contrast to a *sparse reward function* where the environment **return a reward if and only if the task is completed**.

-

-Also, we're going to use the *End-effector displacement control*, which means the **action corresponds to the displacement of the end-effector**. We don't control the individual motion of each joint (joint control).

-

-

-

-This way **the training will be easier**.

-

-

-

-In `PandaReachDense-v2`, the robotic arm must place its end-effector at a target position (green ball).

-

-

-

-```python

-import gym

-

-env_id = "PandaReachDense-v2"

-

-# Create the env

-env = gym.make(env_id)

-

-# Get the state space and action space

-s_size = env.observation_space.shape

-a_size = env.action_space

-```

-

-```python

-print("_____OBSERVATION SPACE_____ \n")

-print("The State Space is: ", s_size)

-print("Sample observation", env.observation_space.sample()) # Get a random observation

-```

-

-The observation space **is a dictionary with 3 different elements**:

-- `achieved_goal`: (x,y,z) position of the goal.

-- `desired_goal`: (x,y,z) distance between the goal position and the current object position.

-- `observation`: position (x,y,z) and velocity of the end-effector (vx, vy, vz).

-

-Given it's a dictionary as observation, **we will need to use a MultiInputPolicy policy instead of MlpPolicy**.

-

-```python

-print("\n _____ACTION SPACE_____ \n")

-print("The Action Space is: ", a_size)

-print("Action Space Sample", env.action_space.sample()) # Take a random action

-```

-

-The action space is a vector with 3 values:

-- Control x, y, z movement

-

-Now it's your turn:

-

-1. Define the environment called "PandaReachDense-v2".

-2. Make a vectorized environment.

+1. Define the environment called "PandaPickAndPlace-v3"

+2. Make a vectorized environment

3. Add a wrapper to normalize the observations and rewards. [Check the documentation](https://stable-baselines3.readthedocs.io/en/master/guide/vec_envs.html#vecnormalize)

4. Create the A2C Model (don't forget verbose=1 to print the training logs).

-5. Train it for 1M Timesteps.

-6. Save the model and VecNormalize statistics when saving the agent.

-7. Evaluate your agent.

-8. Publish your trained model on the Hub 🔥 with `package_to_hub`.

+5. Train it for 1M timesteps

+6. Save the model and the VecNormalize statistics

+7. Evaluate your agent

+8. Publish your trained model on the Hub 🔥 with `package_to_hub`

-### Solution (fill the todo)

+

+### Solution (optional)

```python

# 1 - 2

-env_id = "PandaReachDense-v2"

+env_id = "PandaPickAndPlace-v3"

env = make_vec_env(env_id, n_envs=4)

# 3

-env = VecNormalize(env, norm_obs=True, norm_reward=False, clip_obs=10.0)

+env = VecNormalize(env, norm_obs=True, norm_reward=True, clip_obs=10.)

# 4

-model = A2C(policy="MultiInputPolicy", env=env, verbose=1)

+model = A2C(policy="MultiInputPolicy",

+ env=env,

+ verbose=1)

# 5

model.learn(1_000_000)

```

```python

# 6

-model_name = "a2c-PandaReachDense-v2"

+model_name = "a2c-PandaPickAndPlace-v3"

model.save(model_name)

env.save("vec_normalize.pkl")

@@ -422,7 +364,7 @@ env.save("vec_normalize.pkl")

from stable_baselines3.common.vec_env import DummyVecEnv, VecNormalize

# Load the saved statistics

-eval_env = DummyVecEnv([lambda: gym.make("PandaReachDense-v2")])

+eval_env = DummyVecEnv([lambda: gym.make("PandaPickAndPlace-v3")])

eval_env = VecNormalize.load("vec_normalize.pkl", eval_env)

# do not update them at test time

@@ -433,7 +375,7 @@ eval_env.norm_reward = False

# Load the agent

model = A2C.load(model_name)

-mean_reward, std_reward = evaluate_policy(model, env)

+mean_reward, std_reward = evaluate_policy(model, eval_env)

print(f"Mean reward = {mean_reward:.2f} +/- {std_reward:.2f}")

@@ -444,26 +386,11 @@ package_to_hub(

model_architecture="A2C",

env_id=env_id,

eval_env=eval_env,

- repo_id=f"ThomasSimonini/a2c-{env_id}", # TODO: Change the username

+ repo_id=f"ThomasSimonini/a2c-{env_id}", # TODO: Change the username

commit_message="Initial commit",

)

```
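
The solution above wraps the environment in `VecNormalize` and then freezes its statistics before evaluation ("do not update them at test time"). As a rough illustration of why that matters, here is a minimal pure-Python sketch of running observation normalization with clipping. It is a simplified scalar toy, not the Stable-Baselines3 implementation: the `RunningNormalizer` class and its attributes are hypothetical names introduced only for this example.

```python
import math


class RunningNormalizer:
    """Toy scalar stand-in for the idea behind VecNormalize (hypothetical class)."""

    def __init__(self, clip=10.0, epsilon=1e-8):
        self.mean = 0.0
        self.m2 = 0.0  # running sum of squared deviations (Welford's algorithm)
        self.count = 0
        self.clip = clip
        self.epsilon = epsilon
        self.training = True  # set to False to freeze the statistics

    def update(self, x):
        # Incrementally update the running mean and squared deviations.
        self.count += 1
        delta = x - self.mean
        self.mean += delta / self.count
        self.m2 += delta * (x - self.mean)

    @property
    def var(self):
        # Population variance of everything seen so far (1.0 before any data).
        return self.m2 / self.count if self.count > 0 else 1.0

    def normalize(self, x):
        # Statistics only change while training; at test time they stay fixed.
        if self.training:
            self.update(x)
        z = (x - self.mean) / math.sqrt(self.var + self.epsilon)
        return max(-self.clip, min(self.clip, z))


norm = RunningNormalizer(clip=10.0)
for obs in [1.0, 2.0, 3.0, 4.0, 5.0]:
    norm.normalize(obs)

# Freeze the statistics, as done for eval_env in the solution.
norm.training = False
outlier = norm.normalize(1000.0)  # clipped, and does not shift the mean
```

If the statistics kept updating during evaluation, the agent would see observations scaled differently from those it was trained on, which is why the saved statistics are loaded and frozen for `eval_env`.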