diff --git a/units/en/unit2/q-learning.mdx b/units/en/unit2/q-learning.mdx

index 5f46722..1ff8456 100644

--- a/units/en/unit2/q-learning.mdx

+++ b/units/en/unit2/q-learning.mdx

@@ -27,7 +27,8 @@ Let's go through an example of a maze.

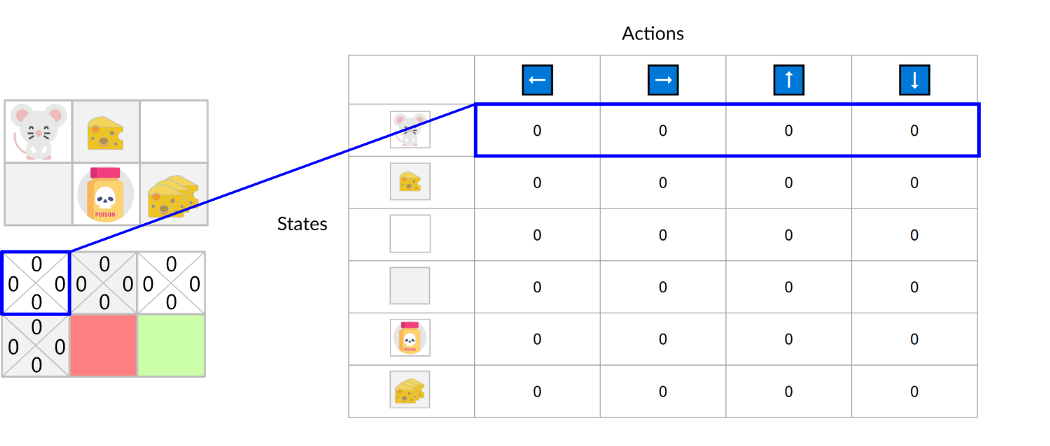

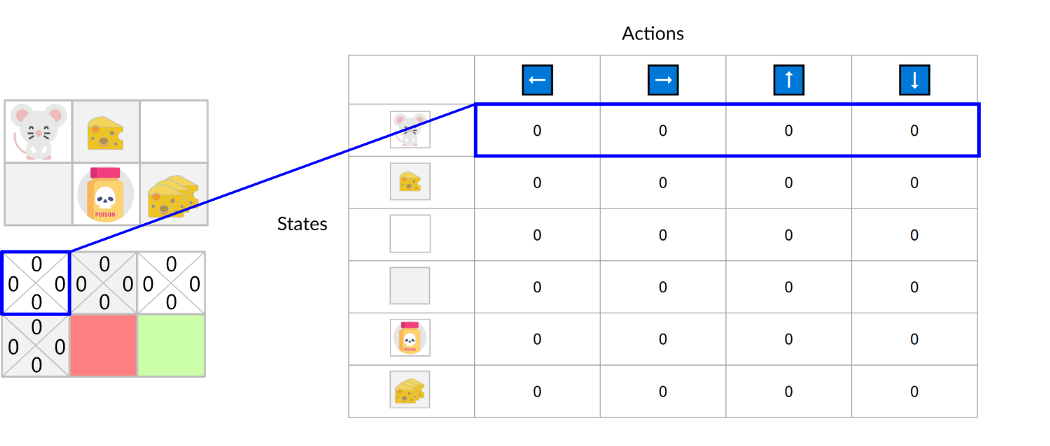

-The Q-table is initialized. That's why all values are = 0. This table **contains, for each state and action, the corresponding state-action values.**

+The Q-table is initialized. That's why all values are = 0. This table **contains, for each state and action, the corresponding state-action values.**

+For this simple example, the state is only defined by the position of the mouse. Therefore, we have 2*3 rows in our Q-table, one row for each possible position of the mouse. In more complex scenarios, the state could contain more information than the position of the actor.

-The Q-table is initialized. That's why all values are = 0. This table **contains, for each state and action, the corresponding state-action values.**

+The Q-table is initialized. That's why all values are = 0. This table **contains, for each state and action, the corresponding state-action values.**

+For this simple example, the state is only defined by the position of the mouse. Therefore, we have 2*3 rows in our Q-table, one row for each possible position of the mouse. In more complex scenarios, the state could contain more information than the position of the actor.

-The Q-table is initialized. That's why all values are = 0. This table **contains, for each state and action, the corresponding state-action values.**

+The Q-table is initialized. That's why all values are = 0. This table **contains, for each state and action, the corresponding state-action values.**

+For this simple example, the state is only defined by the position of the mouse. Therefore, we have 2*3 rows in our Q-table, one row for each possible position of the mouse. In more complex scenarios, the state could contain more information than the position of the actor.

-The Q-table is initialized. That's why all values are = 0. This table **contains, for each state and action, the corresponding state-action values.**

+The Q-table is initialized. That's why all values are = 0. This table **contains, for each state and action, the corresponding state-action values.**

+For this simple example, the state is only defined by the position of the mouse. Therefore, we have 2*3 rows in our Q-table, one row for each possible position of the mouse. In more complex scenarios, the state could contain more information than the position of the actor.