diff --git a/units/en/_toctree.yml b/units/en/_toctree.yml

index 6a79159..34cd6f9 100644

--- a/units/en/_toctree.yml

+++ b/units/en/_toctree.yml

@@ -119,7 +119,9 @@

- local: unit4/advantages-disadvantages

title: The advantages and disadvantages of Policy-based methods

- local: unit4/policy-gradient

- title: Diving deeper into Policy-gradient methods

+ title: Diving deeper into Policy-gradient

+ - local: unit4/pg-theorem

+ title: (Optional) the Policy Gradient Theorem

- local: unit4/hands-on

title: Hands-on

- local: unit4/quiz

diff --git a/units/en/unit4/pg-theorem.mdx b/units/en/unit4/pg-theorem.mdx

new file mode 100644

index 0000000..55eea08

--- /dev/null

+++ b/units/en/unit4/pg-theorem.mdx

@@ -0,0 +1,77 @@

+# (Optional) the Policy Gradient Theorem

+

+In this optional section where we're **going to study how we differentiate the objective function that we will use to approximate the policy gradient**.

+

+Let's first recap our different formulas:

+

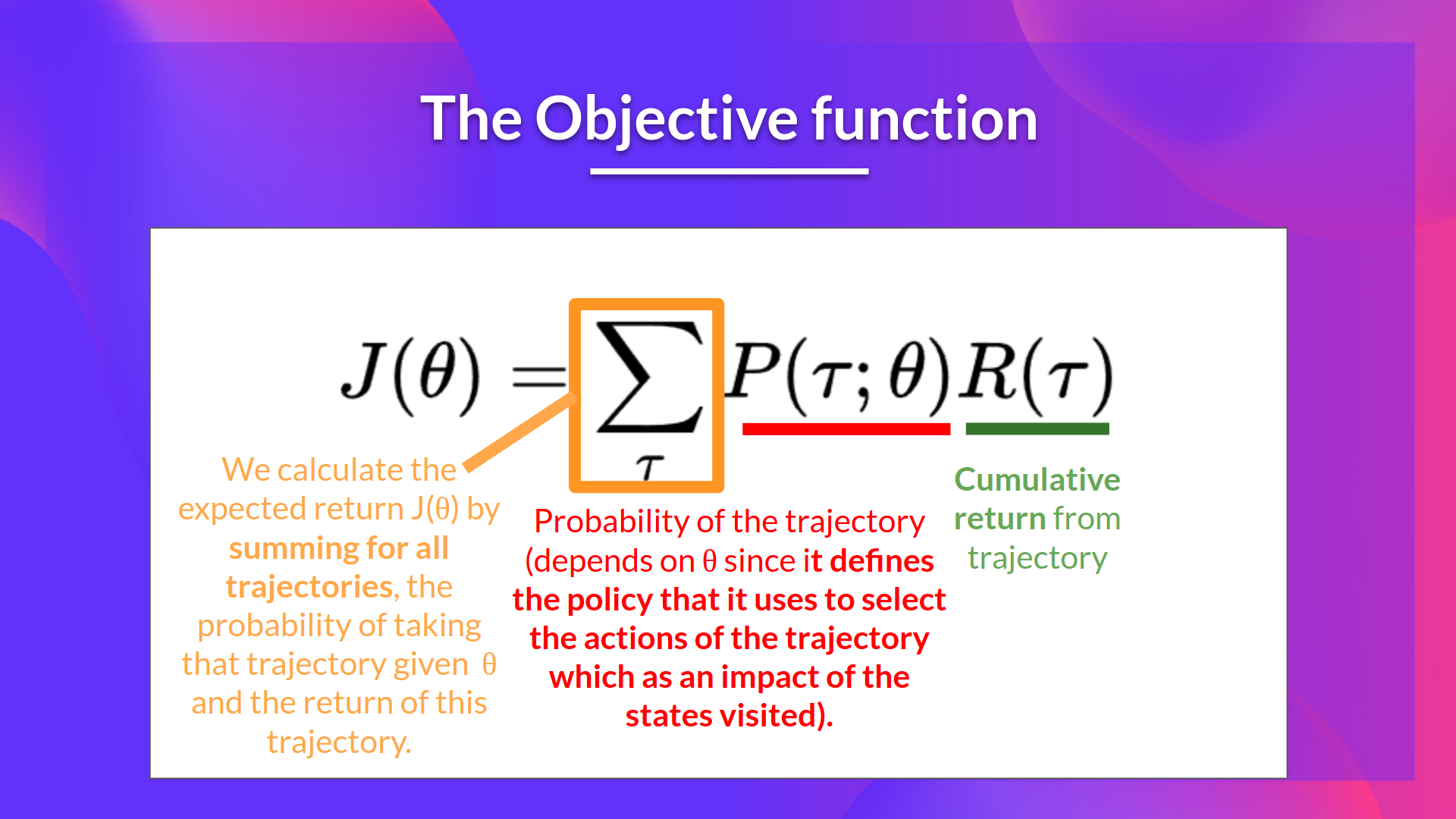

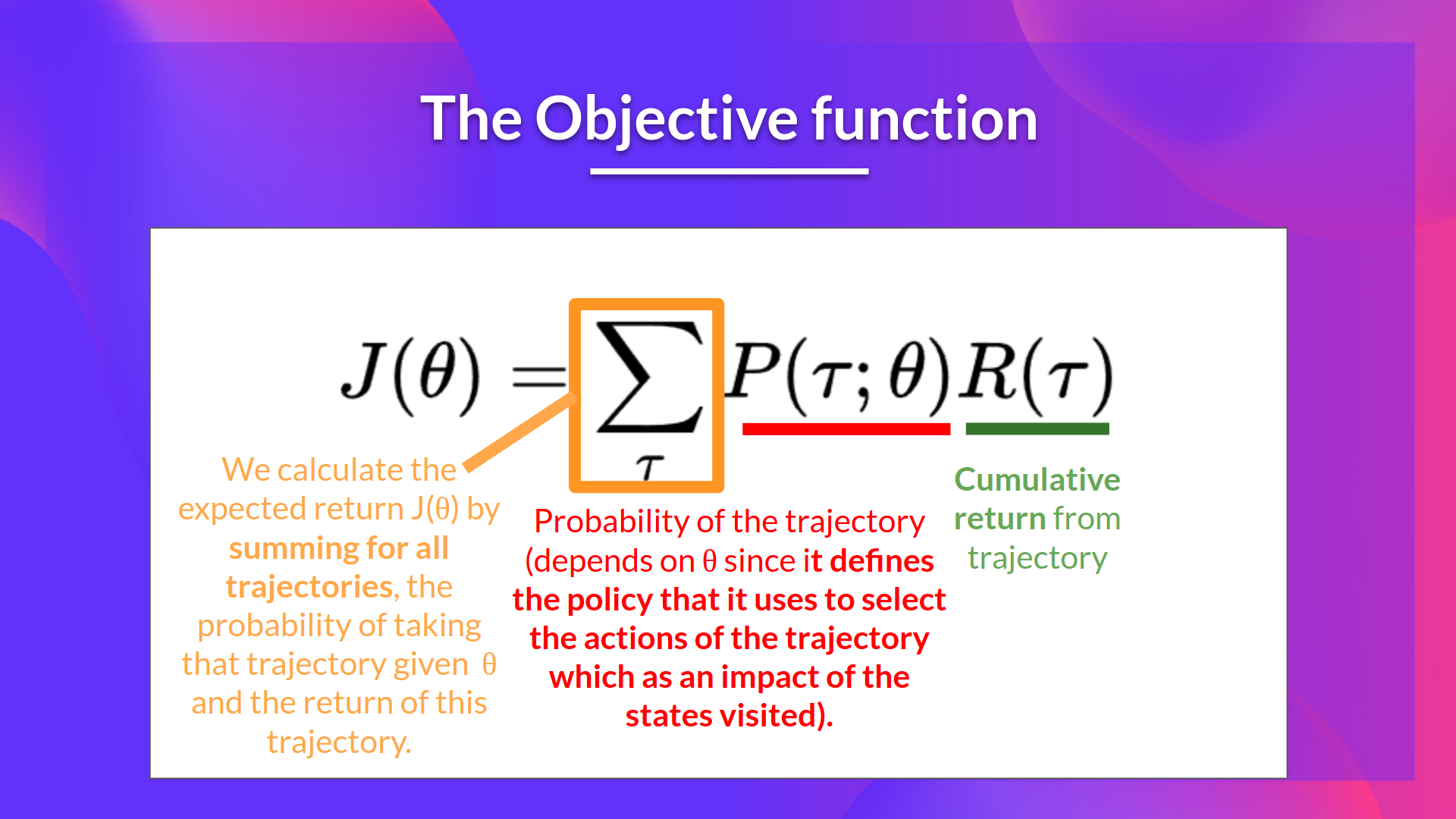

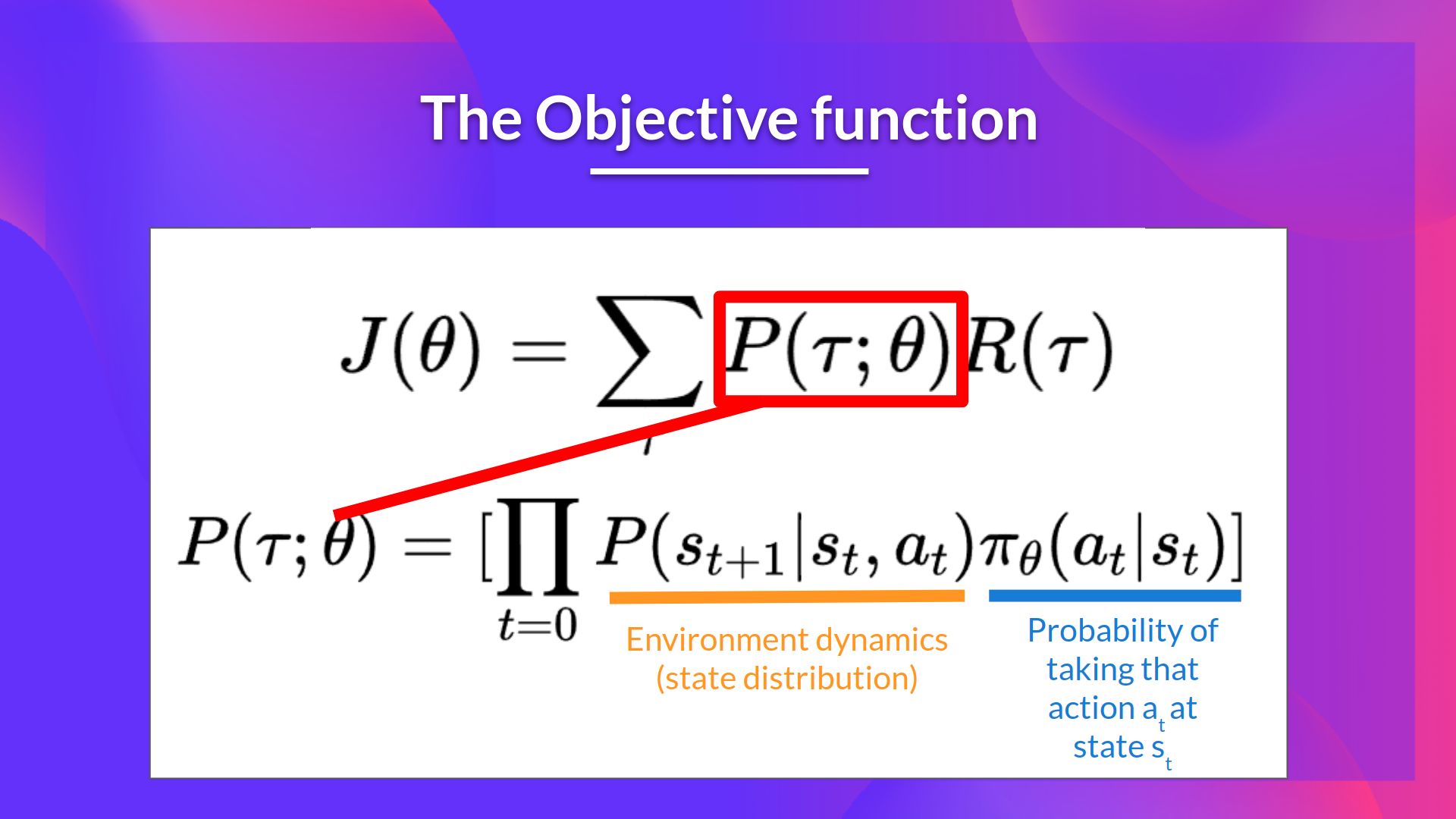

+1. The Objective function

+

+ +

+

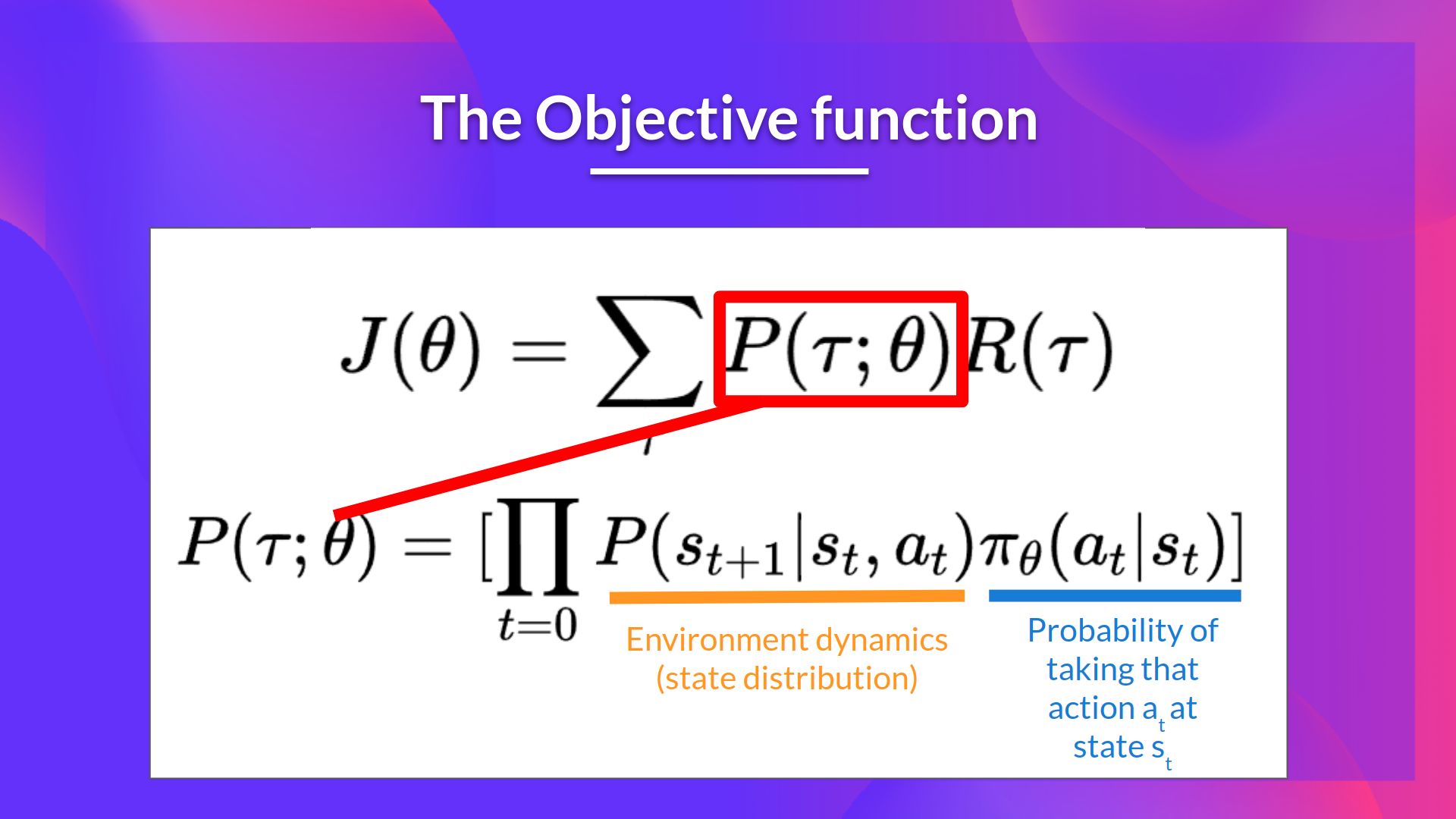

+2. The probability of a trajectory (given that action comes from //(/pi_/theta//)):

+

+

+

+

+2. The probability of a trajectory (given that action comes from //(/pi_/theta//)):

+

+ +

+

+So we have:

+

+\\(\nabla_\theta J(\theta) = \nabla_\theta \sum_{\tau}P(\tau;\theta)R(\tau)\\)

+

+We can rewrite the gradient of the sum as the sum of the gradient:

+

+\\( = \sum_{\tau} \nabla_\theta P(\tau;\theta)R(\tau) \\)

+

+We then multiply every term in the sum by \\(\frac{P(\tau;\theta)}{P(\tau;\theta)}\\)(which is possible since it's = 1)

+

+\\( = \sum_{\tau} \frac{P(\tau;\theta)}{P(\tau;\theta)}\nabla_\theta P(\tau;\theta)R(\tau) \\)

+

+We can simplify further this since \\( \frac{P(\tau;\theta)}{P(\tau;\theta)}\nabla_\theta P(\tau;\theta) = P(\tau;\theta)\frac{\nabla_\theta P(\tau;\theta)}{P(\tau;\theta)} \\)

+

+\\(= \sum_{\tau} P(\tau;\theta) \frac{\nabla_\theta P(\tau;\theta)}{P(\tau;\theta)}R(\tau) \\)

+

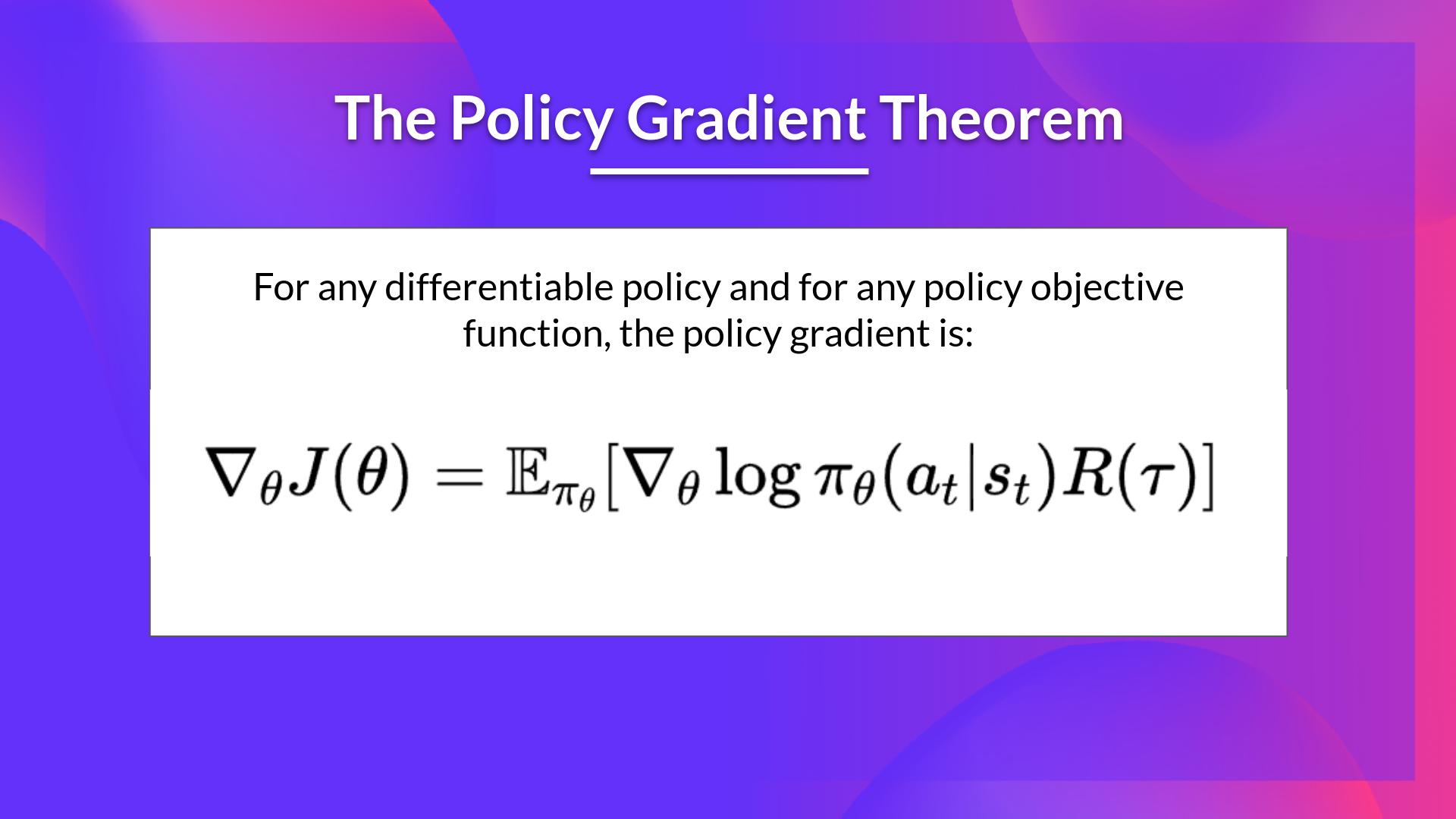

+We can then use the *derivative log trick* (also called *likelihood ratio trick* or *REINFORCE trick*), a simple rule in calculus that implies that \\( \nabla_x log f(x) = \frac{\nabla_x f(x)}{f(x)} \\)

+

+So given we have \\(\frac{\nabla_\theta P(\tau;\theta)}{P(\tau;\theta)} \\) we transform it as \\(\nabla_\theta log P(\tau|\theta) \\)

+

+So this is our likelihood policy gradient:

+

+\\( \nabla_\theta J(\theta) = \sum_{\tau} P(\tau;\theta) \nabla_\theta log P(\tau;\theta) R(\tau) \\)

+

+

+Thanks for this new formula, we can estimate the gradient using trajectory samples (we can approximate the likelihood ratio policy gradient with sample-based estimate if you prefer).

+

+\\(\nabla_\theta J(\theta) = \frac{1}{m} \sum^{m}_{i=1} \nabla_\theta log P(\tau^{(i)};\theta)R(\tau^{(i)})\\)

+

+where each \\(\tau(i)}\\) is a sampled trajectory.

+

+But we still have some mathematics work to do there: we need to simplify \\( \nabla_\theta log P(\tau|\theta) \\)

+

+We know that:

+

+\\(\nabla_\theta log P(\tau^{(i)};\theta)= \nabla_\theta log[ \mu(s_0) \prod_{t=0}^{H} P(s_{t+1}^{(i)}|s_{t}^{(i)}, a_{t}^{(i)}) \pi_\theta(a_{t}^{(i)}|s_{t}^{(i)})])\\

+

+Where \\(\mu(s_0)\\) is the initial state distribution and \\( P(s_{t+1}^{(i)}|s_{t}^{(i)}, a_{t}^{(i)}) \\) is the state transition dynamics of the MDP.

+

+We know that the log of a product is equal to the sum of the logs:

+

+\\(\nabla_\theta log P(\tau^{(i)};\theta)= \nabla_\theta \left[ \sum_{t=0}^{H} log P(s_{t+1}^{(i)}|s_{t}^{(i)} a_{t}^{(i)}) + \sum_{t=0}^{H} log \pi_\theta(a_{t}^{(i)}|s_{t}^{(i)})\right]\\)

+

+We also know that the gradient of the sum is equal to the sum of gradient:

+

+\\( \nabla_\theta log P(\tau^{(i)};\theta)= \nabla_\theta \sum_{t=0}^{H} log P(s_{t+1}^{(i)}|s_{t}^{(i)} a_{t}^{(i)}) + \nabla_\theta \sum_{t=0}^{H} log \pi_\theta(a_{t}^{(i)}|s_{t}^{(i)}) \\)

+

+Since neither initial state distribution or state transition dynamics of the MDP are dependent of \\(\theta\\), the derivate of both terms are 0. So we can remove them:

+

+Since:

+\\(\nabla_\theta \sum_{t=0}^{H} log P(s_{t+1}^{(i)}|s_{t}^{(i)} a_{t}^{(i)}) = 0 \\) and (\\ \nabla_\theta \mu(s_0) = 0\\)

+

+\\(\nabla_\theta log P(\tau^{(i)};\theta) = \nabla_\theta \sum_{t=0}^{H} log \pi_\theta(a_{t}^{(i)}|s_{t}^{(i)})\\)

+

+We can rewrite the gradient of the sum as the sum of gradients:

+

+(\\ \nabla_\theta log P(\tau^{(i)};\theta)= \sum_{t=0}^{H} \nabla_\theta log \pi_\theta(a_{t}^{(i)}|s_{t}^{(i)}) \\)

+

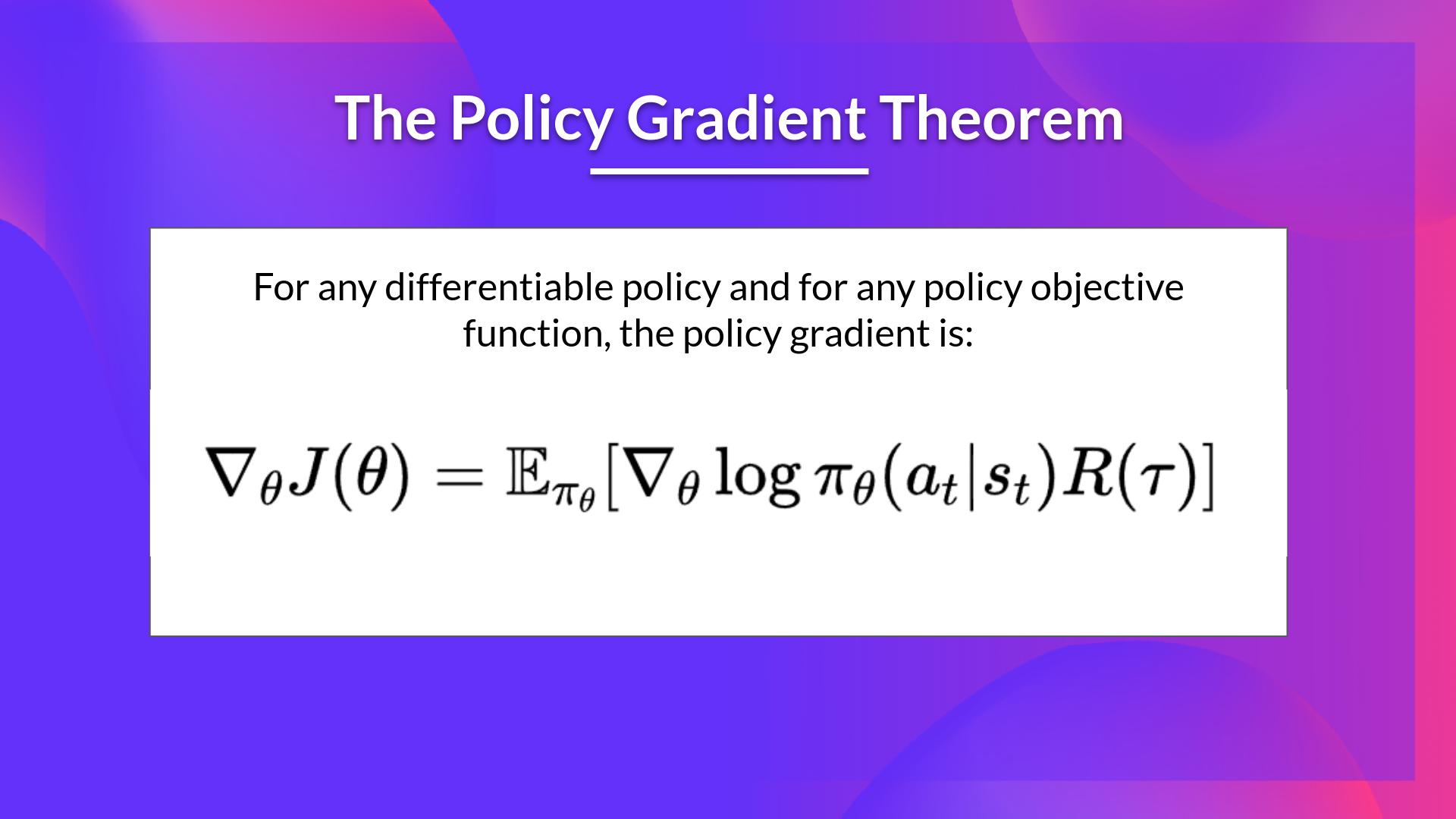

+So, the final formula for estimating the policy gradient is:

+

+\\( \nabla_{\theta} J(\theta) = \hat{g} = \frac{1}{m} \sum^{m}_{i=1} \sum^{H}_{t=0} \nabla_\theta \log \pi_\theta(a^{(i)}_{t} | s_{t}^{(i)})R(\tau^{(i)}) \\)

diff --git a/units/en/unit4/policy-gradient.mdx b/units/en/unit4/policy-gradient.mdx

index fbd0740..0ca624f 100644

--- a/units/en/unit4/policy-gradient.mdx

+++ b/units/en/unit4/policy-gradient.mdx

@@ -88,6 +88,8 @@ Fortunately we're going to use a solution called the Policy Gradient Theorem tha

+

+

+So we have:

+

+\\(\nabla_\theta J(\theta) = \nabla_\theta \sum_{\tau}P(\tau;\theta)R(\tau)\\)

+

+We can rewrite the gradient of the sum as the sum of the gradient:

+

+\\( = \sum_{\tau} \nabla_\theta P(\tau;\theta)R(\tau) \\)

+

+We then multiply every term in the sum by \\(\frac{P(\tau;\theta)}{P(\tau;\theta)}\\)(which is possible since it's = 1)

+

+\\( = \sum_{\tau} \frac{P(\tau;\theta)}{P(\tau;\theta)}\nabla_\theta P(\tau;\theta)R(\tau) \\)

+

+We can simplify further this since \\( \frac{P(\tau;\theta)}{P(\tau;\theta)}\nabla_\theta P(\tau;\theta) = P(\tau;\theta)\frac{\nabla_\theta P(\tau;\theta)}{P(\tau;\theta)} \\)

+

+\\(= \sum_{\tau} P(\tau;\theta) \frac{\nabla_\theta P(\tau;\theta)}{P(\tau;\theta)}R(\tau) \\)

+

+We can then use the *derivative log trick* (also called *likelihood ratio trick* or *REINFORCE trick*), a simple rule in calculus that implies that \\( \nabla_x log f(x) = \frac{\nabla_x f(x)}{f(x)} \\)

+

+So given we have \\(\frac{\nabla_\theta P(\tau;\theta)}{P(\tau;\theta)} \\) we transform it as \\(\nabla_\theta log P(\tau|\theta) \\)

+

+So this is our likelihood policy gradient:

+

+\\( \nabla_\theta J(\theta) = \sum_{\tau} P(\tau;\theta) \nabla_\theta log P(\tau;\theta) R(\tau) \\)

+

+

+Thanks for this new formula, we can estimate the gradient using trajectory samples (we can approximate the likelihood ratio policy gradient with sample-based estimate if you prefer).

+

+\\(\nabla_\theta J(\theta) = \frac{1}{m} \sum^{m}_{i=1} \nabla_\theta log P(\tau^{(i)};\theta)R(\tau^{(i)})\\)

+

+where each \\(\tau(i)}\\) is a sampled trajectory.

+

+But we still have some mathematics work to do there: we need to simplify \\( \nabla_\theta log P(\tau|\theta) \\)

+

+We know that:

+

+\\(\nabla_\theta log P(\tau^{(i)};\theta)= \nabla_\theta log[ \mu(s_0) \prod_{t=0}^{H} P(s_{t+1}^{(i)}|s_{t}^{(i)}, a_{t}^{(i)}) \pi_\theta(a_{t}^{(i)}|s_{t}^{(i)})])\\

+

+Where \\(\mu(s_0)\\) is the initial state distribution and \\( P(s_{t+1}^{(i)}|s_{t}^{(i)}, a_{t}^{(i)}) \\) is the state transition dynamics of the MDP.

+

+We know that the log of a product is equal to the sum of the logs:

+

+\\(\nabla_\theta log P(\tau^{(i)};\theta)= \nabla_\theta \left[ \sum_{t=0}^{H} log P(s_{t+1}^{(i)}|s_{t}^{(i)} a_{t}^{(i)}) + \sum_{t=0}^{H} log \pi_\theta(a_{t}^{(i)}|s_{t}^{(i)})\right]\\)

+

+We also know that the gradient of the sum is equal to the sum of gradient:

+

+\\( \nabla_\theta log P(\tau^{(i)};\theta)= \nabla_\theta \sum_{t=0}^{H} log P(s_{t+1}^{(i)}|s_{t}^{(i)} a_{t}^{(i)}) + \nabla_\theta \sum_{t=0}^{H} log \pi_\theta(a_{t}^{(i)}|s_{t}^{(i)}) \\)

+

+Since neither initial state distribution or state transition dynamics of the MDP are dependent of \\(\theta\\), the derivate of both terms are 0. So we can remove them:

+

+Since:

+\\(\nabla_\theta \sum_{t=0}^{H} log P(s_{t+1}^{(i)}|s_{t}^{(i)} a_{t}^{(i)}) = 0 \\) and (\\ \nabla_\theta \mu(s_0) = 0\\)

+

+\\(\nabla_\theta log P(\tau^{(i)};\theta) = \nabla_\theta \sum_{t=0}^{H} log \pi_\theta(a_{t}^{(i)}|s_{t}^{(i)})\\)

+

+We can rewrite the gradient of the sum as the sum of gradients:

+

+(\\ \nabla_\theta log P(\tau^{(i)};\theta)= \sum_{t=0}^{H} \nabla_\theta log \pi_\theta(a_{t}^{(i)}|s_{t}^{(i)}) \\)

+

+So, the final formula for estimating the policy gradient is:

+

+\\( \nabla_{\theta} J(\theta) = \hat{g} = \frac{1}{m} \sum^{m}_{i=1} \sum^{H}_{t=0} \nabla_\theta \log \pi_\theta(a^{(i)}_{t} | s_{t}^{(i)})R(\tau^{(i)}) \\)

diff --git a/units/en/unit4/policy-gradient.mdx b/units/en/unit4/policy-gradient.mdx

index fbd0740..0ca624f 100644

--- a/units/en/unit4/policy-gradient.mdx

+++ b/units/en/unit4/policy-gradient.mdx

@@ -88,6 +88,8 @@ Fortunately we're going to use a solution called the Policy Gradient Theorem tha

+If you want to understand how we derivate this formula that we will use to approximate the gradient, check the next (optional) section.

+

## The Reinforce algorithm (Monte Carlo Reinforce)

The Reinforce algorithm also called Monte-Carlo policy-gradient is a policy-gradient algorithm that **uses an estimated return from an entire episode to update the policy parameter** \\(\theta\\):

+If you want to understand how we derivate this formula that we will use to approximate the gradient, check the next (optional) section.

+

## The Reinforce algorithm (Monte Carlo Reinforce)

The Reinforce algorithm also called Monte-Carlo policy-gradient is a policy-gradient algorithm that **uses an estimated return from an entire episode to update the policy parameter** \\(\theta\\):

+

+

+2. The probability of a trajectory (given that action comes from //(/pi_/theta//)):

+

+

+

+

+2. The probability of a trajectory (given that action comes from //(/pi_/theta//)):

+

+ +

+

+So we have:

+

+\\(\nabla_\theta J(\theta) = \nabla_\theta \sum_{\tau}P(\tau;\theta)R(\tau)\\)

+

+We can rewrite the gradient of the sum as the sum of the gradient:

+

+\\( = \sum_{\tau} \nabla_\theta P(\tau;\theta)R(\tau) \\)

+

+We then multiply every term in the sum by \\(\frac{P(\tau;\theta)}{P(\tau;\theta)}\\)(which is possible since it's = 1)

+

+\\( = \sum_{\tau} \frac{P(\tau;\theta)}{P(\tau;\theta)}\nabla_\theta P(\tau;\theta)R(\tau) \\)

+

+We can simplify further this since \\( \frac{P(\tau;\theta)}{P(\tau;\theta)}\nabla_\theta P(\tau;\theta) = P(\tau;\theta)\frac{\nabla_\theta P(\tau;\theta)}{P(\tau;\theta)} \\)

+

+\\(= \sum_{\tau} P(\tau;\theta) \frac{\nabla_\theta P(\tau;\theta)}{P(\tau;\theta)}R(\tau) \\)

+

+We can then use the *derivative log trick* (also called *likelihood ratio trick* or *REINFORCE trick*), a simple rule in calculus that implies that \\( \nabla_x log f(x) = \frac{\nabla_x f(x)}{f(x)} \\)

+

+So given we have \\(\frac{\nabla_\theta P(\tau;\theta)}{P(\tau;\theta)} \\) we transform it as \\(\nabla_\theta log P(\tau|\theta) \\)

+

+So this is our likelihood policy gradient:

+

+\\( \nabla_\theta J(\theta) = \sum_{\tau} P(\tau;\theta) \nabla_\theta log P(\tau;\theta) R(\tau) \\)

+

+

+Thanks for this new formula, we can estimate the gradient using trajectory samples (we can approximate the likelihood ratio policy gradient with sample-based estimate if you prefer).

+

+\\(\nabla_\theta J(\theta) = \frac{1}{m} \sum^{m}_{i=1} \nabla_\theta log P(\tau^{(i)};\theta)R(\tau^{(i)})\\)

+

+where each \\(\tau(i)}\\) is a sampled trajectory.

+

+But we still have some mathematics work to do there: we need to simplify \\( \nabla_\theta log P(\tau|\theta) \\)

+

+We know that:

+

+\\(\nabla_\theta log P(\tau^{(i)};\theta)= \nabla_\theta log[ \mu(s_0) \prod_{t=0}^{H} P(s_{t+1}^{(i)}|s_{t}^{(i)}, a_{t}^{(i)}) \pi_\theta(a_{t}^{(i)}|s_{t}^{(i)})])\\

+

+Where \\(\mu(s_0)\\) is the initial state distribution and \\( P(s_{t+1}^{(i)}|s_{t}^{(i)}, a_{t}^{(i)}) \\) is the state transition dynamics of the MDP.

+

+We know that the log of a product is equal to the sum of the logs:

+

+\\(\nabla_\theta log P(\tau^{(i)};\theta)= \nabla_\theta \left[ \sum_{t=0}^{H} log P(s_{t+1}^{(i)}|s_{t}^{(i)} a_{t}^{(i)}) + \sum_{t=0}^{H} log \pi_\theta(a_{t}^{(i)}|s_{t}^{(i)})\right]\\)

+

+We also know that the gradient of the sum is equal to the sum of gradient:

+

+\\( \nabla_\theta log P(\tau^{(i)};\theta)= \nabla_\theta \sum_{t=0}^{H} log P(s_{t+1}^{(i)}|s_{t}^{(i)} a_{t}^{(i)}) + \nabla_\theta \sum_{t=0}^{H} log \pi_\theta(a_{t}^{(i)}|s_{t}^{(i)}) \\)

+

+Since neither initial state distribution or state transition dynamics of the MDP are dependent of \\(\theta\\), the derivate of both terms are 0. So we can remove them:

+

+Since:

+\\(\nabla_\theta \sum_{t=0}^{H} log P(s_{t+1}^{(i)}|s_{t}^{(i)} a_{t}^{(i)}) = 0 \\) and (\\ \nabla_\theta \mu(s_0) = 0\\)

+

+\\(\nabla_\theta log P(\tau^{(i)};\theta) = \nabla_\theta \sum_{t=0}^{H} log \pi_\theta(a_{t}^{(i)}|s_{t}^{(i)})\\)

+

+We can rewrite the gradient of the sum as the sum of gradients:

+

+(\\ \nabla_\theta log P(\tau^{(i)};\theta)= \sum_{t=0}^{H} \nabla_\theta log \pi_\theta(a_{t}^{(i)}|s_{t}^{(i)}) \\)

+

+So, the final formula for estimating the policy gradient is:

+

+\\( \nabla_{\theta} J(\theta) = \hat{g} = \frac{1}{m} \sum^{m}_{i=1} \sum^{H}_{t=0} \nabla_\theta \log \pi_\theta(a^{(i)}_{t} | s_{t}^{(i)})R(\tau^{(i)}) \\)

diff --git a/units/en/unit4/policy-gradient.mdx b/units/en/unit4/policy-gradient.mdx

index fbd0740..0ca624f 100644

--- a/units/en/unit4/policy-gradient.mdx

+++ b/units/en/unit4/policy-gradient.mdx

@@ -88,6 +88,8 @@ Fortunately we're going to use a solution called the Policy Gradient Theorem tha

+

+

+So we have:

+

+\\(\nabla_\theta J(\theta) = \nabla_\theta \sum_{\tau}P(\tau;\theta)R(\tau)\\)

+

+We can rewrite the gradient of the sum as the sum of the gradient:

+

+\\( = \sum_{\tau} \nabla_\theta P(\tau;\theta)R(\tau) \\)

+

+We then multiply every term in the sum by \\(\frac{P(\tau;\theta)}{P(\tau;\theta)}\\)(which is possible since it's = 1)

+

+\\( = \sum_{\tau} \frac{P(\tau;\theta)}{P(\tau;\theta)}\nabla_\theta P(\tau;\theta)R(\tau) \\)

+

+We can simplify further this since \\( \frac{P(\tau;\theta)}{P(\tau;\theta)}\nabla_\theta P(\tau;\theta) = P(\tau;\theta)\frac{\nabla_\theta P(\tau;\theta)}{P(\tau;\theta)} \\)

+

+\\(= \sum_{\tau} P(\tau;\theta) \frac{\nabla_\theta P(\tau;\theta)}{P(\tau;\theta)}R(\tau) \\)

+

+We can then use the *derivative log trick* (also called *likelihood ratio trick* or *REINFORCE trick*), a simple rule in calculus that implies that \\( \nabla_x log f(x) = \frac{\nabla_x f(x)}{f(x)} \\)

+

+So given we have \\(\frac{\nabla_\theta P(\tau;\theta)}{P(\tau;\theta)} \\) we transform it as \\(\nabla_\theta log P(\tau|\theta) \\)

+

+So this is our likelihood policy gradient:

+

+\\( \nabla_\theta J(\theta) = \sum_{\tau} P(\tau;\theta) \nabla_\theta log P(\tau;\theta) R(\tau) \\)

+

+

+Thanks for this new formula, we can estimate the gradient using trajectory samples (we can approximate the likelihood ratio policy gradient with sample-based estimate if you prefer).

+

+\\(\nabla_\theta J(\theta) = \frac{1}{m} \sum^{m}_{i=1} \nabla_\theta log P(\tau^{(i)};\theta)R(\tau^{(i)})\\)

+

+where each \\(\tau(i)}\\) is a sampled trajectory.

+

+But we still have some mathematics work to do there: we need to simplify \\( \nabla_\theta log P(\tau|\theta) \\)

+

+We know that:

+

+\\(\nabla_\theta log P(\tau^{(i)};\theta)= \nabla_\theta log[ \mu(s_0) \prod_{t=0}^{H} P(s_{t+1}^{(i)}|s_{t}^{(i)}, a_{t}^{(i)}) \pi_\theta(a_{t}^{(i)}|s_{t}^{(i)})])\\

+

+Where \\(\mu(s_0)\\) is the initial state distribution and \\( P(s_{t+1}^{(i)}|s_{t}^{(i)}, a_{t}^{(i)}) \\) is the state transition dynamics of the MDP.

+

+We know that the log of a product is equal to the sum of the logs:

+

+\\(\nabla_\theta log P(\tau^{(i)};\theta)= \nabla_\theta \left[ \sum_{t=0}^{H} log P(s_{t+1}^{(i)}|s_{t}^{(i)} a_{t}^{(i)}) + \sum_{t=0}^{H} log \pi_\theta(a_{t}^{(i)}|s_{t}^{(i)})\right]\\)

+

+We also know that the gradient of the sum is equal to the sum of gradient:

+

+\\( \nabla_\theta log P(\tau^{(i)};\theta)= \nabla_\theta \sum_{t=0}^{H} log P(s_{t+1}^{(i)}|s_{t}^{(i)} a_{t}^{(i)}) + \nabla_\theta \sum_{t=0}^{H} log \pi_\theta(a_{t}^{(i)}|s_{t}^{(i)}) \\)

+

+Since neither initial state distribution or state transition dynamics of the MDP are dependent of \\(\theta\\), the derivate of both terms are 0. So we can remove them:

+

+Since:

+\\(\nabla_\theta \sum_{t=0}^{H} log P(s_{t+1}^{(i)}|s_{t}^{(i)} a_{t}^{(i)}) = 0 \\) and (\\ \nabla_\theta \mu(s_0) = 0\\)

+

+\\(\nabla_\theta log P(\tau^{(i)};\theta) = \nabla_\theta \sum_{t=0}^{H} log \pi_\theta(a_{t}^{(i)}|s_{t}^{(i)})\\)

+

+We can rewrite the gradient of the sum as the sum of gradients:

+

+(\\ \nabla_\theta log P(\tau^{(i)};\theta)= \sum_{t=0}^{H} \nabla_\theta log \pi_\theta(a_{t}^{(i)}|s_{t}^{(i)}) \\)

+

+So, the final formula for estimating the policy gradient is:

+

+\\( \nabla_{\theta} J(\theta) = \hat{g} = \frac{1}{m} \sum^{m}_{i=1} \sum^{H}_{t=0} \nabla_\theta \log \pi_\theta(a^{(i)}_{t} | s_{t}^{(i)})R(\tau^{(i)}) \\)

diff --git a/units/en/unit4/policy-gradient.mdx b/units/en/unit4/policy-gradient.mdx

index fbd0740..0ca624f 100644

--- a/units/en/unit4/policy-gradient.mdx

+++ b/units/en/unit4/policy-gradient.mdx

@@ -88,6 +88,8 @@ Fortunately we're going to use a solution called the Policy Gradient Theorem tha

+If you want to understand how we derivate this formula that we will use to approximate the gradient, check the next (optional) section.

+

## The Reinforce algorithm (Monte Carlo Reinforce)

The Reinforce algorithm also called Monte-Carlo policy-gradient is a policy-gradient algorithm that **uses an estimated return from an entire episode to update the policy parameter** \\(\theta\\):

+If you want to understand how we derivate this formula that we will use to approximate the gradient, check the next (optional) section.

+

## The Reinforce algorithm (Monte Carlo Reinforce)

The Reinforce algorithm also called Monte-Carlo policy-gradient is a policy-gradient algorithm that **uses an estimated return from an entire episode to update the policy parameter** \\(\theta\\):