diff --git a/README.md b/README.md

index d9cd483..c39366f 100644

--- a/README.md

+++ b/README.md

@@ -10,11 +10,31 @@ This repository contains the Deep Reinforcement Learning Course mdx files and no

- **Sign up here** ➡️➡️➡️ http://eepurl.com/ic5ZUD

+

+

+## Citing the project

+

+To cite this repository in publications:

+

+```bibtex

+@misc{deep-rl-course,

+ author = {Simonini, Thomas and Sanseviero, Omar},

+ title = {The Hugging Face Deep Reinforcement Learning Class},

+ year = {2023},

+ publisher = {GitHub},

+ journal = {GitHub repository},

+ howpublished = {\url{https://github.com/huggingface/deep-rl-class}},

+}

+```

+

+

+

+

# The documentation below is for v1.0 (deprecated)

We're launching a **new version (v2.0) of the course starting December the 5th,**

@@ -211,18 +231,3 @@ If it's not the case yet, you can check these free resources:

Yes 🎉. You'll **need to upload the eight models with the eight hands-on.**

-

-## Citing the project

-

-To cite this repository in publications:

-

-```bibtex

-@misc{deep-rl-class,

- author = {Simonini, Thomas and Sanseviero, Omar},

- title = {The Hugging Face Deep Reinforcement Learning Class},

- year = {2022},

- publisher = {GitHub},

- journal = {GitHub repository},

- howpublished = {\url{https://github.com/huggingface/deep-rl-class}},

-}

-```

diff --git a/notebooks/bonus-unit1/bonus-unit1.ipynb b/notebooks/bonus-unit1/bonus-unit1.ipynb

index fb128e4..5f87f23 100644

--- a/notebooks/bonus-unit1/bonus-unit1.ipynb

+++ b/notebooks/bonus-unit1/bonus-unit1.ipynb

@@ -174,7 +174,7 @@

"source": [

"%%capture\n",

"# Clone this specific repository (can take 3min)\n",

- "!git clone --depth 1 --branch hf-integration https://github.com/huggingface/ml-agents"

+ "!git clone --depth 1 --branch hf-integration-save https://github.com/huggingface/ml-agents"

]

},

{

@@ -582,4 +582,4 @@

},

"nbformat": 4,

"nbformat_minor": 0

-}

\ No newline at end of file

+}

diff --git a/notebooks/unit5/unit5.ipynb b/notebooks/unit5/unit5.ipynb

index a19498b..aeed808 100644

--- a/notebooks/unit5/unit5.ipynb

+++ b/notebooks/unit5/unit5.ipynb

@@ -200,7 +200,7 @@

"source": [

"%%capture\n",

"# Clone the repository\n",

- "!git clone --depth 1 --branch hf-integration https://github.com/huggingface/ml-agents"

+ "!git clone --depth 1 --branch hf-integration-save https://github.com/huggingface/ml-agents"

]

},

{

@@ -865,4 +865,4 @@

},

"nbformat": 4,

"nbformat_minor": 0

-}

\ No newline at end of file

+}

diff --git a/units/en/communication/certification.mdx b/units/en/communication/certification.mdx

index 8274fb9..6d7ab34 100644

--- a/units/en/communication/certification.mdx

+++ b/units/en/communication/certification.mdx

@@ -3,8 +3,8 @@

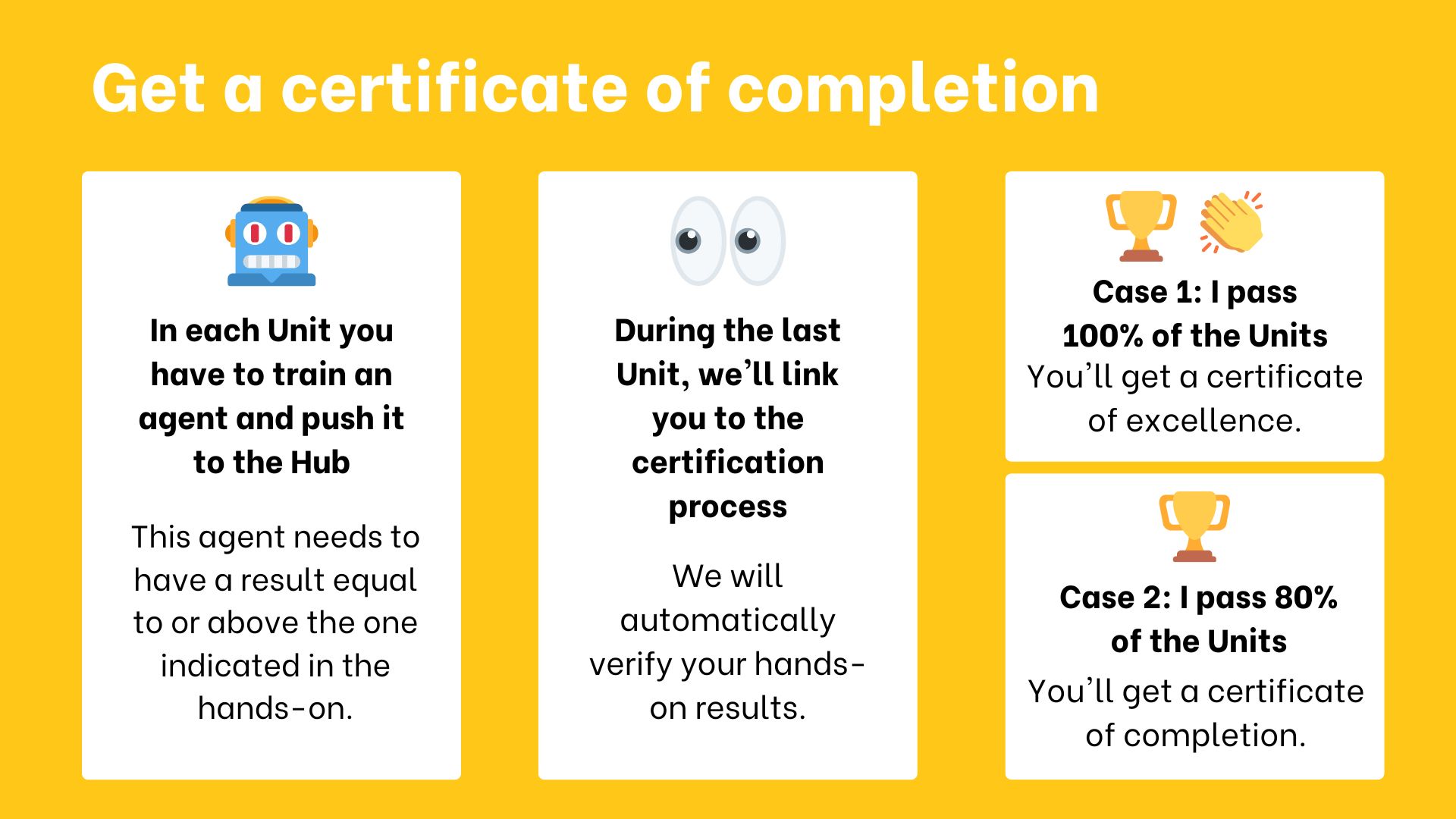

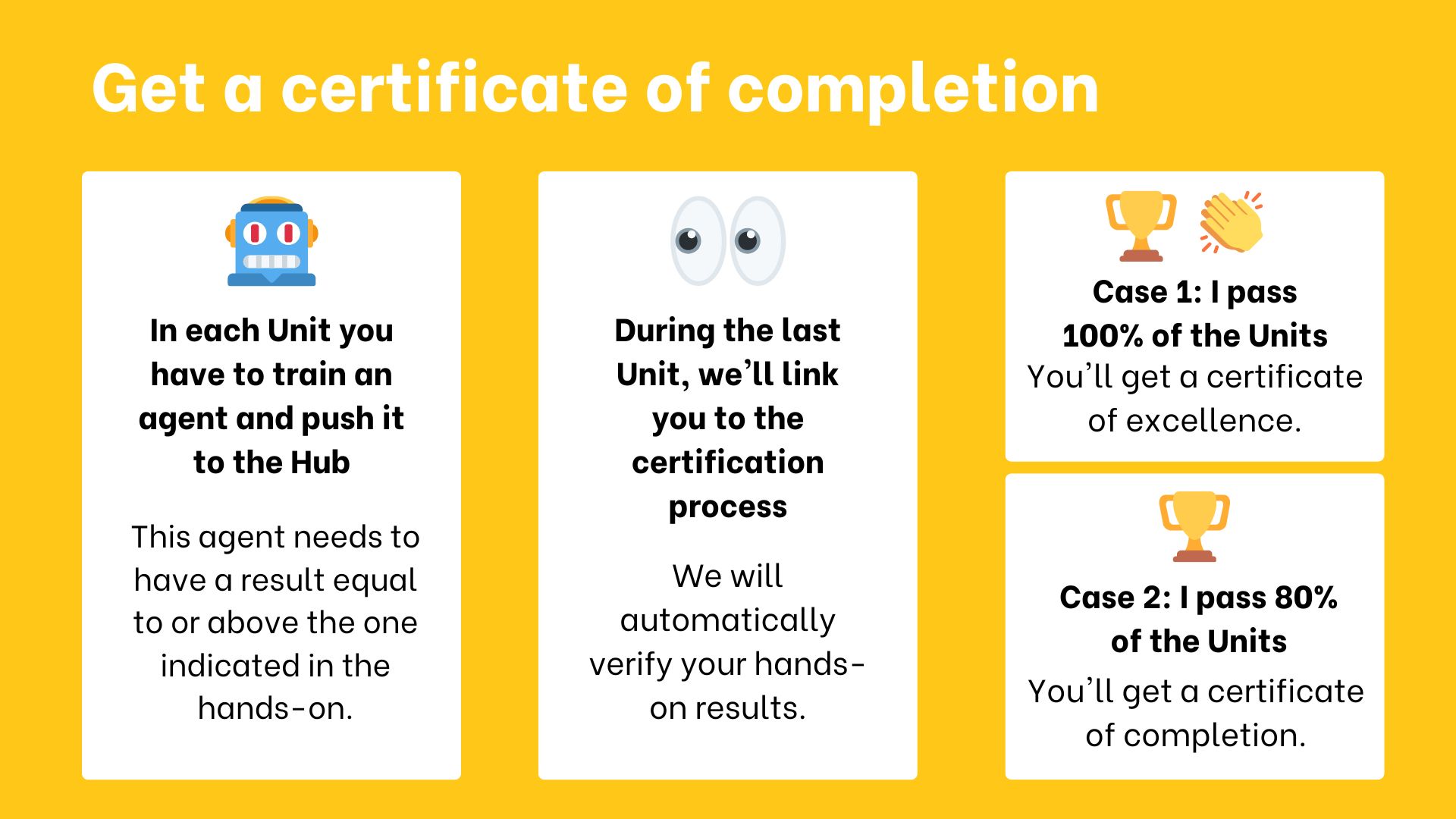

The certification process is **completely free**:

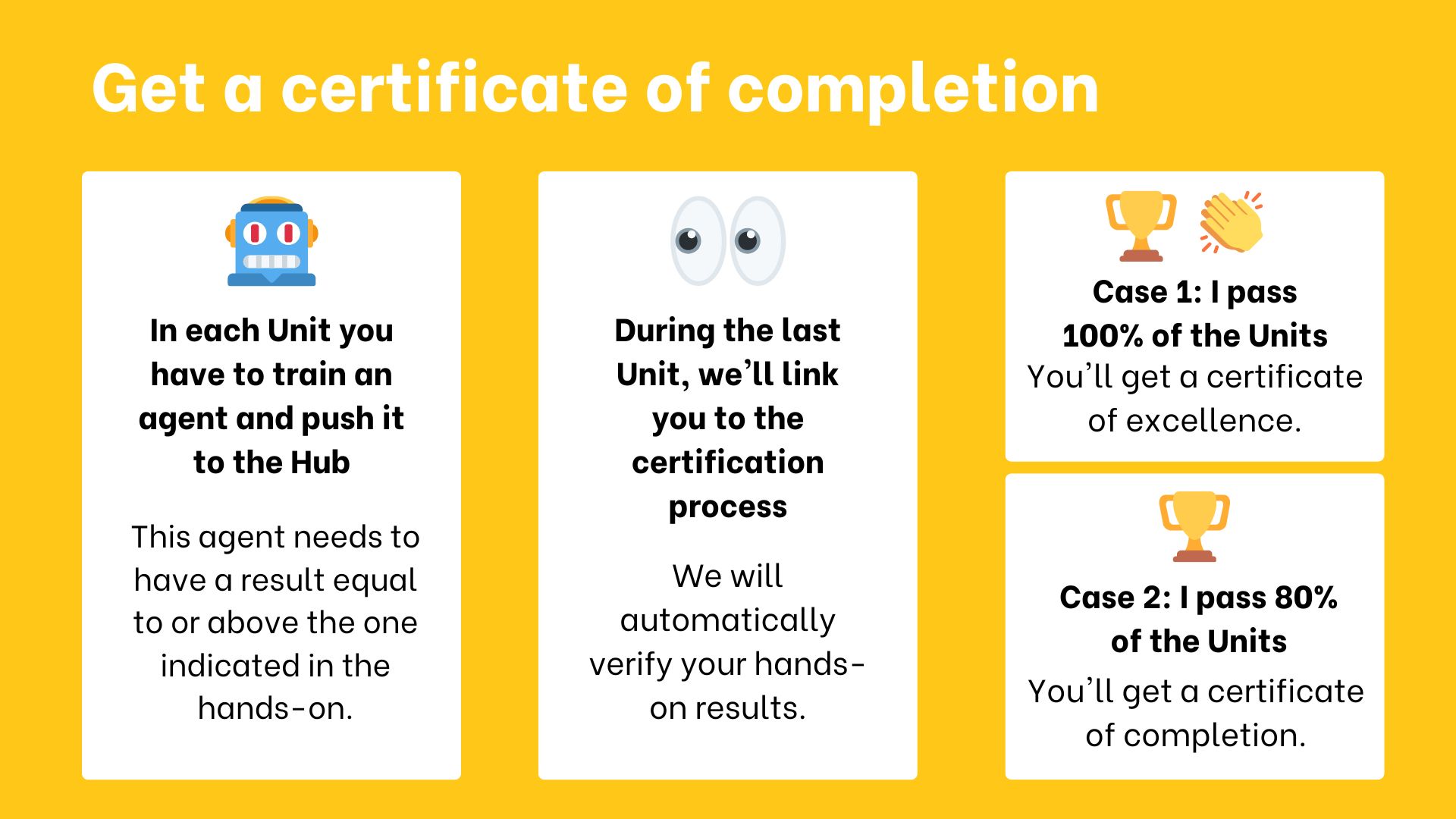

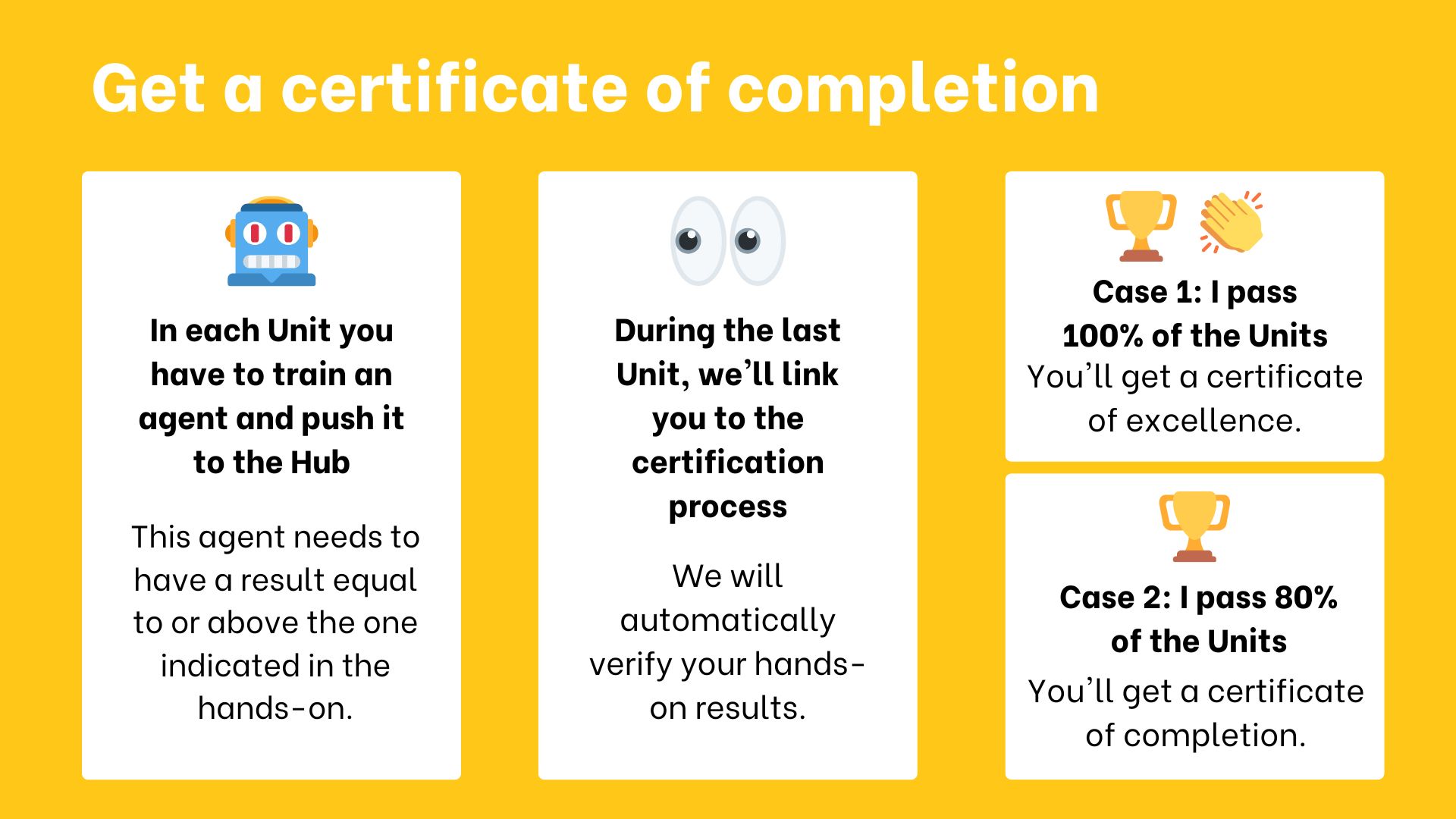

-- To get a *certificate of completion*: you need **to pass 80% of the assignments** before the end of April 2023.

-- To get a *certificate of excellence*: you need **to pass 100% of the assignments** before the end of April 2023.

+- To get a *certificate of completion*: you need **to pass 80% of the assignments** before the end of July 2023.

+- To get a *certificate of excellence*: you need **to pass 100% of the assignments** before the end of July 2023.

diff --git a/units/en/unit0/introduction.mdx b/units/en/unit0/introduction.mdx

index 57e6640..07c60fa 100644

--- a/units/en/unit0/introduction.mdx

+++ b/units/en/unit0/introduction.mdx

@@ -2,7 +2,7 @@

-Welcome to the most fascinating topic in Artificial Intelligence: Deep Reinforcement Learning.

+Welcome to the most fascinating topic in Artificial Intelligence: **Deep Reinforcement Learning**.

This course will **teach you about Deep Reinforcement Learning from beginner to expert**. It’s completely free and open-source!

@@ -23,28 +23,35 @@ In this course, you will:

- 📖 Study Deep Reinforcement Learning in **theory and practice.**

- 🧑💻 Learn to **use famous Deep RL libraries** such as [Stable Baselines3](https://stable-baselines3.readthedocs.io/en/master/), [RL Baselines3 Zoo](https://github.com/DLR-RM/rl-baselines3-zoo), [Sample Factory](https://samplefactory.dev/) and [CleanRL](https://github.com/vwxyzjn/cleanrl).

-- 🤖 **Train agents in unique environments** such as [SnowballFight](https://huggingface.co/spaces/ThomasSimonini/SnowballFight), [Huggy the Doggo 🐶](https://huggingface.co/spaces/ThomasSimonini/Huggy), [VizDoom (Doom)](https://vizdoom.cs.put.edu.pl/) and classical ones such as [Space Invaders](https://www.gymlibrary.dev/environments/atari/), [PyBullet](https://pybullet.org/wordpress/) and more.

+- 🤖 **Train agents in unique environments** such as [SnowballFight](https://huggingface.co/spaces/ThomasSimonini/SnowballFight), [Huggy the Doggo 🐶](https://huggingface.co/spaces/ThomasSimonini/Huggy), [VizDoom (Doom)](https://vizdoom.cs.put.edu.pl/) and classical ones such as [Space Invaders](https://gymnasium.farama.org/environments/atari/space_invaders/), [PyBullet](https://pybullet.org/wordpress/) and more.

- 💾 Share your **trained agents with one line of code to the Hub** and also download powerful agents from the community.

- 🏆 Participate in challenges where you will **evaluate your agents against other teams. You'll also get to play against the agents you'll train.**

+- 🎓 **Earn a certificate of completion** by completing 80% of the assignments.

And more!

At the end of this course, **you’ll get a solid foundation from the basics to the SOTA (state-of-the-art) of methods**.

-You can find the syllabus on our website 👉 here

-

Don’t forget to **sign up to the course** (we are collecting your email to be able to **send you the links when each Unit is published and give you information about the challenges and updates).**

Sign up 👉 here

## What does the course look like? [[course-look-like]]

+

The course is composed of:

-- *A theory part*: where you learn a **concept in theory (article)**.

+- *A theory part*: where you learn a **concept in theory**.

- *A hands-on*: where you’ll learn **to use famous Deep RL libraries** to train your agents in unique environments. These hands-on will be **Google Colab notebooks with companion tutorial videos** if you prefer learning with video format!

-- *Challenges*: you'll get to use your agent to compete against other agents in different challenges. There will also be leaderboards for you to compare the agents' performance.

+- *Challenges*: you'll get to put your agent to compete against other agents in different challenges. There will also be [a leaderboard](https://huggingface.co/spaces/huggingface-projects/Deep-Reinforcement-Learning-Leaderboard) for you to compare the agents' performance.

+

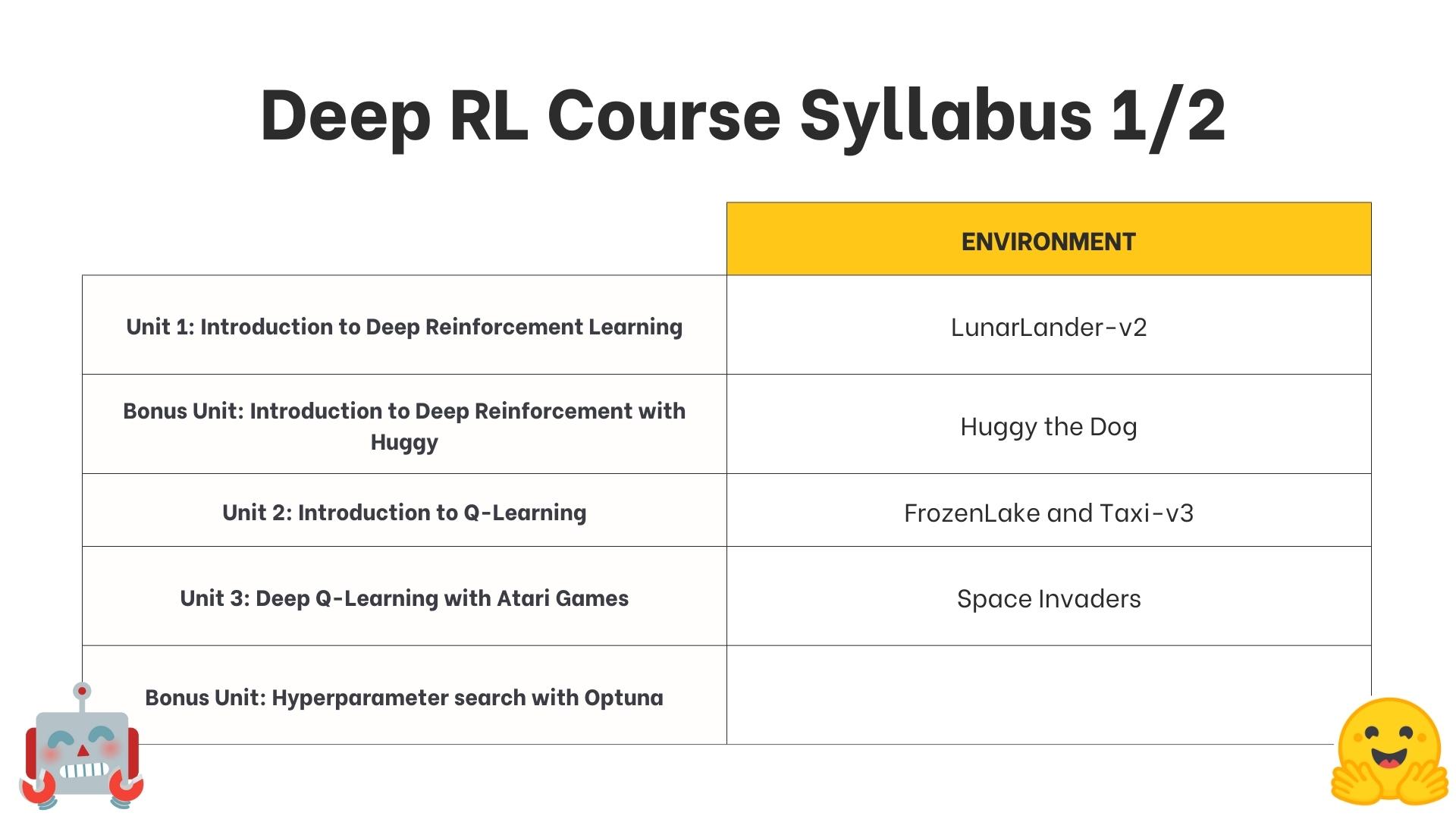

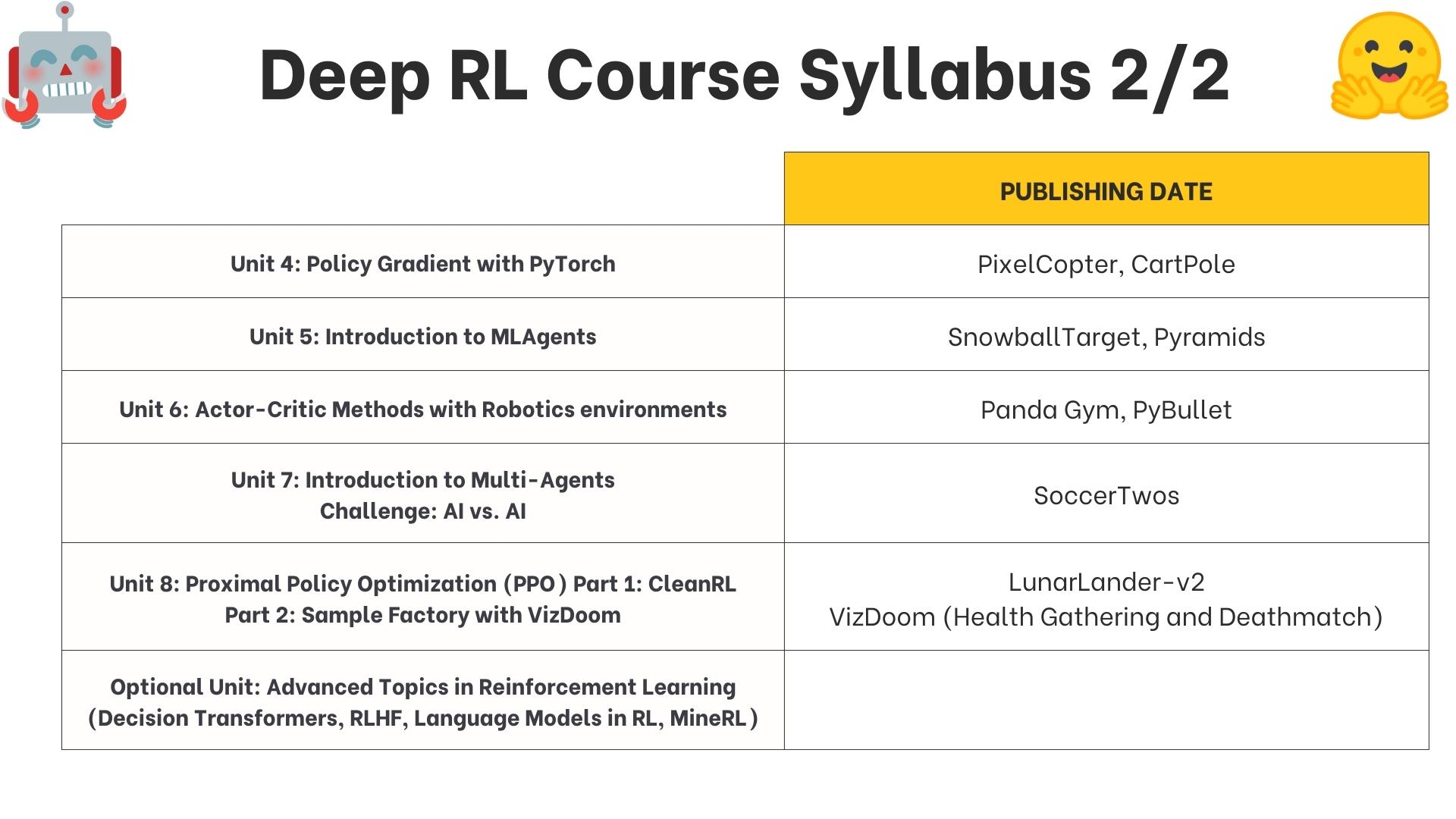

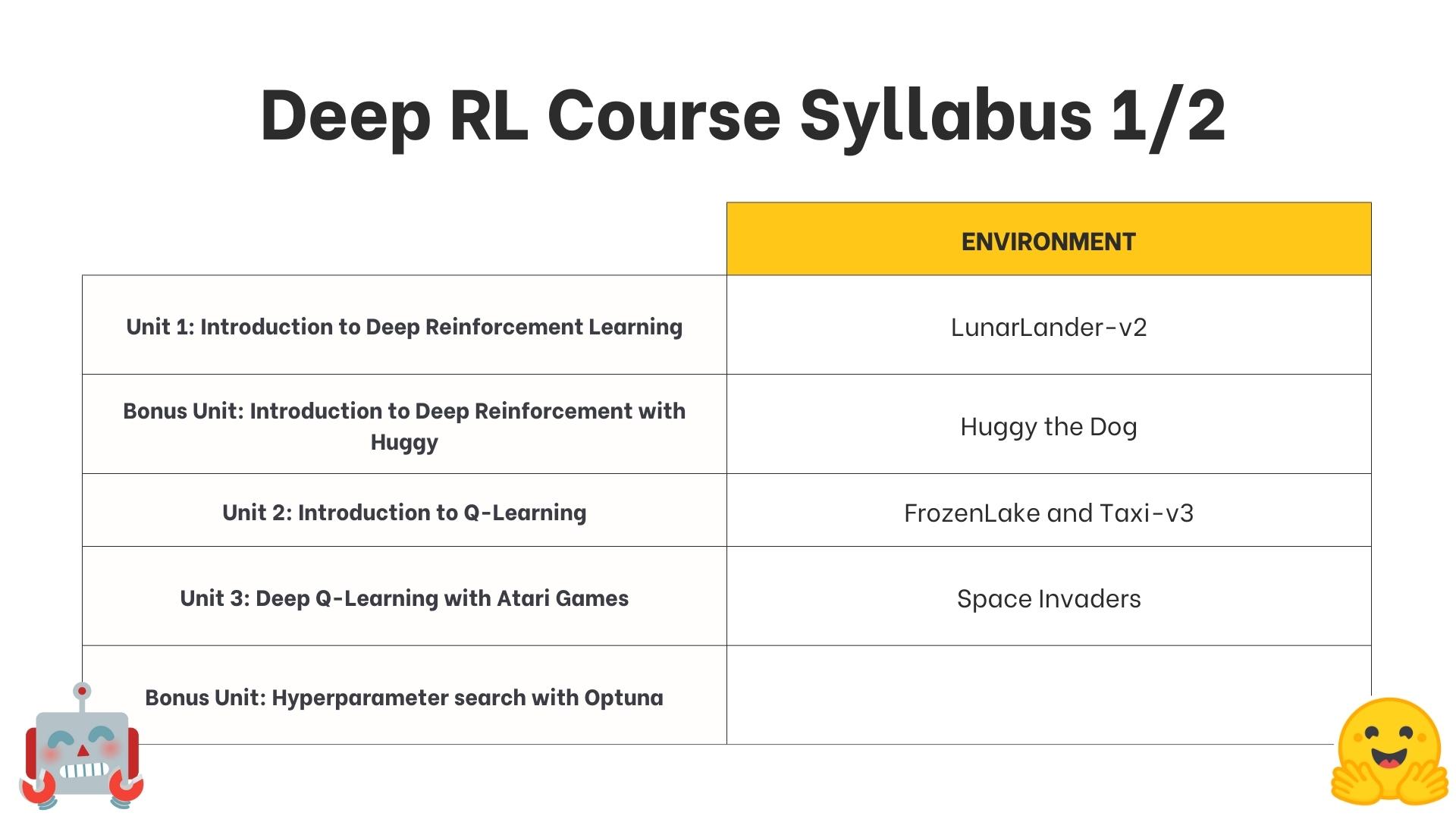

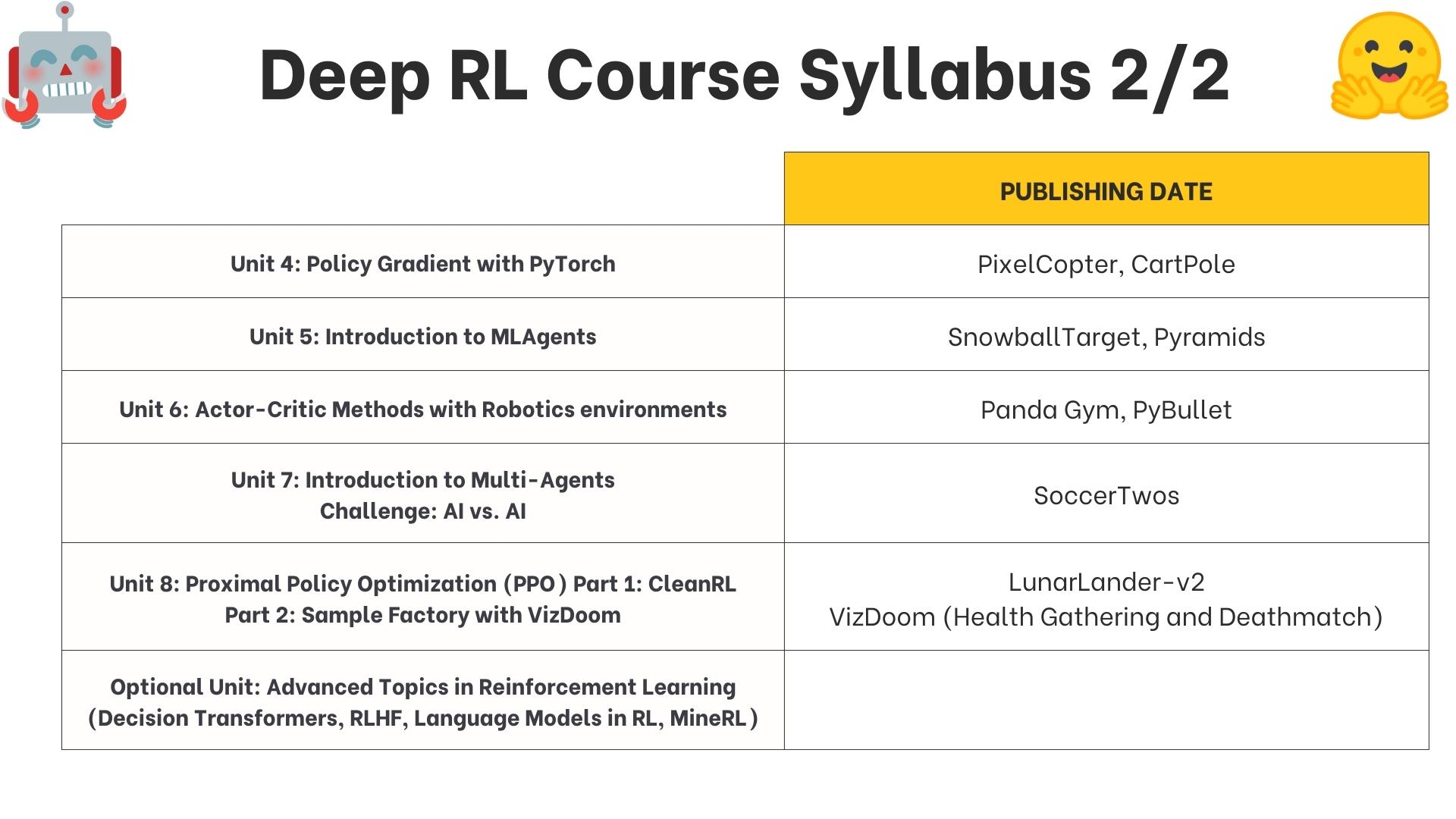

+## What's the syllabus? [[syllabus]]

+

+This is the course's syllabus:

+

+

## Two paths: choose your own adventure [[two-paths]]

@@ -52,8 +59,8 @@ The course is composed of:

You can choose to follow this course either:

-- *To get a certificate of completion*: you need to complete 80% of the assignments before the beginning of June 2023.

-- *To get a certificate of honors*: you need to complete 100% of the assignments before the beginning of June 2023.

+- *To get a certificate of completion*: you need to complete 80% of the assignments before the end of July 2023.

+- *To get a certificate of honors*: you need to complete 100% of the assignments before the end of July 2023.

- *As a simple audit*: you can participate in all challenges and do assignments if you want, but you have no deadlines.

Both paths **are completely free**.

@@ -65,8 +72,8 @@ You don't need to tell us which path you choose. **If you get more than 80% of t

The certification process is **completely free**:

-- *To get a certificate of completion*: you need to complete 80% of the assignments before the beginning of June 2023.

-- *To get a certificate of honors*: you need to complete 100% of the assignments before the beginning of June 2023.

+- *To get a certificate of completion*: you need to complete 80% of the assignments before the end of July 2023.

+- *To get a certificate of honors*: you need to complete 100% of the assignments before the end of July 2023.

@@ -74,7 +81,7 @@ The certification process is **completely free**:

To get most of the course, we have some advice:

-1. Join or create study groups in Discord : studying in groups is always easier. To do that, you need to join our discord server. If you're new to Discord, no worries! We have some tools that will help you learn about it.

+1. Join study groups in Discord : studying in groups is always easier. To do that, you need to join our discord server. If you're new to Discord, no worries! We have some tools that will help you learn about it.

2. **Do the quizzes and assignments**: the best way to learn is to do and test yourself.

3. **Define a schedule to stay in sync**: you can use our recommended pace schedule below or create yours.

@@ -90,14 +97,6 @@ You need only 3 things:

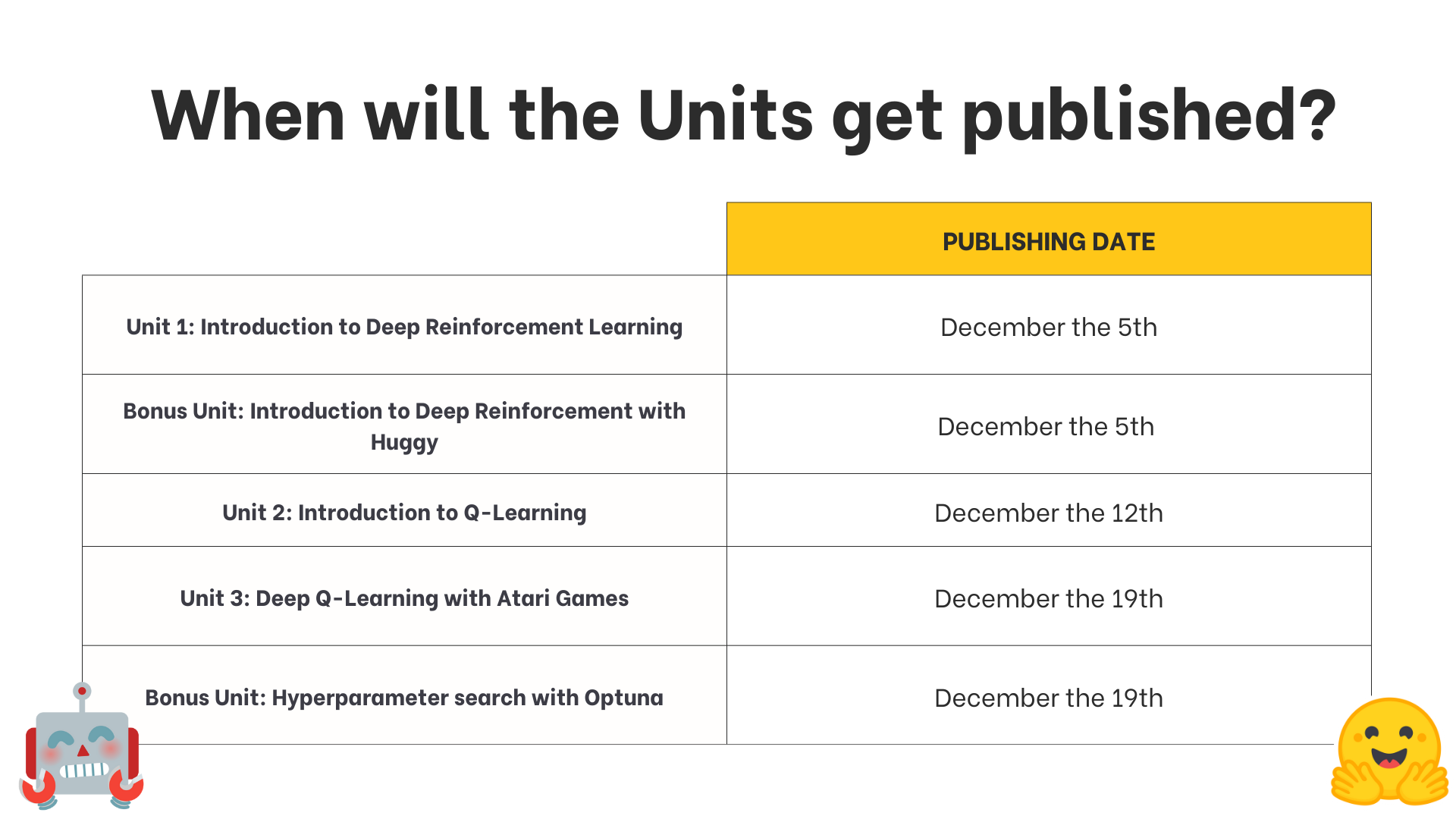

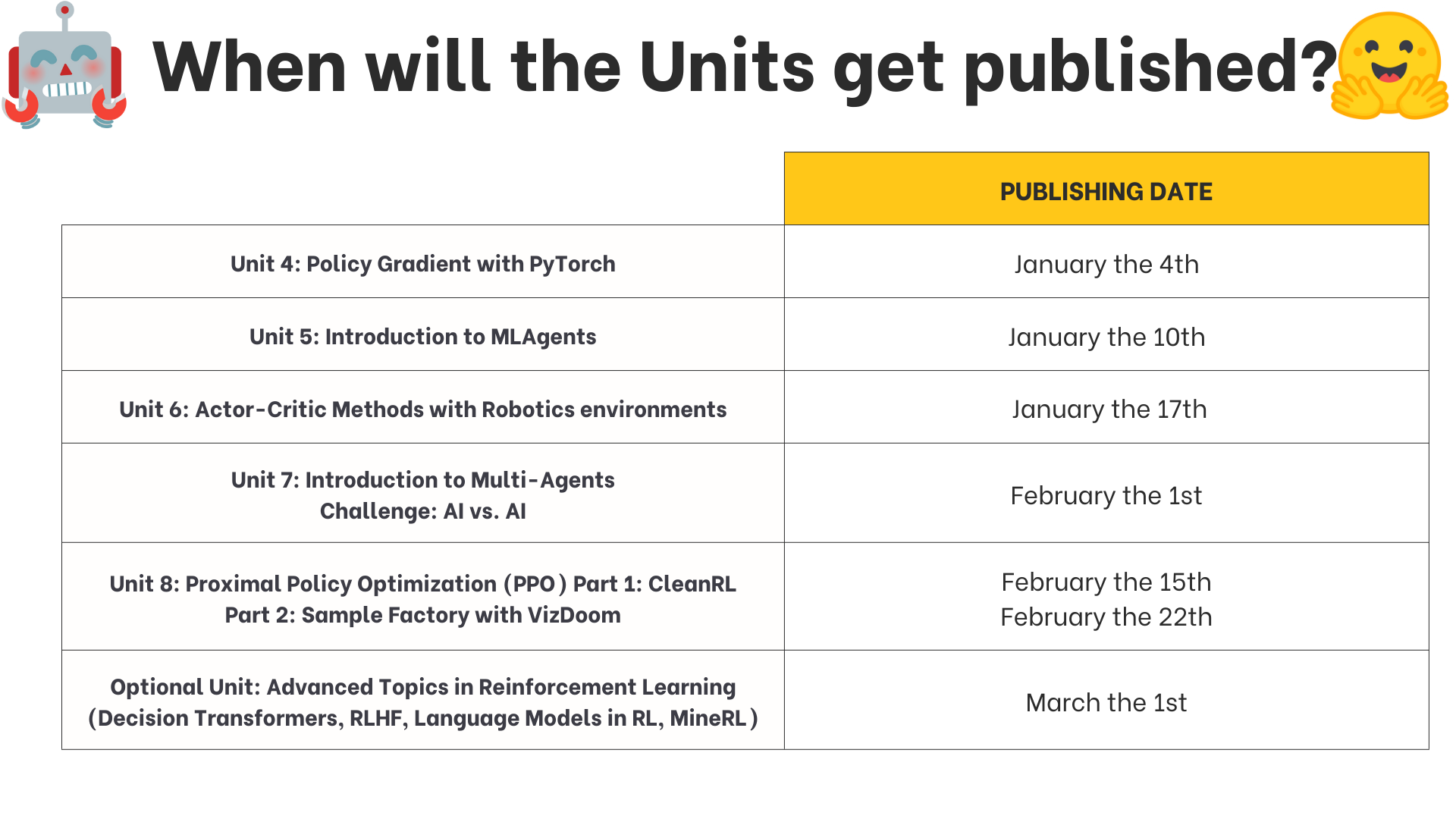

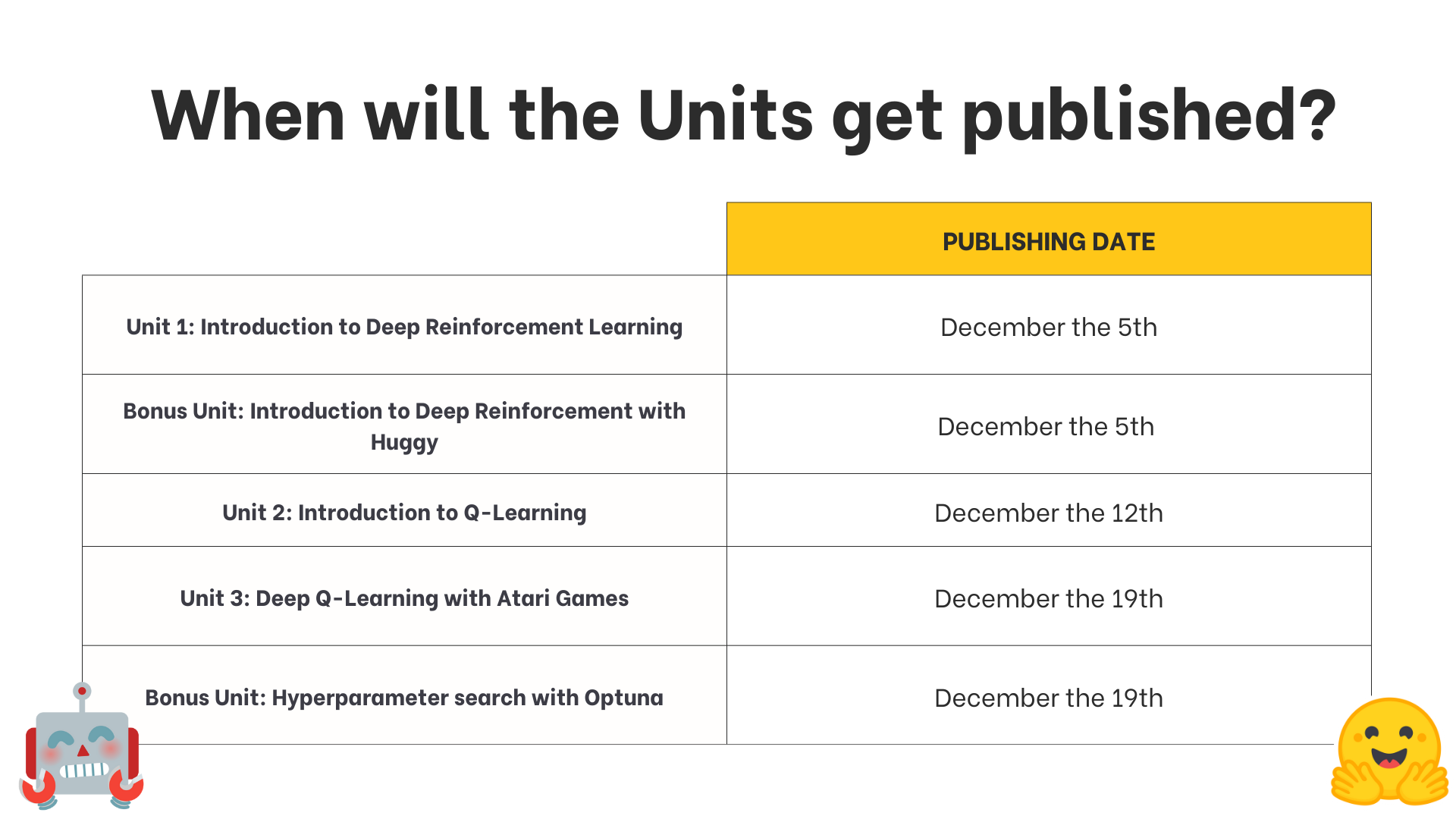

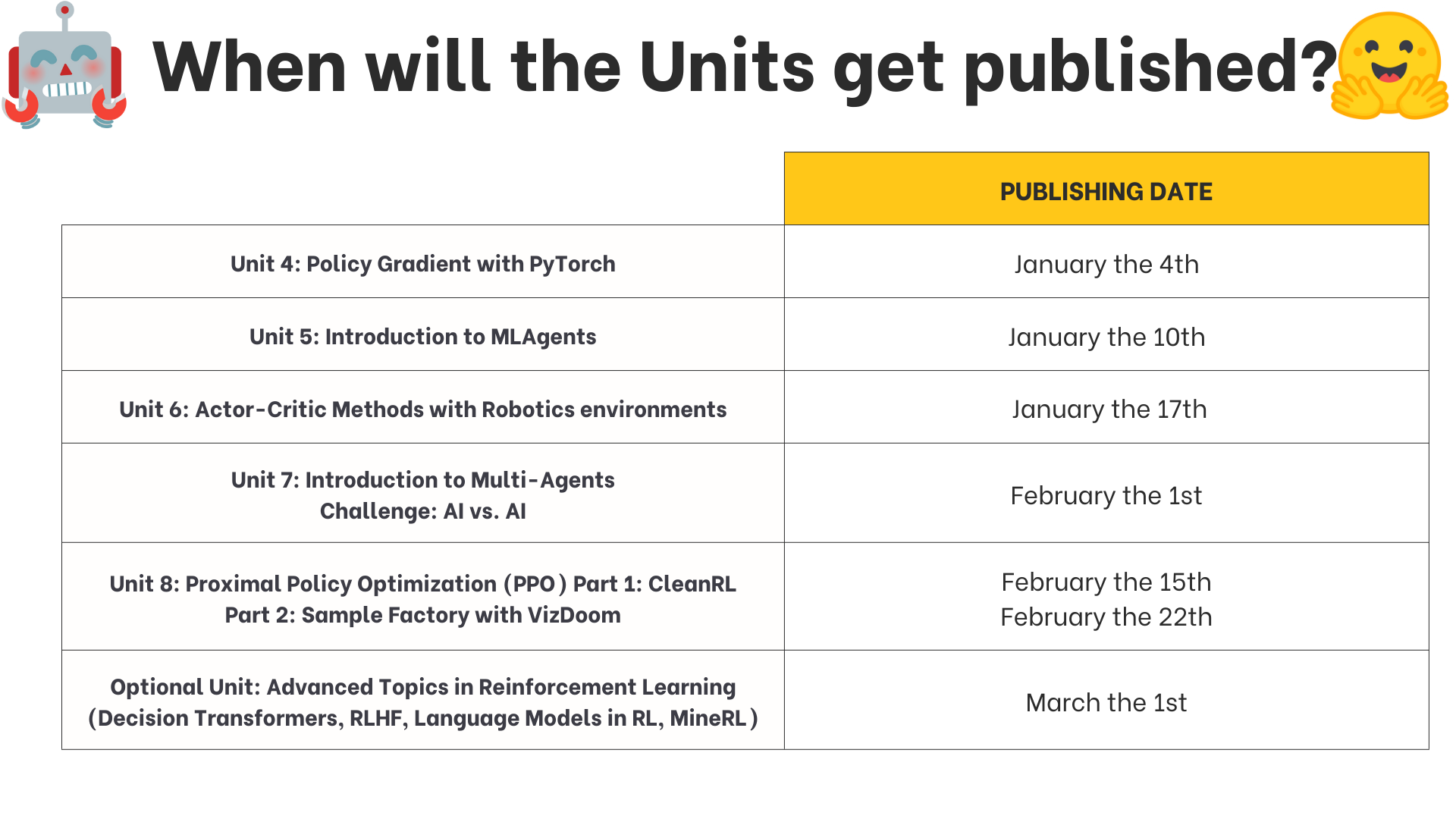

-## What is the publishing schedule? [[publishing-schedule]]

-

-

-We publish **a new unit every Tuesday**.

-

-

## What is the recommended pace? [[recommended-pace]]

@@ -124,14 +123,11 @@ About the team:

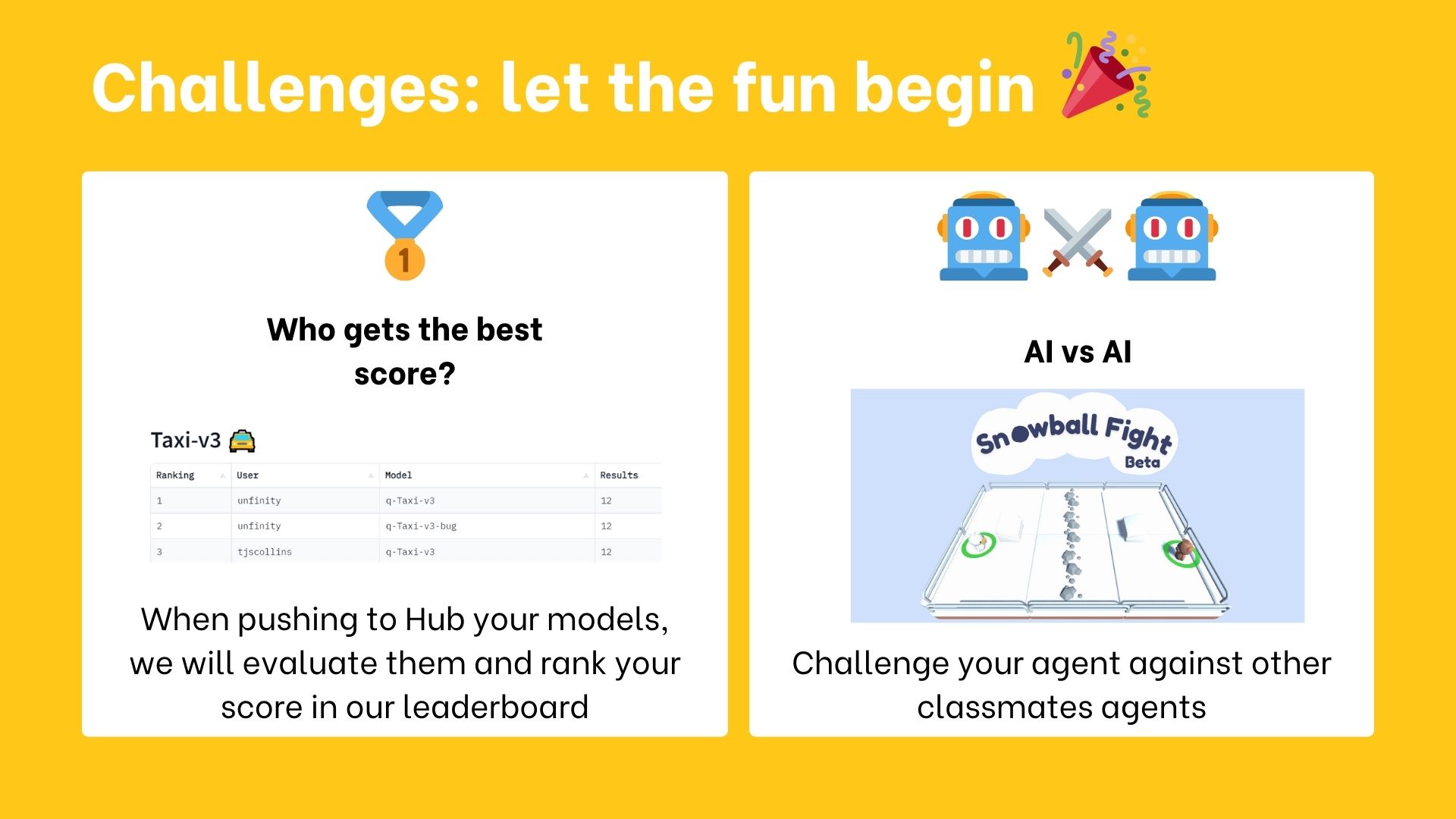

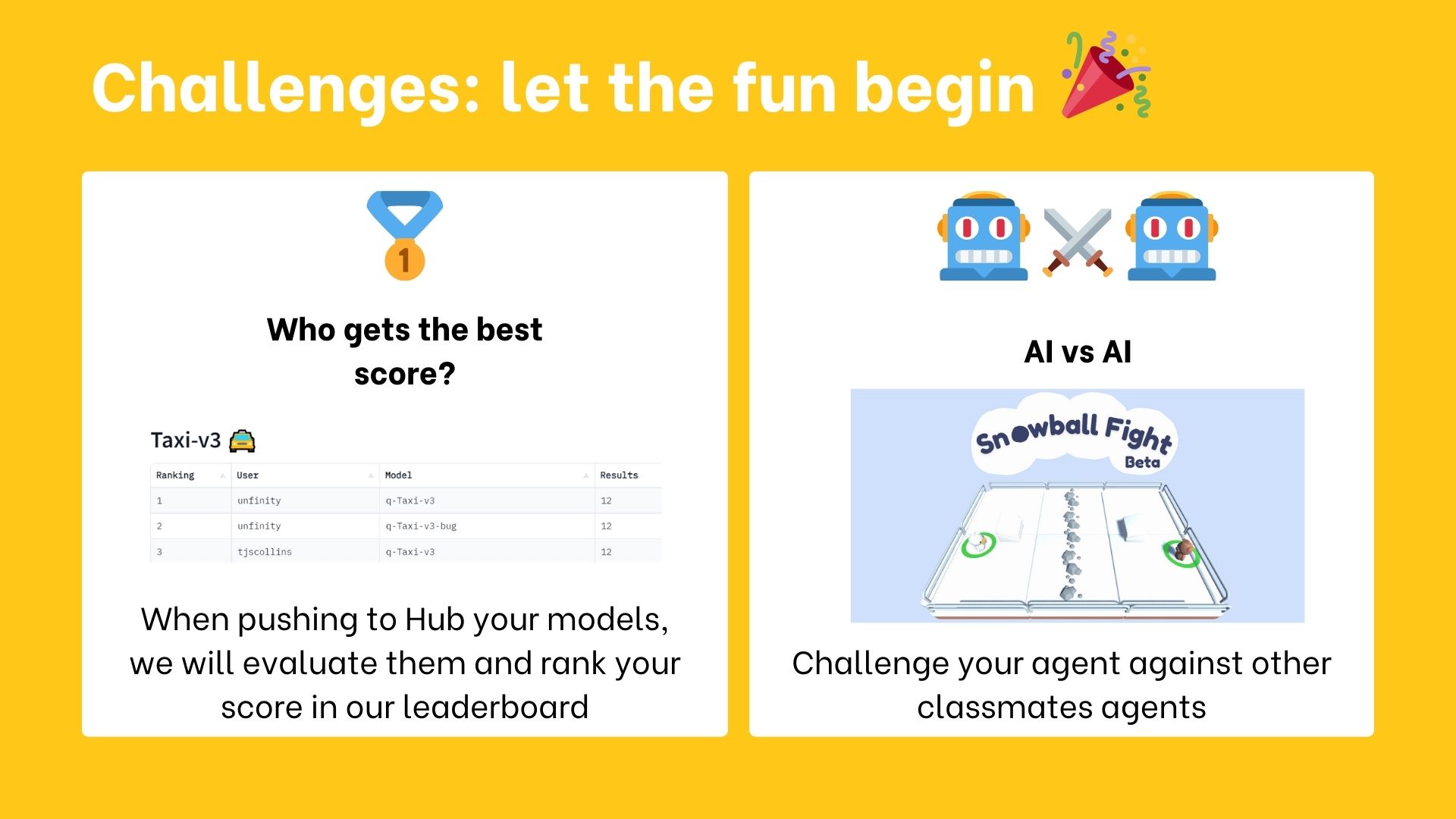

## When do the challenges start? [[challenges]]

In this new version of the course, you have two types of challenges:

-- A leaderboard to compare your agent's performance to other classmates'.

-- AI vs. AI challenges where you can train your agent and compete against other classmates' agents.

+- [A leaderboard](https://huggingface.co/spaces/huggingface-projects/Deep-Reinforcement-Learning-Leaderboard) to compare your agent's performance to other classmates'.

+- [AI vs. AI challenges](https://huggingface.co/learn/deep-rl-course/unit7/introduction?fw=pt) where you can train your agent and compete against other classmates' agents.

-

-These AI vs.AI challenges will be announced **in January**.

-

-

## I found a bug, or I want to improve the course [[contribute]]

Contributions are welcomed 🤗

@@ -141,4 +137,4 @@ Contributions are welcomed 🤗

## I still have questions [[questions]]

-In that case, check our FAQ. And if the question is not in it, ask your question in our discord server #rl-discussions.

+Please ask your question in our discord server #rl-discussions.

diff --git a/units/en/unit5/hands-on.mdx b/units/en/unit5/hands-on.mdx

index ac85f71..7148d4f 100644

--- a/units/en/unit5/hands-on.mdx

+++ b/units/en/unit5/hands-on.mdx

@@ -85,7 +85,7 @@ Before diving into the notebook, you need to:

```python

%%capture

# Clone the repository

-!git clone --depth 1 --branch hf-integration https://github.com/huggingface/ml-agents

+!git clone --depth 1 --branch hf-integration-save https://github.com/huggingface/ml-agents

```

```python

diff --git a/units/en/unitbonus1/train.mdx b/units/en/unitbonus1/train.mdx

index 24c4259..8bba57d 100644

--- a/units/en/unitbonus1/train.mdx

+++ b/units/en/unitbonus1/train.mdx

@@ -68,7 +68,7 @@ Before diving into the notebook, you need to:

```bash

# Clone this specific repository (can take 3min)

-git clone --depth 1 --branch hf-integration https://github.com/huggingface/ml-agents

+git clone --depth 1 --branch hf-integration-save https://github.com/huggingface/ml-agents

```

```bash
