diff --git a/units/en/live1/live1.mdx b/units/en/live1/live1.mdx

index 821ad23..f81bca8 100644

--- a/units/en/live1/live1.mdx

+++ b/units/en/live1/live1.mdx

@@ -1,4 +1,4 @@

-# Live 1: Deep RL Course. Intro, Q&A, and playing with Huggy 🐶

+# Live 1: How the course work, Q&A, and playing with Huggy 🐶

In this first live stream, we explained how the course work (scope, units, challenges, and more) and answered your questions.

@@ -6,5 +6,4 @@ And finally, we saw some LunarLander agents you've trained and play with your Hu

-

To know when the next live is scheduled **check the discord server**. We will also send **you an email**. If you can't participate, don't worry, we record the live sessions.

\ No newline at end of file

diff --git a/units/en/unit3/deep-q-network.mdx b/units/en/unit3/deep-q-network.mdx

index 75c66d3..b69dc58 100644

--- a/units/en/unit3/deep-q-network.mdx

+++ b/units/en/unit3/deep-q-network.mdx

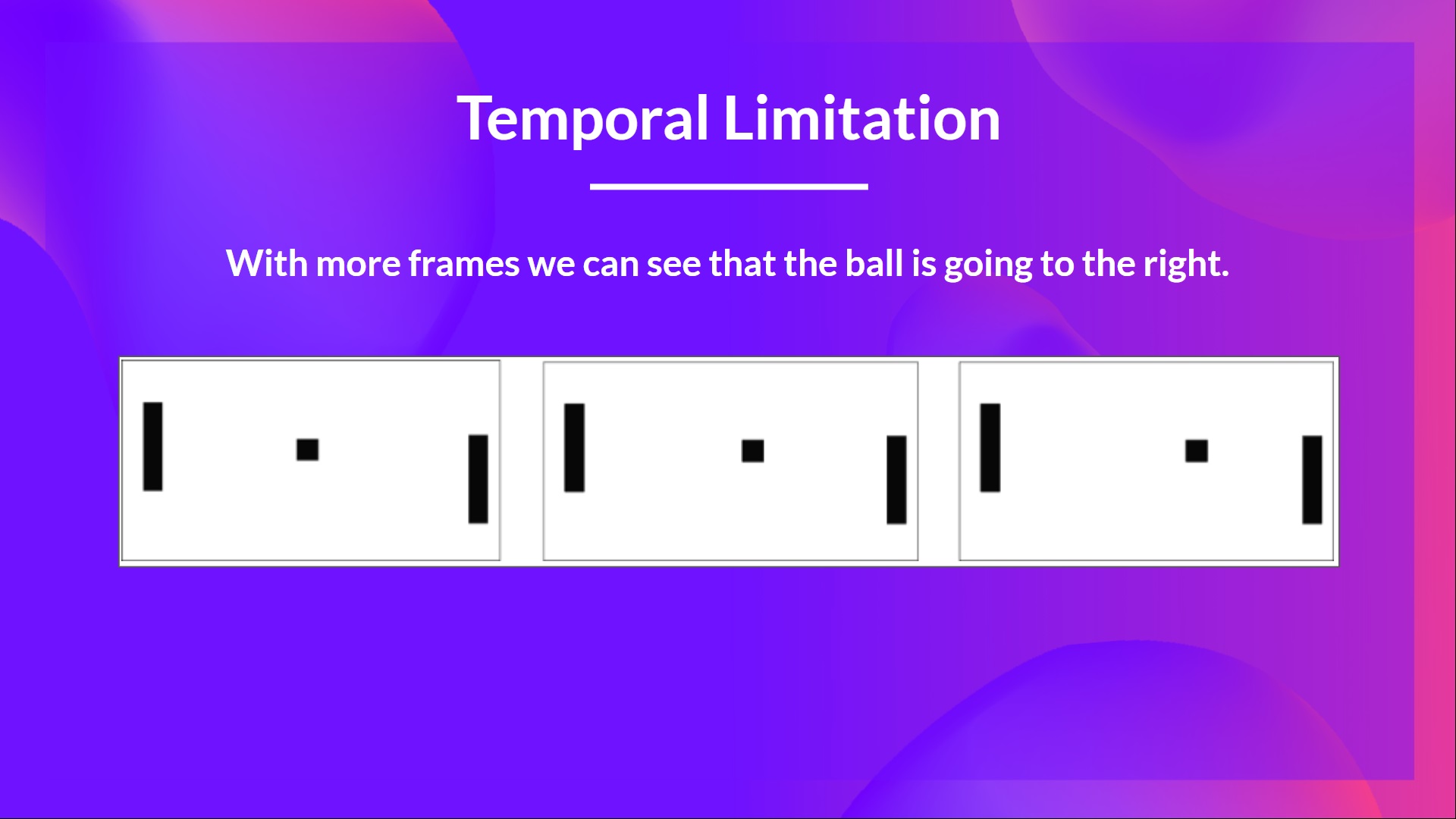

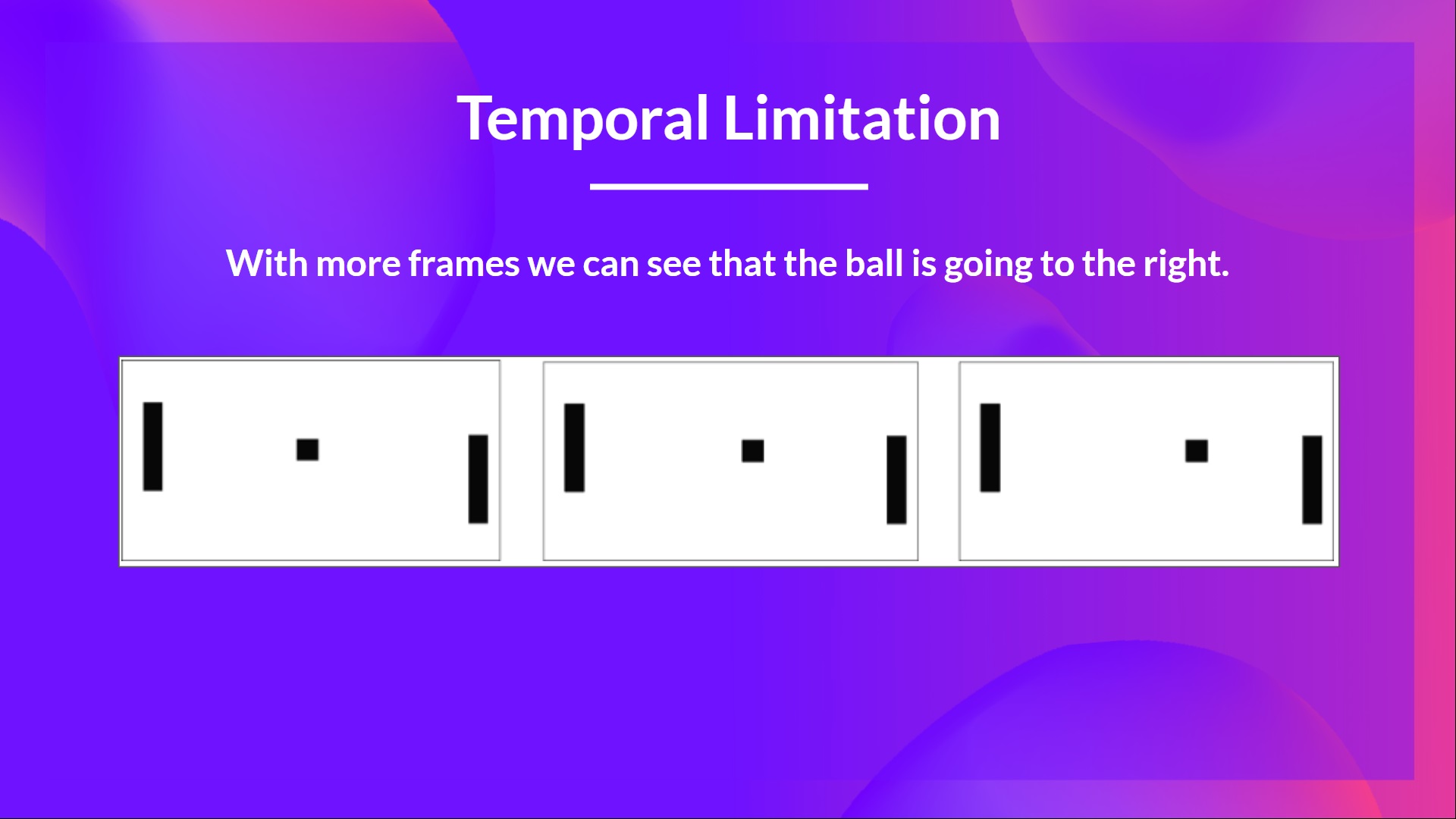

@@ -30,7 +30,7 @@ No, because one frame is not enough to have a sense of motion! But what if I add

That’s why, to capture temporal information, we stack four frames together.

-Then, the stacked frames are processed by three convolutional layers. These layers **allow us to capture and exploit spatial relationships in images**. But also, because frames are stacked together, **you can exploit some spatial properties across those frames**.

+Then, the stacked frames are processed by three convolutional layers. These layers **allow us to capture and exploit spatial relationships in images**. But also, because frames are stacked together, **you can exploit some temporal properties across those frames**.

If you don't know what are convolutional layers, don't worry. You can check the [Lesson 4 of this free Deep Reinforcement Learning Course by Udacity](https://www.udacity.com/course/deep-learning-pytorch--ud188)

That’s why, to capture temporal information, we stack four frames together.

-Then, the stacked frames are processed by three convolutional layers. These layers **allow us to capture and exploit spatial relationships in images**. But also, because frames are stacked together, **you can exploit some spatial properties across those frames**.

+Then, the stacked frames are processed by three convolutional layers. These layers **allow us to capture and exploit spatial relationships in images**. But also, because frames are stacked together, **you can exploit some temporal properties across those frames**.

If you don't know what are convolutional layers, don't worry. You can check the [Lesson 4 of this free Deep Reinforcement Learning Course by Udacity](https://www.udacity.com/course/deep-learning-pytorch--ud188)

That’s why, to capture temporal information, we stack four frames together.

-Then, the stacked frames are processed by three convolutional layers. These layers **allow us to capture and exploit spatial relationships in images**. But also, because frames are stacked together, **you can exploit some spatial properties across those frames**.

+Then, the stacked frames are processed by three convolutional layers. These layers **allow us to capture and exploit spatial relationships in images**. But also, because frames are stacked together, **you can exploit some temporal properties across those frames**.

If you don't know what are convolutional layers, don't worry. You can check the [Lesson 4 of this free Deep Reinforcement Learning Course by Udacity](https://www.udacity.com/course/deep-learning-pytorch--ud188)

That’s why, to capture temporal information, we stack four frames together.

-Then, the stacked frames are processed by three convolutional layers. These layers **allow us to capture and exploit spatial relationships in images**. But also, because frames are stacked together, **you can exploit some spatial properties across those frames**.

+Then, the stacked frames are processed by three convolutional layers. These layers **allow us to capture and exploit spatial relationships in images**. But also, because frames are stacked together, **you can exploit some temporal properties across those frames**.

If you don't know what are convolutional layers, don't worry. You can check the [Lesson 4 of this free Deep Reinforcement Learning Course by Udacity](https://www.udacity.com/course/deep-learning-pytorch--ud188)