diff --git a/units/en/unitbonus3/curriculum-learning.mdx b/units/en/unitbonus3/curriculum-learning.mdx

index dbe8e64..fa26427 100644

--- a/units/en/unitbonus3/curriculum-learning.mdx

+++ b/units/en/unitbonus3/curriculum-learning.mdx

@@ -1,6 +1,6 @@

# (Automatic) Curriculum Learning for RL

-While most of the RL methods seen in this course work well in practice, there are some cases where using them alone fails. It is for instance the case where:

+While most of the RL methods seen in this course work well in practice, there are some cases where using them alone fails. This can happen, for instance, when:

- the task to learn is hard and requires an **incremental acquisition of skills** (for instance when one wants to make a bipedal agent learn to go through hard obstacles, it must first learn to stand, then walk, then maybe jump…)

- there are variations in the environment (that affect the difficulty) and one wants its agent to be **robust** to them

@@ -11,9 +11,9 @@ While most of the RL methods seen in this course work well in practice, there ar

TeachMyAgent

-In such cases, it seems needed to propose different tasks to our RL agent and organize them such that it allows the agent to progressively acquire skills. This approach is called **Curriculum Learning** and usually implies a hand-designed curriculum (or set of tasks organized in a specific order). In practice, one can for instance control the generation of the environment, the initial states, or use Self-Play an control the level of opponents proposed to the RL agent.

+In such cases, it seems needed to propose different tasks to our RL agent and organize them such that the agent progressively acquires skills. This approach is called **Curriculum Learning** and usually implies a hand-designed curriculum (or set of tasks organized in a specific order). In practice, one can, for instance, control the generation of the environment, the initial states, or use Self-Play and control the level of opponents proposed to the RL agent.

-As designing such a curriculum is not always trivial, the field of **Automatic Curriculum Learning (ACL) proposes to design approaches that learn to create such and organization of tasks in order to maximize the RL agent’s performances**. Portelas et al. proposed to define ACL as:

+As designing such a curriculum is not always trivial, the field of **Automatic Curriculum Learning (ACL) proposes to design approaches that learn to create such an organization of tasks in order to maximize the RL agent’s performances**. Portelas et al. proposed to define ACL as:

> … a family of mechanisms that automatically adapt the distribution of training data by learning to adjust the selection of learning situations to the capabilities of RL agents.

>

@@ -36,7 +36,7 @@ Finally, you can play with the robustness of agents trained in the

@@ -12,17 +12,17 @@ A natural question recently studied was could such knowledge benefit agents such

## LMs and RL

-There is therefore a potential synergy between LMs which can bring knowledge about the world, and RL which can align and correct these knowledge by interacting with an environment. It is especially interesting from a RL point-of-view as the RL field mostly relies on the **Tabula-rasa** setup where everything is learned from scratch by agent leading to:

+There is therefore a potential synergy between LMs which can bring knowledge about the world, and RL which can align and correct this knowledge by interacting with an environment. It is especially interesting from a RL point-of-view as the RL field mostly relies on the **Tabula-rasa** setup where everything is learned from scratch by the agent leading to:

1) Sample inefficiency

2) Unexpected behaviors from humans’ eyes

-As a first attempt, the paper [“Grounding Large Language Models with Online Reinforcement Learning”](https://arxiv.org/abs/2302.02662v1) tackled the problem of **adapting or aligning a LM to a textual environment using PPO**. They showed that the knowledge encoded in the LM lead to a fast adaptation to the environment (opening avenue for sample efficiency RL agents) but also that such knowledge allowed the LM to better generalize to new tasks once aligned.

+As a first attempt, the paper [“Grounding Large Language Models with Online Reinforcement Learning”](https://arxiv.org/abs/2302.02662v1) tackled the problem of **adapting or aligning a LM to a textual environment using PPO**. They showed that the knowledge encoded in the LM lead to a fast adaptation to the environment (opening avenues for sample efficient RL agents) but also that such knowledge allowed the LM to better generalize to new tasks once aligned.

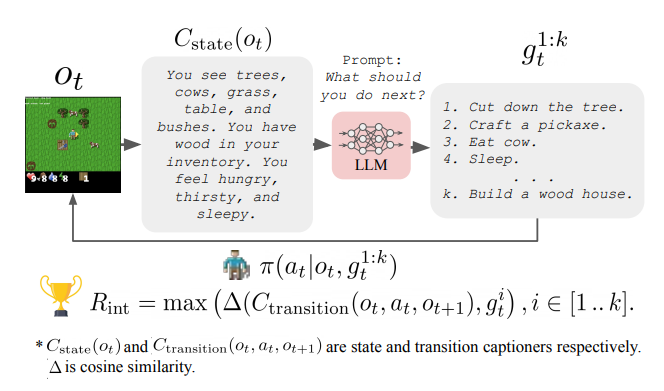

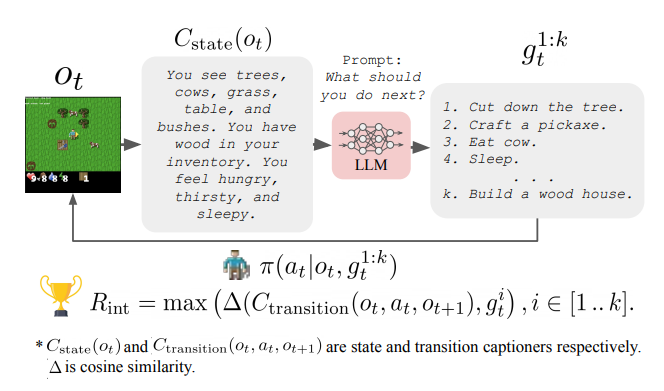

-Another direction studied in [“Guiding Pretraining in Reinforcement Learning with Large Language Models”](https://arxiv.org/abs/2302.06692) was to keep the LM frozen but leverage its knowledge to **guide an RL agent’s exploration**. Such method allows the RL agent to be guided towards human-meaningful and plausibly useful behaviors without requiring a human in the loop during training.

+Another direction studied in [“Guiding Pretraining in Reinforcement Learning with Large Language Models”](https://arxiv.org/abs/2302.06692) was to keep the LM frozen but leverage its knowledge to **guide an RL agent’s exploration**. Such a method allows the RL agent to be guided towards human-meaningful and plausibly useful behaviors without requiring a human in the loop during training.

@@ -12,17 +12,17 @@ A natural question recently studied was could such knowledge benefit agents such

## LMs and RL

-There is therefore a potential synergy between LMs which can bring knowledge about the world, and RL which can align and correct these knowledge by interacting with an environment. It is especially interesting from a RL point-of-view as the RL field mostly relies on the **Tabula-rasa** setup where everything is learned from scratch by agent leading to:

+There is therefore a potential synergy between LMs which can bring knowledge about the world, and RL which can align and correct this knowledge by interacting with an environment. It is especially interesting from a RL point-of-view as the RL field mostly relies on the **Tabula-rasa** setup where everything is learned from scratch by the agent leading to:

1) Sample inefficiency

2) Unexpected behaviors from humans’ eyes

-As a first attempt, the paper [“Grounding Large Language Models with Online Reinforcement Learning”](https://arxiv.org/abs/2302.02662v1) tackled the problem of **adapting or aligning a LM to a textual environment using PPO**. They showed that the knowledge encoded in the LM lead to a fast adaptation to the environment (opening avenue for sample efficiency RL agents) but also that such knowledge allowed the LM to better generalize to new tasks once aligned.

+As a first attempt, the paper [“Grounding Large Language Models with Online Reinforcement Learning”](https://arxiv.org/abs/2302.02662v1) tackled the problem of **adapting or aligning a LM to a textual environment using PPO**. They showed that the knowledge encoded in the LM lead to a fast adaptation to the environment (opening avenues for sample efficient RL agents) but also that such knowledge allowed the LM to better generalize to new tasks once aligned.

-Another direction studied in [“Guiding Pretraining in Reinforcement Learning with Large Language Models”](https://arxiv.org/abs/2302.06692) was to keep the LM frozen but leverage its knowledge to **guide an RL agent’s exploration**. Such method allows the RL agent to be guided towards human-meaningful and plausibly useful behaviors without requiring a human in the loop during training.

+Another direction studied in [“Guiding Pretraining in Reinforcement Learning with Large Language Models”](https://arxiv.org/abs/2302.06692) was to keep the LM frozen but leverage its knowledge to **guide an RL agent’s exploration**. Such a method allows the RL agent to be guided towards human-meaningful and plausibly useful behaviors without requiring a human in the loop during training.

diff --git a/units/en/unitbonus3/model-based.mdx b/units/en/unitbonus3/model-based.mdx

index 9983a01..633afcb 100644

--- a/units/en/unitbonus3/model-based.mdx

+++ b/units/en/unitbonus3/model-based.mdx

@@ -2,7 +2,7 @@

Model-based reinforcement learning only differs from its model-free counterpart in learning a *dynamics model*, but that has substantial downstream effects on how the decisions are made.

-The dynamics models usually model the environment transition dynamics, \\( s_{t+1} = f_\theta (s_t, a_t) \\), but things like inverse dynamics models (mapping from states to actions) or reward models (predicting rewards) can be used in this framework.

+The dynamics model usually models the environment transition dynamics, \\( s_{t+1} = f_\theta (s_t, a_t) \\), but things like inverse dynamics models (mapping from states to actions) or reward models (predicting rewards) can be used in this framework.

## Simple definition

diff --git a/units/en/unitbonus3/rl-documentation.mdx b/units/en/unitbonus3/rl-documentation.mdx

index dc4a661..8357e10 100644

--- a/units/en/unitbonus3/rl-documentation.mdx

+++ b/units/en/unitbonus3/rl-documentation.mdx

@@ -3,7 +3,7 @@

In this advanced topic, we address the question: **how should we monitor and keep track of powerful reinforcement learning agents that we are training in the real world and

interfacing with humans?**

-As machine learning systems have increasingly impacted modern life, **call for documentation of these systems has grown**.

+As machine learning systems have increasingly impacted modern life, the **call for the documentation of these systems has grown**.

Such documentation can cover aspects such as the training data used — where it is stored, when it was collected, who was involved, etc.

— or the model optimization framework — the architecture, evaluation metrics, relevant papers, etc. — and more.

@@ -19,7 +19,7 @@ These model and data specific logs are designed to be completed when the model o

Reinforcement learning systems are fundamentally designed to optimize based on measurements of reward and time.

While the notion of a reward function can be mapped nicely to many well-understood fields of supervised learning (via a loss function),

-understanding how machine learning systems evolve over time is limited.

+understanding of how machine learning systems evolve over time is limited.

To that end, the authors introduce [*Reward Reports for Reinforcement Learning*](https://www.notion.so/Brief-introduction-to-RL-documentation-b8cbda5a6f5242338e0756e6bef72af4) (the pithy naming is designed to mirror the popular papers *Model Cards for Model Reporting* and *Datasheets for Datasets*).

The goal is to propose a type of documentation focused on the **human factors of reward** and **time-varying feedback systems**.

@@ -42,7 +42,7 @@ The change log is accompanied by update triggers that encourage monitoring these

## Contributing

-Some of the most impactful RL-driven systems are multi-stakeholder in nature and behind closed doors of private corporations.

+Some of the most impactful RL-driven systems are multi-stakeholder in nature and behind the closed doors of private corporations.

These corporations are largely without regulation, so the burden of documentation falls on the public.

If you are interested in contributing, we are building Reward Reports for popular machine learning systems on a public

diff --git a/units/en/unitbonus3/rlhf.mdx b/units/en/unitbonus3/rlhf.mdx

index 7c473d1..e8de6d1 100644

--- a/units/en/unitbonus3/rlhf.mdx

+++ b/units/en/unitbonus3/rlhf.mdx

@@ -14,7 +14,7 @@ To start learning about RLHF:

1. Read this introduction: [Illustrating Reinforcement Learning from Human Feedback (RLHF)](https://huggingface.co/blog/rlhf).

2. Watch the recorded live we did some weeks ago, where Nathan covered the basics of Reinforcement Learning from Human Feedback (RLHF) and how this technology is being used to enable state-of-the-art ML tools like ChatGPT.

-Most of the talk is an overview of the interconnected ML models. It covers the basics of Natural Language Processing and RL and how RLHF is used on large language models. We then conclude with the open question in RLHF.

+Most of the talk is an overview of the interconnected ML models. It covers the basics of Natural Language Processing and RL and how RLHF is used on large language models. We then conclude with open questions in RLHF.

diff --git a/units/en/unitbonus3/model-based.mdx b/units/en/unitbonus3/model-based.mdx

index 9983a01..633afcb 100644

--- a/units/en/unitbonus3/model-based.mdx

+++ b/units/en/unitbonus3/model-based.mdx

@@ -2,7 +2,7 @@

Model-based reinforcement learning only differs from its model-free counterpart in learning a *dynamics model*, but that has substantial downstream effects on how the decisions are made.

-The dynamics models usually model the environment transition dynamics, \\( s_{t+1} = f_\theta (s_t, a_t) \\), but things like inverse dynamics models (mapping from states to actions) or reward models (predicting rewards) can be used in this framework.

+The dynamics model usually models the environment transition dynamics, \\( s_{t+1} = f_\theta (s_t, a_t) \\), but things like inverse dynamics models (mapping from states to actions) or reward models (predicting rewards) can be used in this framework.

## Simple definition

diff --git a/units/en/unitbonus3/rl-documentation.mdx b/units/en/unitbonus3/rl-documentation.mdx

index dc4a661..8357e10 100644

--- a/units/en/unitbonus3/rl-documentation.mdx

+++ b/units/en/unitbonus3/rl-documentation.mdx

@@ -3,7 +3,7 @@

In this advanced topic, we address the question: **how should we monitor and keep track of powerful reinforcement learning agents that we are training in the real world and

interfacing with humans?**

-As machine learning systems have increasingly impacted modern life, **call for documentation of these systems has grown**.

+As machine learning systems have increasingly impacted modern life, the **call for the documentation of these systems has grown**.

Such documentation can cover aspects such as the training data used — where it is stored, when it was collected, who was involved, etc.

— or the model optimization framework — the architecture, evaluation metrics, relevant papers, etc. — and more.

@@ -19,7 +19,7 @@ These model and data specific logs are designed to be completed when the model o

Reinforcement learning systems are fundamentally designed to optimize based on measurements of reward and time.

While the notion of a reward function can be mapped nicely to many well-understood fields of supervised learning (via a loss function),

-understanding how machine learning systems evolve over time is limited.

+understanding of how machine learning systems evolve over time is limited.

To that end, the authors introduce [*Reward Reports for Reinforcement Learning*](https://www.notion.so/Brief-introduction-to-RL-documentation-b8cbda5a6f5242338e0756e6bef72af4) (the pithy naming is designed to mirror the popular papers *Model Cards for Model Reporting* and *Datasheets for Datasets*).

The goal is to propose a type of documentation focused on the **human factors of reward** and **time-varying feedback systems**.

@@ -42,7 +42,7 @@ The change log is accompanied by update triggers that encourage monitoring these

## Contributing

-Some of the most impactful RL-driven systems are multi-stakeholder in nature and behind closed doors of private corporations.

+Some of the most impactful RL-driven systems are multi-stakeholder in nature and behind the closed doors of private corporations.

These corporations are largely without regulation, so the burden of documentation falls on the public.

If you are interested in contributing, we are building Reward Reports for popular machine learning systems on a public

diff --git a/units/en/unitbonus3/rlhf.mdx b/units/en/unitbonus3/rlhf.mdx

index 7c473d1..e8de6d1 100644

--- a/units/en/unitbonus3/rlhf.mdx

+++ b/units/en/unitbonus3/rlhf.mdx

@@ -14,7 +14,7 @@ To start learning about RLHF:

1. Read this introduction: [Illustrating Reinforcement Learning from Human Feedback (RLHF)](https://huggingface.co/blog/rlhf).

2. Watch the recorded live we did some weeks ago, where Nathan covered the basics of Reinforcement Learning from Human Feedback (RLHF) and how this technology is being used to enable state-of-the-art ML tools like ChatGPT.

-Most of the talk is an overview of the interconnected ML models. It covers the basics of Natural Language Processing and RL and how RLHF is used on large language models. We then conclude with the open question in RLHF.

+Most of the talk is an overview of the interconnected ML models. It covers the basics of Natural Language Processing and RL and how RLHF is used on large language models. We then conclude with open questions in RLHF.

diff --git a/units/en/unitbonus3/model-based.mdx b/units/en/unitbonus3/model-based.mdx

index 9983a01..633afcb 100644

--- a/units/en/unitbonus3/model-based.mdx

+++ b/units/en/unitbonus3/model-based.mdx

@@ -2,7 +2,7 @@

Model-based reinforcement learning only differs from its model-free counterpart in learning a *dynamics model*, but that has substantial downstream effects on how the decisions are made.

-The dynamics models usually model the environment transition dynamics, \\( s_{t+1} = f_\theta (s_t, a_t) \\), but things like inverse dynamics models (mapping from states to actions) or reward models (predicting rewards) can be used in this framework.

+The dynamics model usually models the environment transition dynamics, \\( s_{t+1} = f_\theta (s_t, a_t) \\), but things like inverse dynamics models (mapping from states to actions) or reward models (predicting rewards) can be used in this framework.

## Simple definition

diff --git a/units/en/unitbonus3/rl-documentation.mdx b/units/en/unitbonus3/rl-documentation.mdx

index dc4a661..8357e10 100644

--- a/units/en/unitbonus3/rl-documentation.mdx

+++ b/units/en/unitbonus3/rl-documentation.mdx

@@ -3,7 +3,7 @@

In this advanced topic, we address the question: **how should we monitor and keep track of powerful reinforcement learning agents that we are training in the real world and

interfacing with humans?**

-As machine learning systems have increasingly impacted modern life, **call for documentation of these systems has grown**.

+As machine learning systems have increasingly impacted modern life, the **call for the documentation of these systems has grown**.

Such documentation can cover aspects such as the training data used — where it is stored, when it was collected, who was involved, etc.

— or the model optimization framework — the architecture, evaluation metrics, relevant papers, etc. — and more.

@@ -19,7 +19,7 @@ These model and data specific logs are designed to be completed when the model o

Reinforcement learning systems are fundamentally designed to optimize based on measurements of reward and time.

While the notion of a reward function can be mapped nicely to many well-understood fields of supervised learning (via a loss function),

-understanding how machine learning systems evolve over time is limited.

+understanding of how machine learning systems evolve over time is limited.

To that end, the authors introduce [*Reward Reports for Reinforcement Learning*](https://www.notion.so/Brief-introduction-to-RL-documentation-b8cbda5a6f5242338e0756e6bef72af4) (the pithy naming is designed to mirror the popular papers *Model Cards for Model Reporting* and *Datasheets for Datasets*).

The goal is to propose a type of documentation focused on the **human factors of reward** and **time-varying feedback systems**.

@@ -42,7 +42,7 @@ The change log is accompanied by update triggers that encourage monitoring these

## Contributing

-Some of the most impactful RL-driven systems are multi-stakeholder in nature and behind closed doors of private corporations.

+Some of the most impactful RL-driven systems are multi-stakeholder in nature and behind the closed doors of private corporations.

These corporations are largely without regulation, so the burden of documentation falls on the public.

If you are interested in contributing, we are building Reward Reports for popular machine learning systems on a public

diff --git a/units/en/unitbonus3/rlhf.mdx b/units/en/unitbonus3/rlhf.mdx

index 7c473d1..e8de6d1 100644

--- a/units/en/unitbonus3/rlhf.mdx

+++ b/units/en/unitbonus3/rlhf.mdx

@@ -14,7 +14,7 @@ To start learning about RLHF:

1. Read this introduction: [Illustrating Reinforcement Learning from Human Feedback (RLHF)](https://huggingface.co/blog/rlhf).

2. Watch the recorded live we did some weeks ago, where Nathan covered the basics of Reinforcement Learning from Human Feedback (RLHF) and how this technology is being used to enable state-of-the-art ML tools like ChatGPT.

-Most of the talk is an overview of the interconnected ML models. It covers the basics of Natural Language Processing and RL and how RLHF is used on large language models. We then conclude with the open question in RLHF.

+Most of the talk is an overview of the interconnected ML models. It covers the basics of Natural Language Processing and RL and how RLHF is used on large language models. We then conclude with open questions in RLHF.

diff --git a/units/en/unitbonus3/model-based.mdx b/units/en/unitbonus3/model-based.mdx

index 9983a01..633afcb 100644

--- a/units/en/unitbonus3/model-based.mdx

+++ b/units/en/unitbonus3/model-based.mdx

@@ -2,7 +2,7 @@

Model-based reinforcement learning only differs from its model-free counterpart in learning a *dynamics model*, but that has substantial downstream effects on how the decisions are made.

-The dynamics models usually model the environment transition dynamics, \\( s_{t+1} = f_\theta (s_t, a_t) \\), but things like inverse dynamics models (mapping from states to actions) or reward models (predicting rewards) can be used in this framework.

+The dynamics model usually models the environment transition dynamics, \\( s_{t+1} = f_\theta (s_t, a_t) \\), but things like inverse dynamics models (mapping from states to actions) or reward models (predicting rewards) can be used in this framework.

## Simple definition

diff --git a/units/en/unitbonus3/rl-documentation.mdx b/units/en/unitbonus3/rl-documentation.mdx

index dc4a661..8357e10 100644

--- a/units/en/unitbonus3/rl-documentation.mdx

+++ b/units/en/unitbonus3/rl-documentation.mdx

@@ -3,7 +3,7 @@

In this advanced topic, we address the question: **how should we monitor and keep track of powerful reinforcement learning agents that we are training in the real world and

interfacing with humans?**

-As machine learning systems have increasingly impacted modern life, **call for documentation of these systems has grown**.

+As machine learning systems have increasingly impacted modern life, the **call for the documentation of these systems has grown**.

Such documentation can cover aspects such as the training data used — where it is stored, when it was collected, who was involved, etc.

— or the model optimization framework — the architecture, evaluation metrics, relevant papers, etc. — and more.

@@ -19,7 +19,7 @@ These model and data specific logs are designed to be completed when the model o

Reinforcement learning systems are fundamentally designed to optimize based on measurements of reward and time.

While the notion of a reward function can be mapped nicely to many well-understood fields of supervised learning (via a loss function),

-understanding how machine learning systems evolve over time is limited.

+understanding of how machine learning systems evolve over time is limited.

To that end, the authors introduce [*Reward Reports for Reinforcement Learning*](https://www.notion.so/Brief-introduction-to-RL-documentation-b8cbda5a6f5242338e0756e6bef72af4) (the pithy naming is designed to mirror the popular papers *Model Cards for Model Reporting* and *Datasheets for Datasets*).

The goal is to propose a type of documentation focused on the **human factors of reward** and **time-varying feedback systems**.

@@ -42,7 +42,7 @@ The change log is accompanied by update triggers that encourage monitoring these

## Contributing

-Some of the most impactful RL-driven systems are multi-stakeholder in nature and behind closed doors of private corporations.

+Some of the most impactful RL-driven systems are multi-stakeholder in nature and behind the closed doors of private corporations.

These corporations are largely without regulation, so the burden of documentation falls on the public.

If you are interested in contributing, we are building Reward Reports for popular machine learning systems on a public

diff --git a/units/en/unitbonus3/rlhf.mdx b/units/en/unitbonus3/rlhf.mdx

index 7c473d1..e8de6d1 100644

--- a/units/en/unitbonus3/rlhf.mdx

+++ b/units/en/unitbonus3/rlhf.mdx

@@ -14,7 +14,7 @@ To start learning about RLHF:

1. Read this introduction: [Illustrating Reinforcement Learning from Human Feedback (RLHF)](https://huggingface.co/blog/rlhf).

2. Watch the recorded live we did some weeks ago, where Nathan covered the basics of Reinforcement Learning from Human Feedback (RLHF) and how this technology is being used to enable state-of-the-art ML tools like ChatGPT.

-Most of the talk is an overview of the interconnected ML models. It covers the basics of Natural Language Processing and RL and how RLHF is used on large language models. We then conclude with the open question in RLHF.

+Most of the talk is an overview of the interconnected ML models. It covers the basics of Natural Language Processing and RL and how RLHF is used on large language models. We then conclude with open questions in RLHF.

@@ -12,17 +12,17 @@ A natural question recently studied was could such knowledge benefit agents such

## LMs and RL

-There is therefore a potential synergy between LMs which can bring knowledge about the world, and RL which can align and correct these knowledge by interacting with an environment. It is especially interesting from a RL point-of-view as the RL field mostly relies on the **Tabula-rasa** setup where everything is learned from scratch by agent leading to:

+There is therefore a potential synergy between LMs which can bring knowledge about the world, and RL which can align and correct this knowledge by interacting with an environment. It is especially interesting from a RL point-of-view as the RL field mostly relies on the **Tabula-rasa** setup where everything is learned from scratch by the agent leading to:

1) Sample inefficiency

2) Unexpected behaviors from humans’ eyes

-As a first attempt, the paper [“Grounding Large Language Models with Online Reinforcement Learning”](https://arxiv.org/abs/2302.02662v1) tackled the problem of **adapting or aligning a LM to a textual environment using PPO**. They showed that the knowledge encoded in the LM lead to a fast adaptation to the environment (opening avenue for sample efficiency RL agents) but also that such knowledge allowed the LM to better generalize to new tasks once aligned.

+As a first attempt, the paper [“Grounding Large Language Models with Online Reinforcement Learning”](https://arxiv.org/abs/2302.02662v1) tackled the problem of **adapting or aligning a LM to a textual environment using PPO**. They showed that the knowledge encoded in the LM lead to a fast adaptation to the environment (opening avenues for sample efficient RL agents) but also that such knowledge allowed the LM to better generalize to new tasks once aligned.

-Another direction studied in [“Guiding Pretraining in Reinforcement Learning with Large Language Models”](https://arxiv.org/abs/2302.06692) was to keep the LM frozen but leverage its knowledge to **guide an RL agent’s exploration**. Such method allows the RL agent to be guided towards human-meaningful and plausibly useful behaviors without requiring a human in the loop during training.

+Another direction studied in [“Guiding Pretraining in Reinforcement Learning with Large Language Models”](https://arxiv.org/abs/2302.06692) was to keep the LM frozen but leverage its knowledge to **guide an RL agent’s exploration**. Such a method allows the RL agent to be guided towards human-meaningful and plausibly useful behaviors without requiring a human in the loop during training.

@@ -12,17 +12,17 @@ A natural question recently studied was could such knowledge benefit agents such

## LMs and RL

-There is therefore a potential synergy between LMs which can bring knowledge about the world, and RL which can align and correct these knowledge by interacting with an environment. It is especially interesting from a RL point-of-view as the RL field mostly relies on the **Tabula-rasa** setup where everything is learned from scratch by agent leading to:

+There is therefore a potential synergy between LMs which can bring knowledge about the world, and RL which can align and correct this knowledge by interacting with an environment. It is especially interesting from a RL point-of-view as the RL field mostly relies on the **Tabula-rasa** setup where everything is learned from scratch by the agent leading to:

1) Sample inefficiency

2) Unexpected behaviors from humans’ eyes

-As a first attempt, the paper [“Grounding Large Language Models with Online Reinforcement Learning”](https://arxiv.org/abs/2302.02662v1) tackled the problem of **adapting or aligning a LM to a textual environment using PPO**. They showed that the knowledge encoded in the LM lead to a fast adaptation to the environment (opening avenue for sample efficiency RL agents) but also that such knowledge allowed the LM to better generalize to new tasks once aligned.

+As a first attempt, the paper [“Grounding Large Language Models with Online Reinforcement Learning”](https://arxiv.org/abs/2302.02662v1) tackled the problem of **adapting or aligning a LM to a textual environment using PPO**. They showed that the knowledge encoded in the LM lead to a fast adaptation to the environment (opening avenues for sample efficient RL agents) but also that such knowledge allowed the LM to better generalize to new tasks once aligned.

-Another direction studied in [“Guiding Pretraining in Reinforcement Learning with Large Language Models”](https://arxiv.org/abs/2302.06692) was to keep the LM frozen but leverage its knowledge to **guide an RL agent’s exploration**. Such method allows the RL agent to be guided towards human-meaningful and plausibly useful behaviors without requiring a human in the loop during training.

+Another direction studied in [“Guiding Pretraining in Reinforcement Learning with Large Language Models”](https://arxiv.org/abs/2302.06692) was to keep the LM frozen but leverage its knowledge to **guide an RL agent’s exploration**. Such a method allows the RL agent to be guided towards human-meaningful and plausibly useful behaviors without requiring a human in the loop during training.