diff --git a/units/en/unit4/quiz.mdx b/units/en/unit4/quiz.mdx

index e070a37..13d9047 100644

--- a/units/en/unit4/quiz.mdx

+++ b/units/en/unit4/quiz.mdx

@@ -1,3 +1,82 @@

# Quiz

-This is the quiz

+The best way to learn and [to avoid the illusion of competence](https://www.coursera.org/lecture/learning-how-to-learn/illusions-of-competence-BuFzf) **is to test yourself.** This will help you to find **where you need to reinforce your knowledge**.

+

+

+### Q1: What are the advantages of policy-gradient over value-based methods? (Check all that apply)

+

+

+

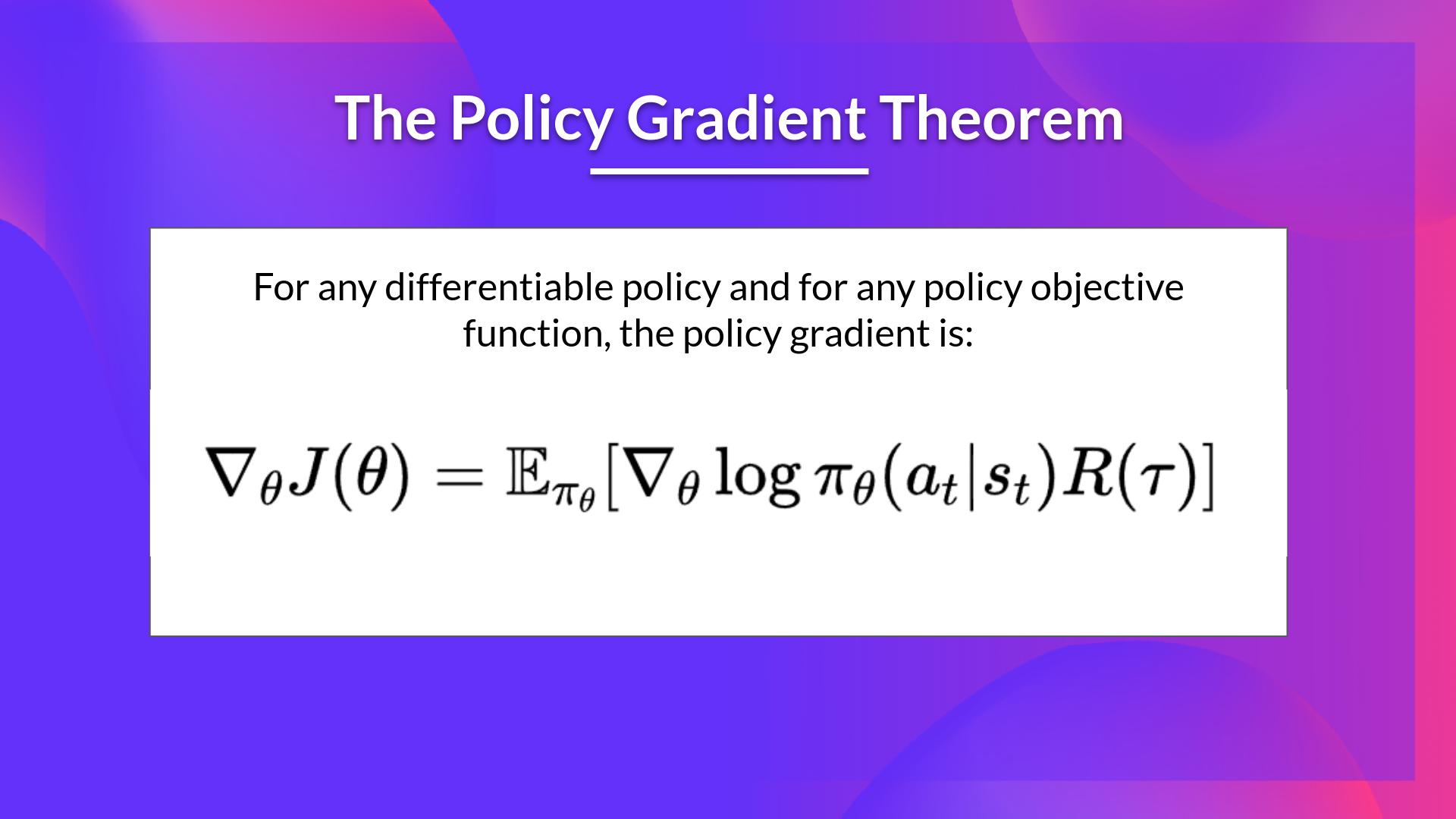

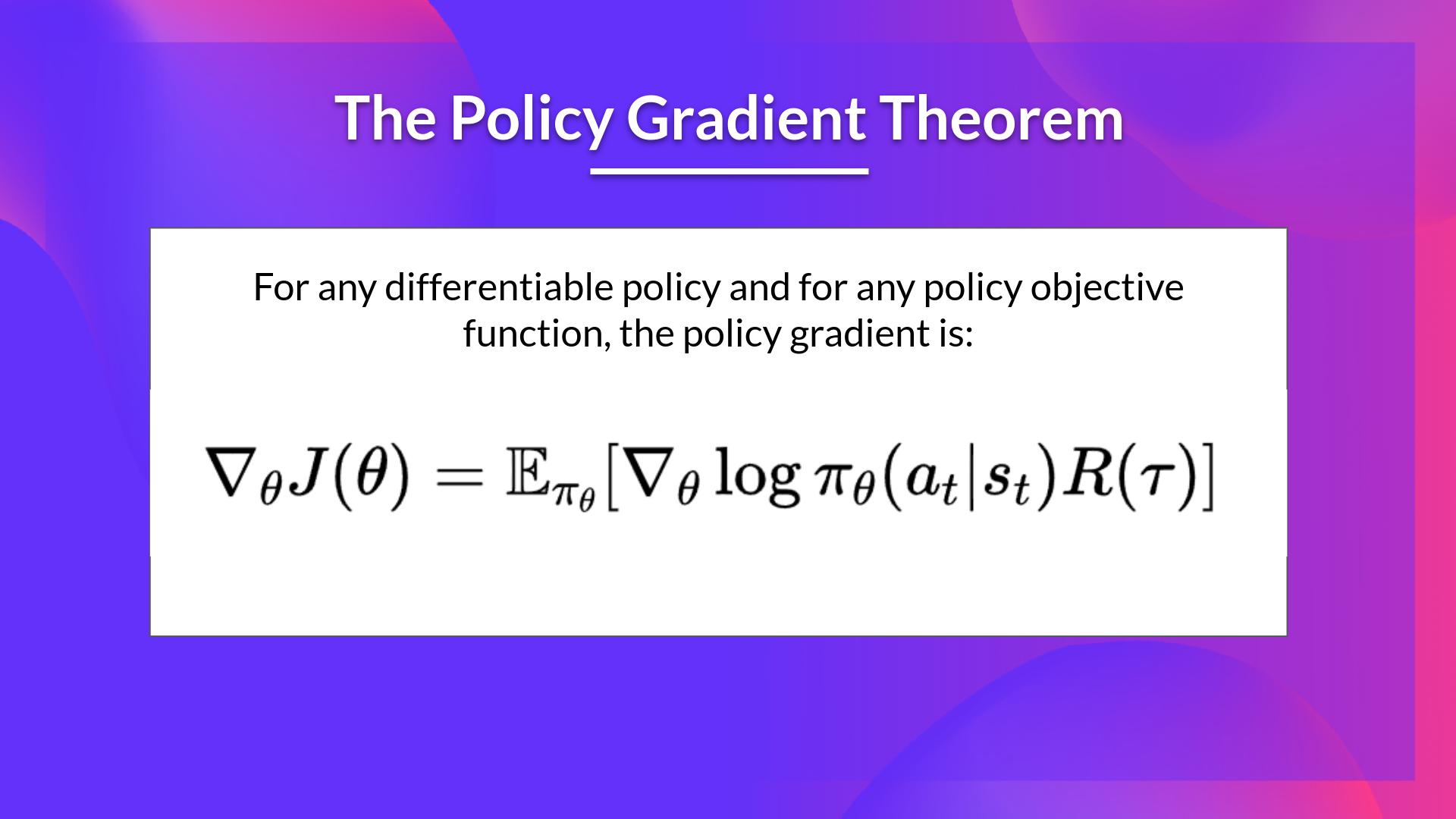

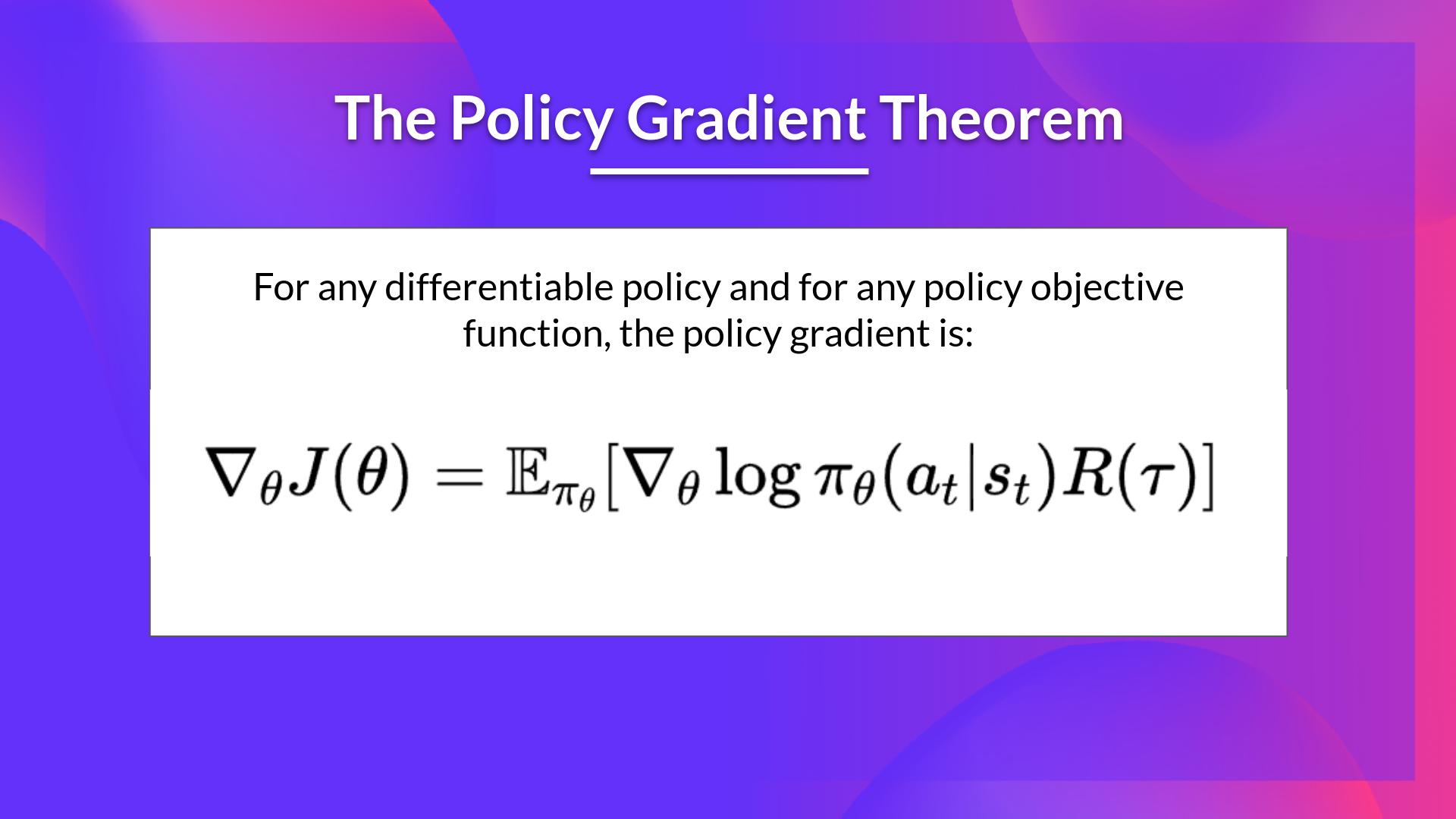

+### Q2: What is the Policy Gradient Theorem?

+

+

+Solution

+

+*The Policy Gradient Theorem* is a formula that will help us to reformulate the objective function into a differentiable function that does not involve the differentiation of the state distribution.

+

+ +

+

+

+

+

+

+### Q3: What's the difference between policy-based methods and policy-gradient methods? (Check all that apply)

+

+

+

+

+### Q4: Why do we use gradient ascent instead of gradient descent to optimize J(θ)?

+

+

+

+Congrats on finishing this Quiz 🥳, if you missed some elements, take time to read the chapter again to reinforce (😏) your knowledge.

+

+

+

+ +

+

+

+