diff --git a/units/en/unit5/conclusion.mdx b/units/en/unit5/conclusion.mdx

index 4719c61..8f173fc 100644

--- a/units/en/unit5/conclusion.mdx

+++ b/units/en/unit5/conclusion.mdx

@@ -5,8 +5,10 @@ Congrats on finishing this unit! You’ve just trained your first ML-Agents and

The best way to learn is to **practice and try stuff**. Why not try another environment? [ML-Agents has 18 different environments](https://github.com/Unity-Technologies/ml-agents/blob/develop/docs/Learning-Environment-Examples.md).

For instance:

-- *Worm*, where you teach a worm to crawl.

-- *Walker*: teach an agent to walk towards a goal.

+- [Worm](https://huggingface.co/spaces/unity/ML-Agents-Worm), where you teach a worm to crawl.

+- [Walker](https://huggingface.co/spaces/unity/ML-Agents-Walker): teach an agent to walk towards a goal.

+

+Check the documentation to find out how to train them and to see the list of ML-Agents environments already integrated on the Hub: https://github.com/huggingface/ml-agents#getting-started

diff --git a/units/en/unit5/hands-on.mdx b/units/en/unit5/hands-on.mdx

index 3654eda..258ae79 100644

--- a/units/en/unit5/hands-on.mdx

+++ b/units/en/unit5/hands-on.mdx

@@ -1 +1,29 @@

# Hands-on

+

+

+

+

+Now that we've learned what ML-Agents is and how it works, and studied the two environments we're going to use, we're ready to train our agents.

+

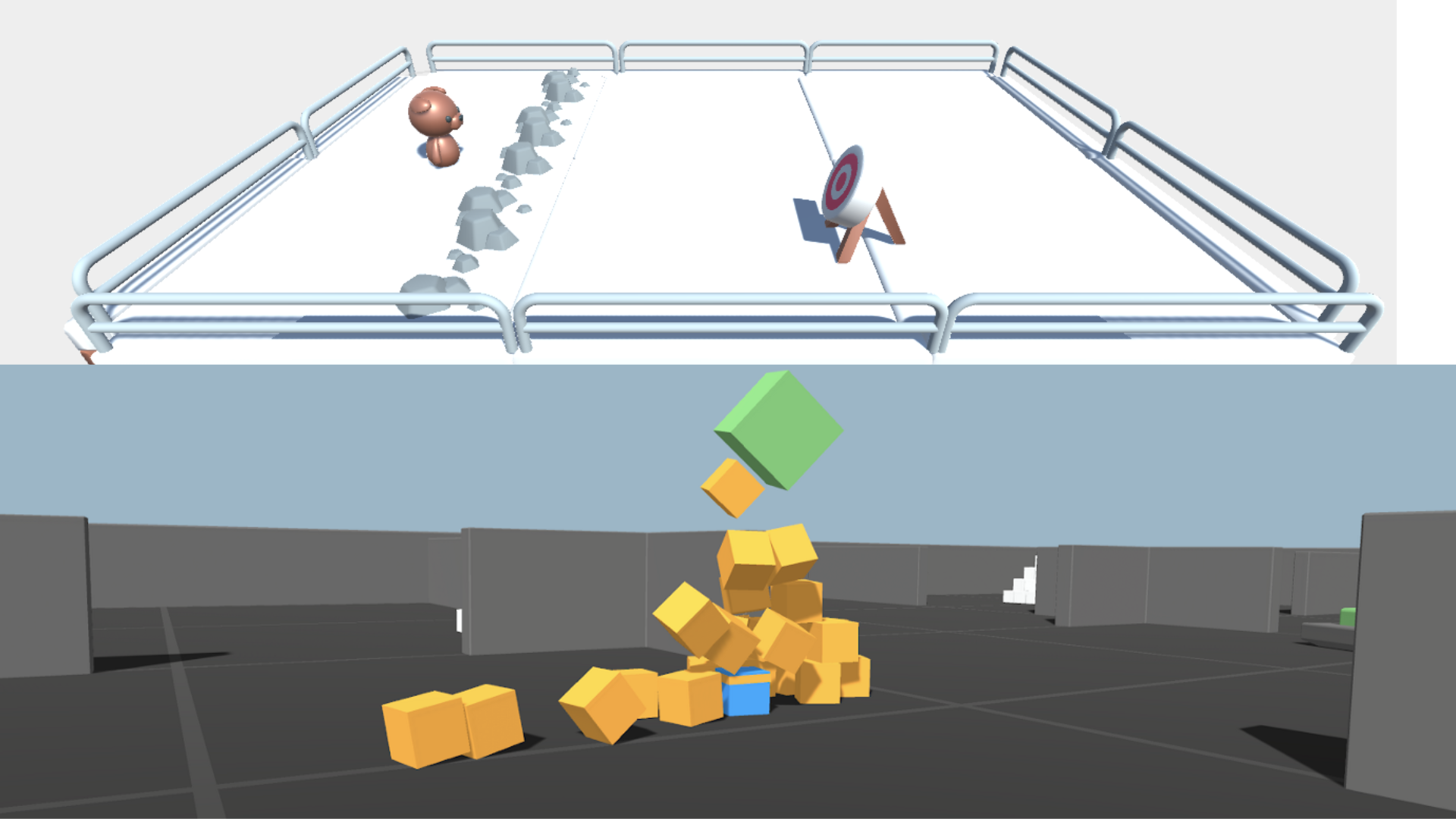

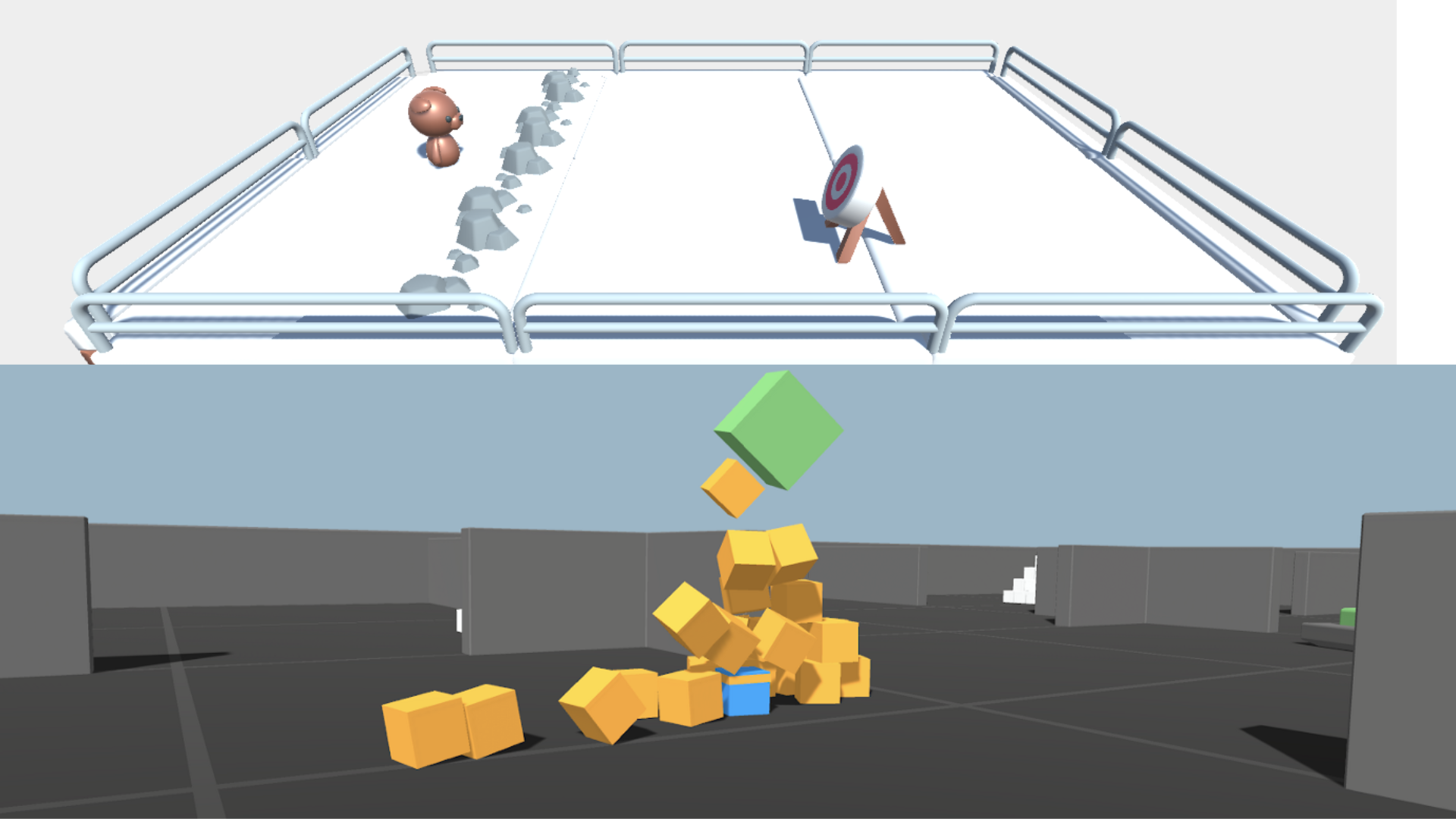

+- The first one will learn to **shoot snowballs at spawning targets**.

+- The second needs to **press a button to spawn a pyramid, then navigate to the pyramid, knock it over, and move to the gold brick at the top**. To do that, it will need to explore its environment, and we will use a technique called curiosity.

+

+

+ +

+After that, you'll be able to watch your agents playing directly in your browser.

+

+The ML-Agents integration on the Hub **is still experimental**; some features will be added in the future. But for now, to validate this hands-on for the certification process, you just need to push your trained models to the Hub.

+There are no results to attain to validate this one. But if you want to get good results, you can aim for:

+

+- For [Pyramids](https://huggingface.co/spaces/unity/ML-Agents-Pyramids): Mean Reward = 1.75

+- For [SnowballTarget](https://huggingface.co/spaces/ThomasSimonini/ML-Agents-SnowballTarget): Mean Reward = 15 or 30 targets shot in an episode.

+

+ For more information about the certification process, check this section 👉 https://huggingface.co/deep-rl-course/en/unit0/introduction#certification-process

+

+ **To start the hands-on, click on the Open In Colab button** 👇:

+

+ [Open In Colab](https://colab.research.google.com/github/huggingface/deep-rl-class/blob/master/notebooks/unit5/unit5.ipynb)

diff --git a/units/en/unit5/introduction.mdx b/units/en/unit5/introduction.mdx

index 5746ac3..5ef5795 100644

--- a/units/en/unit5/introduction.mdx

+++ b/units/en/unit5/introduction.mdx

@@ -1,6 +1,6 @@

# An Introduction to Unity ML-Agents [[introduction-to-ml-agents]]

-One of the challenges in Reinforcement Learning is to **create environments**. Fortunately for us, game engines are the perfect tool to use.

+One of the challenges in Reinforcement Learning is to **create environments**. Fortunately for us, we can use game engines.

Game engines like [Unity](https://unity.com/), [Godot](https://godotengine.org/) or [Unreal Engine](https://www.unrealengine.com/), are programs made to create video games. They are perfectly suited

for creating environments: they provide physics systems, 2D/3D rendering, and more.

diff --git a/units/en/unit5/pyramids.mdx b/units/en/unit5/pyramids.mdx

index 2b6cdf9..4ddf267 100644

--- a/units/en/unit5/pyramids.mdx

+++ b/units/en/unit5/pyramids.mdx

@@ -11,7 +11,6 @@ The reward function is:

-

To train this new agent that seeks that button and then the Pyramid to destroy, we’ll use a combination of two types of rewards:

- The *extrinsic one* given by the environment (illustration above).

@@ -27,7 +26,8 @@ In terms of observation, we **use 148 raycasts that can each detect objects** (s

We also use a **boolean variable indicating the switch state** (did we turn on or not the switch to spawn the Pyramid) and a vector that **contains the agent’s speed**.

-ADD SCREENSHOT CODE

+

+ +

## The action space

diff --git a/units/en/unit5/snowball-target.mdx b/units/en/unit5/snowball-target.mdx

index a277b5d..5101716 100644

--- a/units/en/unit5/snowball-target.mdx

+++ b/units/en/unit5/snowball-target.mdx

@@ -1,14 +1,14 @@

# The SnowballTarget Environment

-TODO Add gif snowballtarget environment

+

+

## The Agent's Goal

-The first agent you're going to train is Julien the bear (the name is based after our [CTO Julien Chaumond](https://twitter.com/julien_c)) **to hit targets with snowballs**.

+The first agent you're going to train is Julien the bear 🐻 (named after our [CTO Julien Chaumond](https://twitter.com/julien_c)), who will learn **to hit targets with snowballs**.

-The goal in this environment is that Julien the bear **hit as many targets as possible in the limited time** (1000 timesteps). To do that, it will need **to place itself correctly from the target and shoot**.

+The goal in this environment is for Julien to **hit as many targets as possible in the limited time** (1000 timesteps). To do that, it will need **to position itself correctly relative to the target and shoot**.

-In addition, to avoid "snowball spamming" (aka shooting a snowball every timestep), **Julien the bear has a "cool off" system** (it needs to wait 0.5 seconds after a shoot to be able to shoot again).

+In addition, to avoid "snowball spamming" (aka shooting a snowball every timestep), **Julien has a "cool off" system** (it needs to wait 0.5 seconds after a shot to be able to shoot again).
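
As a rough illustration of the "cool off" rule described above, the Python sketch below gates shots with a timer. The class name `ShootCooldown` and its methods are hypothetical and exist only for this example; the actual environment is built in Unity and may implement the timer differently.

```python
class ShootCooldown:
    """Hypothetical helper: allows a shot only if the cooldown has elapsed."""

    def __init__(self, cooldown_seconds: float = 0.5):
        # 0.5 seconds is the waiting time stated in the text.
        self.cooldown_seconds = cooldown_seconds
        # Start "ready": behave as if the last shot happened long ago.
        self.time_since_last_shot = cooldown_seconds

    def step(self, dt: float) -> None:
        """Advance the internal clock by dt seconds."""
        self.time_since_last_shot += dt

    def try_shoot(self) -> bool:
        """Return True and reset the timer if shooting is allowed right now."""
        if self.time_since_last_shot >= self.cooldown_seconds:
            self.time_since_last_shot = 0.0
            return True
        return False
```

With this kind of gate, an agent that requests a shot at every timestep gains nothing from doing so: requests made during the waiting period are simply ignored.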

@@ -17,8 +17,11 @@ In addition, to avoid "snowball spamming" (aka shooting a snowball every timeste

## The reward function and the reward engineering problem

-The reward function is simple. **The environment gives a +1 reward every time the agent's snowball hits a target**.

-Because the agent's goal is to maximize the expected cumulative reward, **it will try to hit as many targets as possible**.

+The reward function is simple. **The environment gives a +1 reward every time the agent's snowball hits a target**, and because the agent's goal is to maximize the expected cumulative reward, **it will try to hit as many targets as possible**.

+

+In terms of code it looks like this:

+

+

We could have a more complex reward function (with a penalty to push the agent to go faster, etc.). But when you design an environment, you need to avoid the *reward engineering problem*, which is having a too complex reward function to force your agent to behave as you want it to do.

Why? Because by doing that, **you might miss interesting strategies that the agent will find with a simpler reward function**.
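
To make the simple reward concrete, here is a minimal Python sketch of a "+1 per hit" reward and the cumulative reward over an episode. The function names `step_reward` and `episode_return`, and the optional discount factor `gamma`, are assumptions for illustration; the real reward is assigned inside the Unity environment, whose code is not shown here.

```python
from typing import Iterable


def step_reward(snowball_hit_target: bool) -> float:
    """Hypothetical per-step reward: +1 when a snowball hits a target, else 0."""
    return 1.0 if snowball_hit_target else 0.0


def episode_return(hits: Iterable[bool], gamma: float = 1.0) -> float:
    """Sum the (optionally discounted) rewards over one episode."""
    total = 0.0
    discount = 1.0
    for hit in hits:
        total += discount * step_reward(hit)
        discount *= gamma
    return total
```

With `gamma = 1.0`, the return is just the number of targets hit, which is why maximizing the cumulative reward pushes the agent to hit as many targets as possible without any extra shaping terms.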

@@ -38,11 +41,14 @@ Think of raycasts as lasers that will detect if it passes through an object.

In this environment our agent have multiple set of raycasts:

--

+TODO: Add explanation vector

+

+

## The action space

The action space is discrete with TODO ADD

-IMAGE

+

+
