diff --git a/units/en/_toctree.yml b/units/en/_toctree.yml

index 87e5fa0..6a79159 100644

--- a/units/en/_toctree.yml

+++ b/units/en/_toctree.yml

@@ -120,8 +120,6 @@

title: The advantages and disadvantages of Policy-based methods

- local: unit4/policy-gradient

title: Diving deeper into Policy-gradient methods

- - local: unit4/reinforce

- title: The Reinforce algorithm

- local: unit4/hands-on

title: Hands-on

- local: unit4/quiz

diff --git a/units/en/unit4/hands-on.mdx b/units/en/unit4/hands-on.mdx

index 73dca3d..13b3c35 100644

--- a/units/en/unit4/hands-on.mdx

+++ b/units/en/unit4/hands-on.mdx

@@ -1 +1,33 @@

# Hands on

+

+

+

+

+

+

+

+Now that we studied the theory behind Reinforce, **you’re ready to code your Reinforce agent with PyTorch**. And you'll test its robustness using CartPole-v1 and PixelCopter,.

+

+You'll then be able to iterate and improve this implementation for more advanced environments.

+

+

+  +

+

+

+To validate this hands-on for the certification process, you need to push your trained models to the Hub.

+

+- Get a result of >= 450 for `Cartpole-v1`.

+- Get a result of >= 5 for `PixelCopter`.

+

+To find your result, go to the leaderboard and find your model, **the result = mean_reward - std of reward**. **If you don't see your model on the leaderboard, go at the bottom of the leaderboard page and click on the refresh button**.

+

+For more information about the certification process, check this section 👉 https://huggingface.co/deep-rl-course/en/unit0/introduction#certification-process

+

+**To start the hands-on click on Open In Colab button** 👇 :

+

+[](https://colab.research.google.com/github/huggingface/deep-rl-class/blob/master/notebooks/unit4/unit4.ipynb)

diff --git a/units/en/unit4/policy-gradient.mdx b/units/en/unit4/policy-gradient.mdx

index 9f3a714..dc17db5 100644

--- a/units/en/unit4/policy-gradient.mdx

+++ b/units/en/unit4/policy-gradient.mdx

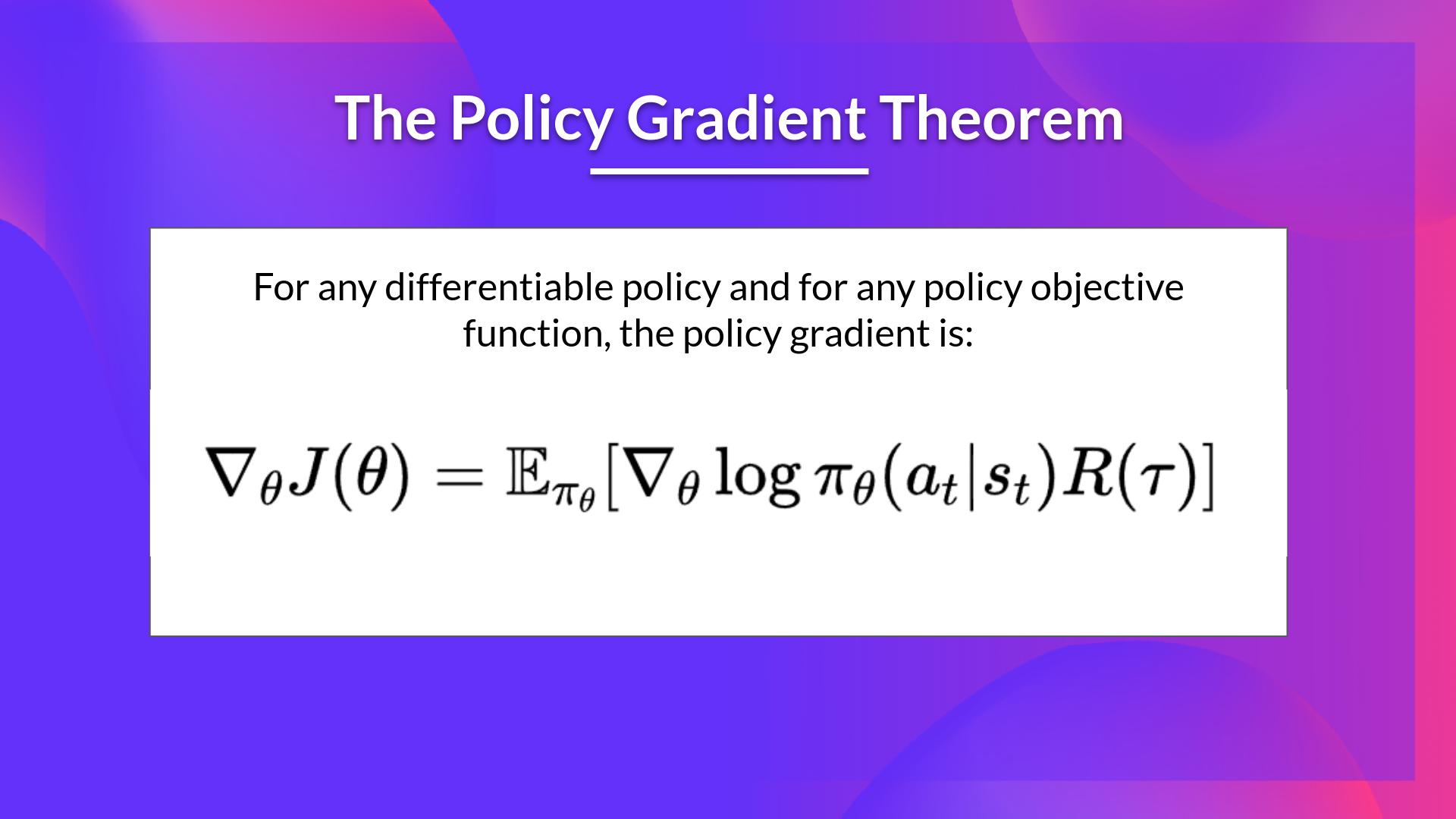

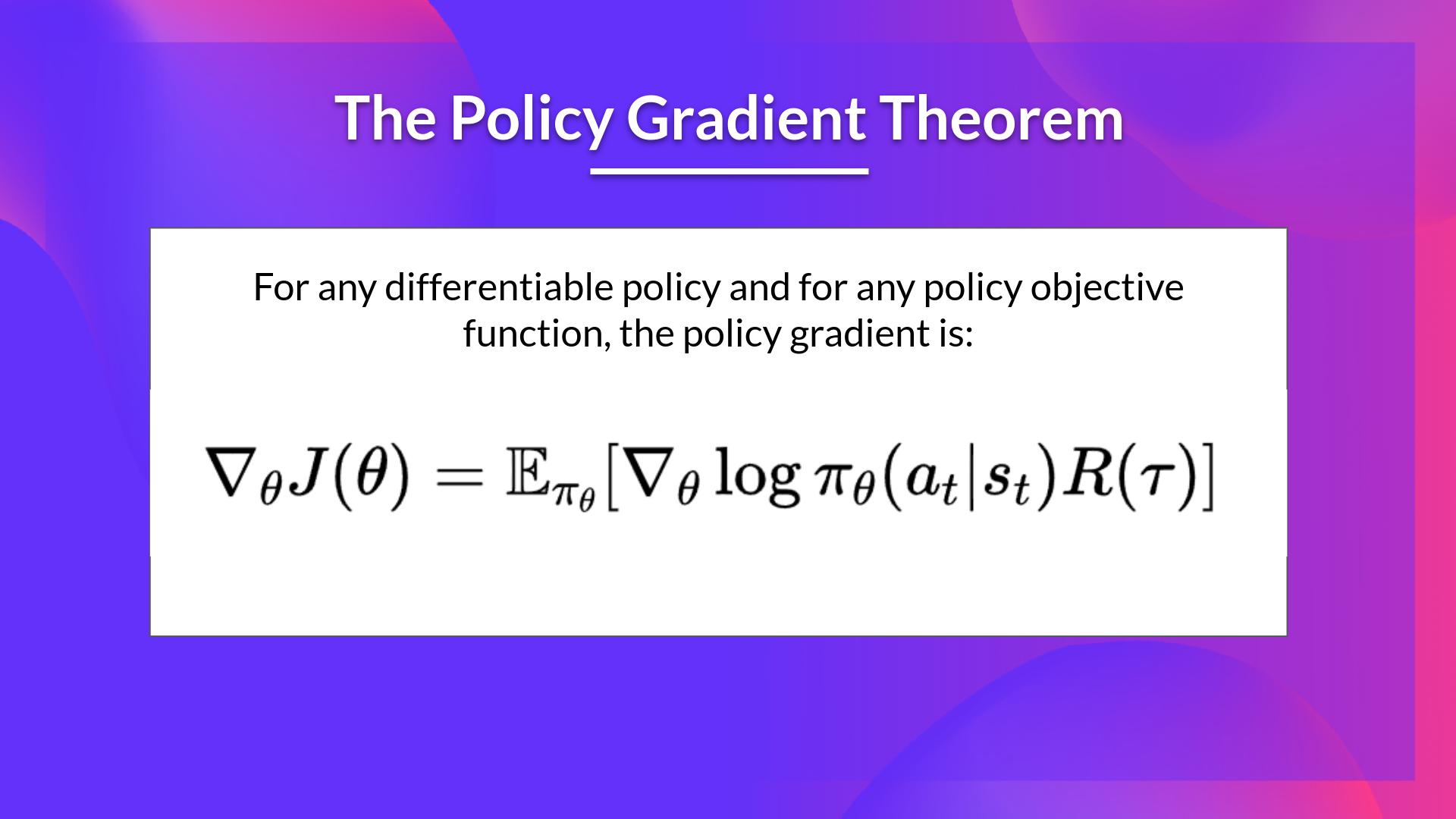

@@ -85,8 +85,8 @@ Fortunately we're going to use a solution called the Policy Gradient Theorem tha

+

+

+

+To validate this hands-on for the certification process, you need to push your trained models to the Hub.

+

+- Get a result of >= 450 for `Cartpole-v1`.

+- Get a result of >= 5 for `PixelCopter`.

+

+To find your result, go to the leaderboard and find your model, **the result = mean_reward - std of reward**. **If you don't see your model on the leaderboard, go at the bottom of the leaderboard page and click on the refresh button**.

+

+For more information about the certification process, check this section 👉 https://huggingface.co/deep-rl-course/en/unit0/introduction#certification-process

+

+**To start the hands-on click on Open In Colab button** 👇 :

+

+[](https://colab.research.google.com/github/huggingface/deep-rl-class/blob/master/notebooks/unit4/unit4.ipynb)

diff --git a/units/en/unit4/policy-gradient.mdx b/units/en/unit4/policy-gradient.mdx

index 9f3a714..dc17db5 100644

--- a/units/en/unit4/policy-gradient.mdx

+++ b/units/en/unit4/policy-gradient.mdx

@@ -85,8 +85,8 @@ Fortunately we're going to use a solution called the Policy Gradient Theorem tha

-## The Reinforce algorithm

-The Reinforce algorithm is a policy-gradient algorithm that works like this:

+## The Reinforce algorithm (Monte Carlo Reinforce)

+The Reinforce algorithm also called Monte-Carlo policy-gradient is a policy-gradient algorithm that **uses an estimated return from an entire episode to update the policy parameter** \\(\theta\\):

In a loop:

- Use the policy \\(\pi_\theta\\) to collect an episode \\(\tau\\)

diff --git a/units/en/unit4/reinforce.mdx b/units/en/unit4/reinforce.mdx

deleted file mode 100644

index 549ef85..0000000

--- a/units/en/unit4/reinforce.mdx

+++ /dev/null

@@ -1,20 +0,0 @@

-# Monte Carlo Policy Gradient (Reinforce)

-

-

-

-Now that we have seen the big picture of Policy-Gradient and its advantages and disadvantages, **let's study and implement one of them**: Reinforce.

-

-## Reinforce (Monte Carlo Policy Gradient)

-

-Reinforce, also called Monte-Carlo Policy Gradient, **uses an estimated return from an entire episode to update the policy parameter** \\(\theta\\).

-

-

-Now that we studied the theory behind Reinforce, **you’re ready to code your Reinforce agent with PyTorch**. And you'll test its robustness using CartPole-v1, PixelCopter, and Pong.

-

-Start the tutorial here 👉 https://colab.research.google.com/github/huggingface/deep-rl-class/blob/main/unit5/unit5.ipynb

-

-The leaderboard to compare your results with your classmates 🏆 👉 https://huggingface.co/spaces/chrisjay/Deep-Reinforcement-Learning-Leaderboard

-

-

-

-## The Reinforce algorithm

-The Reinforce algorithm is a policy-gradient algorithm that works like this:

+## The Reinforce algorithm (Monte Carlo Reinforce)

+The Reinforce algorithm also called Monte-Carlo policy-gradient is a policy-gradient algorithm that **uses an estimated return from an entire episode to update the policy parameter** \\(\theta\\):

In a loop:

- Use the policy \\(\pi_\theta\\) to collect an episode \\(\tau\\)

diff --git a/units/en/unit4/reinforce.mdx b/units/en/unit4/reinforce.mdx

deleted file mode 100644

index 549ef85..0000000

--- a/units/en/unit4/reinforce.mdx

+++ /dev/null

@@ -1,20 +0,0 @@

-# Monte Carlo Policy Gradient (Reinforce)

-

-

-

-Now that we have seen the big picture of Policy-Gradient and its advantages and disadvantages, **let's study and implement one of them**: Reinforce.

-

-## Reinforce (Monte Carlo Policy Gradient)

-

-Reinforce, also called Monte-Carlo Policy Gradient, **uses an estimated return from an entire episode to update the policy parameter** \\(\theta\\).

-

-

-Now that we studied the theory behind Reinforce, **you’re ready to code your Reinforce agent with PyTorch**. And you'll test its robustness using CartPole-v1, PixelCopter, and Pong.

-

-Start the tutorial here 👉 https://colab.research.google.com/github/huggingface/deep-rl-class/blob/main/unit5/unit5.ipynb

-

-The leaderboard to compare your results with your classmates 🏆 👉 https://huggingface.co/spaces/chrisjay/Deep-Reinforcement-Learning-Leaderboard

-

-

-  -

-

+

+ +

+ -## The Reinforce algorithm

-The Reinforce algorithm is a policy-gradient algorithm that works like this:

+## The Reinforce algorithm (Monte Carlo Reinforce)

+The Reinforce algorithm also called Monte-Carlo policy-gradient is a policy-gradient algorithm that **uses an estimated return from an entire episode to update the policy parameter** \\(\theta\\):

In a loop:

- Use the policy \\(\pi_\theta\\) to collect an episode \\(\tau\\)

diff --git a/units/en/unit4/reinforce.mdx b/units/en/unit4/reinforce.mdx

deleted file mode 100644

index 549ef85..0000000

--- a/units/en/unit4/reinforce.mdx

+++ /dev/null

@@ -1,20 +0,0 @@

-# Monte Carlo Policy Gradient (Reinforce)

-

-

-

-Now that we have seen the big picture of Policy-Gradient and its advantages and disadvantages, **let's study and implement one of them**: Reinforce.

-

-## Reinforce (Monte Carlo Policy Gradient)

-

-Reinforce, also called Monte-Carlo Policy Gradient, **uses an estimated return from an entire episode to update the policy parameter** \\(\theta\\).

-

-

-Now that we studied the theory behind Reinforce, **you’re ready to code your Reinforce agent with PyTorch**. And you'll test its robustness using CartPole-v1, PixelCopter, and Pong.

-

-Start the tutorial here 👉 https://colab.research.google.com/github/huggingface/deep-rl-class/blob/main/unit5/unit5.ipynb

-

-The leaderboard to compare your results with your classmates 🏆 👉 https://huggingface.co/spaces/chrisjay/Deep-Reinforcement-Learning-Leaderboard

-

-

-## The Reinforce algorithm

-The Reinforce algorithm is a policy-gradient algorithm that works like this:

+## The Reinforce algorithm (Monte Carlo Reinforce)

+The Reinforce algorithm also called Monte-Carlo policy-gradient is a policy-gradient algorithm that **uses an estimated return from an entire episode to update the policy parameter** \\(\theta\\):

In a loop:

- Use the policy \\(\pi_\theta\\) to collect an episode \\(\tau\\)

diff --git a/units/en/unit4/reinforce.mdx b/units/en/unit4/reinforce.mdx

deleted file mode 100644

index 549ef85..0000000

--- a/units/en/unit4/reinforce.mdx

+++ /dev/null

@@ -1,20 +0,0 @@

-# Monte Carlo Policy Gradient (Reinforce)

-

-

-

-Now that we have seen the big picture of Policy-Gradient and its advantages and disadvantages, **let's study and implement one of them**: Reinforce.

-

-## Reinforce (Monte Carlo Policy Gradient)

-

-Reinforce, also called Monte-Carlo Policy Gradient, **uses an estimated return from an entire episode to update the policy parameter** \\(\theta\\).

-

-

-Now that we studied the theory behind Reinforce, **you’re ready to code your Reinforce agent with PyTorch**. And you'll test its robustness using CartPole-v1, PixelCopter, and Pong.

-

-Start the tutorial here 👉 https://colab.research.google.com/github/huggingface/deep-rl-class/blob/main/unit5/unit5.ipynb

-

-The leaderboard to compare your results with your classmates 🏆 👉 https://huggingface.co/spaces/chrisjay/Deep-Reinforcement-Learning-Leaderboard

-

- -

-