diff --git a/units/en/unit3/deep-q-algorithm.mdx b/units/en/unit3/deep-q-algorithm.mdx

index d8dd604..63b8780 100644

--- a/units/en/unit3/deep-q-algorithm.mdx

+++ b/units/en/unit3/deep-q-algorithm.mdx

@@ -6,24 +6,25 @@ The difference is that, during the training phase, instead of updating the Q-val

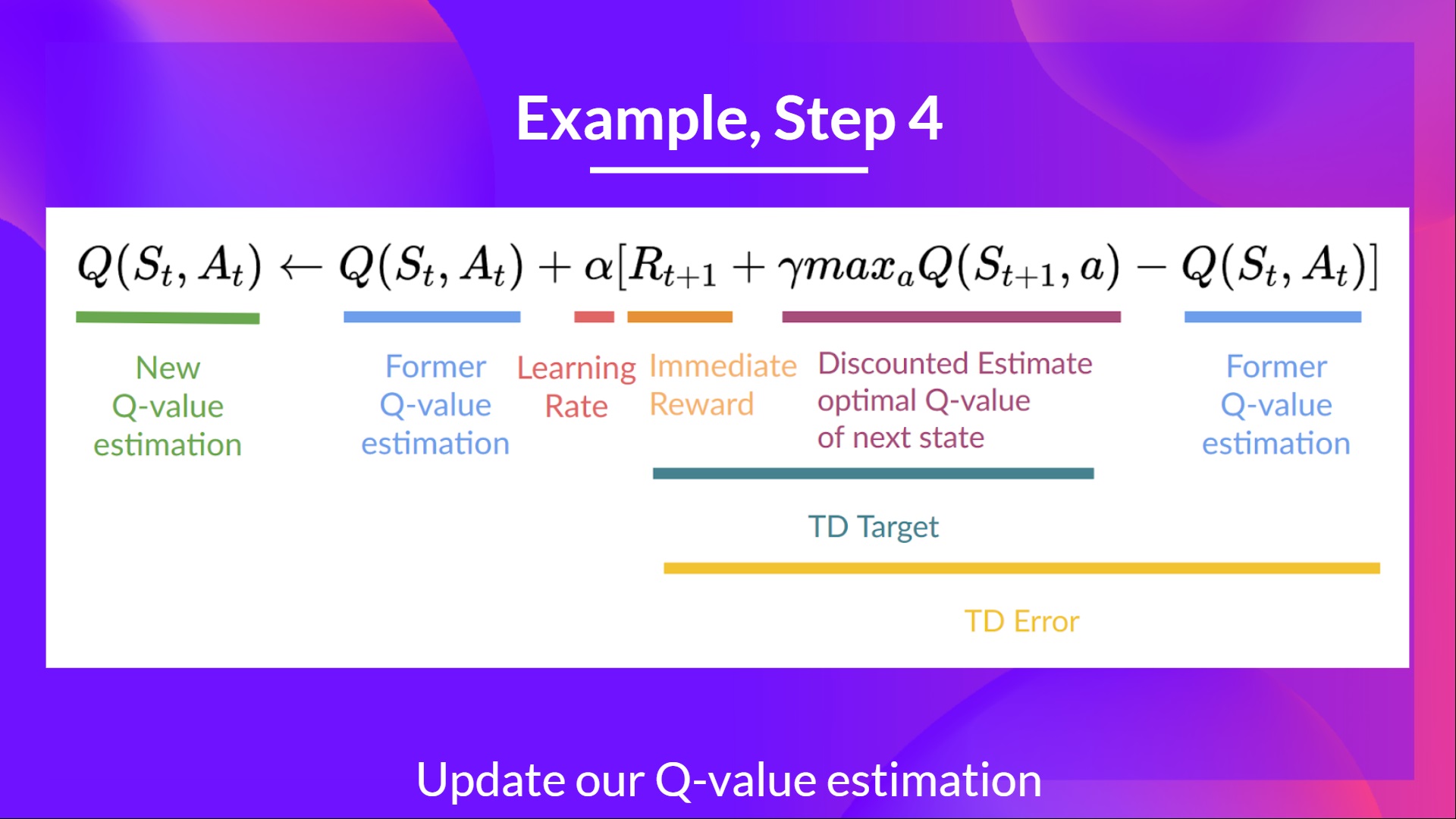

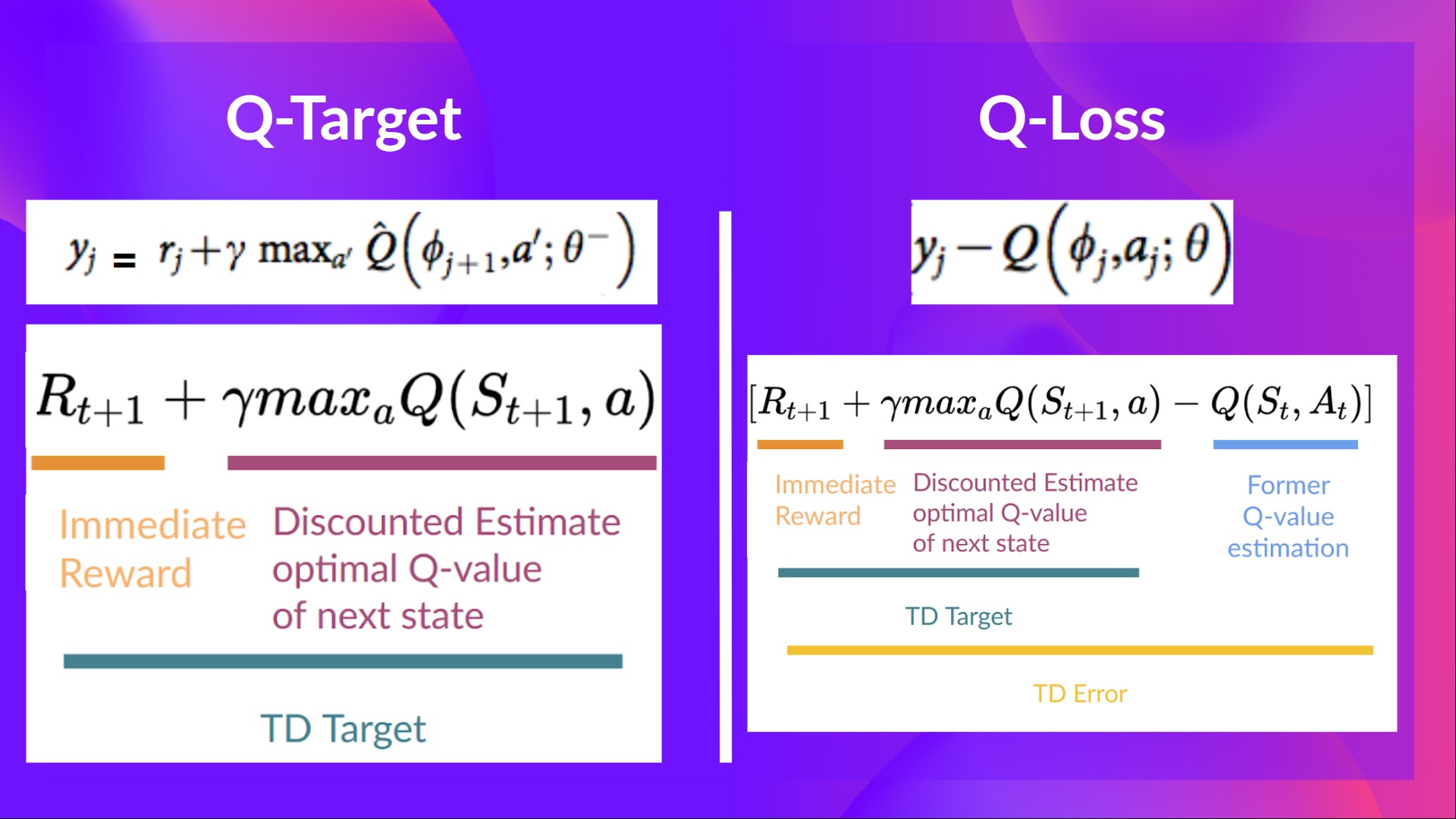

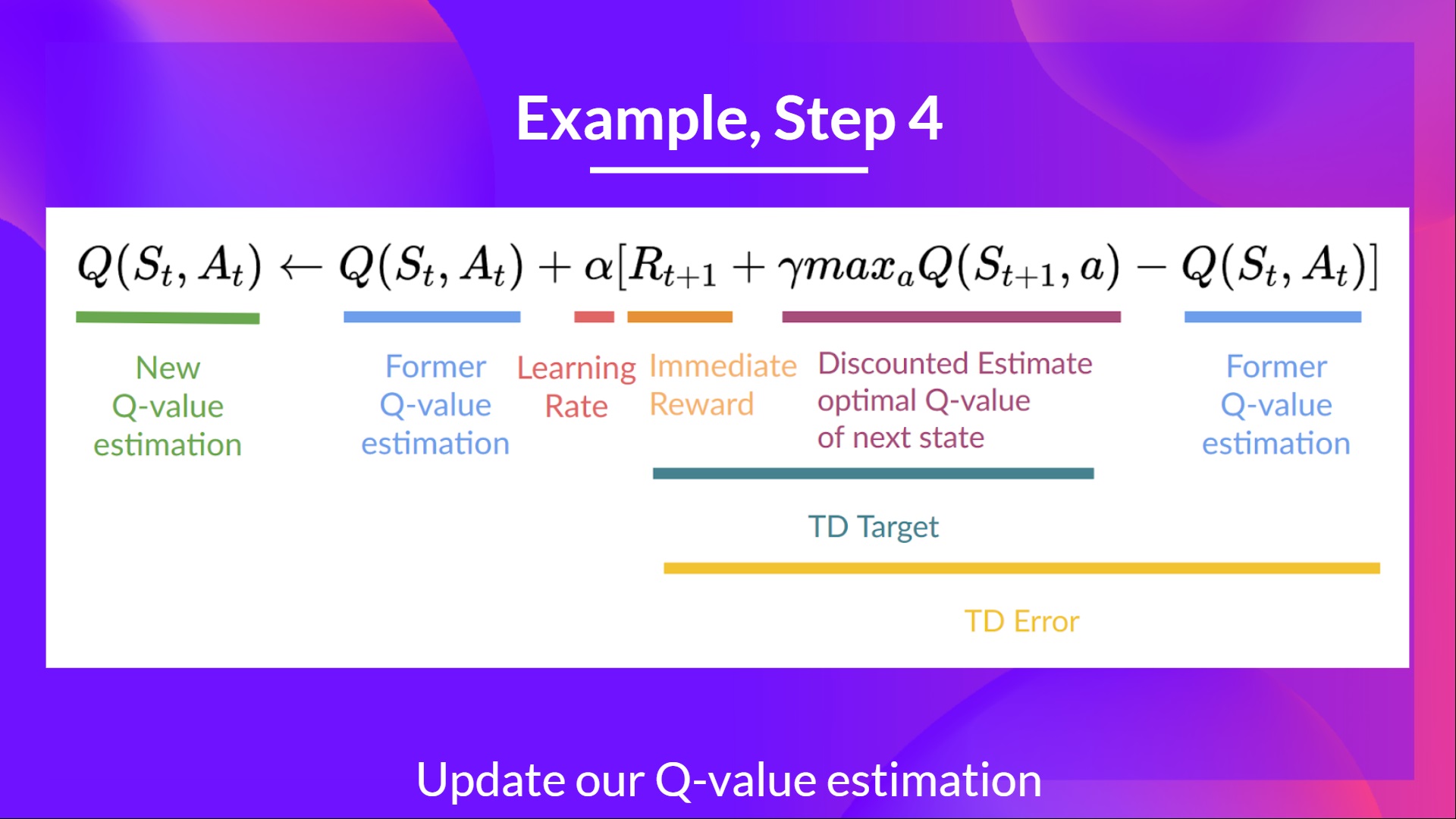

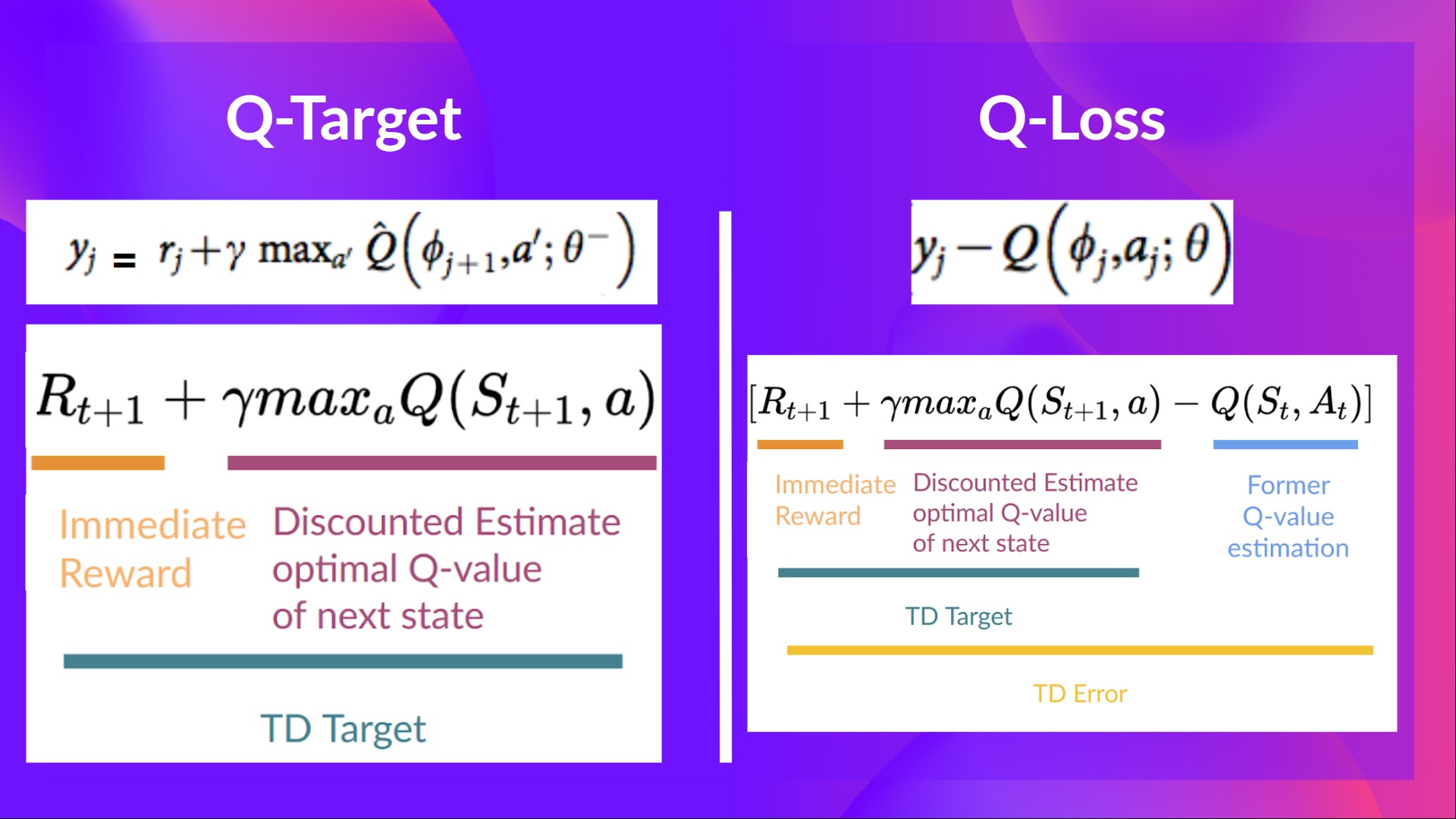

-In Deep Q-Learning, we create a **Loss function between our Q-value prediction and the Q-target and use Gradient Descent to update the weights of our Deep Q-Network to approximate our Q-values better**.

+In Deep Q-Learning, we create a **loss function that compares our Q-value prediction with the Q-target, and we use Gradient Descent to update the weights of our Deep Q-Network to approximate our Q-values better**.
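As a minimal sketch, assuming plain Python lists in place of network outputs, the loss for a batch of transitions can be computed by forming the Q-target \\(r + \gamma \max_{a'} Q(s', a')\\) (or just \\(r\\) when the episode has ended) and averaging its squared difference with the predicted Q-value. The names `td_target` and `dqn_loss`, the tuple layout, and the `gamma` values are illustrative assumptions. A real implementation computes this on tensors so the gradient can flow back into the network, and some implementations use the Huber loss instead of the squared error.

```python
from typing import List, Sequence, Tuple

# One transition as seen by the loss: (predicted Q(s, a), reward r,
# Q-values of the next state for every action, whether s' is terminal).
Transition = Tuple[float, float, Sequence[float], bool]


def td_target(reward: float, next_q_values: Sequence[float], done: bool, gamma: float = 0.99) -> float:
    """Q-target: r + gamma * max_a' Q(s', a'), or just r if the episode ended."""
    if done:
        return reward
    return reward + gamma * max(next_q_values)


def dqn_loss(batch: List[Transition], gamma: float = 0.99) -> float:
    """Mean squared error between the Q-value predictions and the Q-targets."""
    squared_errors = []
    for q_pred, reward, next_q_values, done in batch:
        target = td_target(reward, next_q_values, done, gamma)
        squared_errors.append((target - q_pred) ** 2)
    return sum(squared_errors) / len(squared_errors)


batch = [
    (1.0, 1.0, [0.5, 2.0], False),  # target = 1.0 + 0.9 * 2.0 = 2.8
    (0.5, 0.0, [3.0, 1.0], True),   # terminal state: target = 0.0
]
print(dqn_loss(batch, gamma=0.9))  # ≈ 1.745
```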

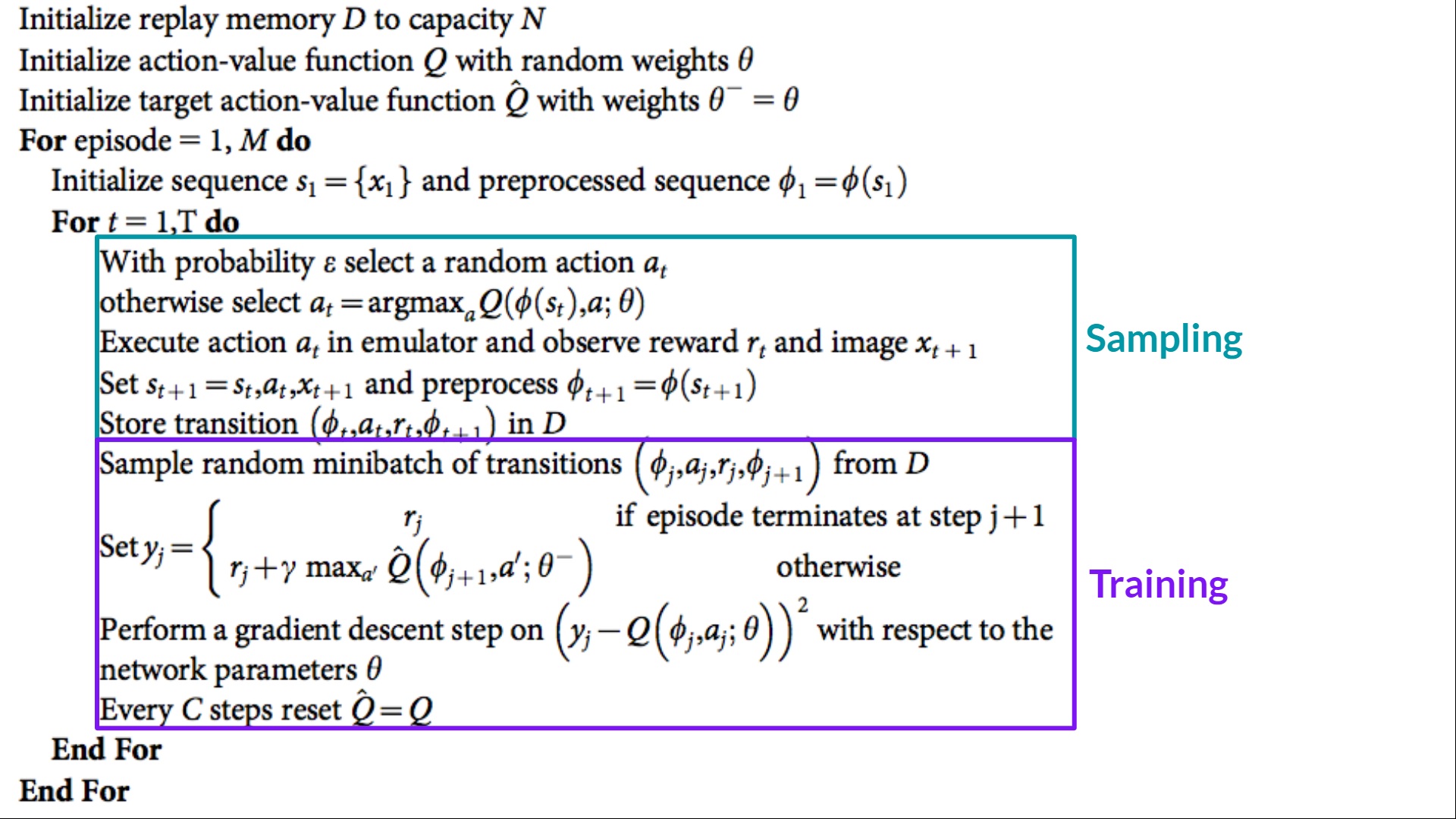

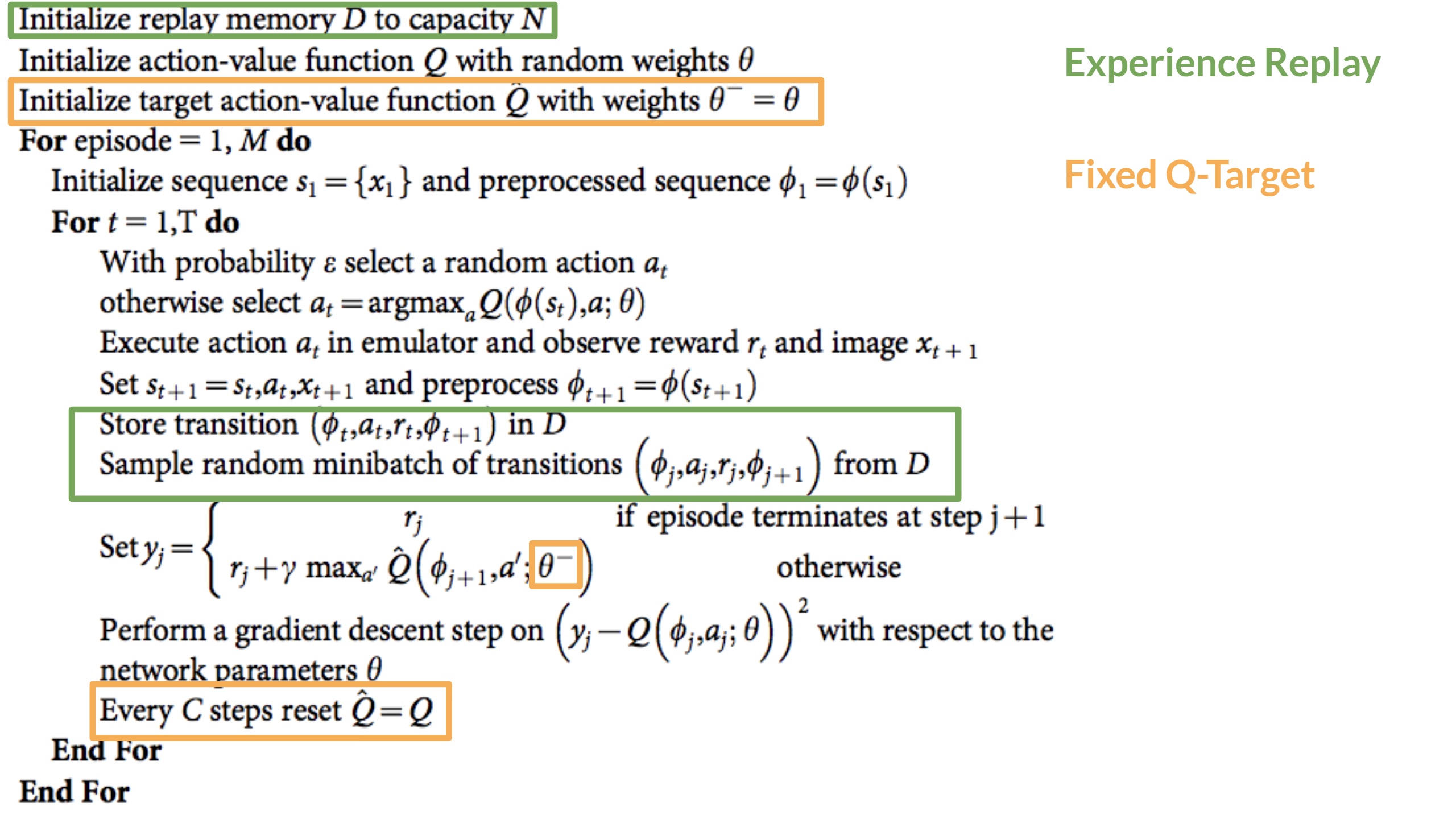

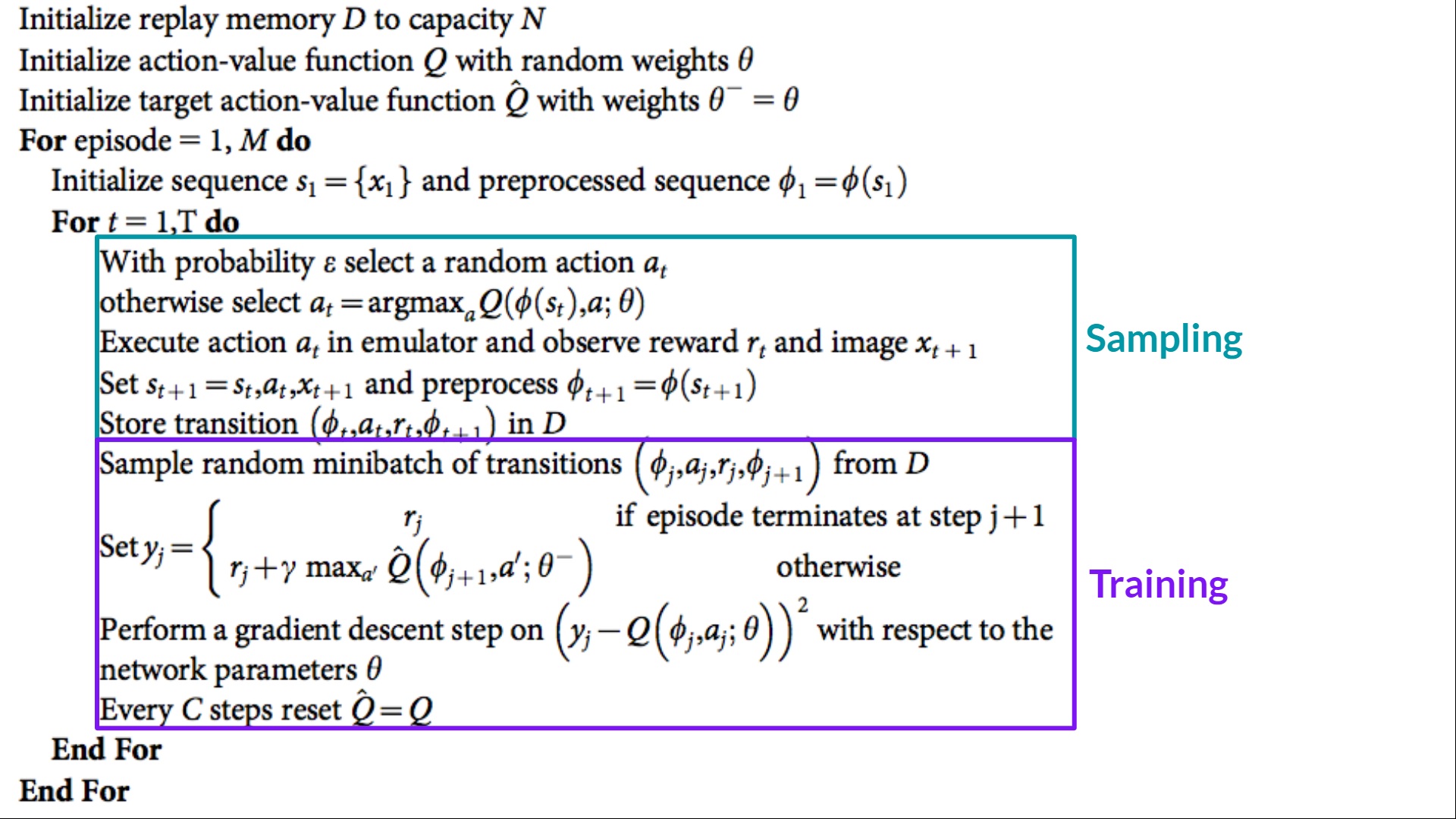

The Deep Q-Learning training algorithm has *two phases*:

-- **Sampling**: we perform actions and **store the observed experiences tuples in a replay memory**.

-- **Training**: Select the **small batch of tuple randomly and learn from it using a gradient descent update step**.

+- **Sampling**: we perform actions and **store the observed experience tuples in a replay memory**.

+- **Training**: Select a **small batch of tuples randomly and learn from this batch using a gradient descent update step**.

-But, this is not the only change compared with Q-Learning. Deep Q-Learning training **might suffer from instability**, mainly because of combining a non-linear Q-value function (Neural Network) and bootstrapping (when we update targets with existing estimates and not an actual complete return).

+This is not the only difference compared with Q-Learning. Deep Q-Learning training **might suffer from instability**, mainly because of combining a non-linear Q-value function (Neural Network) and bootstrapping (when we update targets with existing estimates and not an actual complete return).

To help us stabilize the training, we implement three different solutions:

-1. *Experience Replay*, to make more **efficient use of experiences**.

+1. *Experience Replay* to make more **efficient use of experiences**.

2. *Fixed Q-Target* **to stabilize the training**.

3. *Double Deep Q-Learning*, to **handle the problem of the overestimation of Q-values**.

+Let's go through them!

## Experience Replay to make more efficient use of experiences [[exp-replay]]

@@ -32,21 +33,21 @@ Why do we create a replay memory?

Experience Replay in Deep Q-Learning has two functions:

1. **Make more efficient use of the experiences during the training**.

-- Experience replay helps us **make more efficient use of the experiences during the training.** Usually, in online reinforcement learning, we interact in the environment, get experiences (state, action, reward, and next state), learn from them (update the neural network) and discard them.

-- But with experience replay, we create a replay buffer that saves experience samples **that we can reuse during the training.**

+Usually, in online reinforcement learning, the agent interacts with the environment, gets experiences (state, action, reward, and next state), learns from them (updates the neural network), and discards them. This is not efficient.

+Experience replay helps us **use the experiences of the training more efficiently**. We use a replay buffer that saves experience samples **that we can reuse during the training.**

-⇒ This allows us to **learn from individual experiences multiple times**.

+⇒ This allows the agent to **learn from the same experiences multiple times**.

2. **Avoid forgetting previous experiences and reduce the correlation between experiences**.

-- The problem we get if we give sequential samples of experiences to our neural network is that it tends to forget **the previous experiences as it overwrites new experiences.** For instance, if we are in the first level and then the second, which is different, our agent can forget how to behave and play in the first level.

+- The problem with feeding sequential samples of experiences to our neural network is that it tends to forget **the previous experiences as it gets new ones.** For instance, if the agent plays the first level and then the second, which is different, it can forget how to behave and play in the first level.

-The solution is to create a Replay Buffer that stores experience tuples while interacting with the environment and then sample a small batch of tuples. This prevents **the network from only learning about what it has immediately done.**

+The solution is to create a Replay Buffer that stores experience tuples while interacting with the environment and then sample a small batch of tuples. This prevents **the network from only learning about what it has done immediately before.**

Experience replay also has other benefits. By randomly sampling the experiences, we remove correlation in the observation sequences and avoid **action values from oscillating or diverging catastrophically.**

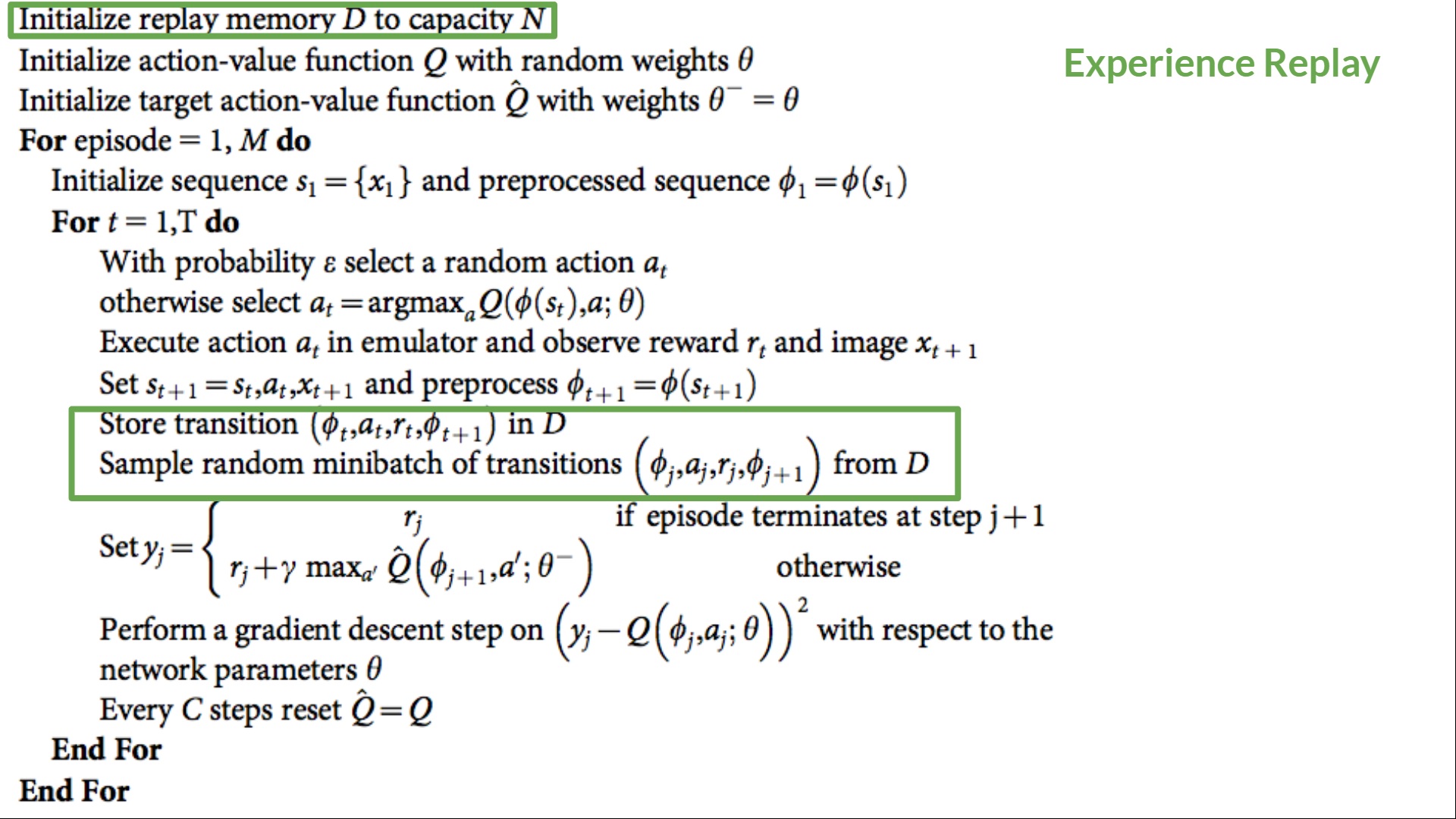

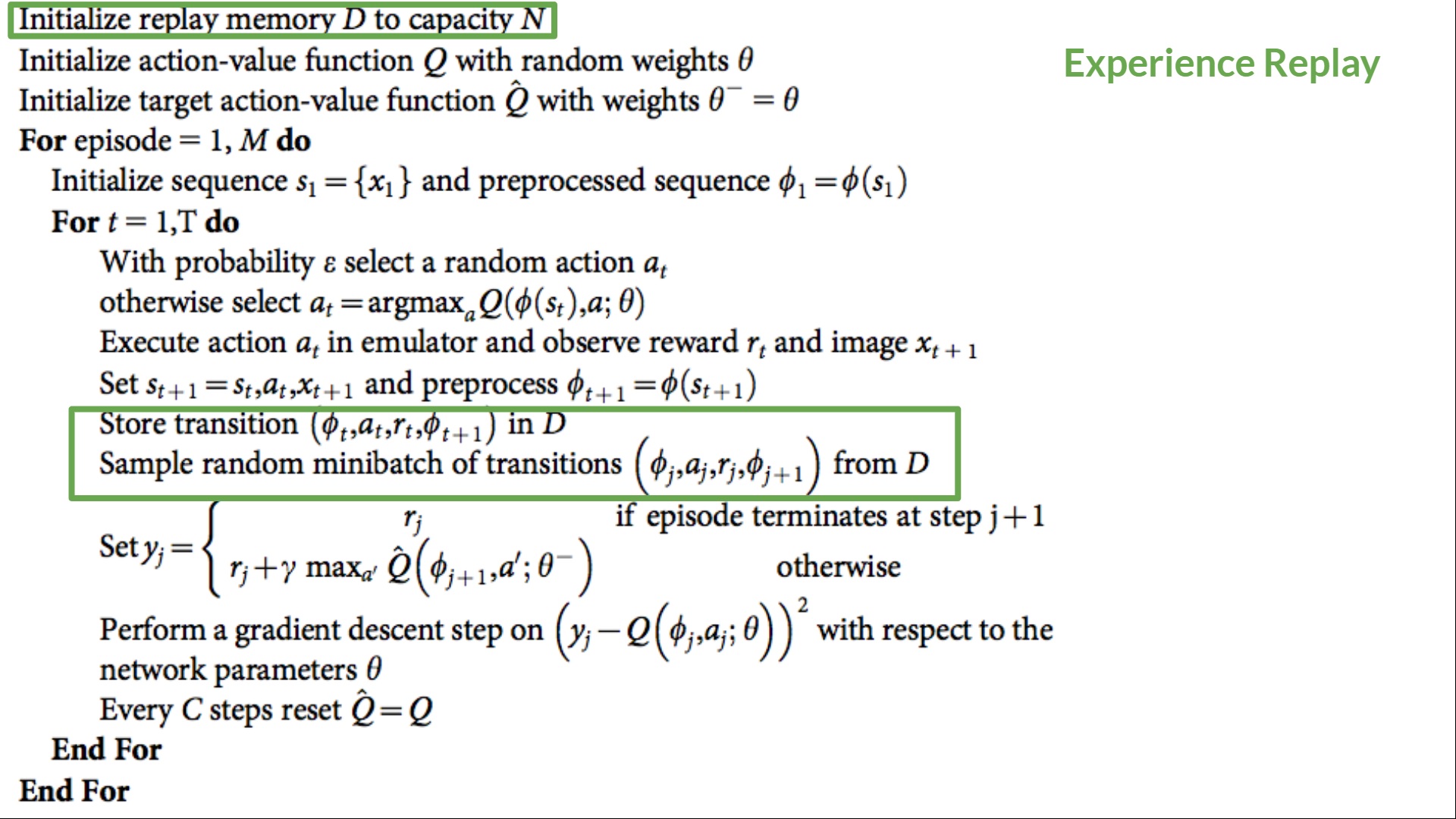

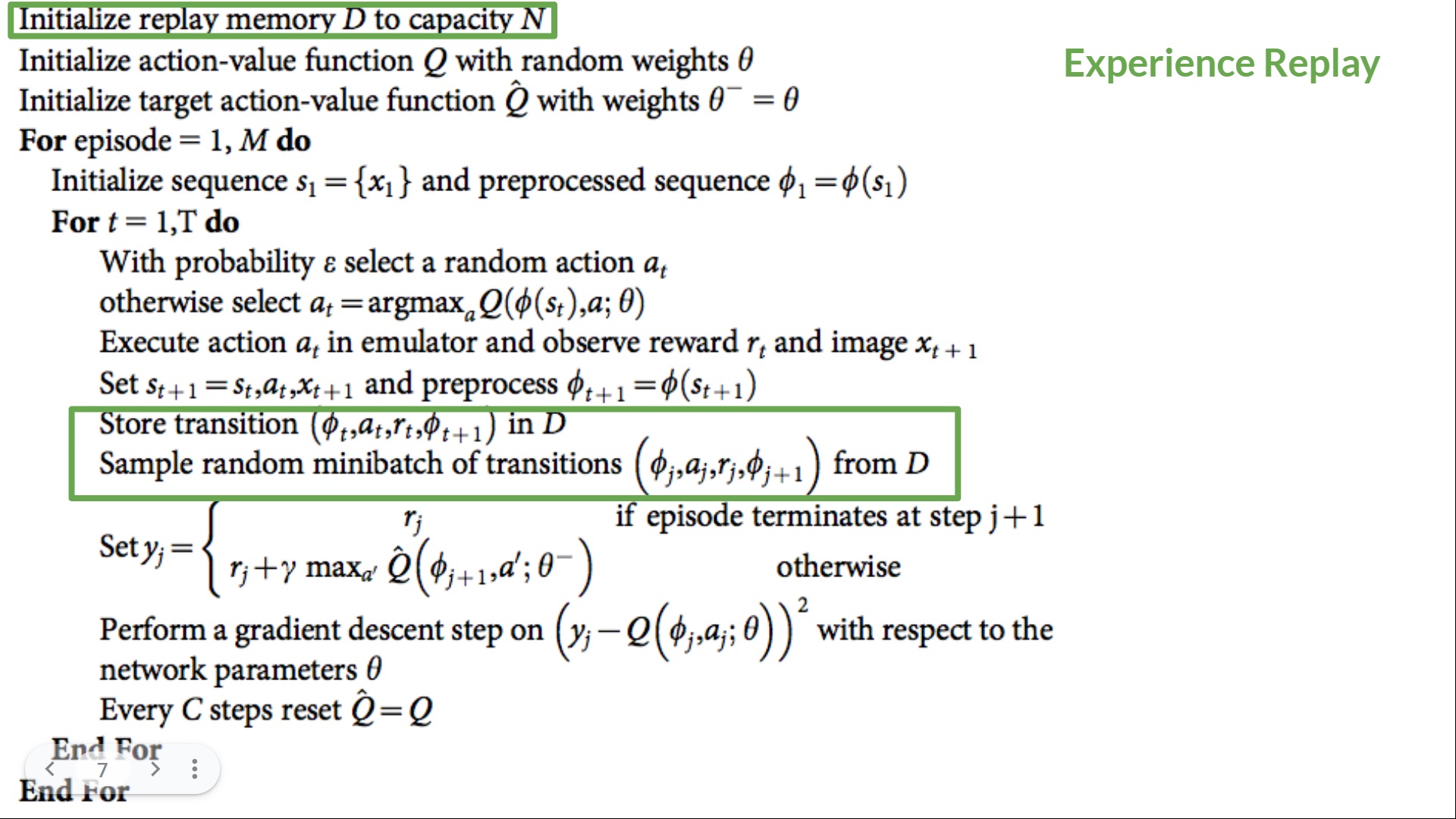

-In the Deep Q-Learning pseudocode, we see that we **initialize a replay memory buffer D from capacity N** (N is an hyperparameter that you can define). We then store experiences in the memory and sample a minibatch of experiences to feed the Deep Q-Network during the training phase.

+In the Deep Q-Learning pseudocode, we **initialize a replay memory buffer D with capacity N** (N is a hyperparameter that you can define). We then store experiences in the memory and sample a batch of experiences to feed the Deep Q-Network during the training phase.
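A minimal replay memory D with capacity N can be sketched with a bounded `collections.deque`, assuming that once the buffer is full the oldest experiences are discarded first. The `Experience` and `ReplayBuffer` names, and the capacity and batch size used below, are hypothetical choices for illustration.

```python
import random
from collections import deque
from typing import Deque, List, NamedTuple


class Experience(NamedTuple):
    state: object
    action: int
    reward: float
    next_state: object
    done: bool


class ReplayBuffer:
    """Replay memory D with capacity N: the oldest experiences are dropped once it is full."""

    def __init__(self, capacity: int) -> None:
        self.memory: Deque[Experience] = deque(maxlen=capacity)

    def push(self, experience: Experience) -> None:
        # Sampling phase: store each observed experience tuple.
        self.memory.append(experience)

    def sample(self, batch_size: int) -> List[Experience]:
        # Training phase: draw a random batch (without replacement) to break
        # the correlation between consecutive experiences.
        return random.sample(list(self.memory), batch_size)

    def __len__(self) -> int:
        return len(self.memory)


buffer = ReplayBuffer(capacity=1000)  # N = 1000 is an arbitrary choice here
for t in range(1500):
    buffer.push(Experience(state=t, action=t % 4, reward=1.0, next_state=t + 1, done=False))

print(len(buffer))  # 1000: only the most recent N experiences are kept
batch = buffer.sample(32)
```

Because `random.sample` draws without replacement, a transition appears at most once per batch, but it can be reused across many batches during training.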

@@ -60,9 +61,9 @@ But we **don’t have any idea of the real TD target**. We need to estimate it

However, the problem is that we are using the same parameters (weights) for estimating the TD target **and** the Q value. Consequently, there is a significant correlation between the TD target and the parameters we are changing.

-Therefore, it means that at every step of training, **our Q values shift but also the target value shifts.** So, we’re getting closer to our target, but the target is also moving. It’s like chasing a moving target! This led to a significant oscillation in training.

+This means that at every step of training, **our Q-values shift, but the target value also shifts.** We’re getting closer to our target, but the target is also moving. It’s like chasing a moving target! This can lead to significant oscillation in training.

-It’s like if you were a cowboy (the Q estimation) and you want to catch the cow (the Q-target), you must get closer (reduce the error).

+It’s as if you were a cowboy (the Q estimation) who wants to catch a cow (the Q-target). Your goal is to get closer (reduce the error).

@@ -74,7 +75,7 @@ This leads to a bizarre path of chasing (a significant oscillation in training).

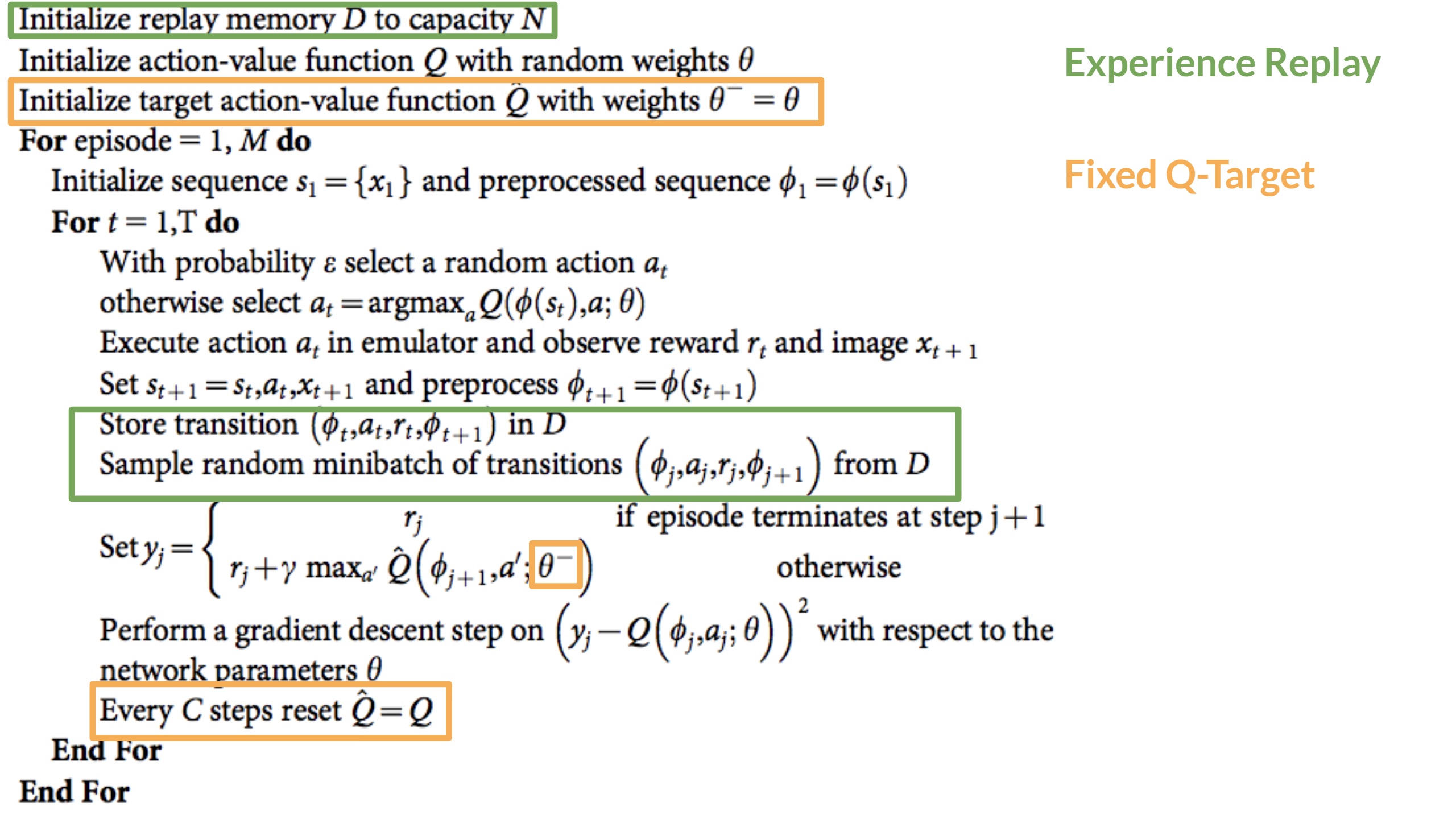

Instead, what we see in the pseudo-code is that we:

-- Use a **separate network with a fixed parameter** for estimating the TD Target

+- Use a **separate network with fixed parameters** for estimating the TD Target

- **Copy the parameters from our Deep Q-Network at every C step** to update the target network.
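The fixed Q-target idea can be sketched as follows, assuming a linear Q-function over a 3-feature state instead of a Deep Q-Network and randomly generated transitions instead of replay-buffer samples (both simplifications for illustration). The TD target is always computed with a frozen copy of the weights, and that copy is refreshed only every `sync_every` (C) steps. The names `train` and `q_values` and the hyperparameter values are hypothetical.

```python
import copy
import random
from typing import List, Tuple

State = List[float]
Weights = List[List[float]]  # one weight vector per action: a linear Q-function


def q_values(weights: Weights, state: State) -> List[float]:
    """Q(s, a) for every action a, computed with a linear approximator."""
    return [sum(w_i * s_i for w_i, s_i in zip(w_a, state)) for w_a in weights]


def train(num_steps: int, sync_every: int, gamma: float = 0.99,
          lr: float = 0.01, seed: int = 0) -> Tuple[Weights, Weights, int]:
    rng = random.Random(seed)
    n_features, n_actions = 3, 2
    online: Weights = [[0.0] * n_features for _ in range(n_actions)]
    target: Weights = copy.deepcopy(online)  # separate network with fixed parameters
    syncs = 0
    for step in range(1, num_steps + 1):
        # A made-up transition (s, a, r, s') standing in for a replay-buffer sample.
        s = [rng.random() for _ in range(n_features)]
        s_next = [rng.random() for _ in range(n_features)]
        a, r = rng.randrange(n_actions), rng.random()

        # TD target from the *target* parameters, which stay fixed between syncs.
        y = r + gamma * max(q_values(target, s_next))
        error = y - q_values(online, s)[a]

        # Semi-gradient step on 0.5 * error**2 for the online weights of action a
        # (the target y is treated as a constant).
        online[a] = [w + lr * error * s_i for w, s_i in zip(online[a], s)]

        # Every C steps, copy the online parameters into the target network.
        if step % sync_every == 0:
            target = copy.deepcopy(online)
            syncs += 1
    return online, target, syncs


online, target, syncs = train(num_steps=100, sync_every=10)
print(syncs)  # 10
```

Between two syncs, the target weights do not move, so the online weights chase a fixed target instead of one that shifts at every step.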

diff --git a/units/en/unit3/deep-q-network.mdx b/units/en/unit3/deep-q-network.mdx

index 2a2c5c5..cb3d616 100644

--- a/units/en/unit3/deep-q-network.mdx

+++ b/units/en/unit3/deep-q-network.mdx

@@ -11,7 +11,7 @@ When the Neural Network is initialized, **the Q-value estimation is terrible**.

We mentioned that we preprocess the input. It’s an essential step since we want to **reduce the complexity of our state to reduce the computation time needed for training**.

-So what we do is **reduce the state space to 84x84 and grayscale it** (since the colors in Atari environments don't add important information).

+To achieve this, we **reduce the state space to 84x84 and grayscale it**. We can do this since the colors in Atari environments don't add important information.

This is an essential saving since we **reduce our three color channels (RGB) to 1**.

We can also **crop a part of the screen in some games** if it does not contain important information.
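A plain-Python sketch of this preprocessing, assuming frames are nested lists: the three RGB channels are collapsed into one luminance channel using the ITU-R BT.601 weights, and the 210x160 frame is downscaled to 84x84 with nearest-neighbour sampling. The function names are hypothetical, and real pipelines usually rely on an image library (such as OpenCV) with a smoother interpolation.

```python
from typing import List

Frame = List[List[List[int]]]  # height x width x 3 (RGB), values in 0..255
Gray = List[List[int]]         # height x width, values in 0..255


def to_grayscale(frame: Frame) -> Gray:
    """Collapse the 3 RGB channels into 1 luminance channel (ITU-R BT.601 weights)."""
    return [[round(0.299 * r + 0.587 * g + 0.114 * b) for r, g, b in row] for row in frame]


def resize_nearest(image: Gray, out_h: int = 84, out_w: int = 84) -> Gray:
    """Nearest-neighbour resize: each output pixel copies the input pixel under its centre."""
    in_h, in_w = len(image), len(image[0])
    return [
        [image[(2 * y + 1) * in_h // (2 * out_h)][(2 * x + 1) * in_w // (2 * out_w)]
         for x in range(out_w)]
        for y in range(out_h)
    ]


def preprocess(frame: Frame) -> Gray:
    return resize_nearest(to_grayscale(frame))


# A synthetic 210x160 RGB frame standing in for a real Atari observation.
frame = [[[x % 256, y % 256, 128] for x in range(160)] for y in range(210)]
obs = preprocess(frame)
print(len(obs), len(obs[0]))  # 84 84
```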

diff --git a/units/en/unit3/from-q-to-dqn.mdx b/units/en/unit3/from-q-to-dqn.mdx

index 33d2ba4..13df4d1 100644

--- a/units/en/unit3/from-q-to-dqn.mdx

+++ b/units/en/unit3/from-q-to-dqn.mdx

@@ -19,11 +19,13 @@ Q-Learning worked well with small state space environments like:

But think of what we're going to do today: we will train an agent to learn to play Space Invaders a more complex game, using the frames as input.

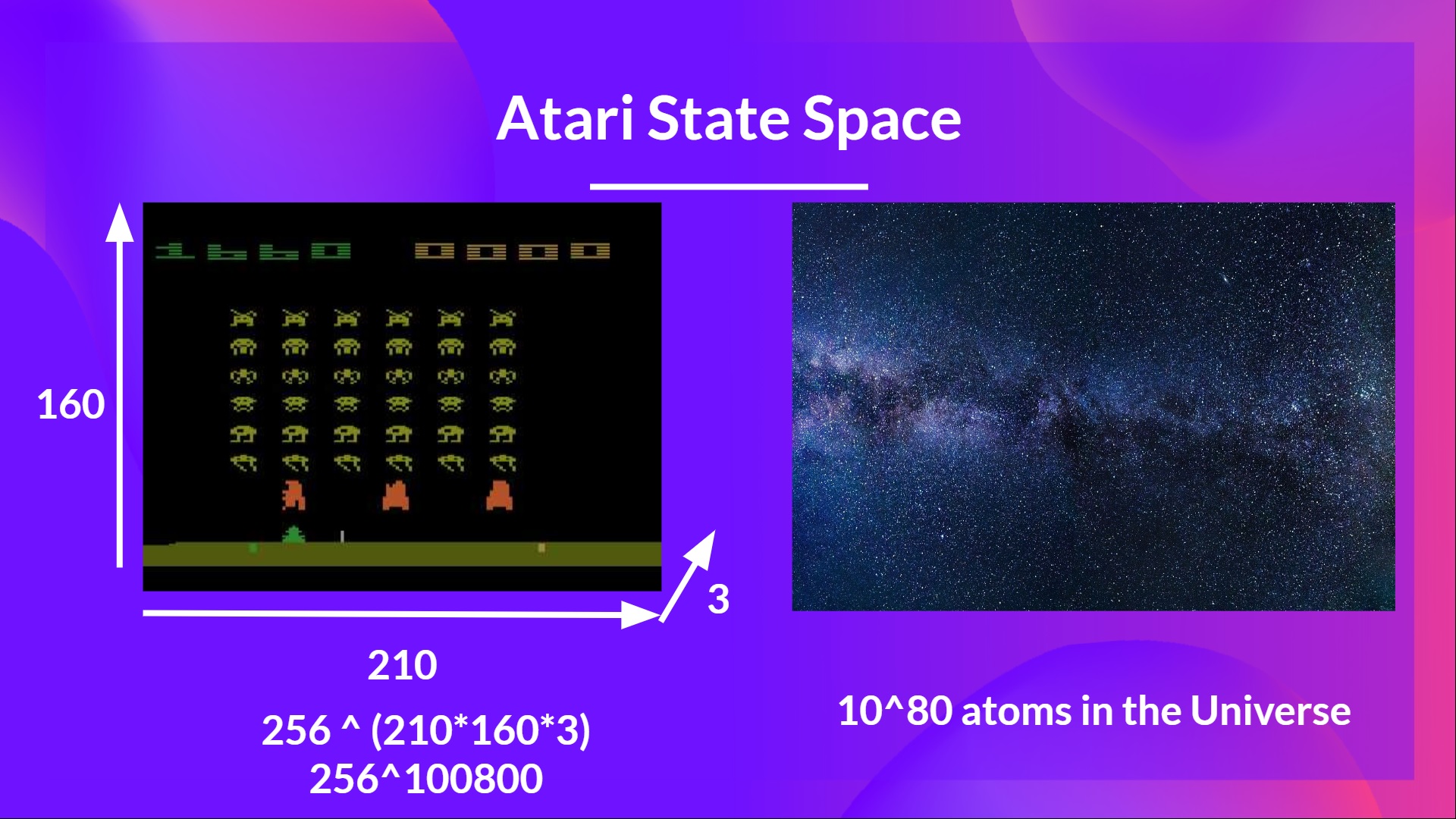

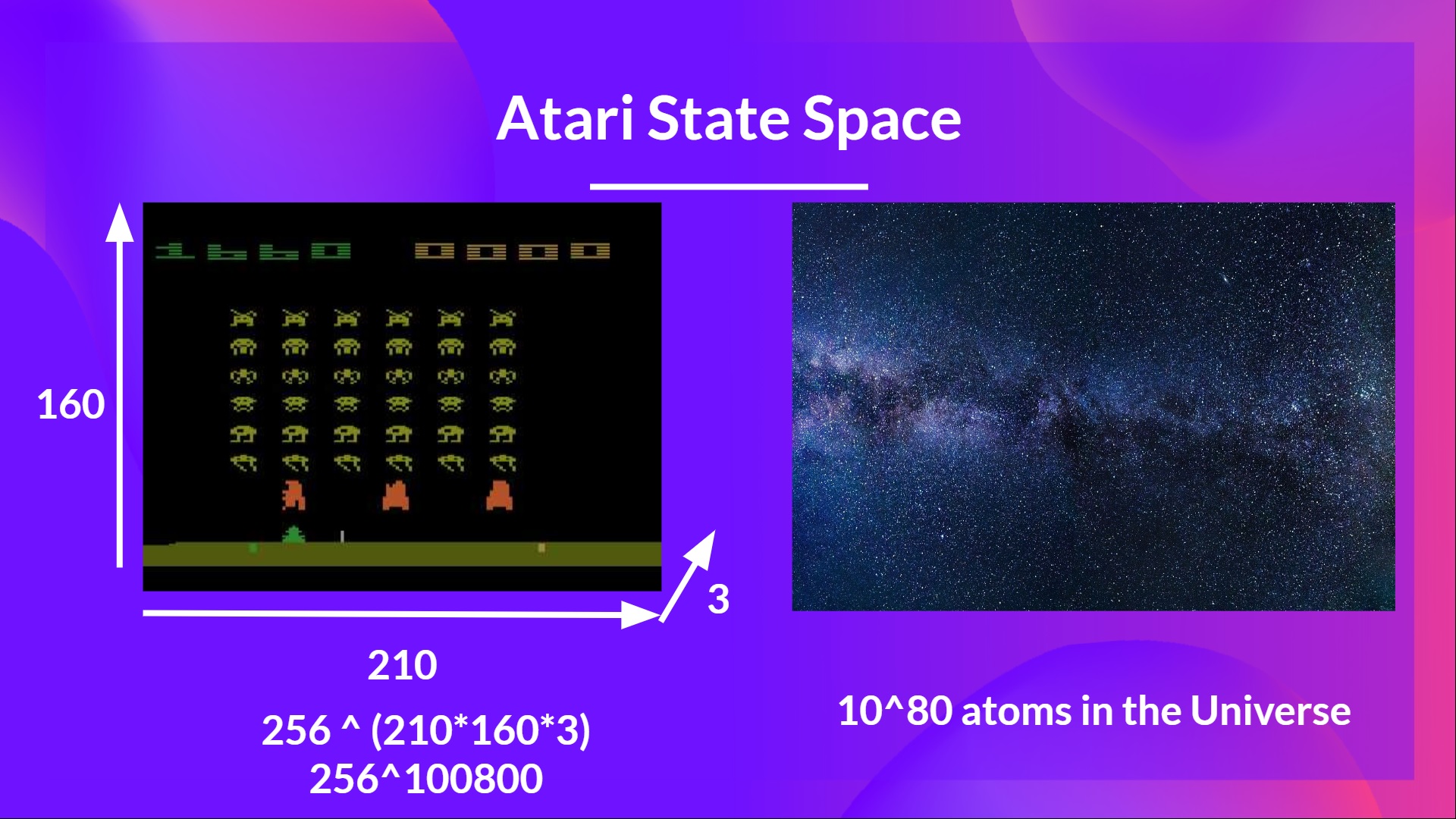

-As **[Nikita Melkozerov mentioned](https://twitter.com/meln1k), Atari environments** have an observation space with a shape of (210, 160, 3), containing values ranging from 0 to 255 so that gives us 256^(210x160x3) = 256^100800 (for comparison, we have approximately 10^80 atoms in the observable universe).

+As **[Nikita Melkozerov mentioned](https://twitter.com/meln1k), Atari environments** have an observation space with a shape of (210, 160, 3)*, containing values ranging from 0 to 255, which gives us 256^(210x160x3) = 256^100800 possible observations (for comparison, we have approximately 10^80 atoms in the observable universe).

+

+\* A single Atari frame is an image of 210x160 pixels. Since the images are in color (RGB), each pixel has 3 channels, which is why the shape is (210, 160, 3). Each value ranges from 0 to 255.
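The arithmetic behind this number can be checked directly. Since \\(256^{100800}\\) is too large to print comfortably, this sketch counts its decimal digits with a base-10 logarithm instead.

```python
import math

height, width, channels = 210, 160, 3
values_per_entry = 256  # each pixel channel is an integer in 0..255

n_entries = height * width * channels
print(n_entries)  # 100800

# Number of decimal digits of 256**n_entries, via log10 instead of building the string.
digits = math.floor(n_entries * math.log10(values_per_entry)) + 1
print(digits)  # 242751 (for comparison, 10**80 has 81 digits)
```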

-Therefore, the state space is gigantic; hence creating and updating a Q-table for that environment would not be efficient. In this case, the best idea is to approximate the Q-values instead of a Q-table using a parametrized Q-function \\(Q_{\theta}(s,a)\\) .

+Therefore, the state space is gigantic; due to this, creating and updating a Q-table for that environment would not be efficient. In this case, the best idea is to approximate the Q-values using a parametrized Q-function \\(Q_{\theta}(s,a)\\) instead of a Q-table.

This neural network will approximate, given a state, the different Q-values for each possible action at that state. And that's exactly what Deep Q-Learning does.
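To make "given a state, one Q-value per action" concrete, here is a minimal sketch of a parametrized Q-function \\(Q_{\theta}\\) as a tiny fully connected network in plain Python, where \\(\theta\\) is the set of all weights and biases. The `TinyQNetwork` name, the layer sizes, the 4-dimensional state, and the 6 actions are arbitrary assumptions; a Deep Q-Network for Atari instead uses convolutional layers over stacked preprocessed frames.

```python
import random
from typing import List

Vector = List[float]
Matrix = List[List[float]]


def linear(x: Vector, weights: Matrix, bias: Vector) -> Vector:
    return [sum(w * xi for w, xi in zip(row, x)) + b for row, b in zip(weights, bias)]


def relu(x: Vector) -> Vector:
    return [max(0.0, v) for v in x]


class TinyQNetwork:
    """Q_theta(s, .): maps a state vector to one Q-value per action."""

    def __init__(self, state_dim: int, hidden_dim: int, n_actions: int, seed: int = 0) -> None:
        rng = random.Random(seed)
        # theta: the weights and biases of both layers, randomly initialized.
        self.w1 = [[rng.uniform(-0.1, 0.1) for _ in range(state_dim)] for _ in range(hidden_dim)]
        self.b1 = [0.0] * hidden_dim
        self.w2 = [[rng.uniform(-0.1, 0.1) for _ in range(hidden_dim)] for _ in range(n_actions)]
        self.b2 = [0.0] * n_actions

    def __call__(self, state: Vector) -> Vector:
        hidden = relu(linear(state, self.w1, self.b1))
        return linear(hidden, self.w2, self.b2)  # one output per action


q_net = TinyQNetwork(state_dim=4, hidden_dim=8, n_actions=6)
q = q_net([0.1, 0.2, 0.3, 0.4])
greedy_action = max(range(len(q)), key=lambda a: q[a])  # action with the highest Q-value
```

A single forward pass returns the Q-values of every action at once, which is what makes picking the greedy action cheap.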

diff --git a/units/en/unit3/introduction.mdx b/units/en/unit3/introduction.mdx

index 366286d..80118f2 100644

--- a/units/en/unit3/introduction.mdx

+++ b/units/en/unit3/introduction.mdx

@@ -6,7 +6,7 @@

In the last unit, we learned our first reinforcement learning algorithm: Q-Learning, **implemented it from scratch**, and trained it in two environments, FrozenLake-v1 ☃️ and Taxi-v3 🚕.

-We got excellent results with this simple algorithm. But these environments were relatively simple because the **state space was discrete and small** (14 different states for FrozenLake-v1 and 500 for Taxi-v3).

+We got excellent results with this simple algorithm, but these environments were relatively simple because the **state space was discrete and small** (16 different states for FrozenLake-v1 and 500 for Taxi-v3).

But as we'll see, producing and updating a **Q-table can become ineffective in large state space environments.**

diff --git a/units/en/unit3/quiz.mdx b/units/en/unit3/quiz.mdx

index cefffa6..d042756 100644

--- a/units/en/unit3/quiz.mdx

+++ b/units/en/unit3/quiz.mdx

@@ -2,17 +2,17 @@

The best way to learn and [to avoid the illusion of competence](https://www.coursera.org/lecture/learning-how-to-learn/illusions-of-competence-BuFzf) **is to test yourself.** This will help you to find **where you need to reinforce your knowledge**.

-### Q1: What are tabular methods?

+### Q1: We mentioned Q-Learning is a tabular method. What are tabular methods?

Solution

-*Tabular methods* are a type of problems in which the state and actions spaces are small enough to approximate value functions to be **represented as arrays and tables**. For instance, **Q-Learning is a tabular method** since we use a table to represent the state,action value pairs.

+*Tabular methods* are used for problems in which the state and action spaces are small enough for value functions to be **represented as arrays and tables**. For instance, **Q-Learning is a tabular method** since we use a table to represent the state-action value pairs.

-### Q2: Why we can't use a classical Q-Learning to solve an Atari Game?

+### Q2: Why can't we use classical Q-Learning to solve an Atari Game?

Solution

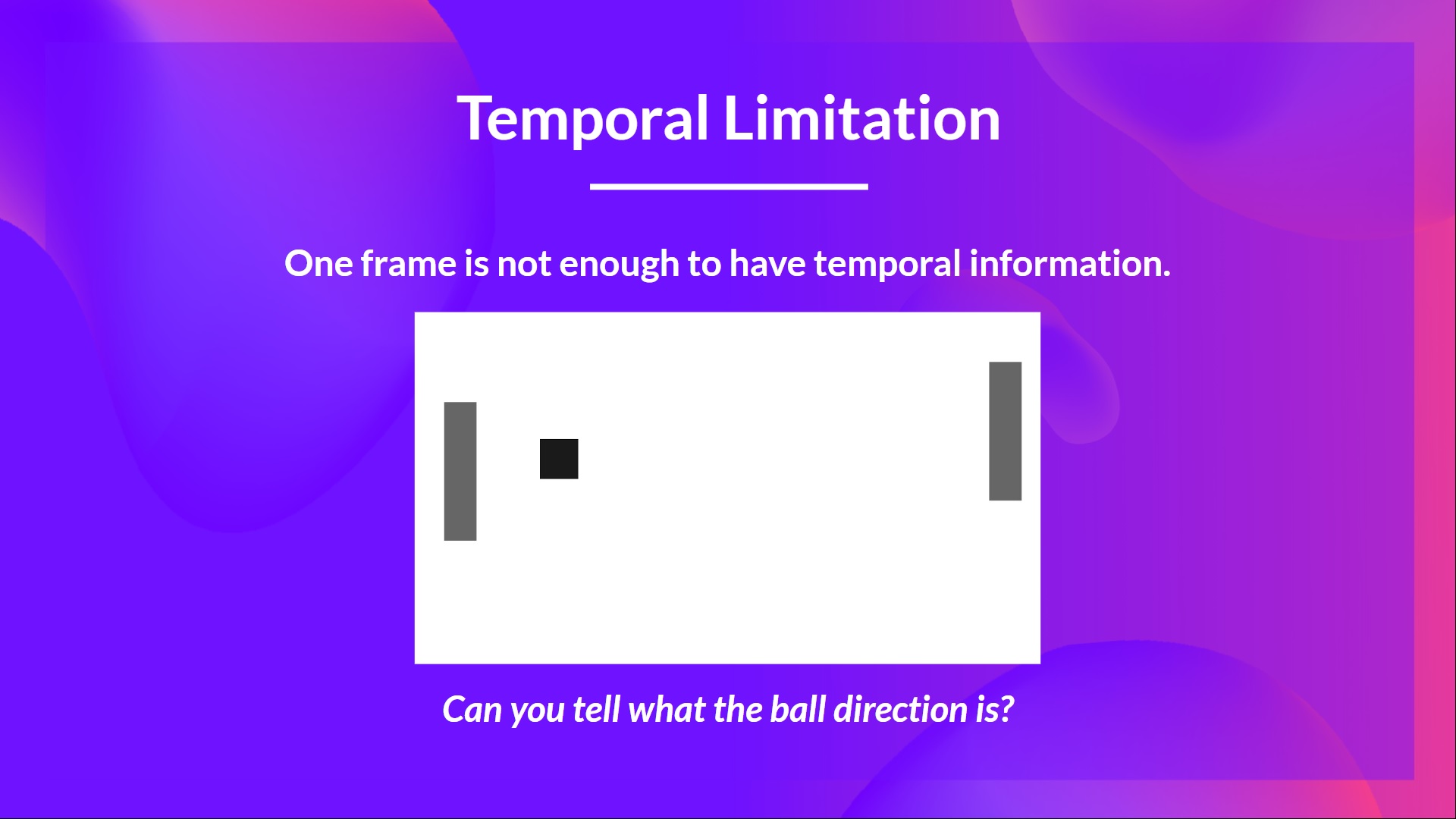

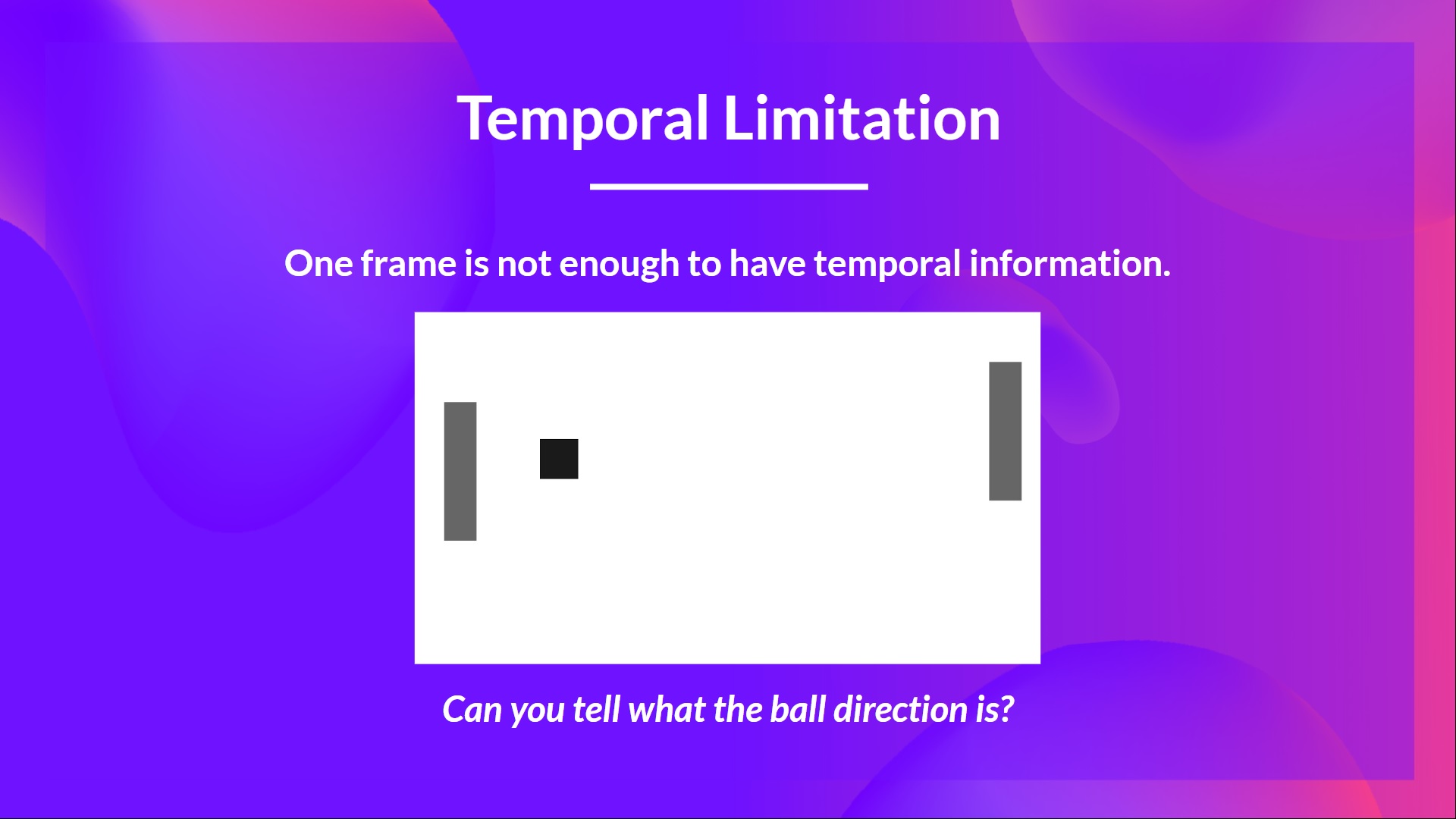

-We stack frames together because it helps us **handle the problem of temporal limitation**. Since one frame is not enough to capture temporal information.

+We stack frames together because it helps us **handle the problem of temporal limitation**: one frame is not enough to capture temporal information.

For instance, in pong, our agent **will be unable to know the ball direction if it gets only one frame**.
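Frame stacking can be sketched with a bounded deque that always holds the k most recent preprocessed frames. The `FrameStack` class and the `blank` helper are hypothetical, k = 4 follows the common choice, and repeating the first frame at the start of an episode is one convention among several.

```python
from collections import deque
from typing import Deque, List

Frame = List[List[int]]  # one preprocessed 84x84 grayscale frame


class FrameStack:
    """Keeps the k most recent frames so the agent can infer motion (e.g. the ball's direction)."""

    def __init__(self, k: int = 4) -> None:
        self.k = k
        self.frames: Deque[Frame] = deque(maxlen=k)

    def reset(self, first_frame: Frame) -> List[Frame]:
        # At the start of an episode there is no history yet, so repeat the first frame k times.
        self.frames.clear()
        for _ in range(self.k):
            self.frames.append(first_frame)
        return list(self.frames)

    def step(self, new_frame: Frame) -> List[Frame]:
        # Appending to a full deque drops the oldest frame automatically.
        self.frames.append(new_frame)
        return list(self.frames)


def blank(value: int) -> Frame:
    return [[value] * 84 for _ in range(84)]


stack = FrameStack(k=4)
obs = stack.reset(blank(0))
for t in range(1, 6):
    obs = stack.step(blank(t))
print([frame[0][0] for frame in obs])  # [2, 3, 4, 5]
```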

-In Deep Q-Learning, we create a **Loss function between our Q-value prediction and the Q-target and use Gradient Descent to update the weights of our Deep Q-Network to approximate our Q-values better**.

+in Deep Q-Learning, we create a **loss function that compares our Q-value prediction and the Q-target and uses Gradient Descent to update the weights of our Deep Q-Network to approximate our Q-values better**.

-In Deep Q-Learning, we create a **Loss function between our Q-value prediction and the Q-target and use Gradient Descent to update the weights of our Deep Q-Network to approximate our Q-values better**.

+in Deep Q-Learning, we create a **loss function that compares our Q-value prediction and the Q-target and uses Gradient Descent to update the weights of our Deep Q-Network to approximate our Q-values better**.

The Deep Q-Learning training algorithm has *two phases*:

-- **Sampling**: we perform actions and **store the observed experiences tuples in a replay memory**.

-- **Training**: Select the **small batch of tuple randomly and learn from it using a gradient descent update step**.

+- **Sampling**: we perform actions and **store the observed experience tuples in a replay memory**.

+- **Training**: Select a **small batch of tuples randomly and learn from this batch using a gradient descent update step**.

The Deep Q-Learning training algorithm has *two phases*:

-- **Sampling**: we perform actions and **store the observed experiences tuples in a replay memory**.

-- **Training**: Select the **small batch of tuple randomly and learn from it using a gradient descent update step**.

+- **Sampling**: we perform actions and **store the observed experience tuples in a replay memory**.

+- **Training**: Select a **small batch of tuples randomly and learn from this batch using a gradient descent update step**.

-But, this is not the only change compared with Q-Learning. Deep Q-Learning training **might suffer from instability**, mainly because of combining a non-linear Q-value function (Neural Network) and bootstrapping (when we update targets with existing estimates and not an actual complete return).

+This is not the only difference compared with Q-Learning. Deep Q-Learning training **might suffer from instability**, mainly because of combining a non-linear Q-value function (Neural Network) and bootstrapping (when we update targets with existing estimates and not an actual complete return).

To help us stabilize the training, we implement three different solutions:

-1. *Experience Replay*, to make more **efficient use of experiences**.

+1. *Experience Replay* to make more **efficient use of experiences**.

2. *Fixed Q-Target* **to stabilize the training**.

3. *Double Deep Q-Learning*, to **handle the problem of the overestimation of Q-values**.

+Let's go through them!

## Experience Replay to make more efficient use of experiences [[exp-replay]]

@@ -32,21 +33,21 @@ Why do we create a replay memory?

Experience Replay in Deep Q-Learning has two functions:

1. **Make more efficient use of the experiences during the training**.

-- Experience replay helps us **make more efficient use of the experiences during the training.** Usually, in online reinforcement learning, we interact in the environment, get experiences (state, action, reward, and next state), learn from them (update the neural network) and discard them.

-- But with experience replay, we create a replay buffer that saves experience samples **that we can reuse during the training.**

+Usually, in online reinforcement learning, the agent interacts in the environment, gets experiences (state, action, reward, and next state), learns from them (updates the neural network), and discards them. This is not efficient

+Experience replay helps **using the experiences of the training more efficiently**. We use a replay buffer that saves experience samples **that we can reuse during the training.**

-But, this is not the only change compared with Q-Learning. Deep Q-Learning training **might suffer from instability**, mainly because of combining a non-linear Q-value function (Neural Network) and bootstrapping (when we update targets with existing estimates and not an actual complete return).

+This is not the only difference compared with Q-Learning. Deep Q-Learning training **might suffer from instability**, mainly because of combining a non-linear Q-value function (Neural Network) and bootstrapping (when we update targets with existing estimates and not an actual complete return).

To help us stabilize the training, we implement three different solutions:

-1. *Experience Replay*, to make more **efficient use of experiences**.

+1. *Experience Replay* to make more **efficient use of experiences**.

2. *Fixed Q-Target* **to stabilize the training**.

3. *Double Deep Q-Learning*, to **handle the problem of the overestimation of Q-values**.

+Let's go through them!

## Experience Replay to make more efficient use of experiences [[exp-replay]]

@@ -32,21 +33,21 @@ Why do we create a replay memory?

Experience Replay in Deep Q-Learning has two functions:

1. **Make more efficient use of the experiences during the training**.

-- Experience replay helps us **make more efficient use of the experiences during the training.** Usually, in online reinforcement learning, we interact in the environment, get experiences (state, action, reward, and next state), learn from them (update the neural network) and discard them.

-- But with experience replay, we create a replay buffer that saves experience samples **that we can reuse during the training.**

+Usually, in online reinforcement learning, the agent interacts in the environment, gets experiences (state, action, reward, and next state), learns from them (updates the neural network), and discards them. This is not efficient

+Experience replay helps **using the experiences of the training more efficiently**. We use a replay buffer that saves experience samples **that we can reuse during the training.**

-⇒ This allows us to **learn from individual experiences multiple times**.

+⇒ This allows the agent to **learn from the same experiences multiple times**.

2. **Avoid forgetting previous experiences and reduce the correlation between experiences**.

-- The problem we get if we give sequential samples of experiences to our neural network is that it tends to forget **the previous experiences as it overwrites new experiences.** For instance, if we are in the first level and then the second, which is different, our agent can forget how to behave and play in the first level.

+- The problem we get if we give sequential samples of experiences to our neural network is that it tends to forget **the previous experiences as it gets new experiences.** For instance, if the agent is in the first level and then in the second, which is different, it can forget how to behave and play in the first level.

-The solution is to create a Replay Buffer that stores experience tuples while interacting with the environment and then sample a small batch of tuples. This prevents **the network from only learning about what it has immediately done.**

+The solution is to create a Replay Buffer that stores experience tuples while interacting with the environment and then sample a small batch of tuples. This prevents **the network from only learning about what it has done immediately before.**

Experience replay also has other benefits. By randomly sampling the experiences, we remove correlation in the observation sequences and avoid **action values from oscillating or diverging catastrophically.**

-In the Deep Q-Learning pseudocode, we see that we **initialize a replay memory buffer D from capacity N** (N is an hyperparameter that you can define). We then store experiences in the memory and sample a minibatch of experiences to feed the Deep Q-Network during the training phase.

+In the Deep Q-Learning pseudocode, we **initialize a replay memory buffer D with capacity N** (N is a hyperparameter that you can define). We then store experiences in the memory and sample a batch of experiences to feed the Deep Q-Network during the training phase.
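To make the replay memory concrete, here is a minimal Python sketch of a buffer D with capacity N built on `collections.deque`. The `ReplayBuffer` class, the `Experience` tuple layout, and the method names are illustrative assumptions, not the course's own implementation.

```python
import random
from collections import deque, namedtuple

# One experience tuple: (state, action, reward, next_state, done)
Experience = namedtuple("Experience", ["state", "action", "reward", "next_state", "done"])


class ReplayBuffer:
    """Hypothetical fixed-capacity replay memory D: the oldest experiences are dropped first."""

    def __init__(self, capacity):
        # A deque with maxlen discards items from the left once capacity N is reached
        self.memory = deque(maxlen=capacity)

    def store(self, state, action, reward, next_state, done):
        self.memory.append(Experience(state, action, reward, next_state, done))

    def sample(self, batch_size):
        # Uniform random sampling (without replacement) breaks the temporal
        # correlation between consecutive experiences
        return random.sample(self.memory, batch_size)

    def __len__(self):
        return len(self.memory)


# Sampling phase: store experiences while interacting (here, dummy data)
buffer = ReplayBuffer(capacity=1000)
for t in range(1500):
    buffer.store(state=t, action=t % 4, reward=1.0, next_state=t + 1, done=False)

# Training phase: draw a random batch to learn from
batch = buffer.sample(batch_size=32)
print(len(buffer), len(batch))  # 1000 32
```

Because the buffer only keeps the last N experiences, each one can be sampled many times before it is evicted, which is what lets the agent learn from the same experiences multiple times.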

@@ -60,9 +61,9 @@ But we **don’t have any idea of the real TD target**. We need to estimate it

However, the problem is that we are using the same parameters (weights) for estimating the TD target **and** the Q value. Consequently, there is a significant correlation between the TD target and the parameters we are changing.

-Therefore, it means that at every step of training, **our Q values shift but also the target value shifts.** So, we’re getting closer to our target, but the target is also moving. It’s like chasing a moving target! This led to a significant oscillation in training.

+Therefore, at every step of training, **both our Q values and the target value shift.** We’re getting closer to our target, but the target is also moving. It’s like chasing a moving target! This can lead to significant oscillation in training.

-It’s like if you were a cowboy (the Q estimation) and you want to catch the cow (the Q-target), you must get closer (reduce the error).

+It’s as if you were a cowboy (the Q estimation) trying to catch the cow (the Q-target). Your goal is to get closer (reduce the error).

@@ -74,7 +75,7 @@ This leads to a bizarre path of chasing (a significant oscillation in training).

Instead, what we see in the pseudo-code is that we:

-- Use a **separate network with a fixed parameter** for estimating the TD Target

+- Use a **separate network with fixed parameters** for estimating the TD Target

- **Copy the parameters from our Deep Q-Network at every C step** to update the target network.
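The two steps above can be sketched in plain Python. This is a minimal illustration under our own assumptions: a hypothetical linear Q-function stands in for the Deep Q-Network, the transitions are random synthetic data, and `GAMMA`, `LEARNING_RATE` and `C` are arbitrary example values. What matters is that the TD target is always computed with `target_params`, which only change when we copy the online parameters every C steps.

```python
import copy
import random

GAMMA = 0.99          # discount factor (assumed example value)
LEARNING_RATE = 0.01  # gradient descent step size (assumed example value)
C = 100               # copy online -> target parameters every C steps (assumed example value)


def q_values(params, state):
    """Hypothetical linear Q-function: one weight vector per action."""
    return [sum(w * x for w, x in zip(weights, state)) for weights in params]


def td_target(target_params, reward, next_state, done):
    """TD target computed with the fixed target-network parameters."""
    if done:
        return reward
    return reward + GAMMA * max(q_values(target_params, next_state))


def gradient_step(online_params, target_params, state, action, reward, next_state, done):
    """One gradient descent step on 0.5 * (y - Q(s, a))^2, updating only the online parameters."""
    y = td_target(target_params, reward, next_state, done)
    error = y - q_values(online_params, state)[action]
    # The gradient of 0.5 * (y - w.s)^2 w.r.t. w is -(y - w.s) * s, so w moves along +error * s
    online_params[action] = [w + LEARNING_RATE * error * x
                             for w, x in zip(online_params[action], state)]
    return error


random.seed(0)
online_params = [[0.0, 0.0], [0.0, 0.0]]     # 2 actions, 2 state features
target_params = copy.deepcopy(online_params)  # separate network with fixed parameters

for step in range(1, 301):
    state = [random.random(), 1.0]
    action = random.randrange(2)
    next_state = [random.random(), 1.0]
    gradient_step(online_params, target_params, state, action,
                  reward=1.0, next_state=next_state, done=False)
    if step % C == 0:
        # Every C steps, copy the Deep Q-Network parameters into the target network
        target_params = copy.deepcopy(online_params)
```

Between two copies the target stays still, so the online parameters chase a fixed target for C steps instead of one that moves after every update.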

diff --git a/units/en/unit3/deep-q-network.mdx b/units/en/unit3/deep-q-network.mdx

index 2a2c5c5..cb3d616 100644

--- a/units/en/unit3/deep-q-network.mdx

+++ b/units/en/unit3/deep-q-network.mdx

@@ -11,7 +11,7 @@ When the Neural Network is initialized, **the Q-value estimation is terrible**.

We mentioned that we preprocess the input. It’s an essential step since we want to **reduce the complexity of our state to reduce the computation time needed for training**.

-So what we do is **reduce the state space to 84x84 and grayscale it** (since the colors in Atari environments don't add important information).

+To achieve this, we **reduce the state space to 84x84 and grayscale it**. We can do this since the colors in Atari environments don't add important information.

This is an essential saving since we **reduce our three color channels (RGB) to 1**.

We can also **crop a part of the screen in some games** if it does not contain important information.
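Here is a minimal NumPy sketch of this preprocessing, assuming a raw frame of shape (210, 160, 3). It uses luminance-weighted grayscale conversion and a simple nearest-neighbour resize; real Atari wrappers typically rely on an image library with proper interpolation, so treat the `preprocess` function and its optional `crop` argument as hypothetical.

```python
import numpy as np


def preprocess(frame, size=84, crop=None):
    """Hypothetical helper: turn an RGB frame (210, 160, 3) into a (size, size) grayscale image."""
    if crop is not None:
        # Optionally keep only rows crop[0]:crop[1] (e.g. to drop a score bar)
        frame = frame[crop[0]:crop[1]]
    # Grayscale with luminance weights (ITU-R BT.601): 3 color channels -> 1
    gray = frame[..., 0] * 0.299 + frame[..., 1] * 0.587 + frame[..., 2] * 0.114
    # Nearest-neighbour resize: keep `size` evenly spaced rows and columns
    rows = np.linspace(0, gray.shape[0] - 1, size).astype(int)
    cols = np.linspace(0, gray.shape[1] - 1, size).astype(int)
    return np.round(gray[np.ix_(rows, cols)]).astype(np.uint8)


frame = np.random.randint(0, 256, size=(210, 160, 3), dtype=np.uint8)
obs = preprocess(frame)
print(obs.shape, obs.dtype)  # (84, 84) uint8
print(frame.size, obs.size)  # 100800 7056
```

The input to the network shrinks from 100,800 values per frame to 7,056.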

diff --git a/units/en/unit3/from-q-to-dqn.mdx b/units/en/unit3/from-q-to-dqn.mdx

index 33d2ba4..13df4d1 100644

--- a/units/en/unit3/from-q-to-dqn.mdx

+++ b/units/en/unit3/from-q-to-dqn.mdx

@@ -19,11 +19,13 @@ Q-Learning worked well with small state space environments like:

But think of what we're going to do today: we will train an agent to learn to play Space Invaders a more complex game, using the frames as input.

-As **[Nikita Melkozerov mentioned](https://twitter.com/meln1k), Atari environments** have an observation space with a shape of (210, 160, 3), containing values ranging from 0 to 255 so that gives us 256^(210x160x3) = 256^100800 (for comparison, we have approximately 10^80 atoms in the observable universe).

+As **[Nikita Melkozerov mentioned](https://twitter.com/meln1k), Atari environments** have an observation space with a shape of (210, 160, 3)*, containing values ranging from 0 to 255, which gives us 256^(210x160x3) = 256^100800 possible observations (for comparison, we have approximately 10^80 atoms in the observable universe).

+

+* A single frame in Atari is an image of 210x160 pixels. Since the images are in color (RGB), there are 3 channels, which is why the shape is (210, 160, 3). Each pixel value ranges from 0 to 255.
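As a quick check of the arithmetic in the footnote, the snippet below counts the values in one frame and computes the base-10 exponent of 256^100800 with `math.log10`, since the number itself is far too large to print usefully.

```python
import math

height, width, channels = 210, 160, 3
values_per_pixel = 256                   # each value is in 0..255

n_values = height * width * channels     # numbers in one frame
# 256^n_values is huge, so work with its base-10 exponent instead
log10_n_observations = n_values * math.log10(values_per_pixel)

print(n_values)                   # 100800
print(int(log10_n_observations))  # 242750
```

So there are roughly 10^242750 possible frames, compared with about 10^80 atoms in the observable universe.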

-Therefore, the state space is gigantic; hence creating and updating a Q-table for that environment would not be efficient. In this case, the best idea is to approximate the Q-values instead of a Q-table using a parametrized Q-function \\(Q_{\theta}(s,a)\\) .

+Therefore, the state space is gigantic; due to this, creating and updating a Q-table for that environment would not be efficient. In this case, the best idea is to approximate the Q-values using a parametrized Q-function \\(Q_{\theta}(s,a)\\) instead of a Q-table.

This neural network will approximate, given a state, the different Q-values for each possible action at that state. And that's exactly what Deep Q-Learning does.
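As an illustration, here is a minimal NumPy sketch of a parametrized Q-function: one forward pass maps a state to one Q-value per action. To keep it short, we assume a small 4-dimensional state vector and a tiny fully connected network (the `QNetwork` class is hypothetical); the network used for Atari frames is convolutional and works on preprocessed images.

```python
import numpy as np


class QNetwork:
    """Hypothetical two-layer network approximating Q_theta(s, a) for every action a."""

    def __init__(self, state_dim, n_actions, hidden=64, seed=0):
        rng = np.random.default_rng(seed)
        # theta: the parameters that replace the Q-table
        self.W1 = rng.normal(0.0, 0.1, size=(state_dim, hidden))
        self.b1 = np.zeros(hidden)
        self.W2 = rng.normal(0.0, 0.1, size=(hidden, n_actions))
        self.b2 = np.zeros(n_actions)

    def __call__(self, state):
        # One forward pass returns a Q-value for each possible action in this state
        h = np.maximum(0.0, state @ self.W1 + self.b1)  # ReLU hidden layer
        return h @ self.W2 + self.b2


q = QNetwork(state_dim=4, n_actions=6)
state = np.array([0.1, -0.2, 0.3, 0.0])
q_values = q(state)                       # one Q-value per action, shape (6,)
greedy_action = int(np.argmax(q_values))  # action with the highest estimated Q-value
```

Whatever the number of states, this network has a fixed number of parameters (here 4x64 + 64 + 64x6 + 6 = 710), whereas a Q-table needs one entry per state-action pair.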

diff --git a/units/en/unit3/introduction.mdx b/units/en/unit3/introduction.mdx

index 366286d..80118f2 100644

--- a/units/en/unit3/introduction.mdx

+++ b/units/en/unit3/introduction.mdx

@@ -6,7 +6,7 @@

In the last unit, we learned our first reinforcement learning algorithm: Q-Learning, **implemented it from scratch**, and trained it in two environments, FrozenLake-v1 ☃️ and Taxi-v3 🚕.

-We got excellent results with this simple algorithm. But these environments were relatively simple because the **state space was discrete and small** (14 different states for FrozenLake-v1 and 500 for Taxi-v3).

+We got excellent results with this simple algorithm, but these environments were relatively simple because the **state space was discrete and small** (16 different states for FrozenLake-v1 and 500 for Taxi-v3).

But as we'll see, producing and updating a **Q-table can become ineffective in large state space environments.**

diff --git a/units/en/unit3/quiz.mdx b/units/en/unit3/quiz.mdx

index cefffa6..d042756 100644

--- a/units/en/unit3/quiz.mdx

+++ b/units/en/unit3/quiz.mdx

@@ -2,17 +2,17 @@

The best way to learn and [to avoid the illusion of competence](https://www.coursera.org/lecture/learning-how-to-learn/illusions-of-competence-BuFzf) **is to test yourself.** This will help you to find **where you need to reinforce your knowledge**.

-### Q1: What are tabular methods?

+### Q1: We mentioned Q-Learning is a tabular method. What are tabular methods?
