mirror of

https://github.com/huggingface/deep-rl-class.git

synced 2026-03-25 06:11:47 +08:00

885 lines

31 KiB

Plaintext

{
"cells": [
{
"cell_type": "markdown",
"metadata": {
"colab_type": "text",
"id": "view-in-github"
},
"source": [
"<a href=\"https://colab.research.google.com/github/huggingface/deep-rl-class/blob/main/notebooks/unit5/unit5.ipynb\" target=\"_parent\"><img src=\"https://colab.research.google.com/assets/colab-badge.svg\" alt=\"Open In Colab\"/></a>"
]
},
{
"cell_type": "markdown",
"metadata": {
"id": "2D3NL_e4crQv"
},
"source": [
"# Unit 5: An Introduction to ML-Agents\n",
"\n"
]
},

{
"cell_type": "markdown",
"metadata": {
"id": "97ZiytXEgqIz"
},
"source": [
"<img src=\"https://huggingface.co/datasets/huggingface-deep-rl-course/course-images/resolve/main/en/unit7/thumbnail.png\" alt=\"Thumbnail\"/>\n",
"\n",
"In this notebook, you'll learn about ML-Agents and train two agents.\n",
"\n",
"- The first one will learn to **shoot snowballs at spawning targets**.\n",
"- The second needs to press a button to spawn a pyramid, then navigate to the pyramid, knock it over, **and move to the gold brick at the top**. To do that, it will need to explore its environment, and we will use a technique called curiosity.\n",
"\n",
"After that, you'll be able **to watch your agents playing directly in your browser**.\n",
"\n",
"For more information about the certification process, check this section 👉 https://huggingface.co/deep-rl-course/en/unit0/introduction#certification-process"
]
},
{
"cell_type": "markdown",
"metadata": {
"id": "FMYrDriDujzX"
},
"source": [
"⬇️ Here is an example of what **you will achieve at the end of this unit.** ⬇️\n"
]
},
{
"cell_type": "markdown",
"metadata": {
"id": "cBmFlh8suma-"
},
"source": [
"<img src=\"https://huggingface.co/datasets/huggingface-deep-rl-course/course-images/resolve/main/en/unit7/pyramids.gif\" alt=\"Pyramids\"/>\n",
"\n",
"<img src=\"https://huggingface.co/datasets/huggingface-deep-rl-course/course-images/resolve/main/en/unit7/snowballtarget.gif\" alt=\"SnowballTarget\"/>"
]
},

{
"cell_type": "markdown",
"metadata": {
"id": "A-cYE0K5iL-w"
},
"source": [
"### 🎮 Environments:\n",
"\n",
"- [Pyramids](https://github.com/Unity-Technologies/ml-agents/blob/main/docs/Learning-Environment-Examples.md#pyramids)\n",
"- SnowballTarget\n",
"\n",
"### 📚 RL-Library:\n",
"\n",
"- [ML-Agents](https://github.com/Unity-Technologies/ml-agents)\n"
]
},
{
"cell_type": "markdown",
"metadata": {
"id": "qEhtaFh9i31S"
},
"source": [
"We're constantly trying to improve our tutorials, so **if you find any issues in this notebook**, please [open an issue on the GitHub Repo](https://github.com/huggingface/deep-rl-class/issues)."
]
},
{
"cell_type": "markdown",
"metadata": {
"id": "j7f63r3Yi5vE"
},
"source": [
"## Objectives of this notebook 🏆\n",
"\n",
"At the end of the notebook, you will:\n",
"\n",
"- Understand how **ML-Agents**, the environment library, works.\n",
"- Be able to **train agents in Unity Environments**.\n"
]
},

{
"cell_type": "markdown",
"metadata": {
"id": "viNzVbVaYvY3"
},
"source": [
"## This notebook is from the Deep Reinforcement Learning Course\n",
"<img src=\"https://huggingface.co/datasets/huggingface-deep-rl-course/course-images/resolve/main/en/notebooks/deep-rl-course-illustration.jpg\" alt=\"Deep RL Course illustration\"/>"
]
},
{
"cell_type": "markdown",
"metadata": {
"id": "6p5HnEefISCB"
},
"source": [
"In this free course, you will:\n",
"\n",
"- 📖 Study Deep Reinforcement Learning in **theory and practice**.\n",
"- 🧑‍💻 Learn to **use famous Deep RL libraries** such as Stable Baselines3, RL Baselines3 Zoo, CleanRL and Sample Factory 2.0.\n",
"- 🤖 Train **agents in unique environments**\n",
"\n",
"And more! Check 📚 the syllabus 👉 https://huggingface.co/deep-rl-course/communication/publishing-schedule\n",
"\n",
"Don’t forget to **<a href=\"http://eepurl.com/ic5ZUD\">sign up to the course</a>** (we are collecting your email to be able to **send you the links when each Unit is published and give you information about the challenges and updates).**\n",
"\n",
"\n",
"The best way to keep in touch is to join our Discord server to exchange with the community and with us 👉🏻 https://discord.gg/ydHrjt3WP5"
]
},
{
"cell_type": "markdown",
"metadata": {
"id": "Y-mo_6rXIjRi"
},
"source": [
"## Prerequisites 🏗️\n",
"Before diving into the notebook, you need to:\n",
"\n",
"🔲 📚 **Study [what ML-Agents is and how it works by reading Unit 5](https://huggingface.co/deep-rl-course/unit5/introduction)** 🤗 "
]
},

{
"cell_type": "markdown",
"metadata": {
"id": "xYO1uD5Ujgdh"
},
"source": [
"# Let's train our agents 🚀\n",
"\n",
"**To validate this hands-on for the certification process, you just need to push your trained models to the Hub**. There are no minimum results to attain to validate this one. But if you want to get nice results, you can try to reach:\n",
"\n",
"- For `Pyramids`: Mean Reward = 1.75\n",
"- For `SnowballTarget`: Mean Reward = 15 or 30 targets hit in an episode.\n"
]
},
{
"cell_type": "markdown",
"metadata": {
"id": "DssdIjk_8vZE"
},
"source": [
"## Set the GPU 💪\n",
"- To **accelerate the agent's training, we'll use a GPU**. To do that, go to `Runtime > Change Runtime type`\n",
"\n",
"<img src=\"https://huggingface.co/datasets/huggingface-deep-rl-course/course-images/resolve/main/en/notebooks/gpu-step1.jpg\" alt=\"GPU Step 1\">"
]
},
{
"cell_type": "markdown",
"metadata": {
"id": "sTfCXHy68xBv"
},
"source": [
"- `Hardware Accelerator > GPU`\n",
"\n",
"<img src=\"https://huggingface.co/datasets/huggingface-deep-rl-course/course-images/resolve/main/en/notebooks/gpu-step2.jpg\" alt=\"GPU Step 2\">"
]
},

{
"cell_type": "markdown",
"metadata": {},
"source": [
"## Clone the repository 🔽\n",
"\n",
"- We need to clone the repository that contains **ML-Agents**."
]
},
{
"cell_type": "code",
"execution_count": null,
"metadata": {},
"outputs": [],
"source": [
"%%capture\n",
"# Clone the repository (can take 3min)\n",
"!git clone --depth 1 https://github.com/Unity-Technologies/ml-agents"
]
},
{
"cell_type": "markdown",
"metadata": {},
"source": [
"## Set up the Virtual Environment 🔽\n",
"- For **ML-Agents** to run successfully in Colab, Colab's Python version must meet the library's Python requirements.\n",
"\n",
"- We can check the supported Python versions under the `python_requires` parameter in the `setup.py` files. These files are required to set up the **ML-Agents** library and can be found in the following locations:\n",
"  - `/content/ml-agents/ml-agents/setup.py`\n",
"  - `/content/ml-agents/ml-agents-envs/setup.py`\n",
"\n",
"- Colab's current Python version (check it with `!python --version`) doesn't match the library's `python_requires` parameter. As a result, installation may silently fail and lead to errors like these when you run the later commands:\n",
"  - `/bin/bash: line 1: mlagents-learn: command not found`\n",
"  - `/bin/bash: line 1: mlagents-push-to-hf: command not found`\n",
"\n",
"- To resolve this, we'll create a virtual environment with a Python version compatible with the **ML-Agents** library.\n",
"\n",
"`Note:` *For future compatibility, always check the `python_requires` parameter in the installation files and, if Colab's Python version is not compatible, set your virtual environment to the maximum supported Python version in the script below.*"
]
},
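The version check described above can be sketched in a few lines of plain Python. This is only an illustration: the bounds `(3, 10, 1)` and `(3, 10, 12)` are assumed values standing in for whatever `python_requires` says in your copy of `setup.py`, and `is_supported` is a hypothetical helper, not part of ML-Agents.

```python
import sys

# Assumed bounds, for illustration only. Read the real ones from the
# `python_requires` parameter in /content/ml-agents/ml-agents/setup.py.
MIN_VERSION = (3, 10, 1)
MAX_VERSION = (3, 10, 12)


def is_supported(version, minimum=MIN_VERSION, maximum=MAX_VERSION):
    """Return True if (major, minor, micro) lies within the bounds, inclusive."""
    return minimum <= tuple(version[:3]) <= maximum


if __name__ == "__main__":
    current = sys.version_info[:3]
    label = ".".join(str(part) for part in current)
    status = "inside" if is_supported(current) else "outside"
    print(f"Python {label} is {status} the assumed supported range")
```

If the current interpreter falls outside the range, that is the situation in which the virtual environment set up in the next cells is needed.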

{
"cell_type": "code",
"execution_count": null,
"metadata": {},
"outputs": [],
"source": [
"# Colab's Current Python Version (Incompatible with ML-Agents)\n",
"!python --version"
]
},
{
"cell_type": "code",
"execution_count": null,
"metadata": {},
"outputs": [],
"source": [
"# Install virtualenv and create a virtual environment\n",
"!pip install virtualenv\n",
"!virtualenv myenv\n",
"\n",
"# Download and install Miniconda\n",
"!wget https://repo.anaconda.com/miniconda/Miniconda3-latest-Linux-x86_64.sh\n",
"!chmod +x Miniconda3-latest-Linux-x86_64.sh\n",
"!./Miniconda3-latest-Linux-x86_64.sh -b -f -p /usr/local\n",
"\n",
"# Activate Miniconda and install Python ver 3.10.12\n",
"!source /usr/local/bin/activate\n",
"!conda install -q -y --prefix /usr/local python=3.10.12 ujson # Specify the version here\n",
"\n",
"# Set environment variables for Python and conda paths\n",
"!export PYTHONPATH=/usr/local/lib/python3.10/site-packages/\n",
"!export CONDA_PREFIX=/usr/local/envs/myenv"
]
},
{
"cell_type": "code",
"execution_count": null,
"metadata": {},
"outputs": [],
"source": [
"# Python Version in New Virtual Environment (Compatible with ML-Agents)\n",
"!python --version"
]
},

{
"cell_type": "markdown",
"metadata": {},
"source": [
"## Installing the dependencies 🔽"
]
},
{
"cell_type": "code",
"execution_count": null,
"metadata": {},
"outputs": [],
"source": [
"%%capture\n",
"# Go inside the repository and install the package (can take 3min)\n",
"%cd ml-agents\n",
"!pip3 install -e ./ml-agents-envs\n",
"!pip3 install -e ./ml-agents"
]
},
{
"cell_type": "markdown",
"metadata": {
"id": "R5_7Ptd_kEcG"
},
"source": [
"## SnowballTarget ⛄\n",
"\n",
"If you need a refresher on how this environment works, check this section 👉\n",
"https://huggingface.co/deep-rl-course/unit5/snowball-target"
]
},

{
"cell_type": "markdown",
"metadata": {
"id": "HRY5ufKUKfhI"
},
"source": [
"### Download and move the environment zip file to `./training-envs-executables/linux/`\n",
"- Our environment executable is in a zip file.\n",
"- We need to download it and place it in `./training-envs-executables/linux/`\n",
"- We use a Linux executable because we use Colab, and the Colab machines' OS is Ubuntu (Linux)"
]
},
{
"cell_type": "code",
"execution_count": null,
"metadata": {
"id": "C9Ls6_6eOKiA"
},
"outputs": [],
"source": [
"# Here, we create training-envs-executables and linux\n",
"!mkdir ./training-envs-executables\n",
"!mkdir ./training-envs-executables/linux"
]
},
{
"cell_type": "markdown",
"metadata": {
"id": "ekSh8LWawkB5"
},
"source": [
"We download the file SnowballTarget.zip from https://github.com/huggingface/Snowball-Target using `wget`"
]
},
{
"cell_type": "code",
"execution_count": null,
"metadata": {
"id": "6LosWO50wa77"
},
"outputs": [],
"source": [
"!wget \"https://github.com/huggingface/Snowball-Target/raw/main/SnowballTarget.zip\" -O ./training-envs-executables/linux/SnowballTarget.zip"
]
},
{
"cell_type": "markdown",
"metadata": {
"id": "_LLVaEEK3ayi"
},
"source": [
"We unzip the `SnowballTarget.zip` file"
]
},
{
"cell_type": "code",
"execution_count": null,
"metadata": {
"id": "8FPx0an9IAwO"
},
"outputs": [],
"source": [
"%%capture\n",
"!unzip -d ./training-envs-executables/linux/ ./training-envs-executables/linux/SnowballTarget.zip"
]
},
{
"cell_type": "markdown",
"metadata": {
"id": "nyumV5XfPKzu"
},
"source": [
"Make sure your file is accessible"
]
},
{
"cell_type": "code",
"execution_count": null,
"metadata": {
"id": "EdFsLJ11JvQf"
},
"outputs": [],
"source": [
"!chmod -R 755 ./training-envs-executables/linux/SnowballTarget"
]
},
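If you want to confirm from Python that the `chmod` step worked, a minimal sketch could look like the one below. Both `is_executable` and `make_executable` are hypothetical helper names introduced here for illustration; they only use the standard library.

```python
import os
import stat
from pathlib import Path


def is_executable(path):
    """Return True if `path` is an existing file the current user may execute."""
    p = Path(path)
    return p.is_file() and os.access(p, os.X_OK)


def make_executable(path):
    """Add the execute bit for user, group and others (like `chmod a+x`)."""
    p = Path(path)
    p.chmod(p.stat().st_mode | stat.S_IXUSR | stat.S_IXGRP | stat.S_IXOTH)
```

Pass the path of the unpacked environment binary to `is_executable`; if it returns `False`, the environment will most likely fail to launch during training.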

{
"cell_type": "markdown",
"metadata": {
"id": "NAuEq32Mwvtz"
},
"source": [
"### Define the SnowballTarget config file\n",
"- In ML-Agents, you define the **training hyperparameters in `config.yaml` files.**\n",
"\n",
"There are multiple hyperparameters. To understand them better, read the explanation of each one in [the documentation](https://github.com/Unity-Technologies/ml-agents/blob/release_20_docs/docs/Training-Configuration-File.md)\n",
"\n",
"\n",
"So you need to create a `SnowballTarget.yaml` config file in `/content/ml-agents/config/ppo/`\n",
"\n",
"We'll give you a first version of this config here (to copy and paste into your `SnowballTarget.yaml` file), **but you should modify it**.\n",
"\n",
"```\n",
"behaviors:\n",
"  SnowballTarget:\n",
"    trainer_type: ppo\n",
"    summary_freq: 10000\n",
"    keep_checkpoints: 10\n",
"    checkpoint_interval: 50000\n",
"    max_steps: 200000\n",
"    time_horizon: 64\n",
"    threaded: false\n",
"    hyperparameters:\n",
"      learning_rate: 0.0003\n",
"      learning_rate_schedule: linear\n",
"      batch_size: 128\n",
"      buffer_size: 2048\n",
"      beta: 0.005\n",
"      epsilon: 0.2\n",
"      lambd: 0.95\n",
"      num_epoch: 3\n",
"    network_settings:\n",
"      normalize: false\n",
"      hidden_units: 256\n",
"      num_layers: 2\n",
"      vis_encode_type: simple\n",
"    reward_signals:\n",
"      extrinsic:\n",
"        gamma: 0.99\n",
"        strength: 1.0\n",
"```"
]
},
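Instead of creating the file by hand, you could also write the config shown above from a cell. The sketch below is one possible way to do it with the standard library only; `write_config` is a hypothetical helper name, and the relative path in the usage note assumes the working directory is the cloned `ml-agents` folder.

```python
from pathlib import Path

# The starter config from the cell above, kept as a plain string.
SNOWBALL_CONFIG = """\
behaviors:
  SnowballTarget:
    trainer_type: ppo
    summary_freq: 10000
    keep_checkpoints: 10
    checkpoint_interval: 50000
    max_steps: 200000
    time_horizon: 64
    threaded: false
    hyperparameters:
      learning_rate: 0.0003
      learning_rate_schedule: linear
      batch_size: 128
      buffer_size: 2048
      beta: 0.005
      epsilon: 0.2
      lambd: 0.95
      num_epoch: 3
    network_settings:
      normalize: false
      hidden_units: 256
      num_layers: 2
      vis_encode_type: simple
    reward_signals:
      extrinsic:
        gamma: 0.99
        strength: 1.0
"""


def write_config(text, path):
    """Write `text` to `path`, creating parent folders if they are missing."""
    target = Path(path)
    target.parent.mkdir(parents=True, exist_ok=True)
    target.write_text(text, encoding="utf-8")
    return target
```

For example, `write_config(SNOWBALL_CONFIG, "./config/ppo/SnowballTarget.yaml")` would create the file that `mlagents-learn` reads later. Keeping the config in a string also makes it easy to tweak a hyperparameter and write a new version.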

{
"cell_type": "markdown",
"metadata": {
"id": "4U3sRH4N4h_l"
},
"source": [
"<img src=\"https://huggingface.co/datasets/huggingface-deep-rl-course/course-images/resolve/main/en/unit7/snowballfight_config1.png\" alt=\"Config SnowballTarget\"/>\n",
"<img src=\"https://huggingface.co/datasets/huggingface-deep-rl-course/course-images/resolve/main/en/unit7/snowballfight_config2.png\" alt=\"Config SnowballTarget\"/>"
]
},
{
"cell_type": "markdown",
"metadata": {
"id": "JJJdo_5AyoGo"
},
"source": [
"As an experiment, you should also try to modify some other hyperparameters. Unity provides very [good documentation explaining each of them here](https://github.com/Unity-Technologies/ml-agents/blob/main/docs/Training-Configuration-File.md).\n",
"\n",
"Now that you've created the config file and understand what most hyperparameters do, we're ready to train our agent 🔥."
]
},
{
"cell_type": "markdown",
"metadata": {
"id": "f9fI555bO12v"
},
"source": [
"### Train the agent\n",
"\n",
"To train our agent, we just need to **launch mlagents-learn and select the executable containing the environment.**\n",
"\n",
"We define four parameters:\n",
"\n",
"1. `mlagents-learn <config>`: the path to the hyperparameter config file.\n",
"2. `--env`: the path to the environment executable.\n",
"3. `--run-id`: the name you want to give to your training run.\n",
"4. `--no-graphics`: do not launch the visualization during training.\n",
"\n",
"<img src=\"https://huggingface.co/datasets/huggingface-deep-rl-course/course-images/resolve/main/en/unit7/mlagentslearn.png\" alt=\"MlAgents learn\"/>\n",
"\n",
"Train the model and use the `--resume` flag to continue training in case of interruption.\n",
"\n",
"> It will fail the first time if you use `--resume`; if that happens, run the cell again to bypass the error.\n",
"\n"
]
},
{
"cell_type": "markdown",
"metadata": {
"id": "lN32oWF8zPjs"
},
"source": [
"The training will take 10 to 35min depending on your config. Go take a ☕️, you deserve it 🤗."
]
},
{
"cell_type": "code",
"execution_count": null,
"metadata": {
"id": "bS-Yh1UdHfzy"
},
"outputs": [],
"source": [
"!mlagents-learn ./config/ppo/SnowballTarget.yaml --env=./training-envs-executables/linux/SnowballTarget/SnowballTarget --run-id=\"SnowballTarget1\" --no-graphics"
]
},
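To make the four parameters and the `--resume` flag easier to experiment with, the command above can also be assembled in Python. This is a sketch, not the course's method: `build_learn_command` is a hypothetical helper that only builds the argument list from the flags already described in this notebook.

```python
import shlex


def build_learn_command(config, env, run_id, no_graphics=True, resume=False):
    """Assemble the argument list for `mlagents-learn`."""
    cmd = ["mlagents-learn", config, f"--env={env}", f"--run-id={run_id}"]
    if no_graphics:
        cmd.append("--no-graphics")  # skip the visualization during training
    if resume:
        cmd.append("--resume")  # continue an interrupted run with the same id
    return cmd


cmd = build_learn_command(
    "./config/ppo/SnowballTarget.yaml",
    "./training-envs-executables/linux/SnowballTarget/SnowballTarget",
    "SnowballTarget1",
)
# Print the command as a single shell-ready line.
print(shlex.join(cmd))
```

To actually launch the training from Python, you could pass the list to `subprocess.run(cmd, check=True)`; building it as a list avoids quoting problems when a run id contains spaces.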

{
"cell_type": "markdown",
"metadata": {
"id": "5Vue94AzPy1t"
},
"source": [
"### Push the agent to the 🤗 Hub\n",
"\n",
"- Now that we've trained our agent, we’re **ready to push it to the Hub to be able to visualize it playing in your browser🔥.**"
]
},
{
"cell_type": "markdown",
"metadata": {
"id": "izT6FpgNzZ6R"
},
"source": [
"To be able to share your model with the community, there are three more steps to follow:\n",
"\n",
"1️⃣ (If it's not already done) create a Hugging Face account ➡ https://huggingface.co/join\n",
"\n",
"2️⃣ Sign in, then store your authentication token from the Hugging Face website.\n",
"- Create a new token (https://huggingface.co/settings/tokens) **with write role**\n",
"\n",
"<img src=\"https://huggingface.co/datasets/huggingface-deep-rl-course/course-images/resolve/main/en/notebooks/create-token.jpg\" alt=\"Create HF Token\">\n",
"\n",
"- Copy the token\n",
"- Run the cell below and paste the token"
]
},
{
"cell_type": "code",
"execution_count": null,
"metadata": {
"id": "rKt2vsYoK56o"
},
"outputs": [],
"source": [
"from huggingface_hub import notebook_login\n",
"notebook_login()"
]
},
{
"cell_type": "markdown",
"metadata": {
"id": "aSU9qD9_6dem"
},
"source": [
"If you're not using Google Colab or a Jupyter Notebook, use this command instead: `huggingface-cli login`"
]
},
{
"cell_type": "markdown",
"metadata": {
"id": "KK4fPfnczunT"
},
"source": [
"Then, we simply need to run `mlagents-push-to-hf`.\n",
"\n",
"And we define four parameters:\n",
"\n",
"1. `--run-id`: the name of the training run id.\n",
"2. `--local-dir`: where the agent was saved; it’s `results/<run-id name>`, so in this example `results/SnowballTarget1`.\n",
"3. `--repo-id`: the name of the Hugging Face repo you want to create or update. It’s always `<your huggingface username>/<the repo name>`.\n",
"If the repo does not exist, **it will be created automatically**.\n",
"4. `--commit-message`: since HF repos are git repositories, you need to define a commit message.\n",
"\n",
"<img src=\"https://huggingface.co/datasets/huggingface-deep-rl-course/course-images/resolve/main/en/unit7/mlagentspushtohub.png\" alt=\"Push to Hub\"/>\n",
"\n",
"For instance:\n",
"\n",
"`!mlagents-push-to-hf --run-id=\"SnowballTarget1\" --local-dir=\"./results/SnowballTarget1\" --repo-id=\"ThomasSimonini/ppo-SnowballTarget\" --commit-message=\"First Push\"`"
]
},
{
"cell_type": "code",
"execution_count": null,
"metadata": {
"id": "kAFzVB7OYj_H"
},
"outputs": [],
"source": [
"!mlagents-push-to-hf --run-id=\"SnowballTarget1\" --local-dir=\"./results/SnowballTarget1\" --repo-id=\"ThomasSimonini/ppo-SnowballTarget\" --commit-message=\"First Push\""
]
},
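Since `--local-dir` and `--repo-id` follow fixed patterns, the push command can be derived from the run id. The sketch below assumes the default `./results` folder used in this notebook; `build_push_command` is a hypothetical helper that only assembles the arguments and checks the `<username>/<repo name>` shape.

```python
def build_push_command(run_id, repo_id, commit_message, results_dir="./results"):
    """Assemble the argument list for `mlagents-push-to-hf`."""
    username, sep, repo_name = repo_id.partition("/")
    if not (username and sep and repo_name) or "/" in repo_name:
        raise ValueError("repo_id must look like '<username>/<repo name>'")
    return [
        "mlagents-push-to-hf",
        f"--run-id={run_id}",
        f"--local-dir={results_dir}/{run_id}",  # results/<run-id name>
        f"--repo-id={repo_id}",
        f"--commit-message={commit_message}",
    ]
```

Calling `build_push_command("SnowballTarget1", "ThomasSimonini/ppo-SnowballTarget", "First Push")` yields the same arguments as the cell above, and the list can be run with `subprocess.run(..., check=True)`.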

{
"cell_type": "code",
"execution_count": null,
"metadata": {
"id": "dGEFAIboLVc6"
},
"outputs": [],
"source": [
"# Replace each placeholder with your own value before running this cell\n",
"!mlagents-push-to-hf --run-id=\"<your run id>\" --local-dir=\"./results/<your run id>\" --repo-id=\"<your username>/<your repo name>\" --commit-message=\"<your commit message>\""
]
},
{
"cell_type": "markdown",
"metadata": {
"id": "yborB0850FTM"
},
"source": [
"If everything worked, you should see this at the end of the process (but with a different URL 😆):\n",
"\n",
"\n",
"\n",
"```\n",
"Your model is pushed to the hub. You can view your model here: https://huggingface.co/ThomasSimonini/ppo-SnowballTarget\n",
"```\n",
"\n",
"It’s the link to your model. It contains a model card that explains how to use it, your Tensorboard and your config file. **What’s awesome is that it’s a git repository, which means you can have different commits, update your repository with a new push, etc.**"
]
},
{
"cell_type": "markdown",
"metadata": {
"id": "5Uaon2cg0NrL"
},
"source": [
"But now comes the best part: **being able to visualize your agent online 👀.**"
]
},
{
"cell_type": "markdown",
"metadata": {
"id": "VMc4oOsE0QiZ"
},
"source": [
"### Watch your agent playing 👀\n",
"\n",
"For this step, it’s simple:\n",
"\n",
"1. Go here: https://huggingface.co/spaces/ThomasSimonini/ML-Agents-SnowballTarget\n",
"\n",
"2. Launch the game and put it in full screen by clicking on the bottom right button\n",
"\n",
"<img src=\"https://huggingface.co/datasets/huggingface-deep-rl-course/course-images/resolve/main/en/unit7/snowballtarget_load.png\" alt=\"Snowballtarget load\"/>"
]
},

{
"cell_type": "markdown",
"metadata": {
"id": "Djs8c5rR0Z8a"
},
"source": [
"1. In step 1, type your username (your username is case sensitive: for instance, my username is ThomasSimonini, not thomassimonini or ThOmasImoNInI) and click on the search button.\n",
"\n",
"2. In step 2, select your model repository.\n",
"\n",
"3. In step 3, **choose which model you want to replay**:\n",
"    - I have multiple ones, since we saved a model every 50000 timesteps.\n",
"    - But since I want the most recent one, I choose `SnowballTarget.onnx`\n",
"\n",
"👉 It’s nice **to try different model checkpoints to see the agent improve.**\n",
"\n",
"And don't hesitate to share the best score your agent gets on Discord in the #rl-i-made-this channel 🔥\n",
"\n",
"Let's now try a harder environment called Pyramids..."
]
},

{
"cell_type": "markdown",
"metadata": {
"id": "rVMwRi4y_tmx"
},
"source": [
"## Pyramids 🏆\n",
"\n",
"### Download and move the environment zip file to `./training-envs-executables/linux/`\n",
"- Our environment executable is in a zip file.\n",
"- We need to download it and place it in `./training-envs-executables/linux/`\n",
"- We use a Linux executable because we use Colab, and the Colab machines' OS is Ubuntu (Linux)"
]
},
{
"cell_type": "markdown",
"metadata": {
"id": "x2C48SGZjZYw"
},
"source": [
"We download the file Pyramids.zip from https://huggingface.co/spaces/unity/ML-Agents-Pyramids/resolve/main/Pyramids.zip using `wget`"
]
},
{
"cell_type": "code",
"execution_count": null,
"metadata": {
"id": "eWh8Pl3sjZY2"
},
"outputs": [],
"source": [
"!wget \"https://huggingface.co/spaces/unity/ML-Agents-Pyramids/resolve/main/Pyramids.zip\" -O ./training-envs-executables/linux/Pyramids.zip"
]
},
{
"cell_type": "markdown",
"metadata": {
"id": "V5LXPOPujZY3"
},
"source": [
"We unzip the `Pyramids.zip` file"
]
},
{
"cell_type": "code",
"execution_count": null,
"metadata": {
"id": "SmNgFdXhjZY3"
},
"outputs": [],
"source": [
"%%capture\n",
"!unzip -d ./training-envs-executables/linux/ ./training-envs-executables/linux/Pyramids.zip"
]
},
{
"cell_type": "markdown",
"metadata": {
"id": "T1jxwhrJjZY3"
},
"source": [
"Make sure your file is accessible"
]
},
{
"cell_type": "code",
"execution_count": null,
"metadata": {
"id": "6fDd03btjZY3"
},
"outputs": [],
"source": [
"!chmod -R 755 ./training-envs-executables/linux/Pyramids/Pyramids"
]
},

{
"cell_type": "markdown",
"metadata": {
"id": "fqceIATXAgih"
},
"source": [
"### Modify the PyramidsRND config file\n",
"- Contrary to the first environment, which was a custom one, **Pyramids was made by the Unity team**.\n",
"- So the PyramidsRND config file already exists and is in `/content/ml-agents/config/ppo/PyramidsRND.yaml`\n",
"- You might ask why \"RND\" is in PyramidsRND. RND stands for *random network distillation*; it's a way to generate curiosity rewards. If you want to know more about it, we wrote an article explaining this technique: https://medium.com/data-from-the-trenches/curiosity-driven-learning-through-random-network-distillation-488ffd8e5938\n",
"\n",
"For this training, we’ll modify one thing:\n",
"- The total training steps hyperparameter is too high since we can hit the benchmark (mean reward = 1.75) in only 1M training steps.\n",
"👉 To do that, we go to `config/ppo/PyramidsRND.yaml` **and change `max_steps` to 1000000.**\n",
"\n",
"<img src=\"https://huggingface.co/datasets/huggingface-deep-rl-course/course-images/resolve/main/en/unit7/pyramids-config.png\" alt=\"Pyramids config\"/>"
]
},
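If you prefer not to edit the file by hand, the `max_steps` change can be sketched with a small text substitution. This is only one possible approach: `set_max_steps` is a hypothetical helper, it works on the raw YAML text with a regular expression rather than a YAML parser, and the sample text below (including the value `3000000`) is an assumed stand-in for the real file content.

```python
import re


def set_max_steps(yaml_text, new_value):
    """Return `yaml_text` with every `max_steps:` value replaced by `new_value`."""
    pattern = re.compile(r"^(\s*max_steps:\s*)\d+", flags=re.MULTILINE)
    updated, count = pattern.subn(lambda m: f"{m.group(1)}{new_value}", yaml_text)
    if count == 0:
        raise ValueError("no max_steps entry found")
    return updated


# Assumed sample, standing in for the content of config/ppo/PyramidsRND.yaml.
sample = "behaviors:\n  Pyramids:\n    max_steps: 3000000\n    time_horizon: 128\n"
print(set_max_steps(sample, 1000000))
```

On the real file, you would read it with `pathlib.Path("config/ppo/PyramidsRND.yaml").read_text()`, pass the text through `set_max_steps`, and write the result back with `write_text`.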

{
"cell_type": "markdown",
"metadata": {
"id": "RI-5aPL7BWVk"
},
"source": [
"As an experiment, you should also try to modify some other hyperparameters. Unity provides very [good documentation explaining each of them here](https://github.com/Unity-Technologies/ml-agents/blob/main/docs/Training-Configuration-File.md).\n",
"\n",
"We’re now ready to train our agent 🔥."
]
},
{
"cell_type": "markdown",
"metadata": {
"id": "s5hr1rvIBdZH"
},
"source": [
"### Train the agent\n",
"\n",
"The training will take 30 to 45min depending on your machine. Go take a ☕️, you deserve it 🤗."
]
},
{
"cell_type": "code",
"execution_count": null,
"metadata": {
"id": "fXi4-IaHBhqD"
},
"outputs": [],
"source": [
"!mlagents-learn ./config/ppo/PyramidsRND.yaml --env=./training-envs-executables/linux/Pyramids/Pyramids --run-id=\"Pyramids Training\" --no-graphics"
]
},
{
"cell_type": "markdown",
"metadata": {
"id": "txonKxuSByut"
},
"source": [
"### Push the agent to the 🤗 Hub\n",
"\n",
"- Now that we've trained our agent, we’re **ready to push it to the Hub to be able to visualize it playing in your browser🔥.**"
]
},
{
"cell_type": "code",
"execution_count": null,
"metadata": {
"id": "yiEQbv7rB4mU"
},
"outputs": [],
"source": [
"# Replace each placeholder with your own value before running this cell\n",
"!mlagents-push-to-hf --run-id=\"<your run id>\" --local-dir=\"./results/<your run id>\" --repo-id=\"<your username>/<your repo name>\" --commit-message=\"<your commit message>\""
]
},
{
"cell_type": "markdown",
"metadata": {
"id": "7aZfgxo-CDeQ"
},
"source": [
"### Watch your agent playing 👀\n",
"\n",
"👉 https://huggingface.co/spaces/unity/ML-Agents-Pyramids"
]
},

|

||

{

|

||

"cell_type": "markdown",

|

||

"metadata": {

|

||

"id": "hGG_oq2n0wjB"

|

||

},

|

||

"source": [

|

||

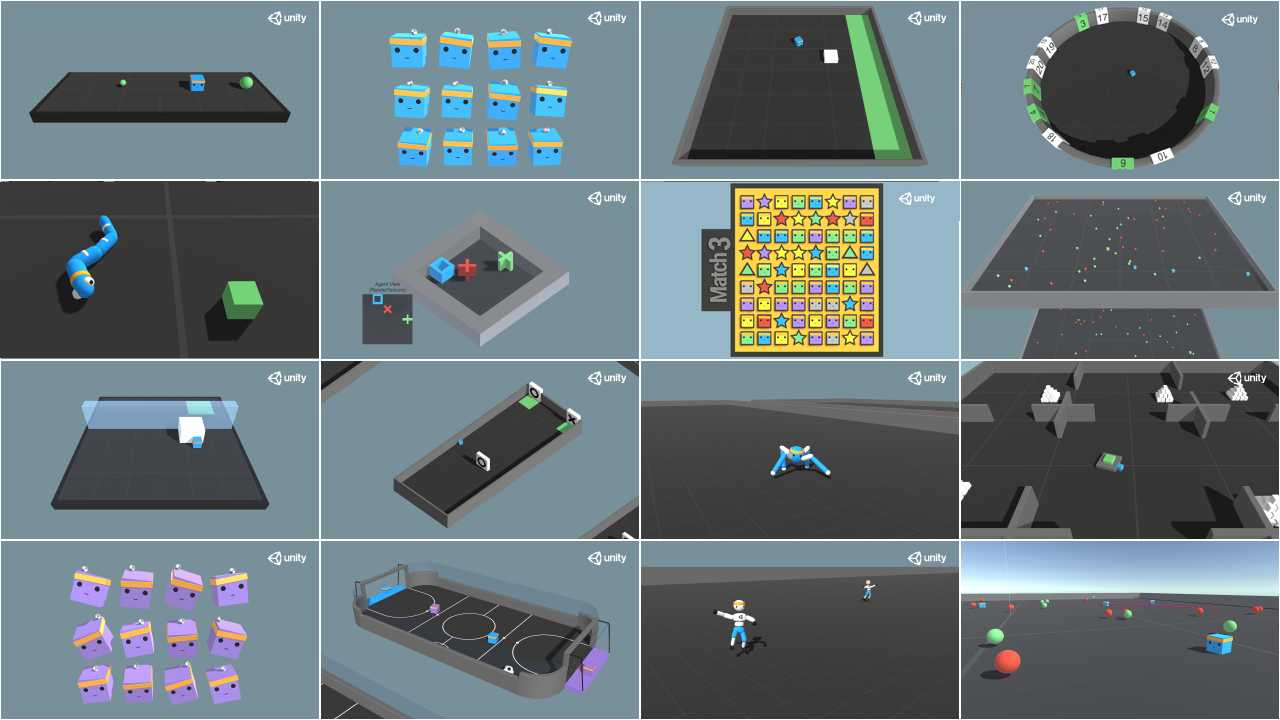

"### 🎁 Bonus: Why not train on another environment?\n",

|

||

"Now that you know how to train an agent using MLAgents, **why not try another environment?**\n",

|

||

"\n",

|

||

"MLAgents provides 17 different and we’re building some custom ones. The best way to learn is to try things of your own, have fun.\n",

|

||

"\n"

|

||

]

|

||

},

|

||

{

|

||

"cell_type": "markdown",

|

||

"metadata": {

|

||

"id": "KSAkJxSr0z6-"

|

||

},

|

||

"source": [

|

||

""

|

||

]

|

||

},

|

||

{

|

||

"cell_type": "markdown",

|

||

"metadata": {

|

||

"id": "YiyF4FX-04JB"

|

||

},

|

||

"source": [

|

||

"You have the full list of the Unity official environments here 👉 https://github.com/Unity-Technologies/ml-agents/blob/develop/docs/Learning-Environment-Examples.md\n",

|

||

"\n",

|

||

"For the demos to visualize your agent 👉 https://huggingface.co/unity\n",

|

||

"\n",

|

||

"For now we have integrated:\n",

|

||

"- [Worm](https://huggingface.co/spaces/unity/ML-Agents-Worm) demo where you teach a **worm to crawl**.\n",

|

||

"- [Walker](https://huggingface.co/spaces/unity/ML-Agents-Walker) demo where you teach an agent **to walk towards a goal**."

|

||

]

|

||

},

|

||

{

|

||

"cell_type": "markdown",

|

||

"metadata": {

|

||

"id": "PI6dPWmh064H"

|

||

},

|

||

"source": [

|

||

"That’s all for today. Congrats on finishing this tutorial!\n",

|

||

"\n",

|

||

"The best way to learn is to practice and try stuff. Why not try another environment? ML-Agents has 17 different environments, but you can also create your own? Check the documentation and have fun!\n",

|

||

"\n",

|

||

"See you on Unit 6 🔥,\n",

|

||

"\n",

|

||

"## Keep Learning, Stay awesome 🤗"

|

||

]

|

||

}

],
"metadata": {
"accelerator": "GPU",
"colab": {
"include_colab_link": true,
"private_outputs": true,
"provenance": []
},
"gpuClass": "standard",
"kernelspec": {
"display_name": "Python 3",
"name": "python3"
},
"language_info": {
"name": "python"
}
},
"nbformat": 4,
"nbformat_minor": 0
}