Mirror of https://github.com/babysor/Realtime-Voice-Clone-Chinese.git

synced 2026-05-12 11:35:56 +08:00

add instruction to use pretrained models

@@ -27,8 +27,10 @@

* Install [ffmpeg](https://ffmpeg.org/download.html#get-packages).

* Run `pip install -r requirements.txt` to install the remaining necessary packages.
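
To confirm that ffmpeg actually ended up on your `PATH` before going further, a small check like the one below can help. This is a minimal sketch, not part of the project: the helper name `missing_tools` is hypothetical, and it only uses the standard library's `shutil.which`.

```python
import shutil


def missing_tools(names):
    """Return the executables from `names` that cannot be found on PATH.

    Hypothetical helper for illustration; not part of this repository.
    """
    # shutil.which returns None when the executable is not on PATH
    return [name for name in names if shutil.which(name) is None]


if __name__ == "__main__":
    missing = missing_tools(["ffmpeg"])
    if missing:
        print("Not found on PATH:", ", ".join(missing))
    else:
        print("ffmpeg found on PATH")
```

Checking the `PATH` (rather than a fixed install location) matches how most tools look up `ffmpeg` at runtime.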

### 2. Train synthesizer with aidatatang_200zh

### 2. Reuse the pretrained encoder/vocoder

* Download the pretrained models from the page below and extract them into the root directory of this project.

https://github.com/CorentinJ/Real-Time-Voice-Cloning/wiki/Pretrained-models
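
After extracting, you can check that the encoder and vocoder weights landed where the code will look for them. The sketch below assumes the archive produces `encoder/saved_models/pretrained.pt` and `vocoder/saved_models/pretrained/pretrained.pt` under the project root; those paths are an assumption, so compare them against the wiki page for the release you downloaded. The helper `missing_models` is hypothetical.

```python
from pathlib import Path

# ASSUMPTION: file layout expected after extracting the pretrained archive into
# the project root. Verify these paths against the wiki for your download.
EXPECTED_MODELS = [
    Path("encoder/saved_models/pretrained.pt"),
    Path("vocoder/saved_models/pretrained/pretrained.pt"),
]


def missing_models(project_root, expected=EXPECTED_MODELS):
    """Return the expected model files that are not present under project_root.

    Hypothetical helper for illustration; not part of this repository.
    """
    root = Path(project_root)
    return [path for path in expected if not (root / path).is_file()]


if __name__ == "__main__":
    missing = missing_models(".")
    for path in missing:
        print("missing:", path)
    if not missing:
        print("pretrained encoder and vocoder found")
```

Run it from the project root; an empty result means both files are in place.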

### 3. Train synthesizer with aidatatang_200zh

* Download the aidatatang_200zh dataset and unzip it. Make sure you can access all the .wav files in the *train* folder.

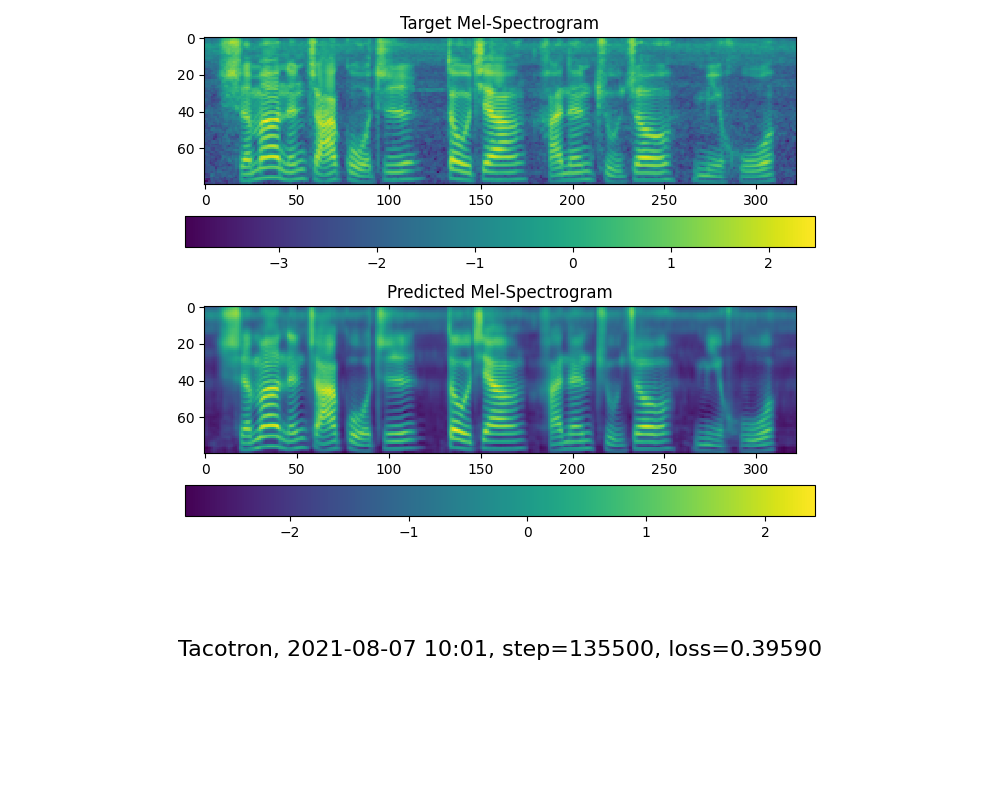

* Preprocess the audio and the mel spectrograms:

`python synthesizer_preprocess_audio.py <datasets_root>`
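
The two steps above (making the .wav files in *train* reachable, then running the preprocessing script) can be sketched in Python as follows. This sketch assumes the dataset sits at `<datasets_root>/aidatatang_200zh/corpus/train` and ships one `.tar.gz` archive per speaker that still needs unpacking; both the layout and the helper names `prepare_train_dir` and `preprocess_command` are assumptions for illustration, not the project's own code.

```python
import subprocess
import sys
import tarfile
from pathlib import Path


def prepare_train_dir(datasets_root):
    """Extract per-speaker archives in the train folder and return the .wav count.

    ASSUMPTION: the dataset lives at <datasets_root>/aidatatang_200zh/corpus/train
    and contains one .tar.gz archive per speaker. Adjust the path if yours differs.
    """
    train_dir = Path(datasets_root) / "aidatatang_200zh" / "corpus" / "train"
    for archive in sorted(train_dir.glob("*.tar.gz")):
        with tarfile.open(archive, "r:gz") as tar:
            # Only extract archives from a source you trust.
            tar.extractall(path=train_dir)
    return sum(1 for _ in train_dir.rglob("*.wav"))


def preprocess_command(datasets_root):
    """Build the preprocessing command from the step above as an argument list."""
    return [sys.executable, "synthesizer_preprocess_audio.py", str(datasets_root)]


if __name__ == "__main__" and len(sys.argv) > 1:
    root = sys.argv[1]
    n_wavs = prepare_train_dir(root)
    if n_wavs == 0:
        sys.exit("no .wav files found under the train folder")
    print(f"found {n_wavs} .wav files")
    subprocess.run(preprocess_command(root), check=True)
```

Passing an argument list to `subprocess.run` (instead of a shell string) avoids quoting problems when `<datasets_root>` contains spaces, and `check=True` stops on a non-zero exit from the preprocessing script.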

@@ -44,7 +46,7 @@

### 3. Launch the Toolbox

### 4. Launch the Toolbox

You can then try the toolbox:

`python demo_toolbox.py -d <datasets_root>`
