mirror of

https://github.com/huggingface/deep-rl-class.git

synced 2026-04-25 03:11:12 +08:00

Merge branch 'main' into ThomasSimonini/CertificationAndNext

@@ -13,7 +13,7 @@ This repository contains the Deep Reinforcement Learning Course mdx files and no

|

||||

<br>

|

||||

<br>

|

||||

|

||||

# The documentation below is for v1.0 (depreciated)

|

||||

# The documentation below is for v1.0 (deprecated)

|

||||

|

||||

We're launching a **new version (v2.0) of the course starting December the 5th,**

|

||||

|

||||

@@ -26,7 +26,7 @@ The syllabus 📚: https://simoninithomas.github.io/deep-rl-course

|

||||

<br>

|

||||

<br>

|

||||

|

||||

# The documentation below is for v1.0 (depreciated)

|

||||

# The documentation below is for v1.0 (deprecated)

|

||||

|

||||

In this free course, you will:

|

||||

|

||||

|

||||

@@ -509,7 +509,7 @@

|

||||

"\n",

|

||||

"This step is the simplest:\n",

|

||||

"\n",

|

||||

"- Open the game Huggy in your browser: https://huggingface.co/spaces/ThomasSimonini/Huggy\n",

|

||||

"- Open the game Huggy in your browser: https://singularite.itch.io/huggy\n",

|

||||

"\n",

|

||||

"- Click on Play with my Huggy model\n",

|

||||

"\n",

|

||||

@@ -569,4 +569,4 @@

|

||||

},

|

||||

"nbformat": 4,

|

||||

"nbformat_minor": 0

|

||||

}

|

||||

}

|

||||

|

||||

@@ -230,15 +230,6 @@

|

||||

"execution_count": null,

|

||||

"outputs": []

|

||||

},

|

||||

{

|

||||

"cell_type": "markdown",

|

||||

"source": [

|

||||

"TODO CHANGE LINK OF THE REQUIREMENTS"

|

||||

],

|

||||

"metadata": {

|

||||

"id": "32e3NPYgH5ET"

|

||||

}

|

||||

},

|

||||

{

|

||||

"cell_type": "code",

|

||||

"execution_count": null,

|

||||

@@ -1155,4 +1146,4 @@

|

||||

},

|

||||

"nbformat": 4,

|

||||

"nbformat_minor": 0

|

||||

}

|

||||

}

|

||||

|

||||

@@ -127,7 +127,7 @@

|

||||

"source": [

|

||||

"# Let's train a Deep Q-Learning agent playing Atari' Space Invaders 👾 and upload it to the Hub.\n",

|

||||

"\n",

|

||||

"To validate this hands-on for the certification process, you need to push your trained model to the Hub and **get a result of >= 500**.\n",

|

||||

"To validate this hands-on for the certification process, you need to push your trained model to the Hub and **get a result of >= 200**.\n",

|

||||

"\n",

|

||||

"To find your result, go to the leaderboard and find your model, **the result = mean_reward - std of reward**\n",

|

||||

"\n",

|

||||
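The certification rule in this hunk (result = mean_reward - std of reward, compared against the threshold of 200) can be checked by hand. A minimal sketch, assuming the standard deviation is the population one (that choice is an assumption, not stated in the notebook); the reward values are made up for illustration:

```python
from statistics import mean, pstdev


def leaderboard_result(episode_rewards):
    """Return mean_reward - std of reward for a list of episode rewards."""
    # Assumption: population standard deviation; the notebook does not say which one.
    return mean(episode_rewards) - pstdev(episode_rewards)


# Hypothetical evaluation rewards, for illustration only.
rewards = [300.0, 500.0, 400.0, 400.0]
result = leaderboard_result(rewards)
passes = result >= 200  # threshold taken from the updated notebook text
print(f"result = {result:.2f}, passes = {passes}")
```

A high mean is not enough on its own: a large spread between episodes lowers the result.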

@@ -799,4 +799,4 @@

|

||||

},

|

||||

"nbformat": 4,

|

||||

"nbformat_minor": 0

|

||||

}

|

||||

}

|

||||

|

||||

6

notebooks/unit4/requirements-unit4.txt

Normal file

@@ -0,0 +1,6 @@

|

||||

gym

|

||||

git+https://github.com/ntasfi/PyGame-Learning-Environment.git

|

||||

git+https://github.com/qlan3/gym-games.git

|

||||

huggingface_hub

|

||||

imageio-ffmpeg

|

||||

pyyaml==6.0

|

||||

1614

notebooks/unit4/unit4.ipynb

Normal file

File diff suppressed because it is too large

844

notebooks/unit5/unit5.ipynb

Normal file

@@ -0,0 +1,844 @@

|

||||

{

|

||||

"cells": [

|

||||

{

|

||||

"cell_type": "markdown",

|

||||

"metadata": {

|

||||

"id": "view-in-github",

|

||||

"colab_type": "text"

|

||||

},

|

||||

"source": [

|

||||

"<a href=\"https://colab.research.google.com/github/huggingface/deep-rl-class/blob/ThomasSimonini%2FMLAgents/notebooks/unit5/unit5.ipynb\" target=\"_parent\"><img src=\"https://colab.research.google.com/assets/colab-badge.svg\" alt=\"Open In Colab\"/></a>"

|

||||

]

|

||||

},

|

||||

{

|

||||

"cell_type": "markdown",

|

||||

"metadata": {

|

||||

"id": "2D3NL_e4crQv"

|

||||

},

|

||||

"source": [

|

||||

"# Unit 5: An Introduction to ML-Agents\n",

|

||||

"\n"

|

||||

]

|

||||

},

|

||||

{

|

||||

"cell_type": "markdown",

|

||||

"source": [

|

||||

"<img src=\"https://huggingface.co/datasets/huggingface-deep-rl-course/course-images/resolve/main/en/unit7/thumbnail.png\" alt=\"Thumbnail\"/>\n",

|

||||

"\n",

|

||||

"In this notebook, you'll learn about ML-Agents and train two agents.\n",

|

||||

"\n",

|

||||

"- The first one will learn to **shoot snowballs onto spawning targets**.\n",

|

||||

"- The second will need to press a button to spawn a pyramid, then navigate to the pyramid, knock it over, **and move to the gold brick at the top**. To do that, it will need to explore its environment, and we will use a technique called curiosity.\n",

|

||||

"\n",

|

||||

"After that, you'll be able **to watch your agents playing directly on your browser**.\n",

|

||||

"\n",

|

||||

"For more information about the certification process, check this section 👉 https://huggingface.co/deep-rl-course/en/unit0/introduction#certification-process"

|

||||

],

|

||||

"metadata": {

|

||||

"id": "97ZiytXEgqIz"

|

||||

}

|

||||

},

|

||||

{

|

||||

"cell_type": "markdown",

|

||||

"source": [

|

||||

"⬇️ Here is an example of what **you will achieve at the end of this unit.** ⬇️\n"

|

||||

],

|

||||

"metadata": {

|

||||

"id": "FMYrDriDujzX"

|

||||

}

|

||||

},

|

||||

{

|

||||

"cell_type": "markdown",

|

||||

"source": [

|

||||

"<img src=\"https://huggingface.co/datasets/huggingface-deep-rl-course/course-images/resolve/main/en/unit7/pyramids.gif\" alt=\"Pyramids\"/>\n",

|

||||

"\n",

|

||||

"<img src=\"https://huggingface.co/datasets/huggingface-deep-rl-course/course-images/resolve/main/en/unit7/snowballtarget.gif\" alt=\"SnowballTarget\"/>"

|

||||

],

|

||||

"metadata": {

|

||||

"id": "cBmFlh8suma-"

|

||||

}

|

||||

},

|

||||

{

|

||||

"cell_type": "markdown",

|

||||

"source": [

|

||||

"### 🎮 Environments: \n",

|

||||

"\n",

|

||||

"- [Pyramids](https://github.com/Unity-Technologies/ml-agents/blob/main/docs/Learning-Environment-Examples.md#pyramids)\n",

|

||||

"- SnowballTarget\n",

|

||||

"\n",

|

||||

"### 📚 RL-Library: \n",

|

||||

"\n",

|

||||

"- [ML-Agents (HuggingFace Experimental Version)](https://github.com/huggingface/ml-agents)\n",

|

||||

"\n",

|

||||

"⚠ We're going to use an experimental version of ML-Agents where you can push Unity ML-Agents models to the Hub and load them from the Hub, so **you need to install the same version**."

|

||||

],

|

||||

"metadata": {

|

||||

"id": "A-cYE0K5iL-w"

|

||||

}

|

||||

},

|

||||

{

|

||||

"cell_type": "markdown",

|

||||

"source": [

|

||||

"We're constantly trying to improve our tutorials, so **if you find some issues in this notebook**, please [open an issue on the GitHub Repo](https://github.com/huggingface/deep-rl-class/issues)."

|

||||

],

|

||||

"metadata": {

|

||||

"id": "qEhtaFh9i31S"

|

||||

}

|

||||

},

|

||||

{

|

||||

"cell_type": "markdown",

|

||||

"source": [

|

||||

"## Objectives of this notebook 🏆\n",

|

||||

"\n",

|

||||

"At the end of the notebook, you will:\n",

|

||||

"\n",

|

||||

"- Understand how **ML-Agents**, the environment library, works.\n",

|

||||

"- Be able to **train agents in Unity Environments**.\n"

|

||||

],

|

||||

"metadata": {

|

||||

"id": "j7f63r3Yi5vE"

|

||||

}

|

||||

},

|

||||

{

|

||||

"cell_type": "markdown",

|

||||

"source": [

|

||||

"## This notebook is from the Deep Reinforcement Learning Course\n",

|

||||

"<img src=\"https://huggingface.co/datasets/huggingface-deep-rl-course/course-images/resolve/main/en/notebooks/deep-rl-course-illustration.jpg\" alt=\"Deep RL Course illustration\"/>"

|

||||

],

|

||||

"metadata": {

|

||||

"id": "viNzVbVaYvY3"

|

||||

}

|

||||

},

|

||||

{

|

||||

"cell_type": "markdown",

|

||||

"metadata": {

|

||||

"id": "6p5HnEefISCB"

|

||||

},

|

||||

"source": [

|

||||

"In this free course, you will:\n",

|

||||

"\n",

|

||||

"- 📖 Study Deep Reinforcement Learning in **theory and practice**.\n",

|

||||

"- 🧑💻 Learn to **use famous Deep RL libraries** such as Stable Baselines3, RL Baselines3 Zoo, CleanRL and Sample Factory 2.0.\n",

|

||||

"- 🤖 Train **agents in unique environments** \n",

|

||||

"\n",

|

||||

"And more! Check 📚 the syllabus 👉 https://huggingface.co/deep-rl-course/communication/publishing-schedule\n",

|

||||

"\n",

|

||||

"Don’t forget to **<a href=\"http://eepurl.com/ic5ZUD\">sign up to the course</a>** (we are collecting your email to be able to **send you the links when each Unit is published and give you information about the challenges and updates).**\n",

|

||||

"\n",

|

||||

"\n",

|

||||

"The best way to keep in touch is to join our discord server to exchange with the community and with us 👉🏻 https://discord.gg/ydHrjt3WP5"

|

||||

]

|

||||

},

|

||||

{

|

||||

"cell_type": "markdown",

|

||||

"metadata": {

|

||||

"id": "Y-mo_6rXIjRi"

|

||||

},

|

||||

"source": [

|

||||

"## Prerequisites 🏗️\n",

|

||||

"Before diving into the notebook, you need to:\n",

|

||||

"\n",

|

||||

"🔲 📚 **Study [what is ML-Agents and how it works by reading Unit 5](https://huggingface.co/deep-rl-course/unit5/introduction)** 🤗 "

|

||||

]

|

||||

},

|

||||

{

|

||||

"cell_type": "markdown",

|

||||

"source": [

|

||||

"# Let's train our agents 🚀\n",

|

||||

"\n",

|

||||

"The ML-Agents integration on the Hub is **still experimental**, some features will be added in the future. \n",

|

||||

"\n",

|

||||

"But for now, **to validate this hands-on for the certification process, you just need to push your trained models to the Hub**. There are no results to attain to validate this one. But if you want to get nice results, you can try to attain:\n",

|

||||

"\n",

|

||||

"- For `Pyramids` : Mean Reward = 1.75\n",

|

||||

"- For `SnowballTarget` : Mean Reward = 15 or 30 targets hit in an episode.\n"

|

||||

],

|

||||

"metadata": {

|

||||

"id": "xYO1uD5Ujgdh"

|

||||

}

|

||||

},

|

||||

{

|

||||

"cell_type": "markdown",

|

||||

"source": [

|

||||

"## Set the GPU 💪\n",

|

||||

"- To **accelerate the agent's training, we'll use a GPU**. To do that, go to `Runtime > Change Runtime type`\n",

|

||||

"\n",

|

||||

"<img src=\"https://huggingface.co/datasets/huggingface-deep-rl-course/course-images/resolve/main/en/notebooks/gpu-step1.jpg\" alt=\"GPU Step 1\">"

|

||||

],

|

||||

"metadata": {

|

||||

"id": "DssdIjk_8vZE"

|

||||

}

|

||||

},

|

||||

{

|

||||

"cell_type": "markdown",

|

||||

"source": [

|

||||

"- `Hardware Accelerator > GPU`\n",

|

||||

"\n",

|

||||

"<img src=\"https://huggingface.co/datasets/huggingface-deep-rl-course/course-images/resolve/main/en/notebooks/gpu-step2.jpg\" alt=\"GPU Step 2\">"

|

||||

],

|

||||

"metadata": {

|

||||

"id": "sTfCXHy68xBv"

|

||||

}

|

||||

},

|

||||

{

|

||||

"cell_type": "markdown",

|

||||

"metadata": {

|

||||

"id": "an3ByrXYQ4iK"

|

||||

},

|

||||

"source": [

|

||||

"## Clone the repository and install the dependencies 🔽\n",

|

||||

"- We need to clone the repository, that **contains the experimental version of the library that allows you to push your trained agent to the Hub.**"

|

||||

]

|

||||

},

|

||||

{

|

||||

"cell_type": "code",

|

||||

"execution_count": null,

|

||||

"metadata": {

|

||||

"id": "6WNoL04M7rTa"

|

||||

},

|

||||

"outputs": [],

|

||||

"source": [

|

||||

"%%capture\n",

|

||||

"# Clone the repository\n",

|

||||

"!git clone --depth 1 https://github.com/huggingface/ml-agents/ "

|

||||

]

|

||||

},

|

||||

{

|

||||

"cell_type": "code",

|

||||

"execution_count": null,

|

||||

"metadata": {

|

||||

"id": "d8wmVcMk7xKo"

|

||||

},

|

||||

"outputs": [],

|

||||

"source": [

|

||||

"%%capture\n",

|

||||

"# Go inside the repository and install the package\n",

|

||||

"%cd ml-agents\n",

|

||||

"!pip3 install -e ./ml-agents-envs\n",

|

||||

"!pip3 install -e ./ml-agents"

|

||||

]

|

||||

},

|

||||

{

|

||||

"cell_type": "markdown",

|

||||

"source": [

|

||||

"## SnowballTarget ⛄\n",

|

||||

"\n",

|

||||

"If you need a refresher on how this environment works, check this section 👉\n",

|

||||

"https://huggingface.co/deep-rl-course/unit5/snowball-target"

|

||||

],

|

||||

"metadata": {

|

||||

"id": "R5_7Ptd_kEcG"

|

||||

}

|

||||

},

|

||||

{

|

||||

"cell_type": "markdown",

|

||||

"metadata": {

|

||||

"id": "HRY5ufKUKfhI"

|

||||

},

|

||||

"source": [

|

||||

"### Download and move the environment zip file in `./training-envs-executables/linux/`\n",

|

||||

"- Our environment executable is in a zip file.\n",

|

||||

"- We need to download it and place it in `./training-envs-executables/linux/`\n",

|

||||

"- We use a Linux executable because we use Colab, and Colab machines run Ubuntu (Linux)"

|

||||

]

|

||||

},

|

||||

{

|

||||

"cell_type": "code",

|

||||

"execution_count": null,

|

||||

"metadata": {

|

||||

"id": "C9Ls6_6eOKiA"

|

||||

},

|

||||

"outputs": [],

|

||||

"source": [

|

||||

"# Here, we create training-envs-executables and linux\n",

|

||||

"!mkdir ./training-envs-executables\n",

|

||||

"!mkdir ./training-envs-executables/linux"

|

||||

]

|

||||

},

|

||||

{

|

||||

"cell_type": "markdown",

|

||||

"metadata": {

|

||||

"id": "jsoZGxr1MIXY"

|

||||

},

|

||||

"source": [

|

||||

"Download the file SnowballTarget.zip from https://drive.google.com/file/d/1YHHLjyj6gaZ3Gemx1hQgqrPgSS2ZhmB5 using `wget`. \n",

|

||||

"\n",

|

||||

"Check out the full solution to download large files from GDrive [here](https://bcrf.biochem.wisc.edu/2021/02/05/download-google-drive-files-using-wget/)"

|

||||

]

|

||||

},

|

||||

{

|

||||

"cell_type": "code",

|

||||

"execution_count": null,

|

||||

"metadata": {

|

||||

"id": "QU6gi8CmWhnA"

|

||||

},

|

||||

"outputs": [],

|

||||

"source": [

|

||||

"!wget --load-cookies /tmp/cookies.txt \"https://docs.google.com/uc?export=download&confirm=$(wget --quiet --save-cookies /tmp/cookies.txt --keep-session-cookies --no-check-certificate 'https://docs.google.com/uc?export=download&id=1YHHLjyj6gaZ3Gemx1hQgqrPgSS2ZhmB5' -O- | sed -rn 's/.*confirm=([0-9A-Za-z_]+).*/\\1\\n/p')&id=1YHHLjyj6gaZ3Gemx1hQgqrPgSS2ZhmB5\" -O ./training-envs-executables/linux/SnowballTarget.zip && rm -rf /tmp/cookies.txt"

|

||||

]

|

||||

},

|

||||

{

|

||||

"cell_type": "markdown",

|

||||

"source": [

|

||||

"We unzip the SnowballTarget.zip file"

|

||||

],

|

||||

"metadata": {

|

||||

"id": "_LLVaEEK3ayi"

|

||||

}

|

||||

},

|

||||

{

|

||||

"cell_type": "code",

|

||||

"execution_count": null,

|

||||

"metadata": {

|

||||

"id": "8FPx0an9IAwO"

|

||||

},

|

||||

"outputs": [],

|

||||

"source": [

|

||||

"%%capture\n",

|

||||

"!unzip -d ./training-envs-executables/linux/ ./training-envs-executables/linux/SnowballTarget.zip"

|

||||

]

|

||||

},

|

||||

{

|

||||

"cell_type": "markdown",

|

||||

"metadata": {

|

||||

"id": "nyumV5XfPKzu"

|

||||

},

|

||||

"source": [

|

||||

"Make sure your file is accessible "

|

||||

]

|

||||

},

|

||||

{

|

||||

"cell_type": "code",

|

||||

"execution_count": null,

|

||||

"metadata": {

|

||||

"id": "EdFsLJ11JvQf"

|

||||

},

|

||||

"outputs": [],

|

||||

"source": [

|

||||

"!chmod -R 755 ./training-envs-executables/linux/SnowballTarget"

|

||||

]

|

||||

},

|

||||
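The create-folder, unzip and `chmod` cells above can also be done from Python, which has the side benefit of not failing when the folders already exist on a re-run. A minimal sketch using only the standard library; `prepare_executable` is a hypothetical helper name, and the demo archive is a throwaway stand-in so nothing is downloaded:

```python
import os
import stat
import tempfile
import zipfile
from pathlib import Path


def prepare_executable(zip_path, target_dir):
    """Extract zip_path into target_dir and mark every extracted entry 755."""
    target = Path(target_dir)
    # parents/exist_ok make this safe to re-run, unlike a plain `mkdir`.
    target.mkdir(parents=True, exist_ok=True)
    with zipfile.ZipFile(zip_path) as archive:
        archive.extractall(target)
        names = archive.namelist()
    for name in names:
        # Only entries listed in the archive are touched here.
        os.chmod(target / name, 0o755)
    return names


# Demo on a throwaway archive (hypothetical file names).
workdir = Path(tempfile.mkdtemp())
demo_zip = workdir / "Demo.zip"
with zipfile.ZipFile(demo_zip, "w") as archive:
    archive.writestr("Demo/Demo.x86_64", "placeholder binary")

extracted = prepare_executable(demo_zip, workdir / "training-envs-executables" / "linux")
binary = workdir / "training-envs-executables" / "linux" / "Demo" / "Demo.x86_64"
mode = stat.S_IMODE(binary.stat().st_mode)
print(extracted, oct(mode))
```

In the notebook, the call would presumably look like `prepare_executable("./training-envs-executables/linux/SnowballTarget.zip", "./training-envs-executables/linux/")`.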

{

|

||||

"cell_type": "markdown",

|

||||

"source": [

|

||||

"### Define the SnowballTarget config file\n",

|

||||

"- In ML-Agents, you define the **training hyperparameters into config.yaml files.**\n",

|

||||

"\n",

|

||||

"There are multiple hyperparameters. To understand them better, read the explanation of each one in [the documentation](https://github.com/Unity-Technologies/ml-agents/blob/release_20_docs/docs/Training-Configuration-File.md)\n",

|

||||

"\n",

|

||||

"\n",

|

||||

"So you need to create a `SnowballTarget.yaml` config file in ./content/ml-agents/config/ppo/\n",

|

||||

"\n",

|

||||

"We'll give you here a first version of this config (to copy and paste into your `SnowballTarget.yaml` file), **but you should modify it**.\n",

|

||||

"\n",

|

||||

"```\n",
"behaviors:\n",
"  SnowballTarget:\n",
"    trainer_type: ppo\n",
"    summary_freq: 10000\n",
"    keep_checkpoints: 10\n",
"    checkpoint_interval: 50000\n",
"    max_steps: 200000\n",
"    time_horizon: 64\n",
"    threaded: true\n",
"    hyperparameters:\n",
"      learning_rate: 0.0003\n",
"      learning_rate_schedule: linear\n",
"      batch_size: 128\n",
"      buffer_size: 2048\n",
"      beta: 0.005\n",
"      epsilon: 0.2\n",
"      lambd: 0.95\n",
"      num_epoch: 3\n",
"    network_settings:\n",
"      normalize: false\n",
"      hidden_units: 256\n",
"      num_layers: 2\n",
"      vis_encode_type: simple\n",
"    reward_signals:\n",
"      extrinsic:\n",
"        gamma: 0.99\n",
"        strength: 1.0\n",
"```"

|

||||

],

|

||||

"metadata": {

|

||||

"id": "NAuEq32Mwvtz"

|

||||

}

|

||||

},

|

||||
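To get a feel for what the numbers in the SnowballTarget config above imply before changing them, a little arithmetic helps. This is a rough sketch: the values are copied from the config, while the reading of `buffer_size`, `batch_size` and `num_epoch` (experiences collected per policy update, experiences per gradient step, passes over the buffer) follows my understanding of the ML-Agents documentation and should be treated as an assumption.

```python
# Values copied from the SnowballTarget config in the notebook cell above.
config = {
    "summary_freq": 10000,
    "keep_checkpoints": 10,
    "checkpoint_interval": 50000,
    "max_steps": 200000,
    "batch_size": 128,
    "buffer_size": 2048,
    "num_epoch": 3,
}

# How many checkpoints and summary lines one full run produces (approximately).
checkpoints_written = config["max_steps"] // config["checkpoint_interval"]
summaries_logged = config["max_steps"] // config["summary_freq"]

# Assumed PPO bookkeeping: one policy update per filled buffer.
minibatches_per_epoch = config["buffer_size"] // config["batch_size"]
gradient_steps_per_update = minibatches_per_epoch * config["num_epoch"]
policy_updates = config["max_steps"] // config["buffer_size"]

print(checkpoints_written, summaries_logged)
print(minibatches_per_epoch, gradient_steps_per_update, policy_updates)
```

With these values, the run writes fewer checkpoints than `keep_checkpoints` allows, so none of them should be discarded.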

{

|

||||

"cell_type": "markdown",

|

||||

"source": [

|

||||

"<img src=\"https://huggingface.co/datasets/huggingface-deep-rl-course/course-images/resolve/main/en/unit7/snowballfight_config1.png\" alt=\"Config SnowballTarget\"/>\n",

|

||||

"<img src=\"https://huggingface.co/datasets/huggingface-deep-rl-course/course-images/resolve/main/en/unit7/snowballfight_config2.png\" alt=\"Config SnowballTarget\"/>"

|

||||

],

|

||||

"metadata": {

|

||||

"id": "4U3sRH4N4h_l"

|

||||

}

|

||||

},

|

||||

{

|

||||

"cell_type": "markdown",

|

||||

"source": [

|

||||

"As an experimentation, you should also try to modify some other hyperparameters. Unity provides very [good documentation explaining each of them here](https://github.com/Unity-Technologies/ml-agents/blob/main/docs/Training-Configuration-File.md).\n",

|

||||

"\n",

|

||||

"Now that you've created the config file and understand what most hyperparameters do, we're ready to train our agent 🔥."

|

||||

],

|

||||

"metadata": {

|

||||

"id": "JJJdo_5AyoGo"

|

||||

}

|

||||

},

|

||||

{

|

||||

"cell_type": "markdown",

|

||||

"metadata": {

|

||||

"id": "f9fI555bO12v"

|

||||

},

|

||||

"source": [

|

||||

"### Train the agent\n",

|

||||

"\n",

|

||||

"To train our agent, we just need to **launch mlagents-learn and select the executable containing the environment.**\n",

|

||||

"\n",

|

||||

"We define four parameters:\n",

|

||||

"\n",

|

||||

"1. `mlagents-learn <config>`: the path where the hyperparameter config file is.\n",

|

||||

"2. `--env`: where the environment executable is.\n",

|

||||

"3. `--run-id`: the name you want to give to your training run.\n",

|

||||

"4. `--no-graphics`: to not launch the visualization during the training.\n",

|

||||

"\n",

|

||||

"<img src=\"https://huggingface.co/datasets/huggingface-deep-rl-course/course-images/resolve/main/en/unit7/mlagentslearn.png\" alt=\"MlAgents learn\"/>\n",

|

||||

"\n",

|

||||

"Train the model and use the `--resume` flag to continue training in case of interruption. \n",

|

||||

"\n",

|

||||

"> It will fail the first time you use `--resume`; try running the block again to bypass the error.\n",

|

||||

"\n"

|

||||

]

|

||||

},

|

||||
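The four parameters listed above map directly onto an argument list. A minimal sketch that only assembles and prints the command (it does not launch training); `build_train_command` is a hypothetical helper, and the flags are the ones named in this notebook:

```python
import shlex


def build_train_command(config_path, env_path, run_id, resume=False):
    """Assemble the mlagents-learn arguments described in the notebook."""
    args = [
        "mlagents-learn",
        config_path,               # 1. path to the hyperparameter config file
        f"--env={env_path}",       # 2. path to the environment executable
        f"--run-id={run_id}",      # 3. name of the training run
        "--no-graphics",           # 4. no visualization during training
    ]
    if resume:
        args.append("--resume")    # continue an interrupted run
    return args


cmd = build_train_command(
    "./config/ppo/SnowballTarget.yaml",
    "./training-envs-executables/linux/SnowballTarget/SnowballTarget",
    "SnowballTarget1",
)
command_line = shlex.join(cmd)
print(command_line)
```

Keeping the arguments in a list means the same list could be handed to `subprocess.run(cmd, check=True)` outside a notebook, without worrying about shell quoting.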

{

|

||||

"cell_type": "markdown",

|

||||

"source": [

|

||||

"The training will take 10 to 35min depending on your config, go take a ☕️you deserve it 🤗."

|

||||

],

|

||||

"metadata": {

|

||||

"id": "lN32oWF8zPjs"

|

||||

}

|

||||

},

|

||||

{

|

||||

"cell_type": "code",

|

||||

"execution_count": null,

|

||||

"metadata": {

|

||||

"id": "bS-Yh1UdHfzy"

|

||||

},

|

||||

"outputs": [],

|

||||

"source": [

|

||||

"!mlagents-learn ./config/ppo/SnowballTarget.yaml --env=./training-envs-executables/linux/SnowballTarget/SnowballTarget --run-id=\"SnowballTarget1\" --no-graphics"

|

||||

]

|

||||

},

|

||||

{

|

||||

"cell_type": "markdown",

|

||||

"metadata": {

|

||||

"id": "5Vue94AzPy1t"

|

||||

},

|

||||

"source": [

|

||||

"### Push the agent to the 🤗 Hub\n",

|

||||

"\n",

|

||||

"- Now that we trained our agent, we’re **ready to push it to the Hub to be able to visualize it playing on your browser🔥.**"

|

||||

]

|

||||

},

|

||||

{

|

||||

"cell_type": "markdown",

|

||||

"source": [

|

||||

"To be able to share your model with the community there are three more steps to follow:\n",

|

||||

"\n",

|

||||

"1️⃣ (If it's not already done) create an account to HF ➡ https://huggingface.co/join\n",

|

||||

"\n",

|

||||

"2️⃣ Sign in and then, you need to store your authentication token from the Hugging Face website.\n",

|

||||

"- Create a new token (https://huggingface.co/settings/tokens) **with write role**\n",

|

||||

"\n",

|

||||

"<img src=\"https://huggingface.co/datasets/huggingface-deep-rl-course/course-images/resolve/main/en/notebooks/create-token.jpg\" alt=\"Create HF Token\">\n",

|

||||

"\n",

|

||||

"- Copy the token \n",

|

||||

"- Run the cell below and paste the token"

|

||||

],

|

||||

"metadata": {

|

||||

"id": "izT6FpgNzZ6R"

|

||||

}

|

||||

},

|

||||

{

|

||||

"cell_type": "code",

|

||||

"execution_count": null,

|

||||

"metadata": {

|

||||

"id": "rKt2vsYoK56o"

|

||||

},

|

||||

"outputs": [],

|

||||

"source": [

|

||||

"from huggingface_hub import notebook_login\n",

|

||||

"notebook_login()"

|

||||

]

|

||||

},

|

||||

{

|

||||

"cell_type": "markdown",

|

||||

"source": [

|

||||

"If you don't want to use a Google Colab or a Jupyter Notebook, you need to use this command instead: `huggingface-cli login`"

|

||||

],

|

||||

"metadata": {

|

||||

"id": "aSU9qD9_6dem"

|

||||

}

|

||||

},

|

||||

{

|

||||

"cell_type": "markdown",

|

||||

"source": [

|

||||

"Then, we simply need to run `mlagents-push-to-hf`.\n",

|

||||

"\n",

|

||||

"And we define 4 parameters:\n",

|

||||

"\n",

|

||||

"1. `--run-id`: the name of the training run id.\n",

|

||||

"2. `--local-dir`: where the agent was saved, it’s results/<run_id name>, so in my case results/First Training.\n",

|

||||

"3. `--repo-id`: the name of the Hugging Face repo you want to create or update. It’s always <your huggingface username>/<the repo name>\n",

|

||||

"If the repo does not exist **it will be created automatically**\n",

|

||||

"4. `--commit-message`: since HF repos are git repositories, you need to define a commit message.\n",

|

||||

"\n",

|

||||

"<img src=\"https://huggingface.co/datasets/huggingface-deep-rl-course/course-images/resolve/main/en/unit7/mlagentspushtohub.png\" alt=\"Push to Hub\"/>\n",

|

||||

"\n",

|

||||

"For instance:\n",

|

||||

"\n",

|

||||

"`!mlagents-push-to-hf --run-id=\"SnowballTarget1\" --local-dir=\"./results/SnowballTarget1\" --repo-id=\"ThomasSimonini/ppo-SnowballTarget\" --commit-message=\"First Push\"`"

|

||||

],

|

||||

"metadata": {

|

||||

"id": "KK4fPfnczunT"

|

||||

}

|

||||

},

|

||||
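The push command follows the same pattern, with two conventions from the list above worth encoding: the local directory is `results/<run_id name>`, and the repo id is always `<your huggingface username>/<the repo name>`. A small sketch under those assumptions; `build_push_command` is a hypothetical helper that only builds the command:

```python
import shlex


def build_push_command(run_id, repo_id, commit_message, results_root="./results"):
    """Assemble the mlagents-push-to-hf arguments described in the notebook."""
    username, sep, repo_name = repo_id.partition("/")
    if not (username and sep and repo_name) or "/" in repo_name:
        raise ValueError("repo_id must look like <username>/<repo name>")
    return [
        "mlagents-push-to-hf",
        f"--run-id={run_id}",
        f"--local-dir={results_root}/{run_id}",  # where the agent was saved
        f"--repo-id={repo_id}",
        f"--commit-message={commit_message}",
    ]


push_cmd = build_push_command(
    "SnowballTarget1", "ThomasSimonini/ppo-SnowballTarget", "First Push"
)
push_line = shlex.join(push_cmd)
print(push_line)
```

`shlex.join` quotes the commit message because it contains a space, which is the main thing that tends to go wrong when typing this command by hand.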

{

|

||||

"cell_type": "code",

|

||||

"execution_count": null,

|

||||

"metadata": {

|

||||

"id": "dGEFAIboLVc6"

|

||||

},

|

||||

"outputs": [],

|

||||

"source": [

|

||||

"!mlagents-push-to-hf --run-id= # Add your run id --local-dir= # Your local dir --repo-id= # Your repo id --commit-message= # Your commit message"

|

||||

]

|

||||

},

|

||||

{

|

||||

"cell_type": "markdown",

|

||||

"source": [

|

||||

"If everything worked, you should see this at the end of the process (but with a different URL 😆):\n",

|

||||

"\n",

|

||||

"\n",

|

||||

"\n",

|

||||

"```\n",

|

||||

"Your model is pushed to the hub. You can view your model here: https://huggingface.co/ThomasSimonini/ppo-SnowballTarget\n",

|

||||

"```\n",

|

||||

"\n",

|

||||

"It’s the link to your model, it contains a model card that explains how to use it, your Tensorboard and your config file. **What’s awesome is that it’s a git repository, that means you can have different commits, update your repository with a new push etc.**"

|

||||

],

|

||||

"metadata": {

|

||||

"id": "yborB0850FTM"

|

||||

}

|

||||

},

|

||||

{

|

||||

"cell_type": "markdown",

|

||||

"source": [

|

||||

"But now comes the best: **being able to visualize your agent online 👀.**"

|

||||

],

|

||||

"metadata": {

|

||||

"id": "5Uaon2cg0NrL"

|

||||

}

|

||||

},

|

||||

{

|

||||

"cell_type": "markdown",

|

||||

"source": [

|

||||

"### Watch your agent playing 👀\n",

|

||||

"\n",

|

||||

"For this step it’s simple:\n",

|

||||

"\n",

|

||||

"1. Remember your repo-id\n",

|

||||

"\n",

|

||||

"2. Go here: https://singularite.itch.io/snowballtarget\n",

|

||||

"\n",

|

||||

"3. Launch the game and put it in full screen by clicking on the bottom right button\n",

|

||||

"\n",

|

||||

"<img src=\"https://huggingface.co/datasets/huggingface-deep-rl-course/course-images/resolve/main/en/unit7/snowballtarget_load.png\" alt=\"Snowballtarget load\"/>"

|

||||

],

|

||||

"metadata": {

|

||||

"id": "VMc4oOsE0QiZ"

|

||||

}

|

||||

},

|

||||

{

|

||||

"cell_type": "markdown",

|

||||

"source": [

|

||||

"1. In step 1, choose your model repository which is the model id (in my case ThomasSimonini/ppo-SnowballTarget).\n",

|

||||

"\n",

|

||||

"2. In step 2, **choose what model you want to replay**:\n",

|

||||

" - I have multiple ones, since we saved a model every 500000 timesteps. \n",

|

||||

" - But if I want the most recent one, I choose `SnowballTarget.onnx`\n",

|

||||

"\n",

|

||||

"👉 What’s nice **is to try different model checkpoints to see the improvement of the agent.**\n",

|

||||

"\n",

|

||||

"And don't hesitate to share the best score your agent gets on discord in #rl-i-made-this channel 🔥\n",

|

||||

"\n",

|

||||

"Let's now try a harder environment called Pyramids..."

|

||||

],

|

||||

"metadata": {

|

||||

"id": "Djs8c5rR0Z8a"

|

||||

}

|

||||

},

|

||||

{

|

||||

"cell_type": "markdown",

|

||||

"source": [

|

||||

"## Pyramids 🏆\n",

|

||||

"\n",

|

||||

"### Download and move the environment zip file in `./training-envs-executables/linux/`\n",

|

||||

"- Our environment executable is in a zip file.\n",

|

||||

"- We need to download it and place it in `./training-envs-executables/linux/`\n",

|

||||

"- We use a Linux executable because we use Colab, and Colab machines run Ubuntu (Linux)"

|

||||

],

|

||||

"metadata": {

|

||||

"id": "rVMwRi4y_tmx"

|

||||

}

|

||||

},

|

||||

{

|

||||

"cell_type": "markdown",

|

||||

"metadata": {

|

||||

"id": "NyqYYkLyAVMK"

|

||||

},

|

||||

"source": [

|

||||

"Download the file Pyramids.zip from https://drive.google.com/uc?export=download&id=1UiFNdKlsH0NTu32xV-giYUEVKV4-vc7H using `wget`. Check out the full solution to download large files from GDrive [here](https://bcrf.biochem.wisc.edu/2021/02/05/download-google-drive-files-using-wget/)"

|

||||

]

|

||||

},

|

||||

{

|

||||

"cell_type": "code",

|

||||

"execution_count": null,

|

||||

"metadata": {

|

||||

"id": "AxojCsSVAVMP"

|

||||

},

|

||||

"outputs": [],

|

||||

"source": [

|

||||

"!wget --load-cookies /tmp/cookies.txt \"https://docs.google.com/uc?export=download&confirm=$(wget --quiet --save-cookies /tmp/cookies.txt --keep-session-cookies --no-check-certificate 'https://docs.google.com/uc?export=download&id=1UiFNdKlsH0NTu32xV-giYUEVKV4-vc7H' -O- | sed -rn 's/.*confirm=([0-9A-Za-z_]+).*/\\1\\n/p')&id=1UiFNdKlsH0NTu32xV-giYUEVKV4-vc7H\" -O ./training-envs-executables/linux/Pyramids.zip && rm -rf /tmp/cookies.txt"

|

||||

]

|

||||

},

|

||||

{

|

||||

"cell_type": "markdown",

|

||||

"metadata": {

|

||||

"id": "bfs6CTJ1AVMP"

|

||||

},

|

||||

"source": [

|

||||

"**OR** Download directly to local machine and then drag and drop the file from local machine to `./training-envs-executables/linux`"

|

||||

]

|

||||

},

|

||||

{

|

||||

"cell_type": "markdown",

|

||||

"metadata": {

|

||||

"id": "H7JmgOwcSSmF"

|

||||

},

|

||||

"source": [

|

||||

"Wait for the upload to finish and then run the command below. \n",

|

||||

"\n",

|

||||

""

|

||||

]

|

||||

},

|

||||

{

|

||||

"cell_type": "markdown",

|

||||

"source": [

|

||||

"Unzip it"

|

||||

],

|

||||

"metadata": {

|

||||

"id": "iWUUcs0_794U"

|

||||

}

|

||||

},

|

||||

{

|

||||

"cell_type": "code",

|

||||

"execution_count": null,

|

||||

"metadata": {

|

||||

"id": "i2E3K4V2AVMP"

|

||||

},

|

||||

"outputs": [],

|

||||

"source": [

|

||||

"%%capture\n",

|

||||

"!unzip -d ./training-envs-executables/linux/ ./training-envs-executables/linux/Pyramids.zip"

|

||||

]

|

||||

},

|

||||

{

|

||||

"cell_type": "markdown",

|

||||

"metadata": {

|

||||

"id": "KmKYBgHTAVMP"

|

||||

},

|

||||

"source": [

|

||||

"Make sure your file is accessible "

|

||||

]

|

||||

},

|

||||

{

|

||||

"cell_type": "code",

|

||||

"execution_count": null,

|

||||

"metadata": {

|

||||

"id": "Im-nwvLPAVMP"

|

||||

},

|

||||

"outputs": [],

|

||||

"source": [

|

||||

"!chmod -R 755 ./training-envs-executables/linux/Pyramids/Pyramids"

|

||||

]

|

||||

},

|

||||

{

|

||||

"cell_type": "markdown",

|

||||

"source": [

|

||||

"### Modify the PyramidsRND config file\n",

|

||||

"- Contrary to the first environment which was a custom one, **Pyramids was made by the Unity team**.\n",

|

||||

"- So the PyramidsRND config file already exists and is in ./content/ml-agents/config/ppo/PyramidsRND.yaml\n",

|

||||

"- You might ask why \"RND\" is in PyramidsRND. RND stands for *random network distillation*; it's a way to generate curiosity rewards. If you want to know more about it, we wrote an article explaining this technique: https://medium.com/data-from-the-trenches/curiosity-driven-learning-through-random-network-distillation-488ffd8e5938\n",

|

||||

"\n",

|

||||

"For this training, we’ll modify one thing:\n",

|

||||

"- The total training steps hyperparameter is too high since we can hit the benchmark (mean reward = 1.75) in only 1M training steps.\n",

|

||||

"👉 To do that, we go to config/ppo/PyramidsRND.yaml, **and change max_steps to 1000000.**\n",

|

||||

"\n",

|

||||

"<img src=\"https://huggingface.co/datasets/huggingface-deep-rl-course/course-images/resolve/main/en/unit7/pyramids-config.png\" alt=\"Pyramids config\"/>"

|

||||

],

|

||||

"metadata": {

|

||||

"id": "fqceIATXAgih"

|

||||

}

|

||||

},

|

||||
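The idea behind random network distillation mentioned above fits in a few lines: a fixed, randomly initialized target maps observations to features, a predictor is trained to imitate it, and the prediction error is used as the curiosity reward. The sketch below is a deliberately simplified toy (linear maps in pure Python, made-up observations), not the ML-Agents implementation:

```python
import random

random.seed(0)
OBS_DIM, FEAT_DIM, LEARNING_RATE = 4, 3, 0.05


def random_matrix():
    return [[random.uniform(-1, 1) for _ in range(OBS_DIM)] for _ in range(FEAT_DIM)]


target = random_matrix()     # fixed random "network", never trained
predictor = random_matrix()  # trained to imitate the target


def features(weights, obs):
    return [sum(w * x for w, x in zip(row, obs)) for row in weights]


def prediction_errors(obs):
    return [p - t for p, t in zip(features(predictor, obs), features(target, obs))]


def curiosity_reward(obs):
    """Squared prediction error: large for unfamiliar observations."""
    return sum(e * e for e in prediction_errors(obs))


def train_predictor(obs):
    """One gradient step on the squared error for a single observation."""
    errors = prediction_errors(obs)
    for row, err in zip(predictor, errors):
        for j, x in enumerate(obs):
            row[j] -= LEARNING_RATE * 2 * err * x


familiar = [0.5, -0.2, 0.1, 0.9]   # hypothetical observation seen many times
novel = [-0.9, 0.8, -0.7, 0.3]     # hypothetical observation never trained on

reward_before = curiosity_reward(familiar)
for _ in range(50):
    train_predictor(familiar)
reward_after = curiosity_reward(familiar)
reward_novel = curiosity_reward(novel)
print(reward_before, reward_after, reward_novel)
```

The reward for the observation the predictor keeps seeing shrinks toward zero, while an observation it has not been trained on still produces a noticeable error. That gap is what pushes the agent to explore.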

{

|

||||

"cell_type": "markdown",

|

||||

"source": [

|

||||

"As an experiment, you should also try to modify some other hyperparameters. Unity provides very [good documentation explaining each of them here](https://github.com/Unity-Technologies/ml-agents/blob/main/docs/Training-Configuration-File.md).\n",

|

||||

"\n",

|

||||

"We’re now ready to train our agent 🔥."

|

||||

],

|

||||

"metadata": {

|

||||

"id": "RI-5aPL7BWVk"

|

||||

}

|

||||

},

|

||||
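The `max_steps` change described above can be made in the file editor, but it can also be scripted. A minimal sketch that rewrites the value in a YAML string with a regular expression; `set_max_steps` is a hypothetical helper, and the sample text is a placeholder fragment, not the real contents of PyramidsRND.yaml:

```python
import re


def set_max_steps(yaml_text, new_value):
    """Replace the value of every `max_steps:` entry in a YAML config string."""
    pattern = r"(?m)^([ \t]*max_steps:[ \t]*)\d+"
    return re.sub(pattern, rf"\g<1>{new_value}", yaml_text)


# Placeholder fragment for illustration; the starting value is made up.
sample = "behaviors:\n  Pyramids:\n    max_steps: 3000000\n    time_horizon: 128\n"
updated = set_max_steps(sample, 1000000)
print(updated)
```

To apply it to the real file, one would read `config/ppo/PyramidsRND.yaml` with `Path.read_text()`, pass the text through the helper, and write it back. A plain text substitution like this keeps comments and key order untouched.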

{

|

||||

"cell_type": "markdown",

|

||||

"source": [

|

||||

"### Train the agent\n",

|

||||

"\n",

|

||||

"The training will take 30 to 45min depending on your machine, go take a ☕️you deserve it 🤗."

|

||||

],

|

||||

"metadata": {

|

||||

"id": "s5hr1rvIBdZH"

|

||||

}

|

||||

},

|

||||

{

|

||||

"cell_type": "code",

|

||||

"execution_count": null,

|

||||

"metadata": {

|

||||

"id": "fXi4-IaHBhqD"

|

||||

},

|

||||

"outputs": [],

|

||||

"source": [

|

||||

"!mlagents-learn ./config/ppo/PyramidsRND.yaml --env=./training-envs-executables/linux/Pyramids/Pyramids --run-id=\"Pyramids Training\" --no-graphics"

|

||||

]

|

||||

},

|

||||

{

|

||||

"cell_type": "markdown",

|

||||

"metadata": {

|

||||

"id": "txonKxuSByut"

|

||||

},

|

||||

"source": [

|

||||

"### Push the agent to the 🤗 Hub\n",

|

||||

"\n",

|

||||

"- Now that we trained our agent, we’re **ready to push it to the Hub to be able to visualize it playing on your browser🔥.**"

|

||||

]

|

||||

},

|

||||

{

|

||||

"cell_type": "code",

|

||||

"source": [],

|

||||

"metadata": {

|

||||

"id": "JZ53caJ99sX_"

|

||||

},

|

||||

"execution_count": null,

|

||||

"outputs": []

|

||||

},

|

||||

{

|

||||

"cell_type": "code",

|

||||

"source": [

|

||||

"!mlagents-push-to-hf --run-id= # Add your run id --local-dir= # Your local dir --repo-id= # Your repo id --commit-message= # Your commit message"

|

||||

],

|

||||

"metadata": {

|

||||

"id": "yiEQbv7rB4mU"

|

||||

},

|

||||

"execution_count": null,

|

||||

"outputs": []

|

||||

},

|

||||

{

|

||||

"cell_type": "markdown",

|

||||

"source": [

|

||||

"### Watch your agent playing 👀\n",

|

||||

"\n",

|

||||

"The temporary link for Pyramids demo is: https://singularite.itch.io/pyramids"

|

||||

],

|

||||

"metadata": {

|

||||

"id": "7aZfgxo-CDeQ"

|

||||

}

|

||||

},

|

||||

{

|

||||

"cell_type": "markdown",

|

||||

"source": [

|

||||

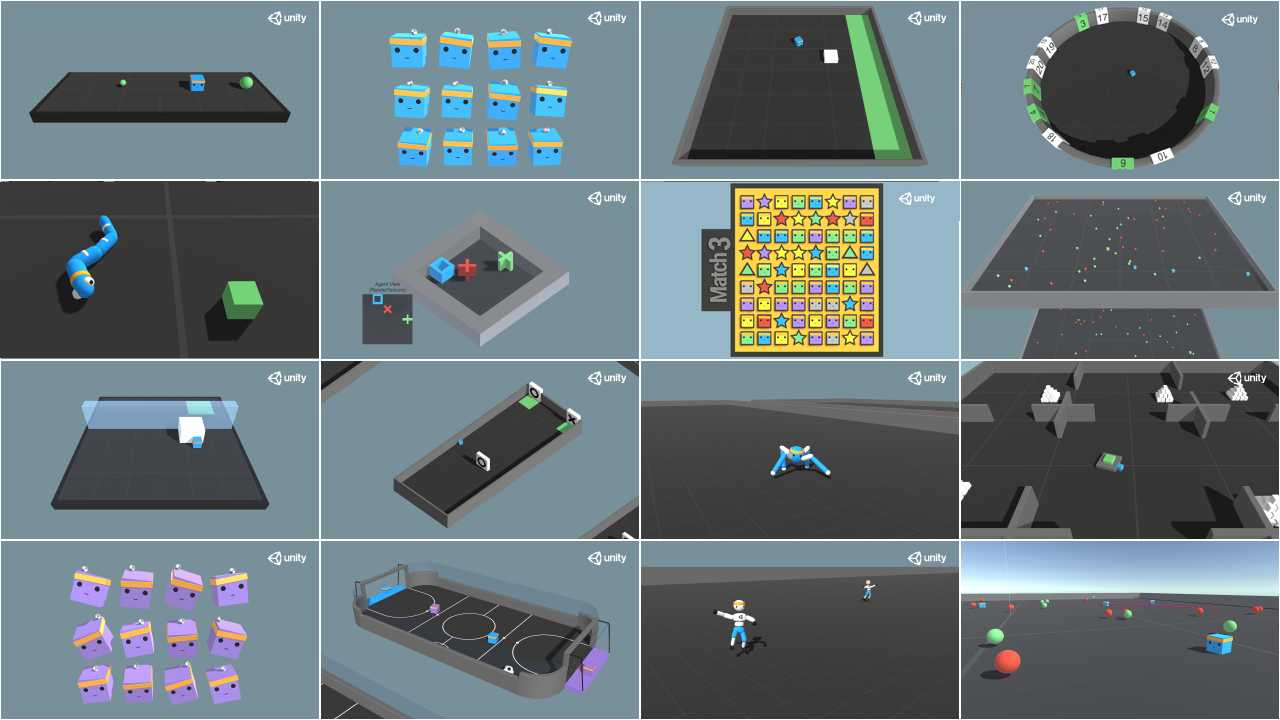

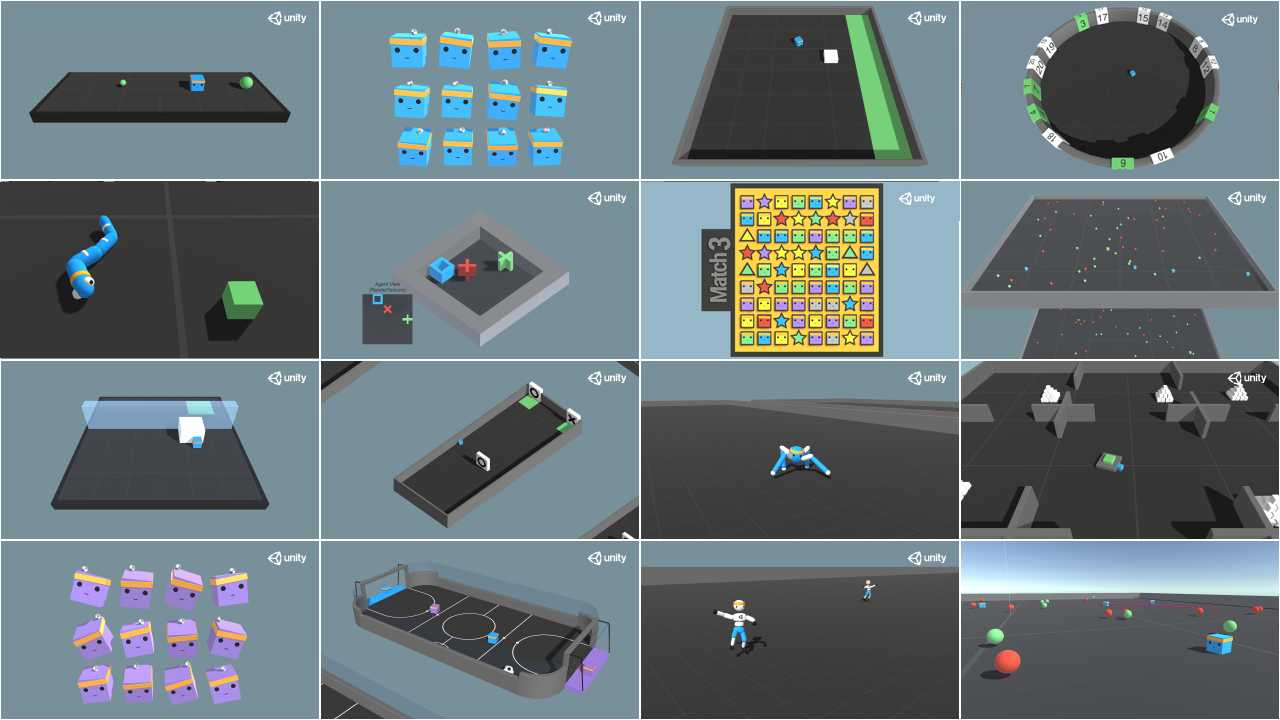

"### 🎁 Bonus: Why not train on another environment?\n",

|

||||

"Now that you know how to train an agent using MLAgents, **why not try another environment?** \n",

|

||||

"\n",

|

||||

"MLAgents provides 18 different and we’re building some custom ones. The best way to learn is to try things of your own, have fun.\n",

|

||||

"\n"

|

||||

],

|

||||

"metadata": {

|

||||

"id": "hGG_oq2n0wjB"

|

||||

}

|

||||

},

|

||||

{

|

||||

"cell_type": "markdown",

|

||||

"source": [

|

||||

""

|

||||

],

|

||||

"metadata": {

|

||||

"id": "KSAkJxSr0z6-"

|

||||

}

|

||||

},

|

||||

{

|

||||

"cell_type": "markdown",

|

||||

"source": [

|

||||

"You have the full list of the one currently available on Hugging Face here 👉 https://github.com/huggingface/ml-agents#the-environments\n",

|

||||

"\n",

|

||||

"For the demos to visualize your agent, the temporary link is: https://singularite.itch.io (temporary because we'll also put the demos on Hugging Face Space)\n",

|

||||

"\n",

|

||||

"For now we have integrated: \n",

|

||||

"- [Worm](https://singularite.itch.io/worm) demo where you teach a **worm to crawl**.\n",

|

||||

"- [Walker](https://singularite.itch.io/walker) demo where you teach an agent **to walk towards a goal**.\n",

|

||||

"\n",

|

||||

"If you want new demos to be added, please open an issue: https://github.com/huggingface/deep-rl-class 🤗"

|

||||

],

|

||||

"metadata": {

|

||||

"id": "YiyF4FX-04JB"

|

||||

}

|

||||

},

|

||||

{

|

||||

"cell_type": "markdown",

|

||||

"source": [

|

||||

"That’s all for today. Congrats on finishing this tutorial!\n",

|

||||

"\n",

|

||||

"The best way to learn is to practice and try stuff. Why not try another environment? ML-Agents has 18 different environments, but you can also create your own? Check the documentation and have fun!\n",

|

||||

"\n",

|

||||

"See you on Unit 6 🔥,\n",

|

||||

"\n",

|

||||

"## Keep Learning, Stay awesome 🤗"

|

||||

],

|

||||

"metadata": {

|

||||

"id": "PI6dPWmh064H"

|

||||

}

|

||||

}

|

||||

],

|

||||

"metadata": {

|

||||

"accelerator": "GPU",

|

||||

"colab": {

|

||||

"provenance": [],

|

||||

"private_outputs": true,

|

||||

"include_colab_link": true

|

||||

},

|

||||

"gpuClass": "standard",

|

||||

"kernelspec": {

|

||||

"display_name": "Python 3",

|

||||

"name": "python3"

|

||||

},

|

||||

"language_info": {

|

||||

"name": "python"

|

||||

}

|

||||

},

|

||||

"nbformat": 4,

|

||||

"nbformat_minor": 0

|

||||

}

|

||||

4

notebooks/unit6/requirements-unit6.txt

Normal file


@@ -0,0 +1,4 @@

|

||||

stable-baselines3[extra]

|

||||

huggingface_sb3

|

||||

panda_gym==2.0.0

|

||||

pyglet==1.5.1

|

||||

918

notebooks/unit6/unit6.ipynb

Normal file


@@ -0,0 +1,918 @@

|

||||

{

|

||||

"nbformat": 4,

|

||||

"nbformat_minor": 0,

|

||||

"metadata": {

|

||||

"colab": {

|

||||

"provenance": [],

|

||||

"private_outputs": true,

|

||||

"authorship_tag": "ABX9TyPDFLK3trc6MCLJLqUUuAbl",

|

||||

"include_colab_link": true

|

||||

},

|

||||

"kernelspec": {

|

||||

"name": "python3",

|

||||

"display_name": "Python 3"

|

||||

},

|

||||

"language_info": {

|

||||

"name": "python"

|

||||

},

|

||||

"accelerator": "GPU",

|

||||

"gpuClass": "standard"

|

||||

},

|

||||

"cells": [

|

||||

{

|

||||

"cell_type": "markdown",

|

||||

"metadata": {

|

||||

"id": "view-in-github",

|

||||

"colab_type": "text"

|

||||

},

|

||||

"source": [

|

||||

"<a href=\"https://colab.research.google.com/github/huggingface/deep-rl-class/blob/main/notebooks/unit6/unit6.ipynb\" target=\"_parent\"><img src=\"https://colab.research.google.com/assets/colab-badge.svg\" alt=\"Open In Colab\"/></a>"

|

||||

]

|

||||

},

|

||||

{

|

||||

"cell_type": "markdown",

|

||||

"source": [

|

||||

"# Unit 6: Advantage Actor Critic (A2C) using Robotics Simulations with PyBullet and Panda-Gym 🤖\n",

|

||||

"\n",

|

||||

"<img src=\"https://huggingface.co/datasets/huggingface-deep-rl-course/course-images/resolve/main/en/unit8/thumbnail.png\" alt=\"Thumbnail\"/>\n",

|

||||

"\n",

|

||||

"In this notebook, you'll learn to use A2C with PyBullet and Panda-Gym, two set of robotics environments. \n",

|

||||

"\n",

|

||||

"With [PyBullet](https://github.com/bulletphysics/bullet3), you're going to **train a robot to move**:\n",

|

||||

"- `AntBulletEnv-v0` 🕸️ More precisely, a spider (they say Ant but come on... it's a spider 😆) 🕸️\n",

|

||||

"\n",

|

||||

"Then, with [Panda-Gym](https://github.com/qgallouedec/panda-gym), you're going **to train a robotic arm** (Franka Emika Panda robot) to perform a task:\n",

|

||||

"- `Reach`: the robot must place its end-effector at a target position.\n",

|

||||

"\n",

|

||||

"After that, you'll be able **to train in other robotics environments**.\n"

|

||||

],

|

||||

"metadata": {

|

||||

"id": "-PTReiOw-RAN"

|

||||

}

|

||||

},

|

||||

{

|

||||

"cell_type": "markdown",

|

||||

"source": [

|

||||

"<img src=\"https://huggingface.co/datasets/huggingface-deep-rl-course/course-images/resolve/main/en/unit8/environments.gif\" alt=\"Robotics environments\"/>"

|

||||

],

|

||||

"metadata": {

|

||||

"id": "2VGL_0ncoAJI"

|

||||

}

|

||||

},

|

||||

{

|

||||

"cell_type": "markdown",

|

||||

"source": [

|

||||

"### 🎮 Environments: \n",

|

||||

"\n",

|

||||

"- [PyBullet](https://github.com/bulletphysics/bullet3)\n",

|

||||

"- [Panda-Gym](https://github.com/qgallouedec/panda-gym)\n",

|

||||

"\n",

|

||||

"###📚 RL-Library: \n",

|

||||

"\n",

|

||||

"- [Stable-Baselines3](https://stable-baselines3.readthedocs.io/)"

|

||||

],

|

||||

"metadata": {

|

||||

"id": "QInFitfWno1Q"

|

||||

}

|

||||

},

|

||||

{

|

||||

"cell_type": "markdown",

|

||||

"source": [

|

||||

"We're constantly trying to improve our tutorials, so **if you find some issues in this notebook**, please [open an issue on the GitHub Repo](https://github.com/huggingface/deep-rl-class/issues)."

|

||||

],

|

||||

"metadata": {

|

||||

"id": "2CcdX4g3oFlp"

|

||||

}

|

||||

},

|

||||

{

|

||||

"cell_type": "markdown",

|

||||

"source": [

|

||||

"## Objectives of this notebook 🏆\n",

|

||||

"\n",

|

||||

"At the end of the notebook, you will:\n",

|

||||

"\n",

|

||||

"- Be able to use **PyBullet** and **Panda-Gym**, the environment libraries.\n",

|

||||

"- Be able to **train robots using A2C**.\n",

|

||||

"- Understand why **we need to normalize the input**.\n",

|

||||

"- Be able to **push your trained agent and the code to the Hub** with a nice video replay and an evaluation score 🔥.\n",

|

||||

"\n",

|

||||

"\n"

|

||||

],

|

||||

"metadata": {

|

||||

"id": "MoubJX20oKaQ"

|

||||

}

|

||||

},

|

||||

{

|

||||

"cell_type": "markdown",

|

||||

"source": [

|

||||

"## This notebook is from the Deep Reinforcement Learning Course\n",

|

||||

"<img src=\"https://huggingface.co/datasets/huggingface-deep-rl-course/course-images/resolve/main/en/notebooks/deep-rl-course-illustration.jpg\" alt=\"Deep RL Course illustration\"/>\n",

|

||||

"\n",

|

||||

"In this free course, you will:\n",

|

||||

"\n",

|

||||

"- 📖 Study Deep Reinforcement Learning in **theory and practice**.\n",

|

||||

"- 🧑💻 Learn to **use famous Deep RL libraries** such as Stable Baselines3, RL Baselines3 Zoo, CleanRL and Sample Factory 2.0.\n",

|

||||

"- 🤖 Train **agents in unique environments** \n",

|

||||

"\n",

|

||||

"And more check 📚 the syllabus 👉 https://simoninithomas.github.io/deep-rl-course\n",

|

||||

"\n",

|

||||

"Don’t forget to **<a href=\"http://eepurl.com/ic5ZUD\">sign up to the course</a>** (we are collecting your email to be able to **send you the links when each Unit is published and give you information about the challenges and updates).**\n",

|

||||

"\n",

|

||||

"\n",

|

||||

"The best way to keep in touch is to join our discord server to exchange with the community and with us 👉🏻 https://discord.gg/ydHrjt3WP5"

|

||||

],

|

||||

"metadata": {

|

||||

"id": "DoUNkTExoUED"

|

||||

}

|

||||

},

|

||||

{

|

||||

"cell_type": "markdown",

|

||||

"source": [

|

||||

"## Prerequisites 🏗️\n",

|

||||

"Before diving into the notebook, you need to:\n",

|

||||

"\n",

|

||||

"🔲 📚 Study [Actor-Critic methods by reading Unit 6](https://huggingface.co/deep-rl-course/unit6/introduction) 🤗 "

|

||||

],

|

||||

"metadata": {

|

||||

"id": "BTuQAUAPoa5E"

|

||||

}

|

||||

},

|

||||

{

|

||||

"cell_type": "markdown",

|

||||

"source": [

|

||||

"# Let's train our first robots 🤖"

|

||||

],

|

||||

"metadata": {

|

||||

"id": "iajHvVDWoo01"

|

||||

}

|

||||

},

|

||||

{

|

||||

"cell_type": "markdown",

|

||||

"source": [

|

||||

"To validate this hands-on for the [certification process](https://huggingface.co/deep-rl-course/en/unit0/introduction#certification-process), you need to push your two trained models to the Hub and get the following results:\n",

|

||||

"\n",

|

||||

"- `AntBulletEnv-v0` get a result of >= 650.\n",

|

||||

"- `PandaReachDense-v2` get a result of >= -3.5.\n",

|

||||

"\n",

|

||||

"To find your result, go to the [leaderboard](https://huggingface.co/spaces/huggingface-projects/Deep-Reinforcement-Learning-Leaderboard) and find your model, **the result = mean_reward - std of reward**\n",

|

||||

"\n",

|

||||

"If you don't find your model, **go to the bottom of the page and click on the refresh button**\n",

|

||||

"\n",

|

||||

"For more information about the certification process, check this section 👉 https://huggingface.co/deep-rl-course/en/unit0/introduction#certification-process"

|

||||

],

|

||||

"metadata": {

|

||||

"id": "zbOENTE2os_D"

|

||||

}

|

||||

},
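The leaderboard score described above is the mean episode reward minus its standard deviation. Here is a minimal sketch of that arithmetic, assuming a hypothetical list of per-episode rewards and the population standard deviation (the leaderboard's exact computation is not shown in this notebook):

```python
from statistics import mean, pstdev

# Hypothetical per-episode rewards from an evaluation run.
episode_rewards = [700.0, 720.0, 680.0, 710.0, 690.0]

mean_reward = mean(episode_rewards)
std_reward = pstdev(episode_rewards)  # population std (an assumption)
result = mean_reward - std_reward

print(f"mean_reward={mean_reward:.2f} std={std_reward:.2f} result={result:.2f}")
print("meets the AntBulletEnv-v0 threshold:", result >= 650)
```

Because the standard deviation is subtracted, an agent with a high but erratic reward can score lower than a slightly weaker but more consistent one.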

|

||||

{

|

||||

"cell_type": "markdown",

|

||||

"source": [

|

||||

"## Set the GPU 💪\n",

|

||||

"- To **accelerate the agent's training, we'll use a GPU**. To do that, go to `Runtime > Change Runtime type`\n",

|

||||

"\n",

|

||||

"<img src=\"https://huggingface.co/datasets/huggingface-deep-rl-course/course-images/resolve/main/en/notebooks/gpu-step1.jpg\" alt=\"GPU Step 1\">"

|

||||

],

|

||||

"metadata": {

|

||||

"id": "PU4FVzaoM6fC"

|

||||

}

|

||||

},

|

||||

{

|

||||

"cell_type": "markdown",

|

||||

"source": [

|

||||

"- `Hardware Accelerator > GPU`\n",

|

||||

"\n",

|

||||

"<img src=\"https://huggingface.co/datasets/huggingface-deep-rl-course/course-images/resolve/main/en/notebooks/gpu-step2.jpg\" alt=\"GPU Step 2\">"

|

||||

],

|

||||

"metadata": {

|

||||

"id": "KV0NyFdQM9ZG"

|

||||

}

|

||||

},

|

||||

{

|

||||

"cell_type": "markdown",

|

||||

"source": [

|

||||

"## Create a virtual display 🔽\n",

|

||||

"\n",

|

||||

"During the notebook, we'll need to generate a replay video. To do so, with colab, **we need to have a virtual screen to be able to render the environment** (and thus record the frames). \n",

|

||||

"\n",

|

||||

"Hence the following cell will install the librairies and create and run a virtual screen 🖥"

|

||||

],

|

||||

"metadata": {

|

||||

"id": "bTpYcVZVMzUI"

|

||||

}

|

||||

},

|

||||

{

|

||||

"cell_type": "code",

|

||||

"execution_count": null,

|

||||

"metadata": {

|

||||

"id": "jV6wjQ7Be7p5"

|

||||

},

|

||||

"outputs": [],

|

||||

"source": [

|

||||

"%%capture\n",

|

||||

"!apt install python-opengl\n",

|

||||

"!apt install ffmpeg\n",

|

||||

"!apt install xvfb\n",

|

||||

"!pip3 install pyvirtualdisplay"

|

||||

]

|

||||

},

|

||||

{

|

||||

"cell_type": "code",

|

||||

"source": [

|

||||

"# Virtual display\n",

|

||||

"from pyvirtualdisplay import Display\n",

|

||||

"\n",

|

||||

"virtual_display = Display(visible=0, size=(1400, 900))\n",

|

||||

"virtual_display.start()"

|

||||

],

|

||||

"metadata": {

|

||||

"id": "ww5PQH1gNLI4"

|

||||

},

|

||||

"execution_count": null,

|

||||

"outputs": []

|

||||

},

|

||||

{

|

||||

"cell_type": "markdown",

|

||||

"source": [

|

||||

"### Install dependencies 🔽\n",

|

||||

"The first step is to install the dependencies, we’ll install multiple ones:\n",

|

||||

"\n",

|

||||

"- `pybullet`: Contains the walking robots environments.\n",

|

||||

"- `panda-gym`: Contains the robotics arm environments.\n",

|

||||

"- `stable-baselines3[extra]`: The SB3 deep reinforcement learning library.\n",

|

||||

"- `huggingface_sb3`: Additional code for Stable-baselines3 to load and upload models from the Hugging Face 🤗 Hub.\n",

|

||||

"- `huggingface_hub`: Library allowing anyone to work with the Hub repositories."

|

||||

],

|

||||

"metadata": {

|

||||

"id": "e1obkbdJ_KnG"

|

||||

}

|

||||

},

|

||||

{

|

||||

"cell_type": "code",

|

||||

"execution_count": null,

|

||||

"metadata": {

|

||||

"id": "2yZRi_0bQGPM"

|

||||

},

|

||||

"outputs": [],

|

||||

"source": [

|

||||

"!pip install -r https://raw.githubusercontent.com/huggingface/deep-rl-class/main/notebooks/unit6/requirements-unit6.txt"

|

||||

]

|

||||

},

|

||||

{

|

||||

"cell_type": "markdown",

|

||||

"source": [

|

||||

"## Import the packages 📦"

|

||||

],

|

||||

"metadata": {

|

||||

"id": "QTep3PQQABLr"

|

||||

}

|

||||

},

|

||||

{

|

||||

"cell_type": "code",

|

||||

"source": [

|

||||

"import pybullet_envs\n",

|

||||

"import panda_gym\n",

|

||||

"import gym\n",

|

||||

"\n",

|

||||

"import os\n",

|

||||

"\n",

|

||||

"from huggingface_sb3 import load_from_hub, package_to_hub\n",

|

||||

"\n",

|

||||

"from stable_baselines3 import A2C\n",

|

||||

"from stable_baselines3.common.evaluation import evaluate_policy\n",

|

||||

"from stable_baselines3.common.vec_env import DummyVecEnv, VecNormalize\n",

|

||||

"from stable_baselines3.common.env_util import make_vec_env\n",

|

||||

"\n",

|

||||

"from huggingface_hub import notebook_login"

|

||||

],

|

||||

"metadata": {

|

||||

"id": "HpiB8VdnQ7Bk"

|

||||

},

|

||||

"execution_count": null,

|

||||

"outputs": []

|

||||

},

|

||||

{

|

||||

"cell_type": "markdown",

|

||||

"source": [

|

||||

"## Environment 1: AntBulletEnv-v0 🕸\n",

|

||||

"\n"

|

||||

],

|

||||

"metadata": {

|

||||

"id": "lfBwIS_oAVXI"

|

||||

}

|

||||

},

|

||||

{

|

||||

"cell_type": "markdown",

|

||||

"source": [

|

||||

"### Create the AntBulletEnv-v0\n",

|

||||

"#### The environment 🎮\n",

|

||||

"In this environment, the agent needs to use correctly its different joints to walk correctly.\n",

|

||||

"You can find a detailled explanation of this environment here: https://hackmd.io/@jeffreymo/SJJrSJh5_#PyBullet"

|

||||

],

|

||||

"metadata": {

|

||||

"id": "frVXOrnlBerQ"

|

||||

}

|

||||

},

|

||||

{

|

||||

"cell_type": "code",

|

||||

"source": [

|

||||

"env_id = \"AntBulletEnv-v0\"\n",

|

||||

"# Create the env\n",

|

||||

"env = gym.make(env_id)\n",

|

||||

"\n",

|

||||

"# Get the state space and action space\n",

|

||||

"s_size = env.observation_space.shape[0]\n",

|

||||

"a_size = env.action_space"

|

||||

],

|

||||

"metadata": {

|

||||

"id": "JpU-JCDQYYax"

|

||||

},

|

||||

"execution_count": null,

|

||||

"outputs": []

|

||||

},

|

||||

{

|

||||

"cell_type": "code",

|

||||

"source": [

|

||||

"print(\"_____OBSERVATION SPACE_____ \\n\")\n",

|

||||

"print(\"The State Space is: \", s_size)\n",

|

||||

"print(\"Sample observation\", env.observation_space.sample()) # Get a random observation"

|

||||

],

|

||||

"metadata": {

|

||||

"id": "2ZfvcCqEYgrg"

|

||||

},

|

||||

"execution_count": null,

|

||||

"outputs": []

|

||||

},

|

||||

{

|

||||

"cell_type": "markdown",

|

||||

"source": [

|

||||

"The observation Space (from [Jeffrey Y Mo](https://hackmd.io/@jeffreymo/SJJrSJh5_#PyBullet)):\n",

|

||||

"\n",

|

||||

"The difference is that our observation space is 28 not 29.\n",

|

||||

"\n",

|

||||

"<img src=\"https://huggingface.co/datasets/huggingface-deep-rl-course/course-images/resolve/main/en/unit8/obs_space.png\" alt=\"PyBullet Ant Obs space\"/>\n"

|

||||

],

|

||||

"metadata": {

|

||||

"id": "QzMmsdMJS7jh"

|

||||

}

|

||||

},

|

||||

{

|

||||

"cell_type": "code",

|

||||

"source": [

|

||||

"print(\"\\n _____ACTION SPACE_____ \\n\")\n",

|

||||

"print(\"The Action Space is: \", a_size)\n",

|

||||

"print(\"Action Space Sample\", env.action_space.sample()) # Take a random action"

|

||||

],

|

||||

"metadata": {

|

||||

"id": "Tc89eLTYYkK2"

|

||||

},

|

||||

"execution_count": null,

|

||||

"outputs": []

|

||||

},

|

||||

{

|

||||

"cell_type": "markdown",

|

||||

"source": [

|

||||

"The action Space (from [Jeffrey Y Mo](https://hackmd.io/@jeffreymo/SJJrSJh5_#PyBullet)):\n",

|

||||

"\n",

|

||||

"<img src=\"https://huggingface.co/datasets/huggingface-deep-rl-course/course-images/resolve/main/en/unit8/action_space.png\" alt=\"PyBullet Ant Obs space\"/>\n"

|

||||

],

|

||||

"metadata": {

|

||||

"id": "3RfsHhzZS9Pw"

|

||||

}

|

||||

},

|

||||

{

|

||||

"cell_type": "markdown",

|

||||

"source": [

|

||||

"### Normalize observation and rewards"

|

||||

],

|

||||

"metadata": {

|

||||

"id": "S5sXcg469ysB"

|

||||

}

|

||||

},

|

||||

{

|

||||

"cell_type": "markdown",

|

||||

"source": [

|

||||

"A good practice in reinforcement learning is to [normalize input features](https://stable-baselines3.readthedocs.io/en/master/guide/rl_tips.html). \n",

|

||||

"\n",

|

||||

"For that purpose, there is a wrapper that will compute a running average and standard deviation of input features.\n",

|

||||

"\n",

|

||||

"We also normalize rewards with this same wrapper by adding `norm_reward = True`\n",

|

||||

"\n",

|

||||

"[You should check the documentation to fill this cell](https://stable-baselines3.readthedocs.io/en/master/guide/vec_envs.html#vecnormalize)"

|

||||

],

|

||||

"metadata": {

|

||||

"id": "1ZyX6qf3Zva9"

|

||||

}

|

||||

},
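To make the idea of "a running average and standard deviation of input features" concrete, here is a conceptual sketch in plain Python. It is not Stable-Baselines3's implementation: the class name `RunningNormalizer`, its parameters, and the sample observations are all hypothetical, and the statistics are updated with Welford's online algorithm as one reasonable choice.

```python
import math


class RunningNormalizer:
    """Tracks a running mean/variance per feature (Welford's algorithm)."""

    def __init__(self, n_features: int, clip: float = 10.0, eps: float = 1e-8):
        self.count = 0
        self.mean = [0.0] * n_features
        self.m2 = [0.0] * n_features  # sum of squared deviations from the mean
        self.clip = clip
        self.eps = eps

    def update(self, obs):
        """Fold one observation vector into the running statistics."""
        self.count += 1
        for i, x in enumerate(obs):
            delta = x - self.mean[i]
            self.mean[i] += delta / self.count
            self.m2[i] += delta * (x - self.mean[i])

    def normalize(self, obs):
        """Scale obs to roughly zero mean / unit variance, then clip."""
        out = []
        for i, x in enumerate(obs):
            var = self.m2[i] / self.count if self.count > 0 else 1.0
            z = (x - self.mean[i]) / math.sqrt(var + self.eps)
            out.append(max(-self.clip, min(self.clip, z)))
        return out


# Two features on very different scales end up comparable after normalizing.
norm = RunningNormalizer(n_features=2)
for obs in [[1.0, 100.0], [2.0, 200.0], [3.0, 300.0]]:
    norm.update(obs)
print(norm.normalize([3.0, 300.0]))
```

The clipping step bounds extreme values so that a single outlier observation cannot dominate the network's input.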

|

||||

{

|

||||

"cell_type": "code",

|

||||

"source": [

|

||||

"env = make_vec_env(env_id, n_envs=4)\n",

|

||||

"\n",

|

||||

"# Adding this wrapper to normalize the observation and the reward\n",

|

||||

"env = # TODO: Add the wrapper"

|

||||

],

|

||||

"metadata": {

|

||||

"id": "1RsDtHHAQ9Ie"

|

||||

},

|

||||

"execution_count": null,

|

||||

"outputs": []

|

||||

},

|

||||

{

|

||||

"cell_type": "markdown",

|

||||

"source": [

|

||||

"#### Solution"

|

||||

],

|

||||

"metadata": {

|

||||

"id": "tF42HvI7-gs5"

|

||||

}

|

||||

},

|

||||

{

|

||||

"cell_type": "code",

|

||||

"source": [

|

||||

"env = make_vec_env(env_id, n_envs=4)\n",

|

||||

"\n",

|

||||

"env = VecNormalize(env, norm_obs=True, norm_reward=False, clip_obs=10.)"

|

||||

],

|

||||

"metadata": {

|

||||

"id": "2O67mqgC-hol"

|

||||

},

|

||||

"execution_count": null,

|

||||

"outputs": []

|

||||

},

|

||||

{

|

||||

"cell_type": "markdown",

|

||||

"source": [

|

||||

"### Create the A2C Model 🤖\n",

|

||||

"\n",

|

||||

"In this case, because we have a vector of 28 values as input, we'll use an MLP (multi-layer perceptron) as policy.\n",

|

||||

"\n",

|

||||

"For more information about A2C implementation with StableBaselines3 check: https://stable-baselines3.readthedocs.io/en/master/modules/a2c.html#notes\n",

|

||||

"\n",

|

||||

"To find the best parameters I checked the [official trained agents by Stable-Baselines3 team](https://huggingface.co/sb3)."

|

||||

],

|

||||

"metadata": {

|

||||

"id": "4JmEVU6z1ZA-"

|

||||

}

|

||||

},

|

||||

{

|

||||

"cell_type": "code",

|

||||

"source": [

|

||||

"model = # Create the A2C model and try to find the best parameters"

|

||||

],

|

||||

"metadata": {

|

||||

"id": "vR3T4qFt164I"

|

||||

},

|

||||

"execution_count": null,

|

||||

"outputs": []

|

||||

},

|

||||

{

|

||||

"cell_type": "markdown",

|

||||

"source": [

|

||||

"#### Solution"

|

||||

],

|

||||

"metadata": {

|

||||

"id": "nWAuOOLh-oQf"

|

||||

}

|

||||

},

|

||||

{

|

||||

"cell_type": "code",

|

||||

"source": [

|

||||

"model = A2C(policy = \"MlpPolicy\",\n",

|

||||

" env = env,\n",

|

||||

" gae_lambda = 0.9,\n",

|

||||

" gamma = 0.99,\n",

|

||||

" learning_rate = 0.00096,\n",

|

||||

" max_grad_norm = 0.5,\n",

|

||||

" n_steps = 8,\n",

|

||||

" vf_coef = 0.4,\n",

|

||||

" ent_coef = 0.0,\n",

|

||||

" policy_kwargs=dict(\n",

|

||||

" log_std_init=-2, ortho_init=False),\n",

|

||||

" normalize_advantage=False,\n",

|

||||

" use_rms_prop= True,\n",

|

||||

" use_sde= True,\n",

|

||||

" verbose=1)"

|

||||

],

|

||||

"metadata": {

|

||||

"id": "FKFLY54T-pU1"

|

||||

},

|

||||

"execution_count": null,

|

||||

"outputs": []

|

||||

},

|

||||

{

|

||||

"cell_type": "markdown",