mirror of

https://github.com/huggingface/deep-rl-class.git

synced 2026-04-15 02:41:20 +08:00

Merge pull request #173 from huggingface/ThomasSimonini/MLAgents

Add Unit Introduction to ML-Agents


844

notebooks/unit5/unit5.ipynb

Normal file


@@ -0,0 +1,844 @@

|

||||

{

|

||||

"cells": [

|

||||

{

|

||||

"cell_type": "markdown",

|

||||

"metadata": {

|

||||

"id": "view-in-github",

|

||||

"colab_type": "text"

|

||||

},

|

||||

"source": [

|

||||

"<a href=\"https://colab.research.google.com/github/huggingface/deep-rl-class/blob/ThomasSimonini%2FMLAgents/notebooks/unit5/unit5.ipynb\" target=\"_parent\"><img src=\"https://colab.research.google.com/assets/colab-badge.svg\" alt=\"Open In Colab\"/></a>"

|

||||

]

|

||||

},

|

||||

{

|

||||

"cell_type": "markdown",

|

||||

"metadata": {

|

||||

"id": "2D3NL_e4crQv"

|

||||

},

|

||||

"source": [

|

||||

"# Unit 5: An Introduction to ML-Agents\n",

|

||||

"\n"

|

||||

]

|

||||

},

|

||||

{

|

||||

"cell_type": "markdown",

|

||||

"source": [

|

||||

"<img src=\"https://huggingface.co/datasets/huggingface-deep-rl-course/course-images/resolve/main/en/unit7/thumbnail.png\" alt=\"Thumbnail\"/>\n",

|

||||

"\n",

|

||||

"In this notebook, you'll learn about ML-Agents and train two agents.\n",

|

||||

"\n",

|

||||

"- The first one will learn to **shoot snowballs onto spawning targets**.\n",

"- The second will need to press a button to spawn a pyramid, navigate to the pyramid, knock it over, **and move to the gold brick at the top**. To do that, it will need to explore its environment, and we will use a technique called curiosity.\n",

|

||||

"\n",

"After that, you'll be able **to watch your agents playing directly in your browser**.\n",

|

||||

"\n",

|

||||

"For more information about the certification process, check this section 👉 https://huggingface.co/deep-rl-course/en/unit0/introduction#certification-process"

|

||||

],

|

||||

"metadata": {

|

||||

"id": "97ZiytXEgqIz"

|

||||

}

|

||||

},

|

||||

{

|

||||

"cell_type": "markdown",

|

||||

"source": [

|

||||

"⬇️ Here is an example of what **you will achieve at the end of this unit.** ⬇️\n"

|

||||

],

|

||||

"metadata": {

|

||||

"id": "FMYrDriDujzX"

|

||||

}

|

||||

},

|

||||

{

|

||||

"cell_type": "markdown",

|

||||

"source": [

|

||||

"<img src=\"https://huggingface.co/datasets/huggingface-deep-rl-course/course-images/resolve/main/en/unit7/pyramids.gif\" alt=\"Pyramids\"/>\n",

|

||||

"\n",

|

||||

"<img src=\"https://huggingface.co/datasets/huggingface-deep-rl-course/course-images/resolve/main/en/unit7/snowballtarget.gif\" alt=\"SnowballTarget\"/>"

|

||||

],

|

||||

"metadata": {

|

||||

"id": "cBmFlh8suma-"

|

||||

}

|

||||

},

|

||||

{

|

||||

"cell_type": "markdown",

|

||||

"source": [

|

||||

"### 🎮 Environments: \n",

|

||||

"\n",

|

||||

"- [Pyramids](https://github.com/Unity-Technologies/ml-agents/blob/main/docs/Learning-Environment-Examples.md#pyramids)\n",

|

||||

"- SnowballTarget\n",

|

||||

"\n",

|

||||

"### 📚 RL-Library: \n",

|

||||

"\n",

|

||||

"- [ML-Agents (HuggingFace Experimental Version)](https://github.com/huggingface/ml-agents)\n",

|

||||

"\n",

"⚠ We're going to use an experimental version of ML-Agents that lets you push Unity ML-Agents models to the Hub and load them from it, so **you need to install the same version**."

|

||||

],

|

||||

"metadata": {

|

||||

"id": "A-cYE0K5iL-w"

|

||||

}

|

||||

},

|

||||

{

|

||||

"cell_type": "markdown",

|

||||

"source": [

|

||||

"We're constantly trying to improve our tutorials, so **if you find some issues in this notebook**, please [open an issue on the GitHub Repo](https://github.com/huggingface/deep-rl-class/issues)."

|

||||

],

|

||||

"metadata": {

|

||||

"id": "qEhtaFh9i31S"

|

||||

}

|

||||

},

|

||||

{

|

||||

"cell_type": "markdown",

|

||||

"source": [

|

||||

"## Objectives of this notebook 🏆\n",

|

||||

"\n",

|

||||

"At the end of the notebook, you will:\n",

|

||||

"\n",

"- Understand how **ML-Agents**, the environment library, works.\n",

|

||||

"- Be able to **train agents in Unity Environments**.\n"

|

||||

],

|

||||

"metadata": {

|

||||

"id": "j7f63r3Yi5vE"

|

||||

}

|

||||

},

|

||||

{

|

||||

"cell_type": "markdown",

|

||||

"source": [

|

||||

"## This notebook is from the Deep Reinforcement Learning Course\n",

|

||||

"<img src=\"https://huggingface.co/datasets/huggingface-deep-rl-course/course-images/resolve/main/en/notebooks/deep-rl-course-illustration.jpg\" alt=\"Deep RL Course illustration\"/>"

|

||||

],

|

||||

"metadata": {

|

||||

"id": "viNzVbVaYvY3"

|

||||

}

|

||||

},

|

||||

{

|

||||

"cell_type": "markdown",

|

||||

"metadata": {

|

||||

"id": "6p5HnEefISCB"

|

||||

},

|

||||

"source": [

|

||||

"In this free course, you will:\n",

|

||||

"\n",

|

||||

"- 📖 Study Deep Reinforcement Learning in **theory and practice**.\n",

"- 🧑‍💻 Learn to **use famous Deep RL libraries** such as Stable Baselines3, RL Baselines3 Zoo, CleanRL and Sample Factory 2.0.\n",

|

||||

"- 🤖 Train **agents in unique environments** \n",

|

||||

"\n",

"And more! Check the 📚 syllabus 👉 https://huggingface.co/deep-rl-course/communication/publishing-schedule\n",
"\n",
"Don’t forget to **<a href=\"http://eepurl.com/ic5ZUD\">sign up to the course</a>** (we are collecting your email to be able to **send you the links when each Unit is published and give you information about the challenges and updates**).\n",
"\n",
"\n",
"The best way to keep in touch is to join our Discord server to chat with the community and with us 👉🏻 https://discord.gg/ydHrjt3WP5"

|

||||

]

|

||||

},

|

||||

{

|

||||

"cell_type": "markdown",

|

||||

"metadata": {

|

||||

"id": "Y-mo_6rXIjRi"

|

||||

},

|

||||

"source": [

|

||||

"## Prerequisites 🏗️\n",

|

||||

"Before diving into the notebook, you need to:\n",

|

||||

"\n",

|

||||

"🔲 📚 **Study [what is ML-Agents and how it works by reading Unit 5](https://huggingface.co/deep-rl-course/unit5/introduction)** 🤗 "

|

||||

]

|

||||

},

|

||||

{

|

||||

"cell_type": "markdown",

|

||||

"source": [

|

||||

"# Let's train our agents 🚀\n",

|

||||

"\n",

"The ML-Agents integration on the Hub is **still experimental**; some features will be added in the future. \n",
"\n",
"But for now, **to validate this hands-on for the certification process, you just need to push your trained models to the Hub**. There are no minimum results to attain to validate this one. But if you want to get nice results, you can try to attain:\n",

|

||||

"\n",

|

||||

"- For `Pyramids` : Mean Reward = 1.75\n",

|

||||

"- For `SnowballTarget` : Mean Reward = 15 or 30 targets hit in an episode.\n"

|

||||

],

|

||||

"metadata": {

|

||||

"id": "xYO1uD5Ujgdh"

|

||||

}

|

||||

},

|

||||

{

|

||||

"cell_type": "markdown",

|

||||

"source": [

|

||||

"## Set the GPU 💪\n",

|

||||

"- To **accelerate the agent's training, we'll use a GPU**. To do that, go to `Runtime > Change Runtime type`\n",

|

||||

"\n",

|

||||

"<img src=\"https://huggingface.co/datasets/huggingface-deep-rl-course/course-images/resolve/main/en/notebooks/gpu-step1.jpg\" alt=\"GPU Step 1\">"

|

||||

],

|

||||

"metadata": {

|

||||

"id": "DssdIjk_8vZE"

|

||||

}

|

||||

},

|

||||

{

|

||||

"cell_type": "markdown",

|

||||

"source": [

|

||||

"- `Hardware Accelerator > GPU`\n",

|

||||

"\n",

|

||||

"<img src=\"https://huggingface.co/datasets/huggingface-deep-rl-course/course-images/resolve/main/en/notebooks/gpu-step2.jpg\" alt=\"GPU Step 2\">"

|

||||

],

|

||||

"metadata": {

|

||||

"id": "sTfCXHy68xBv"

|

||||

}

|

||||

},

|

||||

{

|

||||

"cell_type": "markdown",

|

||||

"metadata": {

|

||||

"id": "an3ByrXYQ4iK"

|

||||

},

|

||||

"source": [

|

||||

"## Clone the repository and install the dependencies 🔽\n",

"- We need to clone the repository, which **contains the experimental version of the library that allows you to push your trained agent to the Hub.**"

|

||||

]

|

||||

},

|

||||

{

|

||||

"cell_type": "code",

|

||||

"execution_count": null,

|

||||

"metadata": {

|

||||

"id": "6WNoL04M7rTa"

|

||||

},

|

||||

"outputs": [],

|

||||

"source": [

|

||||

"%%capture\n",

|

||||

"# Clone the repository\n",

|

||||

"!git clone --depth 1 https://github.com/huggingface/ml-agents/ "

|

||||

]

|

||||

},

|

||||

{

|

||||

"cell_type": "code",

|

||||

"execution_count": null,

|

||||

"metadata": {

|

||||

"id": "d8wmVcMk7xKo"

|

||||

},

|

||||

"outputs": [],

|

||||

"source": [

|

||||

"%%capture\n",

|

||||

"# Go inside the repository and install the package\n",

|

||||

"%cd ml-agents\n",

|

||||

"!pip3 install -e ./ml-agents-envs\n",

|

||||

"!pip3 install -e ./ml-agents"

|

||||

]

|

||||

},

|

||||

{

|

||||

"cell_type": "markdown",

|

||||

"source": [

|

||||

"## SnowballTarget ⛄\n",

|

||||

"\n",

"If you need a refresher on how this environment works, check this section 👉\n",

|

||||

"https://huggingface.co/deep-rl-course/unit5/snowball-target"

|

||||

],

|

||||

"metadata": {

|

||||

"id": "R5_7Ptd_kEcG"

|

||||

}

|

||||

},

|

||||

{

|

||||

"cell_type": "markdown",

|

||||

"metadata": {

|

||||

"id": "HRY5ufKUKfhI"

|

||||

},

|

||||

"source": [

"### Download the environment zip file and move it to `./training-envs-executables/linux/`\n",
"- Our environment executable is in a zip file.\n",
"- We need to download it and place it in `./training-envs-executables/linux/`\n",
"- We use a Linux executable because we use Colab, and Colab machines run Ubuntu (Linux)."

|

||||

]

|

||||

},

|

||||

{

|

||||

"cell_type": "code",

|

||||

"execution_count": null,

|

||||

"metadata": {

|

||||

"id": "C9Ls6_6eOKiA"

|

||||

},

|

||||

"outputs": [],

|

||||

"source": [

"# Create training-envs-executables/linux (-p also creates the parent directory and is safe to rerun)\n",
"!mkdir -p ./training-envs-executables/linux"

|

||||

]

|

||||

},

|

||||

{

|

||||

"cell_type": "markdown",

|

||||

"metadata": {

|

||||

"id": "jsoZGxr1MIXY"

|

||||

},

|

||||

"source": [

|

||||

"Download the file SnowballTarget.zip from https://drive.google.com/file/d/1YHHLjyj6gaZ3Gemx1hQgqrPgSS2ZhmB5 using `wget`. \n",

|

||||

"\n",

|

||||

"Check out the full solution to download large files from GDrive [here](https://bcrf.biochem.wisc.edu/2021/02/05/download-google-drive-files-using-wget/)"

|

||||

]

|

||||

},

|

||||

{

|

||||

"cell_type": "code",

|

||||

"execution_count": null,

|

||||

"metadata": {

|

||||

"id": "QU6gi8CmWhnA"

|

||||

},

|

||||

"outputs": [],

|

||||

"source": [

|

||||

"!wget --load-cookies /tmp/cookies.txt \"https://docs.google.com/uc?export=download&confirm=$(wget --quiet --save-cookies /tmp/cookies.txt --keep-session-cookies --no-check-certificate 'https://docs.google.com/uc?export=download&id=1YHHLjyj6gaZ3Gemx1hQgqrPgSS2ZhmB5' -O- | sed -rn 's/.*confirm=([0-9A-Za-z_]+).*/\\1\\n/p')&id=1YHHLjyj6gaZ3Gemx1hQgqrPgSS2ZhmB5\" -O ./training-envs-executables/linux/SnowballTarget.zip && rm -rf /tmp/cookies.txt"

|

||||

]

|

||||

},

|

||||

{

|

||||

"cell_type": "markdown",

|

||||

"source": [

"Unzip the `SnowballTarget.zip` file:"

|

||||

],

|

||||

"metadata": {

|

||||

"id": "_LLVaEEK3ayi"

|

||||

}

|

||||

},

|

||||

{

|

||||

"cell_type": "code",

|

||||

"execution_count": null,

|

||||

"metadata": {

|

||||

"id": "8FPx0an9IAwO"

|

||||

},

|

||||

"outputs": [],

|

||||

"source": [

|

||||

"%%capture\n",

|

||||

"!unzip -d ./training-envs-executables/linux/ ./training-envs-executables/linux/SnowballTarget.zip"

|

||||

]

|

||||

},

|

||||

{

|

||||

"cell_type": "markdown",

|

||||

"metadata": {

|

||||

"id": "nyumV5XfPKzu"

|

||||

},

|

||||

"source": [

"Make sure the executable has the permissions it needs to be launched:"

|

||||

]

|

||||

},

|

||||

{

|

||||

"cell_type": "code",

|

||||

"execution_count": null,

|

||||

"metadata": {

|

||||

"id": "EdFsLJ11JvQf"

|

||||

},

|

||||

"outputs": [],

|

||||

"source": [

|

||||

"!chmod -R 755 ./training-envs-executables/linux/SnowballTarget"

|

||||

]

|

||||

},

|

||||

{

|

||||

"cell_type": "markdown",

|

||||

"source": [

|

||||

"### Define the SnowballTarget config file\n",

"- In ML-Agents, you define the **training hyperparameters in `config.yaml` files.**\n",
"\n",
"There are many hyperparameters. To understand them better, check the explanation of each one in [the documentation](https://github.com/Unity-Technologies/ml-agents/blob/release_20_docs/docs/Training-Configuration-File.md).\n",
"\n",
"\n",
"So you need to create a `SnowballTarget.yaml` config file in `/content/ml-agents/config/ppo/`.\n",
"\n",
"Here is a first version of this config (to copy and paste into your `SnowballTarget.yaml` file), **but you should modify it**.\n",

|

||||

"\n",

|

||||

"```\n",

"behaviors:\n",
"  SnowballTarget:\n",
"    trainer_type: ppo\n",
"    summary_freq: 10000\n",
"    keep_checkpoints: 10\n",
"    checkpoint_interval: 50000\n",
"    max_steps: 200000\n",
"    time_horizon: 64\n",
"    threaded: true\n",
"    hyperparameters:\n",
"      learning_rate: 0.0003\n",
"      learning_rate_schedule: linear\n",
"      batch_size: 128\n",
"      buffer_size: 2048\n",
"      beta: 0.005\n",
"      epsilon: 0.2\n",
"      lambd: 0.95\n",
"      num_epoch: 3\n",
"    network_settings:\n",
"      normalize: false\n",
"      hidden_units: 256\n",
"      num_layers: 2\n",
"      vis_encode_type: simple\n",
"    reward_signals:\n",
"      extrinsic:\n",
"        gamma: 0.99\n",
"        strength: 1.0\n",

|

||||

"```"

|

||||

],

|

||||

"metadata": {

|

||||

"id": "NAuEq32Mwvtz"

|

||||

}

|

||||

},

|

||||

{

|

||||

"cell_type": "markdown",

|

||||

"source": [

|

||||

"<img src=\"https://huggingface.co/datasets/huggingface-deep-rl-course/course-images/resolve/main/en/unit7/snowballfight_config1.png\" alt=\"Config SnowballTarget\"/>\n",

|

||||

"<img src=\"https://huggingface.co/datasets/huggingface-deep-rl-course/course-images/resolve/main/en/unit7/snowballfight_config2.png\" alt=\"Config SnowballTarget\"/>"

|

||||

],

|

||||

"metadata": {

|

||||

"id": "4U3sRH4N4h_l"

|

||||

}

|

||||

},

|

||||

{

|

||||

"cell_type": "markdown",

|

||||

"source": [

"As an experiment, you should also try modifying some other hyperparameters. Unity provides very [good documentation explaining each of them here](https://github.com/Unity-Technologies/ml-agents/blob/main/docs/Training-Configuration-File.md).\n",

|

||||

"\n",

|

||||

"Now that you've created the config file and understand what most hyperparameters do, we're ready to train our agent 🔥."

|

||||

],

|

||||

"metadata": {

|

||||

"id": "JJJdo_5AyoGo"

|

||||

}

|

||||

},

|

||||

{

|

||||

"cell_type": "markdown",

|

||||

"metadata": {

|

||||

"id": "f9fI555bO12v"

|

||||

},

|

||||

"source": [

|

||||

"### Train the agent\n",

|

||||

"\n",

|

||||

"To train our agent, we just need to **launch mlagents-learn and select the executable containing the environment.**\n",

|

||||

"\n",

|

||||

"We define four parameters:\n",

|

||||

"\n",

|

||||

"1. `mlagents-learn <config>`: the path where the hyperparameter config file is.\n",

|

||||

"2. `--env`: where the environment executable is.\n",

"3. `--run-id`: the name you want to give to your training run.\n",

|

||||

"4. `--no-graphics`: to not launch the visualization during the training.\n",

|

||||

"\n",

|

||||

"<img src=\"https://huggingface.co/datasets/huggingface-deep-rl-course/course-images/resolve/main/en/unit7/mlagentslearn.png\" alt=\"MlAgents learn\"/>\n",

|

||||

"\n",

"Train the model. If training is interrupted, rerun the same command with the `--resume` flag added to continue the run. \n",
"\n",
"> When you use `--resume`, the first attempt will fail; run the cell again to get past the error. \n",

|

||||

"\n"

|

||||

]

|

||||

},

|

||||

{

|

||||

"cell_type": "markdown",

|

||||

"source": [

"The training will take 10 to 35 minutes depending on your config. Go take a ☕️, you deserve it 🤗."

|

||||

],

|

||||

"metadata": {

|

||||

"id": "lN32oWF8zPjs"

|

||||

}

|

||||

},

|

||||

{

|

||||

"cell_type": "code",

|

||||

"execution_count": null,

|

||||

"metadata": {

|

||||

"id": "bS-Yh1UdHfzy"

|

||||

},

|

||||

"outputs": [],

|

||||

"source": [

|

||||

"!mlagents-learn ./config/ppo/SnowballTarget.yaml --env=./training-envs-executables/linux/SnowballTarget/SnowballTarget --run-id=\"SnowballTarget1\" --no-graphics"

|

||||

]

|

||||

},

|

||||

{

|

||||

"cell_type": "markdown",

|

||||

"metadata": {

|

||||

"id": "5Vue94AzPy1t"

|

||||

},

|

||||

"source": [

|

||||

"### Push the agent to the 🤗 Hub\n",

|

||||

"\n",

"- Now that we've trained our agent, we’re **ready to push it to the Hub so you can watch it play in your browser 🔥.**"

|

||||

]

|

||||

},

|

||||

{

|

||||

"cell_type": "markdown",

|

||||

"source": [

|

||||

"To be able to share your model with the community there are three more steps to follow:\n",

|

||||

"\n",

"1️⃣ (If it's not already done) create a Hugging Face account ➡ https://huggingface.co/join\n",
"\n",
"2️⃣ Sign in, then store your authentication token from the Hugging Face website.\n",

|

||||

"- Create a new token (https://huggingface.co/settings/tokens) **with write role**\n",

|

||||

"\n",

|

||||

"<img src=\"https://huggingface.co/datasets/huggingface-deep-rl-course/course-images/resolve/main/en/notebooks/create-token.jpg\" alt=\"Create HF Token\">\n",

|

||||

"\n",

|

||||

"- Copy the token \n",

|

||||

"- Run the cell below and paste the token"

|

||||

],

|

||||

"metadata": {

|

||||

"id": "izT6FpgNzZ6R"

|

||||

}

|

||||

},

|

||||

{

|

||||

"cell_type": "code",

|

||||

"execution_count": null,

|

||||

"metadata": {

|

||||

"id": "rKt2vsYoK56o"

|

||||

},

|

||||

"outputs": [],

|

||||

"source": [

|

||||

"from huggingface_hub import notebook_login\n",

|

||||

"notebook_login()"

|

||||

]

|

||||

},

|

||||

{

|

||||

"cell_type": "markdown",

|

||||

"source": [

|

||||

"If you don't want to use a Google Colab or a Jupyter Notebook, you need to use this command instead: `huggingface-cli login`"

|

||||

],

|

||||

"metadata": {

|

||||

"id": "aSU9qD9_6dem"

|

||||

}

|

||||

},

|

||||

{

|

||||

"cell_type": "markdown",

|

||||

"source": [

|

||||

"Then, we simply need to run `mlagents-push-to-hf`.\n",

|

||||

"\n",

|

||||

"And we define 4 parameters:\n",

|

||||

"\n",

|

||||

"1. `--run-id`: the name of the training run id.\n",

"2. `--local-dir`: where the agent was saved. It’s `results/<run_id name>`, so here `results/SnowballTarget1`.\n",
"3. `--repo-id`: the name of the Hugging Face repo you want to create or update. It’s always `<your huggingface username>/<the repo name>`.\n",
"If the repo does not exist, **it will be created automatically**.\n",
"4. `--commit-message`: since HF repos are git repositories, you need to define a commit message.\n",

|

||||

"\n",

|

||||

"<img src=\"https://huggingface.co/datasets/huggingface-deep-rl-course/course-images/resolve/main/en/unit7/mlagentspushtohub.png\" alt=\"Push to Hub\"/>\n",

|

||||

"\n",

|

||||

"For instance:\n",

|

||||

"\n",

|

||||

"`!mlagents-push-to-hf --run-id=\"SnowballTarget1\" --local-dir=\"./results/SnowballTarget1\" --repo-id=\"ThomasSimonini/ppo-SnowballTarget\" --commit-message=\"First Push\"`"

|

||||

],

|

||||

"metadata": {

|

||||

"id": "KK4fPfnczunT"

|

||||

}

|

||||

},

|

||||

{

|

||||

"cell_type": "code",

|

||||

"execution_count": null,

|

||||

"metadata": {

|

||||

"id": "dGEFAIboLVc6"

|

||||

},

|

||||

"outputs": [],

|

||||

"source": [

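"# Fill in the four values before running; these follow the example above (replace YOUR_USERNAME with your Hugging Face username)\n",
"!mlagents-push-to-hf --run-id=\"SnowballTarget1\" --local-dir=\"./results/SnowballTarget1\" --repo-id=\"YOUR_USERNAME/ppo-SnowballTarget\" --commit-message=\"First Push\""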

|

||||

]

|

||||

},

|

||||

{

|

||||

"cell_type": "markdown",

|

||||

"source": [

"If everything worked, you should see this at the end of the process (but with a different URL 😆):\n",

|

||||

"\n",

|

||||

"\n",

|

||||

"\n",

|

||||

"```\n",

|

||||

"Your model is pushed to the hub. You can view your model here: https://huggingface.co/ThomasSimonini/ppo-SnowballTarget\n",

|

||||

"```\n",

|

||||

"\n",

"It’s the link to your model. The repository contains a model card that explains how to use it, your TensorBoard logs and your config file. **What’s awesome is that it’s a git repository, which means you can have different commits, update your repository with a new push, etc.**"

|

||||

],

|

||||

"metadata": {

|

||||

"id": "yborB0850FTM"

|

||||

}

|

||||

},

|

||||

{

|

||||

"cell_type": "markdown",

|

||||

"source": [

"But now comes the best part: **being able to visualize your agent online 👀.**"

|

||||

],

|

||||

"metadata": {

|

||||

"id": "5Uaon2cg0NrL"

|

||||

}

|

||||

},

|

||||

{

|

||||

"cell_type": "markdown",

|

||||

"source": [

|

||||

"### Watch your agent playing 👀\n",

|

||||

"\n",

|

||||

"For this step it’s simple:\n",

|

||||

"\n",

|

||||

"1. Remember your repo-id\n",

|

||||

"\n",

|

||||

"2. Go here: https://singularite.itch.io/snowballtarget\n",

|

||||

"\n",

|

||||

"3. Launch the game and put it in full screen by clicking on the bottom right button\n",

|

||||

"\n",

|

||||

"<img src=\"https://huggingface.co/datasets/huggingface-deep-rl-course/course-images/resolve/main/en/unit7/snowballtarget_load.png\" alt=\"Snowballtarget load\"/>"

|

||||

],

|

||||

"metadata": {

|

||||

"id": "VMc4oOsE0QiZ"

|

||||

}

|

||||

},

|

||||

{

|

||||

"cell_type": "markdown",

|

||||

"source": [

"1. In step 1, choose your model repository, which is the model id (in my case ThomasSimonini/ppo-SnowballTarget).\n",
"\n",
"2. In step 2, **choose which model you want to replay**:\n",
"   - I have multiple ones, since we saved a model every 50000 timesteps (the `checkpoint_interval` in the config). \n",
"   - But if I want the most recent one, I choose `SnowballTarget.onnx`\n",
"\n",
"👉 It’s nice **to try different model checkpoints to see how the agent improves.**\n",
"\n",
"And don't hesitate to share the best score your agent gets on Discord in the #rl-i-made-this channel 🔥\n",

|

||||

"\n",

|

||||

"Let's now try a harder environment called Pyramids..."

|

||||

],

|

||||

"metadata": {

|

||||

"id": "Djs8c5rR0Z8a"

|

||||

}

|

||||

},

|

||||

{

|

||||

"cell_type": "markdown",

|

||||

"source": [

|

||||

"## Pyramids 🏆\n",

|

||||

"\n",

"### Download the environment zip file and move it to `./training-envs-executables/linux/`\n",
"- Our environment executable is in a zip file.\n",
"- We need to download it and place it in `./training-envs-executables/linux/`\n",
"- We use a Linux executable because we use Colab, and Colab machines run Ubuntu (Linux)."

|

||||

],

|

||||

"metadata": {

|

||||

"id": "rVMwRi4y_tmx"

|

||||

}

|

||||

},

|

||||

{

|

||||

"cell_type": "markdown",

|

||||

"metadata": {

|

||||

"id": "NyqYYkLyAVMK"

|

||||

},

|

||||

"source": [

|

||||

"Download the file Pyramids.zip from https://drive.google.com/uc?export=download&id=1UiFNdKlsH0NTu32xV-giYUEVKV4-vc7H using `wget`. Check out the full solution to download large files from GDrive [here](https://bcrf.biochem.wisc.edu/2021/02/05/download-google-drive-files-using-wget/)"

|

||||

]

|

||||

},

|

||||

{

|

||||

"cell_type": "code",

|

||||

"execution_count": null,

|

||||

"metadata": {

|

||||

"id": "AxojCsSVAVMP"

|

||||

},

|

||||

"outputs": [],

|

||||

"source": [

|

||||

"!wget --load-cookies /tmp/cookies.txt \"https://docs.google.com/uc?export=download&confirm=$(wget --quiet --save-cookies /tmp/cookies.txt --keep-session-cookies --no-check-certificate 'https://docs.google.com/uc?export=download&id=1UiFNdKlsH0NTu32xV-giYUEVKV4-vc7H' -O- | sed -rn 's/.*confirm=([0-9A-Za-z_]+).*/\\1\\n/p')&id=1UiFNdKlsH0NTu32xV-giYUEVKV4-vc7H\" -O ./training-envs-executables/linux/Pyramids.zip && rm -rf /tmp/cookies.txt"

|

||||

]

|

||||

},

|

||||

{

|

||||

"cell_type": "markdown",

|

||||

"metadata": {

|

||||

"id": "bfs6CTJ1AVMP"

|

||||

},

|

||||

"source": [

"**OR** download it directly to your local machine, then drag and drop the file from your local machine to `./training-envs-executables/linux`"

|

||||

]

|

||||

},

|

||||

{

|

||||

"cell_type": "markdown",

|

||||

"metadata": {

|

||||

"id": "H7JmgOwcSSmF"

|

||||

},

|

||||

"source": [

|

||||

"Wait for the upload to finish and then run the command below. \n",

|

||||

"\n",

|

||||

""

|

||||

]

|

||||

},

|

||||

{

|

||||

"cell_type": "markdown",

|

||||

"source": [

|

||||

"Unzip it"

|

||||

],

|

||||

"metadata": {

|

||||

"id": "iWUUcs0_794U"

|

||||

}

|

||||

},

|

||||

{

|

||||

"cell_type": "code",

|

||||

"execution_count": null,

|

||||

"metadata": {

|

||||

"id": "i2E3K4V2AVMP"

|

||||

},

|

||||

"outputs": [],

|

||||

"source": [

|

||||

"%%capture\n",

|

||||

"!unzip -d ./training-envs-executables/linux/ ./training-envs-executables/linux/Pyramids.zip"

|

||||

]

|

||||

},

|

||||

{

|

||||

"cell_type": "markdown",

|

||||

"metadata": {

|

||||

"id": "KmKYBgHTAVMP"

|

||||

},

|

||||

"source": [

"Make sure the executable has the permissions it needs to be launched:"

|

||||

]

|

||||

},

|

||||

{

|

||||

"cell_type": "code",

|

||||

"execution_count": null,

|

||||

"metadata": {

|

||||

"id": "Im-nwvLPAVMP"

|

||||

},

|

||||

"outputs": [],

|

||||

"source": [

|

||||

"!chmod -R 755 ./training-envs-executables/linux/Pyramids/Pyramids"

|

||||

]

|

||||

},

|

||||

{

|

||||

"cell_type": "markdown",

|

||||

"source": [

|

||||

"### Modify the PyramidsRND config file\n",

"- Contrary to the first environment, which was a custom one, **Pyramids was made by the Unity team**.\n",
"- So the PyramidsRND config file already exists, at `/content/ml-agents/config/ppo/PyramidsRND.yaml`.\n",
"- You might ask why \"RND\" in PyramidsRND. RND stands for *random network distillation*; it's a way to generate curiosity rewards. If you want to know more, we wrote an article explaining this technique: https://medium.com/data-from-the-trenches/curiosity-driven-learning-through-random-network-distillation-488ffd8e5938\n",

|

||||
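"\n",
"For orientation, a curiosity reward in an ML-Agents config is declared next to the extrinsic one under `reward_signals`. The fragment below is only a sketch: the values are illustrative assumptions and further keys are left out, so check `PyramidsRND.yaml` in your clone for the actual settings.\n",
"\n",
"```yaml\n",
"reward_signals:\n",
"  extrinsic:        # reward given by the environment\n",
"    gamma: 0.99\n",
"    strength: 1.0\n",
"  rnd:              # curiosity reward from random network distillation\n",
"    gamma: 0.99\n",
"    strength: 0.01  # assumed value: kept small relative to the extrinsic reward\n",
"```\n",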

"\n",

|

||||

"For this training, we’ll modify one thing:\n",

"- The total training steps hyperparameter is too high, since we can hit the benchmark (mean reward = 1.75) in only 1M training steps.\n",
"👉 To do that, we go to `config/ppo/PyramidsRND.yaml` **and set `max_steps` to 1000000.**\n",

|

||||
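"\n",
"As a minimal sketch of that edit, assuming the behavior in the stock file is named `Pyramids`, only the `max_steps` line changes. Every other key stays as it is in your clone and is elided here.\n",
"\n",
"```yaml\n",
"behaviors:\n",
"  Pyramids:            # assumed behavior name, check your file\n",
"    # ... other keys left unchanged ...\n",
"    max_steps: 1000000\n",
"```\n",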

"\n",

|

||||

"<img src=\"https://huggingface.co/datasets/huggingface-deep-rl-course/course-images/resolve/main/en/unit7/pyramids-config.png\" alt=\"Pyramids config\"/>"

|

||||

],

|

||||

"metadata": {

|

||||

"id": "fqceIATXAgih"

|

||||

}

|

||||

},

|

||||

{

|

||||

"cell_type": "markdown",

|

||||

"source": [

"As an experiment, you should also try modifying some other hyperparameters. Unity provides very [good documentation explaining each of them here](https://github.com/Unity-Technologies/ml-agents/blob/main/docs/Training-Configuration-File.md).\n",

|

||||

"\n",

|

||||

"We’re now ready to train our agent 🔥."

|

||||

],

|

||||

"metadata": {

|

||||

"id": "RI-5aPL7BWVk"

|

||||

}

|

||||

},

|

||||

{

|

||||

"cell_type": "markdown",

|

||||

"source": [

|

||||

"### Train the agent\n",

|

||||

"\n",

"The training will take 30 to 45 minutes depending on your machine. Go take a ☕️, you deserve it 🤗."

|

||||

],

|

||||

"metadata": {

|

||||

"id": "s5hr1rvIBdZH"

|

||||

}

|

||||

},

|

||||

{

|

||||

"cell_type": "code",

|

||||

"execution_count": null,

|

||||

"metadata": {

|

||||

"id": "fXi4-IaHBhqD"

|

||||

},

|

||||

"outputs": [],

|

||||

"source": [

|

||||

"!mlagents-learn ./config/ppo/PyramidsRND.yaml --env=./training-envs-executables/linux/Pyramids/Pyramids --run-id=\"Pyramids Training\" --no-graphics"

|

||||

]

|

||||

},

|

||||

{

|

||||

"cell_type": "markdown",

|

||||

"metadata": {

|

||||

"id": "txonKxuSByut"

|

||||

},

|

||||

"source": [

|

||||

"### Push the agent to the 🤗 Hub\n",

|

||||

"\n",

"- Now that we've trained our agent, we’re **ready to push it to the Hub so you can watch it play in your browser 🔥.**"

|

||||

]

|

||||

},

|

||||

{

|

||||

"cell_type": "code",

|

||||

"source": [],

|

||||

"metadata": {

|

||||

"id": "JZ53caJ99sX_"

|

||||

},

|

||||

"execution_count": null,

|

||||

"outputs": []

|

||||

},

|

||||

{

|

||||

"cell_type": "code",

|

||||

"source": [

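"# Fill in the four values before running; the run id matches the training cell above, and the repo name is only an example (replace YOUR_USERNAME)\n",
"!mlagents-push-to-hf --run-id=\"Pyramids Training\" --local-dir=\"./results/Pyramids Training\" --repo-id=\"YOUR_USERNAME/ppo-Pyramids\" --commit-message=\"First Push\""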

|

||||

],

|

||||

"metadata": {

|

||||

"id": "yiEQbv7rB4mU"

|

||||

},

|

||||

"execution_count": null,

|

||||

"outputs": []

|

||||

},

|

||||

{

|

||||

"cell_type": "markdown",

|

||||

"source": [

|

||||

"### Watch your agent playing 👀\n",

|

||||

"\n",

|

||||

"The temporary link for Pyramids demo is: https://singularite.itch.io/pyramids"

|

||||

],

|

||||

"metadata": {

|

||||

"id": "7aZfgxo-CDeQ"

|

||||

}

|

||||

},

|

||||

{

|

||||

"cell_type": "markdown",

|

||||

"source": [

|

||||

"### 🎁 Bonus: Why not train on another environment?\n",

"Now that you know how to train an agent using ML-Agents, **why not try another environment?** \n",
"\n",
"ML-Agents provides 18 different environments, and we’re building some custom ones. The best way to learn is to try things on your own, so have fun.\n",

|

||||

"\n"

|

||||

],

|

||||

"metadata": {

|

||||

"id": "hGG_oq2n0wjB"

|

||||

}

|

||||

},

|

||||

{

|

||||

"cell_type": "markdown",

|

||||

"source": [

|

||||

""

|

||||

],

|

||||

"metadata": {

|

||||

"id": "KSAkJxSr0z6-"

|

||||

}

|

||||

},

|

||||

{

|

||||

"cell_type": "markdown",

|

||||

"source": [

"You can find the full list of the ones currently available on Hugging Face here 👉 https://github.com/huggingface/ml-agents#the-environments\n",

|

||||

"\n",

|

||||

"For the demos to visualize your agent, the temporary link is: https://singularite.itch.io (temporary because we'll also put the demos on Hugging Face Space)\n",

|

||||

"\n",

|

||||

"For now we have integrated: \n",

|

||||

"- [Worm](https://singularite.itch.io/worm) demo where you teach a **worm to crawl**.\n",

|

||||

"- [Walker](https://singularite.itch.io/walker) demo where you teach an agent **to walk towards a goal**.\n",

|

||||

"\n",

|

||||

"If you want new demos to be added, please open an issue: https://github.com/huggingface/deep-rl-class 🤗"

|

||||

],

|

||||

"metadata": {

|

||||

"id": "YiyF4FX-04JB"

|

||||

}

|

||||

},

|

||||

{

|

||||

"cell_type": "markdown",

|

||||

"source": [

|

||||

"That’s all for today. Congrats on finishing this tutorial!\n",

|

||||

"\n",

|

||||

"The best way to learn is to practice and try stuff. Why not try another environment? ML-Agents has 18 different environments, and you can also create your own. Check the documentation and have fun!\n",

|

||||

"\n",

|

||||

"See you on Unit 6 🔥,\n",

|

||||

"\n",

|

||||

"## Keep Learning, Stay awesome 🤗"

|

||||

],

|

||||

"metadata": {

|

||||

"id": "PI6dPWmh064H"

|

||||

}

|

||||

}

|

||||

],

|

||||

"metadata": {

|

||||

"accelerator": "GPU",

|

||||

"colab": {

|

||||

"provenance": [],

|

||||

"private_outputs": true,

|

||||

"include_colab_link": true

|

||||

},

|

||||

"gpuClass": "standard",

|

||||

"kernelspec": {

|

||||

"display_name": "Python 3",

|

||||

"name": "python3"

|

||||

},

|

||||

"language_info": {

|

||||

"name": "python"

|

||||

}

|

||||

},

|

||||

"nbformat": 4,

|

||||

"nbformat_minor": 0

|

||||

}

|

||||

@@ -130,6 +130,24 @@

|

||||

title: Conclusion

|

||||

- local: unit4/additional-readings

|

||||

title: Additional Readings

|

||||

- title: Unit 5. Introduction to Unity ML-Agents

|

||||

sections:

|

||||

- local: unit5/introduction

|

||||

title: Introduction

|

||||

- local: unit5/how-mlagents-works

|

||||

title: How does ML-Agents work?

|

||||

- local: unit5/snowball-target

|

||||

title: The SnowballTarget environment

|

||||

- local: unit5/pyramids

|

||||

title: The Pyramids environment

|

||||

- local: unit5/curiosity

|

||||

title: (Optional) What is curiosity in Deep Reinforcement Learning?

|

||||

- local: unit5/hands-on

|

||||

title: Hands-on

|

||||

- local: unit5/bonus

|

||||

title: Bonus. Learn to create your own environments with Unity and MLAgents

|

||||

- local: unit5/conclusion

|

||||

title: Conclusion

|

||||

- title: What's next? New Units Publishing Schedule

|

||||

sections:

|

||||

- local: communication/publishing-schedule

|

||||

|

||||

19

units/en/unit5/bonus.mdx

Normal file


@@ -0,0 +1,19 @@

|

||||

# Bonus: Learn to create your own environments with Unity and MLAgents

|

||||

|

||||

**You can create your own reinforcement learning environments with Unity and ML-Agents**. Using a game engine such as Unity can be intimidating at first, but here are the steps you can follow to learn smoothly.

|

||||

|

||||

## Step 1: Know how to use Unity

|

||||

|

||||

- The best way to learn Unity is to take the ["Create with Code" course](https://learn.unity.com/course/create-with-code): it's a series of videos for beginners where **you will create 5 small games with Unity**.

|

||||

|

||||

## Step 2: Create the simplest environment with this tutorial

|

||||

|

||||

- Then, when you know how to use Unity, you can create your [first basic RL environment using this tutorial](https://github.com/Unity-Technologies/ml-agents/blob/release_20_docs/docs/Learning-Environment-Create-New.md).

|

||||

|

||||

## Step 3: Iterate and create nice environments

|

||||

|

||||

- Now that you've created a first simple environment, you can iterate toward more complex ones using the [ML-Agents documentation (especially the Designing Agents and Agent parts)](https://github.com/Unity-Technologies/ml-agents/blob/release_20_docs/docs/)

|

||||

- In addition, you can follow this free course ["Create a hummingbird environment"](https://learn.unity.com/course/ml-agents-hummingbirds) by [Adam Kelly](https://twitter.com/aktwelve)

|

||||

|

||||

|

||||

Have fun! And if you create custom environments, don't hesitate to share them in the `#rl-i-made-this` Discord channel.

|

||||

22

units/en/unit5/conclusion.mdx

Normal file


@@ -0,0 +1,22 @@

|

||||

# Conclusion

|

||||

|

||||

Congrats on finishing this unit! You’ve just trained your first ML-Agents agents and shared them on the Hub 🥳.

|

||||

|

||||

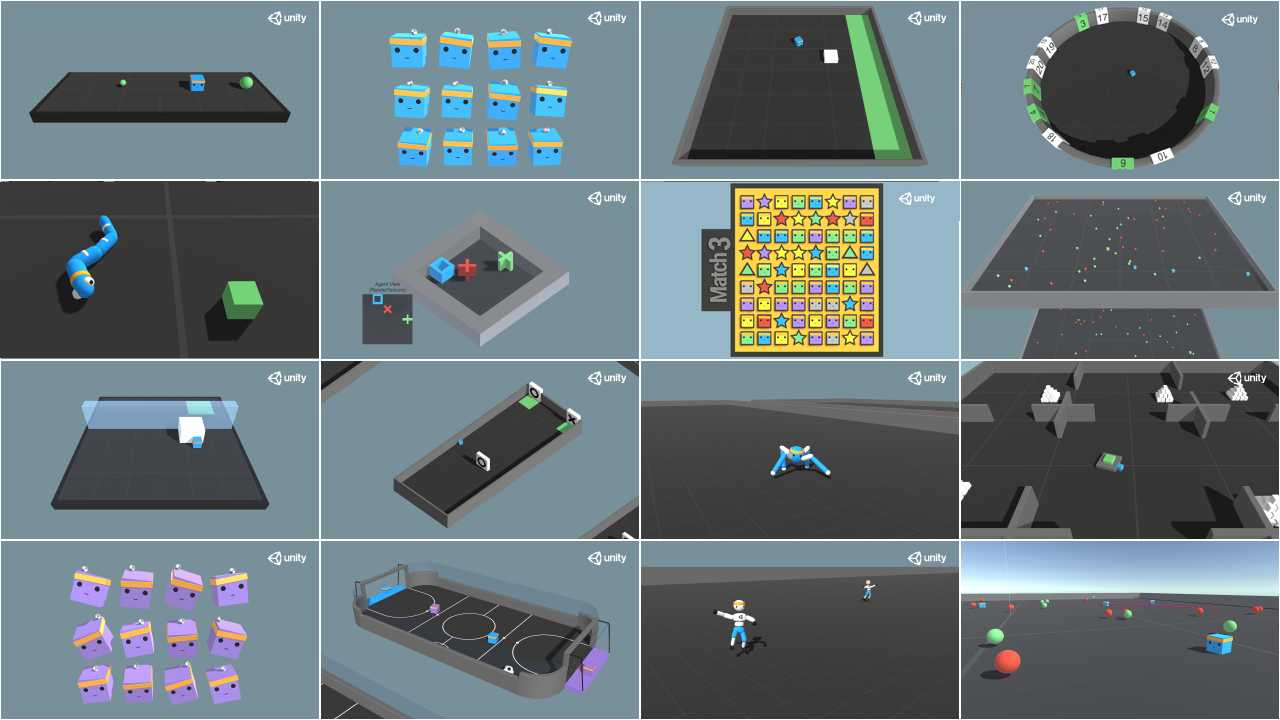

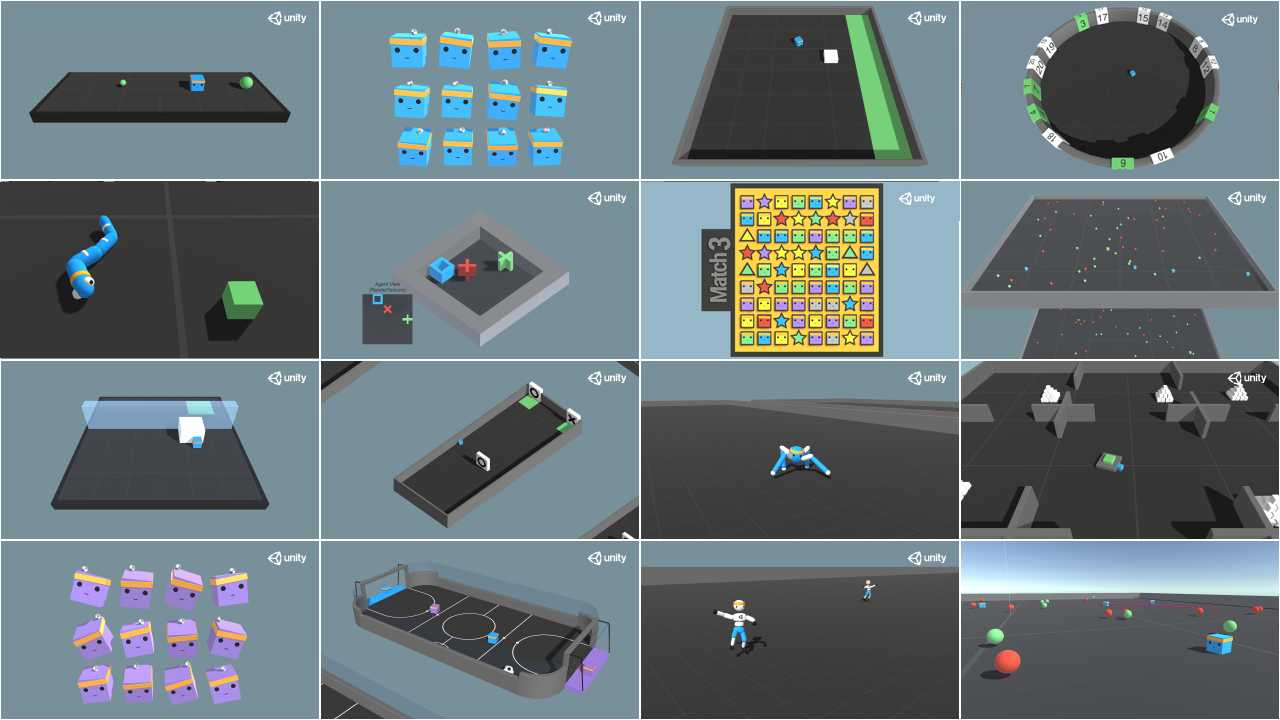

The best way to learn is to **practice and try stuff**. Why not try another environment? [ML-Agents has 18 different environments](https://github.com/Unity-Technologies/ml-agents/blob/develop/docs/Learning-Environment-Examples.md).

|

||||

|

||||

For instance:

|

||||

- [Worm](https://singularite.itch.io/worm), where you teach a worm to crawl.

|

||||

- [Walker](https://singularite.itch.io/walker): teach an agent to walk towards a goal.

|

||||

|

||||

Check the documentation to find out how to train them and to see the list of ML-Agents environments already integrated on the Hub: https://github.com/huggingface/ml-agents#getting-started

|

||||

|

||||

<img src="https://huggingface.co/datasets/huggingface-deep-rl-course/course-images/resolve/main/en/unit5/envs-unity.jpeg" alt="Example envs"/>

|

||||

|

||||

|

||||

In the next unit, we're going to learn about multi-agent training, and you're going to train your first multi-agent teams to compete in Soccer and Snowball fight against your classmates' agents.

|

||||

|

||||

<img src="https://huggingface.co/datasets/huggingface-deep-rl-course/course-images/resolve/main/en/unit7/snowballfight.gif" alt="Snownball fight"/>

|

||||

|

||||

Finally, we would love **to hear what you think of the course and how we can improve it**. If you have feedback, please 👉 [fill out this form](https://forms.gle/BzKXWzLAGZESGNaE9)

|

||||

|

||||

### Keep Learning, stay awesome 🤗

|

||||

50

units/en/unit5/curiosity.mdx

Normal file


@@ -0,0 +1,50 @@

|

||||

# (Optional) What is curiosity in Deep Reinforcement Learning?

|

||||

|

||||

This is an (optional) introduction to curiosity. If you want to learn more, you can read my two articles where I dive into the mathematical details:

|

||||

|

||||

- [Curiosity-Driven Learning through Next State Prediction](https://medium.com/data-from-the-trenches/curiosity-driven-learning-through-next-state-prediction-f7f4e2f592fa)

|

||||

- [Random Network Distillation: a new take on Curiosity-Driven Learning](https://medium.com/data-from-the-trenches/curiosity-driven-learning-through-random-network-distillation-488ffd8e5938)

|

||||

|

||||

## Two Major Problems in Modern RL

|

||||

|

||||

To understand what curiosity is, we first need to understand the two major problems with RL:

|

||||

|

||||

First, the *sparse rewards problem:* that is, **most rewards do not contain information, and hence are set to zero**.

|

||||

|

||||

Remember that RL is based on the *reward hypothesis*, which is the idea that each goal can be described as the maximization of the rewards. Therefore, rewards act as feedback for RL agents; **if they don’t receive any, their knowledge of which action is appropriate (or not) cannot change**.

|

||||

|

||||

|

||||

<figure>

|

||||

<img src="https://huggingface.co/datasets/huggingface-deep-rl-course/course-images/resolve/main/en/unit5/curiosity1.png" alt="Curiosity"/>

|

||||

<figcaption>Source: Thanks to the reward, our agent knows that this action at that state was good</figcaption>

|

||||

</figure>

|

||||

|

||||

|

||||

For instance, in [Vizdoom](https://vizdoom.cs.put.edu.pl/), a set of environments based on the game Doom, in the “DoomMyWayHome” scenario your agent is only rewarded **if it finds the vest**.

|

||||

However, the vest is far away from your starting point, so most of your rewards will be zero. Therefore, if our agent does not receive useful feedback (dense rewards), it will take much longer to learn an optimal policy and **it can spend time turning around without finding the goal**.
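To make the sparse rewards problem concrete, here is a minimal sketch. It is not the Vizdoom environment: the corridor, the goal position, the step budget, and the function names are all assumptions chosen for illustration. A random agent walks along a corridor and is rewarded only when it reaches the far end, so almost every step returns a reward of zero.

```python
import random


def run_episode(rng, goal=30, max_steps=40):
    """Random walk on a corridor; the reward is 1.0 only on reaching the goal."""
    position, rewards = 0, []
    for _ in range(max_steps):
        # Step left or right at random, never going below the start position.
        position = max(0, position + rng.choice((-1, 1)))
        reached = position == goal
        rewards.append(1.0 if reached else 0.0)
        if reached:
            break
    return rewards


def zero_reward_fraction(episodes=200, seed=0):
    """Fraction of all collected steps whose reward is exactly zero."""
    rng = random.Random(seed)
    all_rewards = [r for _ in range(episodes) for r in run_episode(rng)]
    return all_rewards.count(0.0) / len(all_rewards)


print(f"zero-reward steps: {zero_reward_fraction():.1%}")
```

Because an episode contains at most one non-zero reward, the agent gets almost no signal telling it which of its steps were useful.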

|

||||

|

||||

<img src="https://huggingface.co/datasets/huggingface-deep-rl-course/course-images/resolve/main/en/unit5/curiosity2.png" alt="Curiosity"/>

|

||||

|

||||

The second big problem is that **the extrinsic reward function is handmade; that is, in each environment, a human has to implement a reward function**. But how can we scale that to big and complex environments?

|

||||

|

||||

## So what is curiosity?

|

||||

|

||||

A solution to these problems is **to develop a reward function that is intrinsic to the agent, i.e., generated by the agent itself**. The agent will act as a self-learner since it will be the student, but also its own feedback master.

|

||||

|

||||

**This intrinsic reward mechanism is known as curiosity** because this reward pushes the agent to explore states that are novel or unfamiliar. To achieve that, our agent will receive a high reward when exploring new trajectories.

|

||||

|

||||

This reward is in fact inspired by how humans act: **we naturally have an intrinsic desire to explore environments and discover new things**.

|

||||

|

||||

There are different ways to calculate this intrinsic reward. The classical approach (curiosity through next-state prediction) is to calculate curiosity **as the error of our agent in predicting the next state, given the current state and action taken**.

|

||||

|

||||

<img src="https://huggingface.co/datasets/huggingface-deep-rl-course/course-images/resolve/main/en/unit5/curiosity3.png" alt="Curiosity"/>

|

||||

|

||||

The idea of curiosity is to **encourage our agent to perform actions that reduce the uncertainty in the agent’s ability to predict the consequences of its own actions** (uncertainty will be higher in areas where the agent has spent less time, or in areas with complex dynamics).

|

||||

|

||||

If the agent spends a lot of time in these states, it will be good at predicting the next state (low curiosity). On the other hand, if it’s a new, unexplored state, it will be bad at predicting the next state (high curiosity).

|

||||

|

||||

<img src="https://huggingface.co/datasets/huggingface-deep-rl-course/course-images/resolve/main/en/unit5/curiosity4.png" alt="Curiosity"/>

|

||||

|

||||

Using curiosity will push our agent to favor transitions with high prediction error (which will be higher in areas where the agent has spent less time, or in areas with complex dynamics) and **consequently better explore our environment**.
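The idea of curiosity as a prediction error can be sketched in a few lines. This is only an illustration under strong assumptions: states and actions are plain numbers, and the `ForwardModel` class (a hypothetical name) is a lookup table rather than the neural network a real implementation would use. The intrinsic reward is the squared error between the predicted and the observed next state.

```python
class ForwardModel:
    """Tabular stand-in for a learned next-state predictor."""

    def __init__(self, learning_rate=0.5):
        self.learning_rate = learning_rate
        self.predictions = {}  # (state, action) -> predicted next state

    def curiosity(self, state, action, next_state):
        """Intrinsic reward: squared error of the next-state prediction."""
        predicted = self.predictions.get((state, action), 0.0)
        return (next_state - predicted) ** 2

    def update(self, state, action, next_state):
        """Move the stored prediction toward the observed next state."""
        key = (state, action)
        predicted = self.predictions.get(key, 0.0)
        self.predictions[key] = predicted + self.learning_rate * (next_state - predicted)


model = ForwardModel()
familiar = (2.0, 1.0, 3.0)  # (state, action, next_state), visited many times
novel = (7.0, 1.0, 8.0)     # never visited

for _ in range(10):
    model.update(*familiar)

print("familiar transition:", model.curiosity(*familiar))
print("novel transition:   ", model.curiosity(*novel))
```

The familiar transition ends up with a curiosity close to zero, while the novel one keeps a large prediction error, which is what drives the agent toward unexplored states.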

|

||||

|

||||

There are also **other curiosity calculation methods**. ML-Agents uses a more advanced one called curiosity through random network distillation. This is out of the scope of this tutorial, but if you’re interested, [I wrote an article explaining it in detail](https://medium.com/data-from-the-trenches/curiosity-driven-learning-through-random-network-distillation-488ffd8e5938).

|

||||

394

units/en/unit5/hands-on.mdx

Normal file


@@ -0,0 +1,394 @@

|

||||

# Hands-on

|

||||

|

||||

<CourseFloatingBanner classNames="absolute z-10 right-0 top-0"

|

||||

notebooks={[

|

||||

{label: "Google Colab", value: "https://colab.research.google.com/github/huggingface/deep-rl-class/blob/main/notebooks/unit5/unit5.ipynb"}

|

||||

]}

|

||||

askForHelpUrl="http://hf.co/join/discord" />

|

||||

|

||||

|

||||

Now that we've learned what ML-Agents is and how it works, and studied the two environments we're going to use, we're ready to train our agents.

|

||||

|

||||

- The first one will learn to **shoot snowballs onto spawning targets**.

|

||||

- The second needs to **press a button to spawn a pyramid, then navigate to the pyramid, knock it over, and move to the gold brick at the top**. To do that, it will need to explore its environment, and we will use a technique called curiosity.

|

||||

|

||||

<img src="https://huggingface.co/datasets/huggingface-deep-rl-course/course-images/resolve/main/en/unit7/envs.png" alt="Environments" />

|

||||

|

||||

After that, you'll be able to watch your agents playing directly in your browser.

|

||||

|

||||

The ML-Agents integration on the Hub **is still experimental**; some features will be added in the future. But for now, to validate this hands-on for the certification process, you just need to push your trained models to the Hub.

|

||||

There are no results to attain to validate this one. But if you want to get nice results, you can try to reach:

|

||||

|

||||

- For [Pyramids](https://singularite.itch.io/pyramids): Mean Reward = 1.75

|

||||

- For [SnowballTarget](https://singularite.itch.io/snowballtarget): Mean Reward = 15 or 30 targets hit in an episode.

|

||||

|

||||

For more information about the certification process, check this section 👉 https://huggingface.co/deep-rl-course/en/unit0/introduction#certification-process

|

||||

|

||||

**To start the hands-on, click on the Open In Colab button** 👇 :

|

||||

|

||||

[![Open In Colab](https://colab.research.google.com/assets/colab-badge.svg)](https://colab.research.google.com/github/huggingface/deep-rl-class/blob/master/notebooks/unit5/unit5.ipynb)

|

||||

|

||||

# Unit 5: An Introduction to ML-Agents

|

||||

|

||||

|

||||

|

||||

<img src="https://huggingface.co/datasets/huggingface-deep-rl-course/course-images/resolve/main/en/unit7/thumbnail.png" alt="Thumbnail"/>

|

||||

|

||||

In this notebook, you'll learn about ML-Agents and train two agents.

|

||||

|

||||

- The first one will learn to **shoot snowballs onto spawning targets**.

|

||||

- The second needs to press a button to spawn a pyramid, then navigate to the pyramid, knock it over, **and move to the gold brick at the top**. To do that, it will need to explore its environment, and we will use a technique called curiosity.

|

||||

|

||||

After that, you'll be able **to watch your agents playing directly in your browser**.

|

||||

|

||||

For more information about the certification process, check this section 👉 https://huggingface.co/deep-rl-course/en/unit0/introduction#certification-process

|

||||

|

||||

⬇️ Here is an example of what **you will achieve at the end of this unit.** ⬇️

|

||||

|

||||

|

||||

<img src="https://huggingface.co/datasets/huggingface-deep-rl-course/course-images/resolve/main/en/unit7/pyramids.gif" alt="Pyramids"/>

|

||||

|

||||

<img src="https://huggingface.co/datasets/huggingface-deep-rl-course/course-images/resolve/main/en/unit7/snowballtarget.gif" alt="SnowballTarget"/>

|

||||

|

||||

### 🎮 Environments:

|

||||

|

||||

- [Pyramids](https://github.com/Unity-Technologies/ml-agents/blob/main/docs/Learning-Environment-Examples.md#pyramids)

|

||||

- SnowballTarget

|

||||

|

||||

### 📚 RL-Library:

|

||||

|

||||

- [ML-Agents (HuggingFace Experimental Version)](https://github.com/huggingface/ml-agents)

|

||||

|

||||

⚠ We're going to use an experimental version of ML-Agents where you can push Unity ML-Agents models to the Hub and load them from the Hub, so **you need to install the same version**.

|

||||

|

||||

We're constantly trying to improve our tutorials, so **if you find some issues in this notebook**, please [open an issue on the GitHub Repo](https://github.com/huggingface/deep-rl-class/issues).

|

||||

|

||||

## Objectives of this notebook 🏆

|

||||

|

||||

At the end of the notebook, you will:

|

||||

|

||||

- Understand how **ML-Agents**, the environment library, works.

|

||||

- Be able to **train agents in Unity Environments**.

|

||||

|

||||

## Prerequisites 🏗️

|

||||

Before diving into the notebook, you need to:

|

||||

|

||||

🔲 📚 **Study [what is ML-Agents and how it works by reading Unit 5](https://huggingface.co/deep-rl-course/unit5/introduction)** 🤗

|

||||

|

||||

# Let's train our agents 🚀

|

||||

|

||||

The ML-Agents integration on the Hub is **still experimental**; some features will be added in the future.

|

||||

|

||||

But for now, **to validate this hands-on for the certification process, you just need to push your trained models to the Hub**. There are no results to attain to validate this one. But if you want to get nice results, you can try to attain:

|

||||

|

||||

- For `Pyramids` : Mean Reward = 1.75

|

||||

- For `SnowballTarget` : Mean Reward = 15 or 30 targets hit in an episode.

|

||||

|

||||

|

||||

## Set the GPU 💪

|

||||

|

||||

- To **accelerate the agent's training, we'll use a GPU**. To do that, go to `Runtime > Change Runtime type`

|

||||

|

||||

<img src="https://huggingface.co/datasets/huggingface-deep-rl-course/course-images/resolve/main/en/notebooks/gpu-step1.jpg" alt="GPU Step 1">

|

||||

|

||||

- `Hardware Accelerator > GPU`

|

||||

|

||||

<img src="https://huggingface.co/datasets/huggingface-deep-rl-course/course-images/resolve/main/en/notebooks/gpu-step2.jpg" alt="GPU Step 2">

|

||||

|

||||

## Clone the repository and install the dependencies 🔽

|

||||

- We need to clone the repository, which **contains the experimental version of the library that allows you to push your trained agent to the Hub.**

|

||||

|

||||

```python

|

||||

%%capture

|

||||

# Clone the repository

|

||||

!git clone --depth 1 https://github.com/huggingface/ml-agents/

|

||||

```

|

||||

|

||||

```python

|

||||

%%capture

|

||||

# Go inside the repository and install the package

|

||||

%cd ml-agents

|

||||

!pip3 install -e ./ml-agents-envs

|

||||

!pip3 install -e ./ml-agents

|

||||

```

|

||||

|

||||

## SnowballTarget ⛄

|

||||

|

||||

If you need a refresher on how this environment works, check this section 👉

|

||||

https://huggingface.co/deep-rl-course/unit5/snowball-target

|

||||

|

||||

### Download and move the environment zip file into `./training-envs-executables/linux/`

|

||||

- Our environment executable is in a zip file.

|

||||

- We need to download it and place it in `./training-envs-executables/linux/`

|

||||

- We use a Linux executable because we use Colab, and the OS of Colab machines is Ubuntu (Linux).

|

||||

|

||||

```python

|

||||

# Here, we create training-envs-executables and linux

|

||||

!mkdir ./training-envs-executables

|

||||

!mkdir ./training-envs-executables/linux

|

||||

```

|

||||

|

||||

Download the file SnowballTarget.zip from https://drive.google.com/file/d/1YHHLjyj6gaZ3Gemx1hQgqrPgSS2ZhmB5 using `wget`.

|

||||

|

||||

Check out the full solution to download large files from GDrive [here](https://bcrf.biochem.wisc.edu/2021/02/05/download-google-drive-files-using-wget/)

|

||||

|

||||

```python

|

||||

!wget --load-cookies /tmp/cookies.txt "https://docs.google.com/uc?export=download&confirm=$(wget --quiet --save-cookies /tmp/cookies.txt --keep-session-cookies --no-check-certificate 'https://docs.google.com/uc?export=download&id=1YHHLjyj6gaZ3Gemx1hQgqrPgSS2ZhmB5' -O- | sed -rn 's/.*confirm=([0-9A-Za-z_]+).*/\1\n/p')&id=1YHHLjyj6gaZ3Gemx1hQgqrPgSS2ZhmB5" -O ./training-envs-executables/linux/SnowballTarget.zip && rm -rf /tmp/cookies.txt

|

||||

```

|

||||

|

||||

We unzip the executable.zip file

|

||||

|

||||

```python

|

||||

%%capture

|

||||

!unzip -d ./training-envs-executables/linux/ ./training-envs-executables/linux/SnowballTarget.zip

|

||||

```

|

||||

|

||||

Make sure your file is accessible

|

||||

|

||||

```python

|

||||

!chmod -R 755 ./training-envs-executables/linux/SnowballTarget

|

||||

```
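Before training, it can help to confirm that the executable really is in place and executable. The sketch below is one possible check, not part of ML-Agents: `find_executable` is a hypothetical helper, and trying the `.x86_64` and `.x86` suffixes is an assumption about how the Linux build inside the zip is named.

```python
import os
from pathlib import Path


def find_executable(base):
    """Return the first existing, executable candidate for `base`, else None."""
    # Assumption: the Linux build may carry a .x86_64 or .x86 suffix.
    for suffix in ("", ".x86_64", ".x86"):
        candidate = Path(str(base) + suffix)
        if candidate.is_file() and os.access(candidate, os.X_OK):
            return candidate
    return None


env_path = "./training-envs-executables/linux/SnowballTarget/SnowballTarget"
print("environment executable:", find_executable(env_path))
```

If this prints `None`, the zip was probably not downloaded or extracted where expected, or the `chmod` step did not run.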

|

||||

|

||||

### Define the SnowballTarget config file

|

||||

- In ML-Agents, you define the **training hyperparameters in config.yaml files.**

|

||||

|

||||

There are multiple hyperparameters. To understand them better, you should read the explanation of each one in [the documentation](https://github.com/Unity-Technologies/ml-agents/blob/release_20_docs/docs/Training-Configuration-File.md)

|

||||

|

||||

|

||||

So you need to create a `SnowballTarget.yaml` config file in `./config/ppo/` (inside the cloned `ml-agents` folder).

|

||||

|

||||

We'll give you here a first version of this config (to copy and paste into your `SnowballTarget.yaml` file), **but you should modify it**.

|

||||

|

||||

```
behaviors:
  SnowballTarget:
    trainer_type: ppo
    summary_freq: 10000
    keep_checkpoints: 10
    checkpoint_interval: 50000
    max_steps: 200000
    time_horizon: 64
    threaded: true
    hyperparameters:
      learning_rate: 0.0003
      learning_rate_schedule: linear
      batch_size: 128
      buffer_size: 2048
      beta: 0.005
      epsilon: 0.2
      lambd: 0.95
      num_epoch: 3
    network_settings:
      normalize: false
      hidden_units: 256
      num_layers: 2
      vis_encode_type: simple
    reward_signals:
      extrinsic:
        gamma: 0.99
        strength: 1.0
```

|

||||

|

||||

<img src="https://huggingface.co/datasets/huggingface-deep-rl-course/course-images/resolve/main/en/unit7/snowballfight_config1.png" alt="Config SnowballTarget"/>

|

||||

<img src="https://huggingface.co/datasets/huggingface-deep-rl-course/course-images/resolve/main/en/unit7/snowballfight_config2.png" alt="Config SnowballTarget"/>

|

||||

|

||||

As an experiment, you should also try to modify some other hyperparameters. Unity provides very [good documentation explaining each of them here](https://github.com/Unity-Technologies/ml-agents/blob/main/docs/Training-Configuration-File.md).
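To get a feel for how these values interact before changing them, here is a small back-of-the-envelope sketch. The numbers are copied from the config above; the formulas are a simplified reading of how PPO uses them (the real trainer may differ in details), and `linear_learning_rate` is a hypothetical helper that only approximates the `linear` schedule.

```python
config = {
    "max_steps": 200_000,
    "checkpoint_interval": 50_000,
    "keep_checkpoints": 10,
    "learning_rate": 0.0003,
    "batch_size": 128,
    "buffer_size": 2048,
    "num_epoch": 3,
}

# Minibatches in one pass over the buffer, and gradient steps per policy update.
minibatches = config["buffer_size"] // config["batch_size"]
gradient_steps = minibatches * config["num_epoch"]

# Checkpoints written over the whole run, capped by keep_checkpoints.
checkpoints = min(
    config["max_steps"] // config["checkpoint_interval"],
    config["keep_checkpoints"],
)


def linear_learning_rate(step, initial=config["learning_rate"],
                         max_steps=config["max_steps"]):
    """Learning rate decayed linearly toward zero at max_steps (simplified)."""
    remaining = max(0.0, 1.0 - step / max_steps)
    return initial * remaining


print("minibatches per epoch:", minibatches)
print("gradient steps per update:", gradient_steps)
print("checkpoints saved:", checkpoints)
print("learning rate halfway through:", linear_learning_rate(100_000))
```

Doubling `batch_size`, for example, halves the number of gradient steps per update, which is the kind of trade-off worth checking when you tune the config.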

|

||||

|

||||

Now that you've created the config file and understand what most hyperparameters do, we're ready to train our agent 🔥.

|

||||

|

||||

### Train the agent

|

||||

|

||||

To train our agent, we just need to **launch mlagents-learn and select the executable containing the environment.**

|

||||

|

||||

We define four parameters:

|

||||

|

||||

1. `mlagents-learn <config>`: the path where the hyperparameter config file is.

|

||||

2. `--env`: where the environment executable is.

|

||||

3. `--run-id`: the name you want to give to your training run.

|

||||

4. `--no-graphics`: to not launch the visualization during the training.

|

||||

|

||||

<img src="https://huggingface.co/datasets/huggingface-deep-rl-course/course-images/resolve/main/en/unit7/mlagentslearn.png" alt="MlAgents learn"/>

|

||||

|

||||

Train the model and use the `--resume` flag to continue training in case of interruption.

|

||||

|

||||

> If you use `--resume`, it will fail the first time; try running the block again to bypass the error.
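The parameters above can also be assembled programmatically, which is handy if you want to launch several runs with different ids. This is a minimal sketch: `build_train_command` is a hypothetical helper, and it only uses the flags already described in this section.

```python
import shlex


def build_train_command(config_path, env_path, run_id, resume=False):
    """Assemble the mlagents-learn call as an argument list."""
    command = [
        "mlagents-learn", config_path,
        f"--env={env_path}",
        f"--run-id={run_id}",
        "--no-graphics",
    ]
    if resume:
        # Continue an interrupted run that used the same run id.
        command.append("--resume")
    return command


command = build_train_command(
    "./config/ppo/SnowballTarget.yaml",
    "./training-envs-executables/linux/SnowballTarget/SnowballTarget",
    "SnowballTarget1",
    resume=True,
)
print(shlex.join(command))
```

The printed line can be pasted after a `!` in a notebook cell, or the list can be passed to `subprocess.run(command, check=True)` outside of a notebook.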

|

||||

|

||||

|

||||

|

||||

The training will take 10 to 35 min depending on your config. Go take a ☕️, you deserve it 🤗.

|

||||

|

||||

```python

|

||||

!mlagents-learn ./config/ppo/SnowballTarget.yaml --env=./training-envs-executables/linux/SnowballTarget/SnowballTarget --run-id="SnowballTarget1" --no-graphics

|

||||

```

|

||||

|

||||

### Push the agent to the 🤗 Hub

|

||||

|

||||

- Now that we've trained our agent, we’re **ready to push it to the Hub and visualize it playing in your browser 🔥.**

|

||||

|

||||

To be able to share your model with the community there are three more steps to follow:

|

||||

|

||||

1️⃣ (If it's not already done) create an account on HF ➡ https://huggingface.co/join

|

||||

|

||||

2️⃣ Sign in, then store your authentication token from the Hugging Face website.

|

||||

- Create a new token (https://huggingface.co/settings/tokens) **with write role**

|

||||

|

||||

<img src="https://huggingface.co/datasets/huggingface-deep-rl-course/course-images/resolve/main/en/notebooks/create-token.jpg" alt="Create HF Token">

|

||||

|

||||

- Copy the token

|

||||

- Run the cell below and paste the token

|

||||

|

||||

```python

|

||||

from huggingface_hub import notebook_login

|

||||

|

||||

notebook_login()

|

||||

```

|

||||

|

||||

If you don't want to use a Google Colab or a Jupyter Notebook, you need to use this command instead: `huggingface-cli login`

|

||||

|

||||

Then, we simply need to run `mlagents-push-to-hf`.

|

||||

|

||||

And we define 4 parameters:

|

||||

|

||||

1. `--run-id`: the name of the training run id.

|

||||

2. `--local-dir`: where the agent was saved. It’s `results/<run_id name>`, so in my case `results/First Training`.

|

||||

3. `--repo-id`: the name of the Hugging Face repo you want to create or update. It’s always `<your huggingface username>/<the repo name>`.

|

||||

If the repo does not exist **it will be created automatically**

|

||||

4. `--commit-message`: since HF repos are git repositories, you need to define a commit message.

|

||||

|

||||

<img src="https://huggingface.co/datasets/huggingface-deep-rl-course/course-images/resolve/main/en/unit7/mlagentspushtohub.png" alt="Push to Hub"/>

|

||||

|

||||

For instance:

|

||||

|

||||

`!mlagents-push-to-hf --run-id="SnowballTarget1" --local-dir="./results/SnowballTarget1" --repo-id="ThomasSimonini/ppo-SnowballTarget" --commit-message="First Push"`
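As a sketch of how the four parameters fit together, the helper below builds the same command from a run id and a repo id. `build_push_command` and `REPO_ID_PATTERN` are hypothetical names, and the pattern is only a rough sanity check for the `<username>/<repo name>` shape, not the Hub's actual naming rules.

```python
import re
import shlex

# Rough shape check only: "<username>/<repo name>".
REPO_ID_PATTERN = re.compile(r"^[\w.-]+/[\w.-]+$")


def build_push_command(run_id, repo_id, commit_message, results_dir="./results"):
    """Assemble the mlagents-push-to-hf call as an argument list."""
    if not REPO_ID_PATTERN.match(repo_id):
        raise ValueError(
            f"repo id should look like <username>/<repo name>, got {repo_id!r}"
        )
    return [
        "mlagents-push-to-hf",
        f"--run-id={run_id}",
        f"--local-dir={results_dir}/{run_id}",
        f"--repo-id={repo_id}",
        f"--commit-message={commit_message}",
    ]


push = build_push_command(
    "SnowballTarget1", "ThomasSimonini/ppo-SnowballTarget", "First Push"
)
print(shlex.join(push))
```

Deriving `--local-dir` from the run id keeps the two values from drifting apart, which is an easy mistake to make when filling in the command by hand.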

|

||||

|

||||

```python

|

||||

!mlagents-push-to-hf --run-id= # Add your run id --local-dir= # Your local dir --repo-id= # Your repo id --commit-message= # Your commit message

|

||||

```

|

||||

|

||||

If everything worked, you should see this at the end of the process (but with a different URL 😆):

|

||||

|

||||

|

||||

|

||||

```

|

||||

Your model is pushed to the hub. You can view your model here: https://huggingface.co/ThomasSimonini/ppo-SnowballTarget

|

||||

```

|

||||

|

||||

It’s the link to your model. It contains a model card that explains how to use it, your Tensorboard and your config file. **What’s awesome is that it’s a git repository, which means you can have different commits, update your repository with a new push, etc.**

|

||||

|

||||

But now comes the best part: **being able to visualize your agent online 👀.**

|

||||

|

||||

### Watch your agent playing 👀

|

||||

|

||||

For this step it’s simple:

|

||||

|

||||

1. Remember your repo-id

|

||||

|

||||

2. Go here: https://singularite.itch.io/snowballtarget

|

||||

|

||||

3. Launch the game and put it in full screen by clicking on the bottom right button

|

||||

|

||||

<img src="https://huggingface.co/datasets/huggingface-deep-rl-course/course-images/resolve/main/en/unit7/snowballtarget_load.png" alt="Snowballtarget load"/>

|

||||

|

||||

1. In step 1, choose your model repository which is the model id (in my case ThomasSimonini/ppo-SnowballTarget).

|

||||

|

||||

2. In step 2, **choose what model you want to replay**:

|

||||

- I have multiple ones, since we saved a model every 50000 timesteps (the `checkpoint_interval` in the config).

|

||||

- But if I want the most recent one, I choose `SnowballTarget.onnx`

|

||||

|

||||

👉 What’s nice **is to try different model checkpoints to see the improvement of the agent.**

|

||||

|

||||

And don't hesitate to share the best score your agent gets on Discord in the #rl-i-made-this channel 🔥

|

||||