Mirror of https://github.com/babysor/Realtime-Voice-Clone-Chinese.git (synced 2026-02-03 18:43:41 +08:00)

Compare commits: babysor-pa ... refactor (5 commits)

| Author | SHA1 | Date |
|---|---|---|
| | f9ee4d7890 | |
| | 8d0d22bc00 | |
| | 87f4859874 | |
| | c3590bffb2 | |
| | efbdb21b70 | |

@@ -1,4 +0,0 @@
-*/saved_models
-!vocoder/saved_models/pretrained/**
-!encoder/saved_models/pretrained.pt
-/datasets

.gitignore (vendored): 13 lines changed

@@ -14,13 +14,8 @@
 *.bcf
 *.toc
 *.sh
-data/ckpt/*/*
-!data/ckpt/encoder/pretrained.pt
-!data/ckpt/vocoder/pretrained/
+*/saved_models
+!vocoder/saved_models/pretrained/**
+!encoder/saved_models/pretrained.pt
 wavs
-log
-!/docker-entrypoint.sh
-!/datasets_download/*.sh
-/datasets
-monotonic_align/build
-monotonic_align/monotonic_align
+log

.vscode/launch.json (vendored): 22 lines changed

@@ -15,8 +15,7 @@
 "name": "Python: Vocoder Preprocess",
 "type": "python",
 "request": "launch",
-"program": "control\\cli\\vocoder_preprocess.py",
-"cwd": "${workspaceFolder}",
+"program": "vocoder_preprocess.py",
 "console": "integratedTerminal",
 "args": ["..\\audiodata"]
 },

@@ -24,8 +23,7 @@
 "name": "Python: Vocoder Train",
 "type": "python",
 "request": "launch",
-"program": "control\\cli\\vocoder_train.py",
-"cwd": "${workspaceFolder}",
+"program": "vocoder_train.py",
 "console": "integratedTerminal",
 "args": ["dev", "..\\audiodata"]
 },

@@ -34,7 +32,6 @@
 "type": "python",
 "request": "launch",
 "program": "demo_toolbox.py",
-"cwd": "${workspaceFolder}",
 "console": "integratedTerminal",
 "args": ["-d","..\\audiodata"]
 },

@@ -43,7 +40,6 @@
 "type": "python",
 "request": "launch",
 "program": "demo_toolbox.py",
-"cwd": "${workspaceFolder}",
 "console": "integratedTerminal",
 "args": ["-d","..\\audiodata","-vc"]
 },

@@ -51,9 +47,9 @@
 "name": "Python: Synth Train",
 "type": "python",
 "request": "launch",
-"program": "train.py",
+"program": "synthesizer_train.py",
 "console": "integratedTerminal",
-"args": ["--type", "vits"]
+"args": ["my_run", "..\\"]
 },
 {
 "name": "Python: PPG Convert",

@@ -64,14 +60,6 @@
 "args": ["-c", ".\\ppg2mel\\saved_models\\seq2seq_mol_ppg2mel_vctk_libri_oneshotvc_r4_normMel_v2.yaml",
 "-m", ".\\ppg2mel\\saved_models\\best_loss_step_304000.pth", "--wav_dir", ".\\wavs\\input", "--ref_wav_path", ".\\wavs\\pkq.mp3", "-o", ".\\wavs\\output\\"
 ]
-},
-{
-"name": "Python: Vits Train",
-"type": "python",
-"request": "launch",
-"program": "train.py",
-"console": "integratedTerminal",
-"args": ["--type", "vits"]
-},
+}
 ]
 }

Dockerfile: 17 lines changed

@@ -1,17 +0,0 @@
-FROM pytorch/pytorch:latest
-
-RUN apt-get update && apt-get install -y build-essential ffmpeg parallel aria2 && apt-get clean
-
-COPY ./requirements.txt /workspace/requirements.txt
-
-RUN pip install -r requirements.txt && pip install webrtcvad-wheels
-
-COPY . /workspace
-
-VOLUME [ "/datasets", "/workspace/synthesizer/saved_models/" ]
-
-ENV DATASET_MIRROR=default FORCE_RETRAIN=false TRAIN_DATASETS=aidatatang_200zh\ magicdata\ aishell3\ data_aishell TRAIN_SKIP_EXISTING=true
-
-EXPOSE 8080
-
-ENTRYPOINT [ "/workspace/docker-entrypoint.sh" ]

README-CN.md: 128 lines changed

@@ -18,10 +18,17 @@
 
 🌍 **Webserver Ready** Serve your trained results for remote calls
 
+### Work in progress
+* Major upgrade and merge of the GUI/client
+[X] Initialize the framework `./mkgui` (based on streamlit + fastapi) and the [technical design](https://vaj2fgg8yn.feishu.cn/docs/doccnvotLWylBub8VJIjKzoEaee)
+[X] Add a demo page for Voice Cloning and Conversion
+[X] Add preprocessing and training pages for Voice Conversion
+[ ] Add preprocessing and training pages for the other models
+* Upgrade the model backend based on ESPnet2
+
 
 ## Getting started
 ### 1. Installation requirements
-#### 1.1 General setup
 > Follow the original repository to check that your environment is fully prepared.
 **Python 3.7 or later** is required to run the toolbox (demo_toolbox.py).
 

@@ -29,70 +36,8 @@
 > If installing with pip fails with `ERROR: Could not find a version that satisfies the requirement torch==1.9.0+cu102 (from versions: 0.1.2, 0.1.2.post1, 0.1.2.post2)`, the Python version is probably too old; installation succeeds with 3.9
 * Install [ffmpeg](https://ffmpeg.org/download.html#get-packages).
 * Run `pip install -r requirements.txt` to install the remaining required packages.
-> The recommended environment here is `Repo Tag 0.0.1` `Pytorch1.9.0 with Torchvision0.10.0 and cudatoolkit10.2` `requirements.txt` `webrtcvad-wheels`, because `requirements.txt` was exported a few months ago and does not fit newer versions
 * Install webrtcvad: `pip install webrtcvad-wheels`.
 
-or
-- Install the dependencies with `conda` or `mamba`
-
-```conda env create -n env_name -f env.yml```
-
-```mamba env create -n env_name -f env.yml```
-
-This creates a new environment with the required dependencies installed. Switch to it with `conda activate env_name` and you are done.
-> env.yml only contains the dependencies needed at runtime and does not yet include monotonic-align. To install the GPU version of pytorch, see the official website tutorial.
-
-#### 1.2 Setup on an M1 Mac (Inference Time)
-> The following environment is built for x86-64 and uses the stock `demo_toolbox.py`; it is a workaround for getting started quickly without changing the code.
->
-> To train on the M1 chip, note that the `PyQt5` package that `demo_toolbox.py` depends on does not support M1, so either modify the code as needed or try `web.py`.
-
-* Install `PyQt5`, see [this link](https://stackoverflow.com/a/68038451/20455983)
-* Open Terminal with Rosetta, see [this link](https://dev.to/courier/tips-and-tricks-to-setup-your-apple-m1-for-development-547g)
-* Create the project virtual environment with the system Python
-```
-/usr/bin/python3 -m venv /PathToMockingBird/venv
-source /PathToMockingBird/venv/bin/activate
-```
-* Upgrade pip and install `PyQt5`
-```
-pip install --upgrade pip
-pip install pyqt5
-```
-* Install `pyworld` and `ctc-segmentation`
-> Neither package has a wheel available for a plain `pip install`, and building from the C sources fails because `Python.h` cannot be found
-* Install `pyworld`
-* `brew install python` Installing python through brew also installs `Python.h`
-* `export CPLUS_INCLUDE_PATH=/opt/homebrew/Frameworks/Python.framework/Headers` On M1, brew installs `Python.h` to this path. Add the path to the environment variables
-* `pip install pyworld`
-
-* Install `ctc-segmentation`
-> Because the method above did not work, clone the source from [github](https://github.com/lumaku/ctc-segmentation) and build it manually
-* `git clone https://github.com/lumaku/ctc-segmentation.git` Clone to any location
-* `cd ctc-segmentation`
-* `source /PathToMockingBird/venv/bin/activate` Activate the MockingBird virtual environment if it is not active yet
-* `cythonize -3 ctc_segmentation/ctc_segmentation_dyn.pyx`
-* `/usr/bin/arch -x86_64 python setup.py build` Make sure to build explicitly for the x86-64 architecture
-* `/usr/bin/arch -x86_64 python setup.py install --optimize=1 --skip-build` Install for the x86-64 architecture
-
-* Install the other dependencies
-* `/usr/bin/arch -x86_64 pip install torch torchvision torchaudio` Install `PyTorch` with pip here, explicitly for the x86 architecture
-* `pip install ffmpeg` Install ffmpeg
-* `pip install -r requirements.txt`
-
-* Run
-> See [this link](https://youtrack.jetbrains.com/issue/PY-46290/Allow-running-Python-under-Rosetta-2-in-PyCharm-for-Apple-Silicon)
-, to run the project in an x86 environment
-* `vim /PathToMockingBird/venv/bin/pythonM1`
-* Write the following code
-```
-#!/usr/bin/env zsh
-mydir=${0:a:h}
-/usr/bin/arch -x86_64 $mydir/python "$@"
-```
-* `chmod +x pythonM1` Make the file executable
-* If you use PyCharm, point the interpreter at `pythonM1`; otherwise run `/PathToMockingBird/venv/bin/pythonM1 demo_toolbox.py` from the command line
-
 ### 2. Prepare pretrained models
 Either train your own model or download a model trained by others in the community:
 > A [Zhihu column](https://www.zhihu.com/column/c_1425605280340504576) was created recently and will be updated from time to time with training tips and lessons learned; questions are welcome

@@ -114,7 +59,7 @@

|

||||

> 假如你下载的 `aidatatang_200zh`文件放在D盘,`train`文件路径为 `D:\data\aidatatang_200zh\corpus\train` , 你的`datasets_root`就是 `D:\data\`

|

||||

|

||||

* 训练合成器:

|

||||

`python ./control/cli/synthesizer_train.py mandarin <datasets_root>/SV2TTS/synthesizer`

|

||||

`python synthesizer_train.py mandarin <datasets_root>/SV2TTS/synthesizer`

|

||||

|

||||

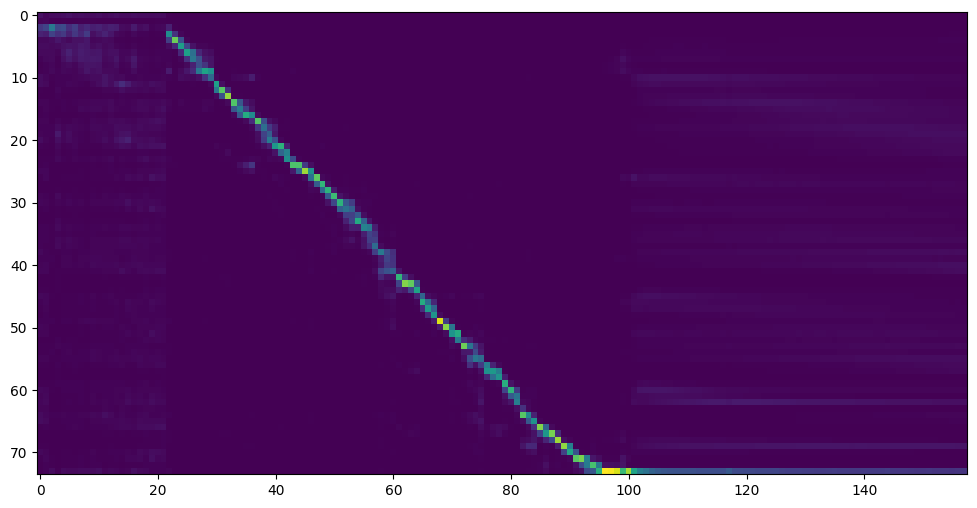

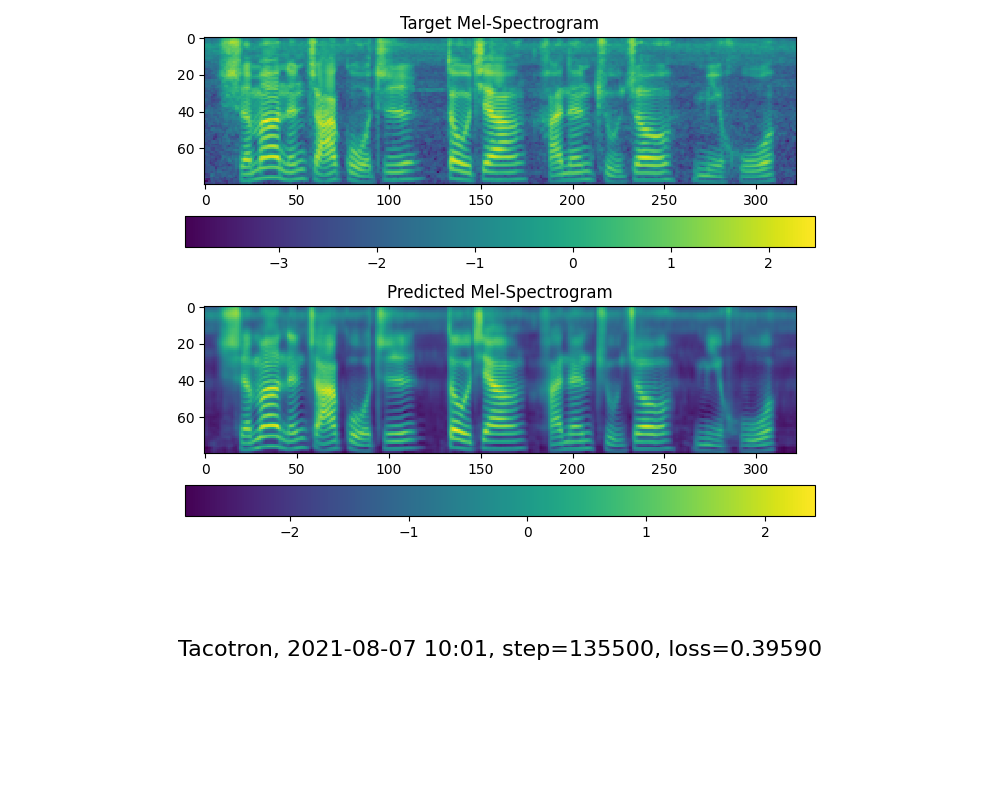

* 当您在训练文件夹 *synthesizer/saved_models/* 中看到注意线显示和损失满足您的需要时,请转到`启动程序`一步。

|

||||

|

||||
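The note at the top of this hunk derives `datasets_root` from the location of the dataset folder. A minimal Python sketch of that rule, assuming Windows-style paths as in the example; the helper name `datasets_root_for` is hypothetical and not part of the repository:

```python
from pathlib import PureWindowsPath


def datasets_root_for(train_dir: str, dataset_name: str = "aidatatang_200zh") -> PureWindowsPath:
    """Return the folder that contains `dataset_name` (hypothetical helper)."""
    path = PureWindowsPath(train_dir)
    # Walk from the given folder up to the drive root and stop at the dataset folder.
    for candidate in (path, *path.parents):
        if candidate.name == dataset_name:
            return candidate.parent
    raise ValueError(f"{dataset_name!r} is not part of {train_dir!r}")


root = datasets_root_for(r"D:\data\aidatatang_200zh\corpus\train")
print(root)  # D:\data
```

Searching for the dataset folder by name, rather than counting a fixed number of parent levels, keeps the sketch valid for datasets whose `train` folder sits at a different depth.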

@@ -125,7 +70,7 @@
 | --- | ----------- | ----- | ----- |
 | Author | https://pan.baidu.com/s/1iONvRxmkI-t1nHqxKytY3g [Baidu Pan link](https://pan.baidu.com/s/1iONvRxmkI-t1nHqxKytY3g) 4j5d | | 75k steps, trained on a mix of 3 open-source datasets
 | Author | https://pan.baidu.com/s/1fMh9IlgKJlL2PIiRTYDUvw [Baidu Pan link](https://pan.baidu.com/s/1fMh9IlgKJlL2PIiRTYDUvw) extraction code: om7f | | 25k steps, trained on a mix of 3 open-source datasets; switch to tag v0.0.1 to use
-|@FawenYo | https://yisiou-my.sharepoint.com/:u:/g/personal/lawrence_cheng_fawenyo_onmicrosoft_com/EWFWDHzee-NNg9TWdKckCc4BC7bK2j9cCbOWn0-_tK0nOg?e=n0gGgC | [input](https://github.com/babysor/MockingBird/wiki/audio/self_test.mp3) [output](https://github.com/babysor/MockingBird/wiki/audio/export.wav) | 200k steps, Taiwanese accent; switch to tag v0.0.1 to use
+|@FawenYo | https://drive.google.com/file/d/1H-YGOUHpmqKxJ9FRc6vAjPuqQki24UbC/view?usp=sharing [Baidu Pan link](https://pan.baidu.com/s/1vSYXO4wsLyjnF3Unl-Xoxg) extraction code: 1024 | [input](https://github.com/babysor/MockingBird/wiki/audio/self_test.mp3) [output](https://github.com/babysor/MockingBird/wiki/audio/export.wav) | 200k steps, Taiwanese accent; switch to tag v0.0.1 to use
 |@miven| https://pan.baidu.com/s/1PI-hM3sn5wbeChRryX-RCQ extraction code: 2021 | https://www.bilibili.com/video/BV1uh411B7AD/ | 150k steps. Note: apply the fix from the [issue](https://github.com/babysor/MockingBird/issues/37) and switch to tag v0.0.1 to use
 
 #### 2.4 Train the vocoder (optional)

@@ -136,14 +81,14 @@
 
 
 * Train the wavernn vocoder:
-`python ./control/cli/vocoder_train.py <trainid> <datasets_root>`
+`python vocoder_train.py <trainid> <datasets_root>`
 > Replace `<trainid>` with an identifier of your choice; training again with the same identifier resumes from the existing model
 
 * Train the hifigan vocoder:
-`python ./control/cli/vocoder_train.py <trainid> <datasets_root> hifigan`
+`python vocoder_train.py <trainid> <datasets_root> hifigan`
 > Replace `<trainid>` with an identifier of your choice; training again with the same identifier resumes from the existing model
 * Train the fregan vocoder:
-`python ./control/cli/vocoder_train.py <trainid> <datasets_root> --config config.json fregan`
+`python vocoder_train.py <trainid> <datasets_root> --config config.json fregan`
 > Replace `<trainid>` with an identifier of your choice; training again with the same identifier resumes from the existing model
 * To switch GAN vocoder training to multi-GPU mode, change the "num_gpus" parameter in the .json file under the GAN folder
 ### 3. Launch the program or the toolbox
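The multi-GPU switch mentioned at the end of this hunk is a one-field change in a JSON config. A minimal sketch, assuming only that the config stores the GPU count under the `num_gpus` key named in the text; the file name `config.json`, the `batch_size` key, and the helper `set_num_gpus` are placeholders, not taken from the repository:

```python
import json
import tempfile
from pathlib import Path


def set_num_gpus(config_path: Path, num_gpus: int) -> dict:
    """Rewrite the "num_gpus" field of a JSON config in place (hypothetical helper)."""
    config = json.loads(config_path.read_text(encoding="utf-8"))
    config["num_gpus"] = num_gpus
    config_path.write_text(json.dumps(config, indent=4), encoding="utf-8")
    return config


# Demo on a throwaway file so that no real config is touched.
with tempfile.TemporaryDirectory() as tmp:
    demo_config = Path(tmp) / "config.json"  # placeholder name
    demo_config.write_text(json.dumps({"num_gpus": 0, "batch_size": 16}), encoding="utf-8")
    updated = set_num_gpus(demo_config, 2)
    reloaded = json.loads(demo_config.read_text(encoding="utf-8"))
```

All other keys are written back unchanged, so the sketch does not depend on what else the real config contains.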

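@@ -164,7 +109,7 @@
 Ever imagined holding a voice changer like Conan and speaking in Kogoro Mouri's voice? Based on PPG-VC, this project adds two extra modules (PPG extractor + PPG2Mel) to enable voice conversion. (The documentation is incomplete, especially the training part, and is being filled in.)
 #### 4.0 Prepare the environment
 * Make sure the environment above is installed, then run `pip install espnet` to install the remaining required packages.
-* Download the following models. Link: https://pan.baidu.com/s/1bl_x_DHJSAUyN2fma-Q_Wg
+* Download the following models. Link: https://pan.baidu.com/s/1bl_x_DHJSAUyN2fma-Q_Wg
 Extraction code: gh41
 * The vocoder (hifigan) dedicated to the 24K sample rate, into *vocoder\saved_models\xxx*
 * The pretrained ppg feature encoder (ppg_extractor), into *ppg_extractor\saved_models\xxx*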

@@ -174,14 +119,14 @@
 
 * Download the aidatatang_200zh dataset and unzip it: make sure you can access all the audio files (such as .wav) in the *train* folder
 * Preprocess the audio and the mel spectrograms:
-`python ./control/cli/pre4ppg.py <datasets_root> -d {dataset} -n {number}`
+`python pre4ppg.py <datasets_root> -d {dataset} -n {number}`
 Supported arguments:
 * `-d {dataset}` selects the dataset; aidatatang_200zh is supported and is the default when the argument is omitted
-* `-n {number}` sets the number of parallel processes; with 8 on an 11700k CPU it takes 12 to 18 hours! To be optimized
+* `-n {number}` sets the number of parallel processes; with 8 on an 11770k CPU it takes 12 to 18 hours! To be optimized
 > If the `aidatatang_200zh` download is on drive D and the `train` folder is at `D:\data\aidatatang_200zh\corpus\train`, then your `datasets_root` is `D:\data\`
 
 * Train the synthesizer. Note: download `ppg2mel.yaml` in the previous step first and edit the paths in it to point to the pretrained folder:
-`python ./control/cli/ppg2mel_train.py --config .\ppg2mel\saved_models\ppg2mel.yaml --oneshotvc `
+`python ppg2mel_train.py --config .\ppg2mel\saved_models\ppg2mel.yaml --oneshotvc `
 * To continue a previous training run, pass `--load .\ppg2mel\saved_models\<old_pt_file>` to specify a pretrained model file.
 
 #### 4.2 Launch the toolbox in VC mode

@@ -203,30 +148,30 @@
 |[1703.10135](https://arxiv.org/pdf/1703.10135.pdf) | Tacotron (synthesizer) | Tacotron: Towards End-to-End Speech Synthesis | [fatchord/WaveRNN](https://github.com/fatchord/WaveRNN)
 |[1710.10467](https://arxiv.org/pdf/1710.10467.pdf) | GE2E (encoder)| Generalized End-To-End Loss for Speaker Verification | This repository |
 
-## FAQ [zh-Hans]
-#### 1. Where can the datasets be downloaded? [zh-Hans]
+## FAQ [zh-Hant]
+#### 1. Where can the datasets be downloaded? [zh-Hant]
 | Dataset | OpenSLR link | Other sources (Google Drive, Baidu Pan, etc.) |
 | --- | ----------- | ---------------|
 | aidatatang_200zh | [OpenSLR](http://www.openslr.org/62/) | [Google Drive](https://drive.google.com/file/d/110A11KZoVe7vy6kXlLb6zVPLb_J91I_t/view?usp=sharing) |
 | magicdata | [OpenSLR](http://www.openslr.org/68/) | [Google Drive (Dev set)](https://drive.google.com/file/d/1g5bWRUSNH68ycC6eNvtwh07nX3QhOOlo/view?usp=sharing) |
 | aishell3 | [OpenSLR](https://www.openslr.org/93/) | [Google Drive](https://drive.google.com/file/d/1shYp_o4Z0X0cZSKQDtFirct2luFUwKzZ/view?usp=sharing) |
 | data_aishell | [OpenSLR](https://www.openslr.org/33/) | |
-> After unzipping aidatatang_200zh, also select and unzip all the archives under `aidatatang_200zh\corpus\train` [zh-Hans]
+> After unzipping aidatatang_200zh, also select and unzip all the archives under `aidatatang_200zh\corpus\train` [zh-Hant]
 
 #### 2. What does `<datasets_root>` mean?
-If the dataset path is `D:\data\aidatatang_200zh`, then `<datasets_root>` is `D:\data` [zh-Hans]
+If the dataset path is `D:\data\aidatatang_200zh`, then `<datasets_root>` is `D:\data` [zh-Hant]
 
-#### 3. Out of GPU memory when training a model [zh-Hans]
-When training the synthesizer: lower the batch_size parameter in `synthesizer/hparams.py` [zh-Hans]
+#### 3. Out of GPU memory when training a model [zh-Hant]
+When training the synthesizer: lower the batch_size parameter in `synthesizer/hparams.py` [zh-Hant]
 ```
-// Before [zh-Hans]
+// Before [zh-Hant]
 tts_schedule = [(2, 1e-3, 20_000, 12), # Progressive training schedule
 (2, 5e-4, 40_000, 12), # (r, lr, step, batch_size)
 (2, 2e-4, 80_000, 12), #
 (2, 1e-4, 160_000, 12), # r = reduction factor (# of mel frames
 (2, 3e-5, 320_000, 12), # synthesized for each decoder iteration)
 (2, 1e-5, 640_000, 12)], # lr = learning rate
-// After [zh-Hans]
+// After [zh-Hant]
 tts_schedule = [(2, 1e-3, 20_000, 8), # Progressive training schedule
 (2, 5e-4, 40_000, 8), # (r, lr, step, batch_size)
 (2, 2e-4, 80_000, 8), #

@@ -235,15 +180,15 @@ tts_schedule = [(2, 1e-3, 20_000, 8), # Progressive training schedule
 (2, 1e-5, 640_000, 8)], # lr = learning rate
 ```
 
-When preprocessing the dataset for the vocoder: lower the batch_size parameter in `synthesizer/hparams.py` [zh-Hans]
+When preprocessing the dataset for the vocoder: lower the batch_size parameter in `synthesizer/hparams.py` [zh-Hant]
 ```
-// Before [zh-Hans]
+// Before [zh-Hant]
 ### Data Preprocessing
 max_mel_frames = 900,
 rescale = True,
 rescaling_max = 0.9,
 synthesis_batch_size = 16, # For vocoder preprocessing and inference.
-// After [zh-Hans]
+// After [zh-Hant]
 ### Data Preprocessing
 max_mel_frames = 900,
 rescale = True,

@@ -251,16 +196,16 @@ tts_schedule = [(2, 1e-3, 20_000, 8), # Progressive training schedule
 synthesis_batch_size = 8, # For vocoder preprocessing and inference.
 ```
 
-When training the vocoder: lower the batch_size parameter in `vocoder/wavernn/hparams.py` [zh-Hans]
+When training the vocoder: lower the batch_size parameter in `vocoder/wavernn/hparams.py` [zh-Hant]
 ```
-// Before [zh-Hans]
+// Before [zh-Hant]
 # Training
 voc_batch_size = 100
 voc_lr = 1e-4
 voc_gen_at_checkpoint = 5
 voc_pad = 2
 
-// After [zh-Hans]
+// After [zh-Hant]
 # Training
 voc_batch_size = 6
 voc_lr = 1e-4

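@@ -269,16 +214,17 @@ voc_pad =2
 ```
 
 #### 4. Encountering `RuntimeError: Error(s) in loading state_dict for Tacotron: size mismatch for encoder.embedding.weight: copying a param with shape torch.Size([70, 512]) from checkpoint, the shape in current model is torch.Size([75, 512]).`
-See issue [#37](https://github.com/babysor/MockingBird/issues/37) [zh-Hans]
+See issue [#37](https://github.com/babysor/MockingBird/issues/37) [zh-Hant]
 
-#### 5. How can CPU and GPU utilization be improved? [zh-Hans]
-Adjust the batch_size parameter as appropriate [zh-Hans]
+#### 5. How can CPU and GPU utilization be improved? [zh-Hant]
+Adjust the batch_size parameter as appropriate [zh-Hant]
 
-#### 6. Error `The paging file is too small for this operation to complete` [zh-Hans]
-See this [article](https://blog.csdn.net/qq_17755303/article/details/112564030) and change the virtual memory to 100G (102400); for example, if the files are on drive D, change the virtual memory of drive D [zh-Hans]
+#### 6. Error `The paging file is too small for this operation to complete` [zh-Hant]
+See this [article](https://blog.csdn.net/qq_17755303/article/details/112564030) and change the virtual memory to 100G (102400); for example, if the files are on drive D, change the virtual memory of drive D [zh-Hant]
 
 #### 7. When is training finished?
 An attention pattern must appear first, and then the loss must be low enough; both depend on the hardware and the dataset. For reference, in my case attention appeared after 18k steps and the loss dropped below 0.4 after 50k steps
 
 
 
+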
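FAQ 3 in the hunks above lowers the batch size by editing the fourth field of every `tts_schedule` entry by hand. A minimal sketch of the same change done programmatically, using the schedule values shown in the diff; the helper `with_batch_size` is hypothetical and not part of `synthesizer/hparams.py`:

```python
# Progressive training schedule as shown above: (r, lr, step, batch_size)
tts_schedule = [(2, 1e-3, 20_000, 12),
                (2, 5e-4, 40_000, 12),
                (2, 2e-4, 80_000, 12),
                (2, 1e-4, 160_000, 12),
                (2, 3e-5, 320_000, 12),
                (2, 1e-5, 640_000, 12)]


def with_batch_size(schedule, batch_size):
    """Return a copy of the schedule with every batch size replaced (hypothetical helper)."""
    return [(r, lr, step, batch_size) for r, lr, step, _ in schedule]


smaller = with_batch_size(tts_schedule, 8)
print(smaller[0])  # (2, 0.001, 20000, 8)
```

Only the batch size changes; the reduction factor, learning rate, and step boundaries of each stage stay as they were.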

@@ -1,223 +0,0 @@
-## Real-time voice cloning - Chinese/Mandarin
-
-
-[](http://choosealicense.com/licenses/mit/)
-
-### [English](README.md) | 中文
-
-### [DEMO VIDEO](https://www.bilibili.com/video/BV17Q4y1B7mY/) | [Wiki tutorial](https://github.com/babysor/MockingBird/wiki/Quick-Start-(Newbie)) | [Training tutorial](https://vaj2fgg8yn.feishu.cn/docs/doccn7kAbr3SJz0KM0SIDJ0Xnhd)
-
-## Features
-🌍 **Chinese** Supports Mandarin and has been tested with several Chinese datasets: aidatatang_200zh, magicdata, aishell3, biaobei, MozillaCommonVoice, data_aishell, and others
-
-🤩 **Easy & Awesome** Good results from just downloading or newly training the synthesizer, reusing the pretrained encoder/vocoder, or using real-time HiFi-GAN as the vocoder
-
-🌍 **Webserver Ready** Serve your trained results for remote calls.
-
-🤩 **Thanks to everyone for the support; this project is starting a new round of updates**
-

-## 1. Quick start
-### 1.1 Recommended environment
-- Ubuntu 18.04
-- Cuda 11.7 && CuDNN 8.5.0
-- Python 3.8 or 3.9
-- Pytorch 2.0.1 <post cuda-11.7>
-### 1.2 Environment setup
-```shell
-# Switching to a mirror in mainland China before downloading is recommended
-
-conda create -n sound python=3.9
-
-conda activate sound
-
-git clone https://github.com/babysor/MockingBird.git
-
-cd MockingBird
-
-pip install -r requirements.txt
-
-pip install webrtcvad-wheels
-
-pip install torch torchvision torchaudio --index-url https://download.pytorch.org/whl/cu117
-```
-
-### 1.3 Model preparation
-> If you have no suitable hardware or do not want to spend time tuning, you can use models contributed by the community (further sharing is welcome):
-
-| Author | Download link | Preview | Info |
-| --- | ----------- | ----- | ----- |
-| Author | https://pan.baidu.com/s/1iONvRxmkI-t1nHqxKytY3g [Baidu Pan link](https://pan.baidu.com/s/1iONvRxmkI-t1nHqxKytY3g) 4j5d | | 75k steps, trained on a mix of 3 open-source datasets
-| Author | https://pan.baidu.com/s/1fMh9IlgKJlL2PIiRTYDUvw [Baidu Pan link](https://pan.baidu.com/s/1fMh9IlgKJlL2PIiRTYDUvw) extraction code: om7f | | 25k steps, trained on a mix of 3 open-source datasets; switch to tag v0.0.1 to use
-|@FawenYo | https://drive.google.com/file/d/1H-YGOUHpmqKxJ9FRc6vAjPuqQki24UbC/view?usp=sharing [Baidu Pan link](https://pan.baidu.com/s/1vSYXO4wsLyjnF3Unl-Xoxg) extraction code: 1024 | [input](https://github.com/babysor/MockingBird/wiki/audio/self_test.mp3) [output](https://github.com/babysor/MockingBird/wiki/audio/export.wav) | 200k steps, Taiwanese accent; switch to tag v0.0.1 to use
-|@miven| https://pan.baidu.com/s/1PI-hM3sn5wbeChRryX-RCQ extraction code: 2021 | https://www.bilibili.com/video/BV1uh411B7AD/ | 150k steps. Note: apply the fix from the [issue](https://github.com/babysor/MockingBird/issues/37) and switch to tag v0.0.1 to use
-
-### 1.4 File structure preparation
-Prepare the file structure as shown below; the program automatically scans the .pt model files under synthesizer.
-```
-# Using the first model, pretrained-11-7-21_75k.pt, as an example
-
-└── data
-    └── ckpt
-        └── synthesizer
-            └── pretrained-11-7-21_75k.pt
-```
-### 1.5 Run
-```
-python web.py
-```
-

-## 2. Model training
-### 2.1 Data preparation
-#### 2.1.1 Data download
-``` shell
-# aidatatang_200zh
-
-wget https://openslr.elda.org/resources/62/aidatatang_200zh.tgz
-```
-``` shell
-# MAGICDATA
-
-wget https://openslr.magicdatatech.com/resources/68/train_set.tar.gz
-
-wget https://openslr.magicdatatech.com/resources/68/dev_set.tar.gz
-
-wget https://openslr.magicdatatech.com/resources/68/test_set.tar.gz
-```
-``` shell
-# AISHELL-3
-
-wget https://openslr.elda.org/resources/93/data_aishell3.tgz
-```
-```shell
-# Aishell
-
-wget https://openslr.elda.org/resources/33/data_aishell.tgz
-```
-#### 2.1.2 Batch extraction
-```shell
-# This command extracts all archives in the current directory
-
-for gz in *.gz; do tar -zxvf $gz; done
-```

-### 2.2 Encoder model training
-#### 2.2.1 Data preprocessing:
-First add the following at the top of `pre.py`:
-```python
-import torch
-torch.multiprocessing.set_start_method('spawn', force=True)
-```
-Preprocess the data with the following command:
-```shell
-python pre.py <datasets_root> \
--d <datasets_name>
-```
-Here `<datasets_root>` is the path of the original dataset and `<datasets_name>` is the dataset name.
-
-`librispeech_other`, `voxceleb1` and `aidatatang_200zh` are supported; separate several datasets with commas.
-
-### 2.2.2 Encoder model training:
-Hyperparameter file path: `models/encoder/hparams.py`
-```shell
-python encoder_train.py <name> \
-<datasets_root>/SV2TTS/encoder
-```
-Here `<name>` is the name used for the files produced by training; choose it freely.
-
-Here `<datasets_root>` is the dataset path after processing in `Step 2.1.1`.
-#### 2.2.3 Enable visualization of encoder training data (optional)
-```shell
-visdom
-```
-

-### 2.3 Synthesizer model training
-#### 2.3.1 Data preprocessing:
-```shell
-python pre.py <datasets_root> \
--d <datasets_name> \
--o <datasets_path> \
--n <number>
-```
-`<datasets_root>` is the path of the original dataset; if your `aidatatang_200zh` is at `/data/aidatatang_200zh/corpus/train`, then `<datasets_root>` is `/data/`.
-
-`<datasets_name>` is the dataset name.
-
-`<datasets_path>` is where the processed dataset is saved.
-
-`<number>` is the number of processes used for preprocessing; adjust it to your CPU.
-
-#### 2.3.2 Preprocessing additional data:
-```shell
-python pre.py <datasets_root> \
--d <datasets_name> \
--o <datasets_path> \
--n <number> \
--s
-```
-When adding a dataset, pass `-s` to append to the existing data; without it the existing data is overwritten.
-#### 2.3.3 Synthesizer model training:
-Hyperparameter file path: `models/synthesizer/hparams.py`. `MockingBird/control/cli/synthesizer_train.py` must first be moved to `MockingBird/synthesizer_train.py`.
-```shell
-python synthesizer_train.py <name> <datasets_path> \
--m <out_dir>
-```
-Here `<name>` is the name used for the files produced by training; choose it freely.
-
-Here `<datasets_path>` is the dataset path after processing in `Step 2.2.1`.
-
-Here `<out_dir>` is where all data produced during training is saved.
-

-### 2.4 Vocoder model training
-The vocoder model has little effect on the output quality; 3 are already bundled.
-#### 2.4.1 Data preprocessing
-```shell
-python vocoder_preprocess.py <datasets_root> \
--m <synthesizer_model_path>
-```
-
-Here `<datasets_root>` is the path of your dataset.
-
-Here `<synthesizer_model_path>` is the location of the synthesizer model.
-
-#### 2.4.2 wavernn vocoder training:
-```
-python vocoder_train.py <name> <datasets_root>
-```
-#### 2.4.3 hifigan vocoder training:
-```
-python vocoder_train.py <name> <datasets_root> hifigan
-```
-#### 2.4.4 fregan vocoder training:
-```
-python vocoder_train.py <name> <datasets_root> \
---config config.json fregan
-```
-To switch GAN vocoder training to multi-GPU mode, change the `num_gpus` parameter in the `.json` file under the `GAN` folder.
-
-
-
-
-

-## 3. Acknowledgements
-### 3.1 Project acknowledgements
-This repository was originally forked from [Real-Time-Voice-Cloning](https://github.com/CorentinJ/Real-Time-Voice-Cloning), which only supports English. Thanks to its author.
-### 3.2 Paper acknowledgements
-| URL | Designation | Title | Implementation source |
-| --- | ----------- | ----- | --------------------- |
-| [1803.09017](https://arxiv.org/abs/1803.09017) | GlobalStyleToken (synthesizer)| Style Tokens: Unsupervised Style Modeling, Control and Transfer in End-to-End Speech Synthesis | This repository |
-| [2010.05646](https://arxiv.org/abs/2010.05646) | HiFi-GAN (vocoder)| Generative Adversarial Networks for Efficient and High Fidelity Speech Synthesis | This repository |
-| [2106.02297](https://arxiv.org/abs/2106.02297) | Fre-GAN (vocoder)| Fre-GAN: Adversarial Frequency-consistent Audio Synthesis | This repository |
-|[**1806.04558**](https://arxiv.org/pdf/1806.04558.pdf) | SV2TTS | Transfer Learning from Speaker Verification to Multispeaker Text-To-Speech Synthesis | This repository |
-|[1802.08435](https://arxiv.org/pdf/1802.08435.pdf) | WaveRNN (vocoder) | Efficient Neural Audio Synthesis | [fatchord/WaveRNN](https://github.com/fatchord/WaveRNN) |
-|[1703.10135](https://arxiv.org/pdf/1703.10135.pdf) | Tacotron (synthesizer) | Tacotron: Towards End-to-End Speech Synthesis | [fatchord/WaveRNN](https://github.com/fatchord/WaveRNN)
-|[1710.10467](https://arxiv.org/pdf/1710.10467.pdf) | GE2E (encoder)| Generalized End-To-End Loss for Speaker Verification | This repository |
-
-### 3.3 Developer acknowledgements
-
-As practitioners in the AI field, we enjoy building milestone algorithm projects, and we equally enjoy sharing the projects and the satisfaction gained while developing them.
-
-Your use of the project is therefore the greatest recognition it can receive. If you run into problems while using it, feel free to leave a message in the issues at any time. Your corrections matter a great deal for the further improvement of the project.
-
-To express our thanks, we will record each developer and the corresponding contribution in this project.
-
-- ------------------------------------------------ Developer contributions ---------------------------------------------------------------------------------
-
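The shell loop in section 2.1.2 of the removed file matches only `*.gz`, while several of the downloads listed in section 2.1.1 end in `.tgz` and would be skipped by it. A minimal Python sketch that unpacks both kinds of archive in a directory, using only the standard library; the helper name `extract_archives` is hypothetical, and like `tar -zxvf` it trusts the archive contents:

```python
import tarfile
from pathlib import Path


def extract_archives(directory):
    """Unpack every .tar.gz and .tgz archive found directly in `directory`."""
    target = Path(directory)
    extracted = []
    # sorted() materializes the listing first, so newly extracted files are not revisited.
    for archive in sorted(target.iterdir()):
        if archive.name.endswith((".tar.gz", ".tgz")):
            # "r:gz" opens a gzip-compressed tar file for reading.
            with tarfile.open(archive, "r:gz") as tar:
                tar.extractall(path=target)
            extracted.append(archive.name)
    return extracted
```

Calling `extract_archives(".")` in the download folder would return the names of the archives it unpacked, which makes it easy to confirm that every dataset was handled.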

README.md: 88 lines changed

@@ -1,11 +1,9 @@
-> 🚧 While I no longer actively update this repo, you can find me continuously pushing this tech forward to good side and open-source. I'm also building an optimized and cloud hosted version: https://noiz.ai/ and it's free but not ready for commersial use now.
->
 
-<a href="https://trendshift.io/repositories/3869" target="_blank"><img src="https://trendshift.io/api/badge/repositories/3869" alt="babysor%2FMockingBird | Trendshift" style="width: 250px; height: 55px;" width="250" height="55"/></a>
 
+
 [](http://choosealicense.com/licenses/mit/)
 
-> English | [中文](README-CN.md)| [中文Linux](README-LINUX-CN.md)
+> English | [中文](README-CN.md)
 
 ## Features
 🌍 **Chinese** supported mandarin and tested with multiple datasets: aidatatang_200zh, magicdata, aishell3, data_aishell, and etc.

@@ -20,10 +18,17 @@
 
 ### [DEMO VIDEO](https://www.bilibili.com/video/BV17Q4y1B7mY/)
 
+### Ongoing Works(Helps Needed)
+* Major upgrade on GUI/Client and unifying web and toolbox
+[X] Init framework `./mkgui` and [tech design](https://vaj2fgg8yn.feishu.cn/docs/doccnvotLWylBub8VJIjKzoEaee)
+[X] Add demo part of Voice Cloning and Conversion
+[X] Add preprocessing and training for Voice Conversion
+[ ] Add preprocessing and training for Encoder/Synthesizer/Vocoder
+* Major upgrade on model backend based on ESPnet2(not yet started)
+
 ## Quick Start
 
 ### 1. Install Requirements
-#### 1.1 General Setup
 > Follow the original repo to test if you got all environment ready.
 **Python 3.7 or higher ** is needed to run the toolbox.
 

@@ -31,76 +36,9 @@

|

||||

> If you get an `ERROR: Could not find a version that satisfies the requirement torch==1.9.0+cu102 (from versions: 0.1.2, 0.1.2.post1, 0.1.2.post2 )` This error is probably due to a low version of python, try using 3.9 and it will install successfully

|

||||

* Install [ffmpeg](https://ffmpeg.org/download.html#get-packages).

|

||||

* Run `pip install -r requirements.txt` to install the remaining necessary packages.

|

||||

> The recommended environment here is `Repo Tag 0.0.1` `Pytorch1.9.0 with Torchvision0.10.0 and cudatoolkit10.2` `requirements.txt` `webrtcvad-wheels` because `requirements. txt` was exported a few months ago, so it doesn't work with newer versions

|

||||

* Install webrtcvad `pip install webrtcvad-wheels`(If you need)

|

||||

|

||||

or

|

||||

- install dependencies with `conda` or `mamba`

|

||||

|

||||

```conda env create -n env_name -f env.yml```

|

||||

|

||||

```mamba env create -n env_name -f env.yml```

|

||||

|

||||

will create a virtual environment where necessary dependencies are installed. Switch to the new environment by `conda activate env_name` and enjoy it.

|

||||

> env.yml only includes the necessary dependencies to run the project,temporarily without monotonic-align. You can check the official website to install the GPU version of pytorch.

|

||||

|

||||

#### 1.2 Setup with a M1 Mac

|

||||

> The following steps are a workaround to directly use the original `demo_toolbox.py`without the changing of codes.

|

||||

>

|

||||

> Since the major issue comes with the PyQt5 packages used in `demo_toolbox.py` not compatible with M1 chips, were one to attempt on training models with the M1 chip, either that person can forgo `demo_toolbox.py`, or one can try the `web.py` in the project.

|

||||

|

||||

##### 1.2.1 Install `PyQt5`, with [ref](https://stackoverflow.com/a/68038451/20455983) here.

|

||||

* Create and open a Rosetta Terminal, with [ref](https://dev.to/courier/tips-and-tricks-to-setup-your-apple-m1-for-development-547g) here.

|

||||

* Use system Python to create a virtual environment for the project

|

||||

```

|

||||

/usr/bin/python3 -m venv /PathToMockingBird/venv

|

||||

source /PathToMockingBird/venv/bin/activate

|

||||

```

|

||||

* Upgrade pip and install `PyQt5`

|

||||

```

|

||||

pip install --upgrade pip

|

||||

pip install pyqt5

|

||||

```

|

||||

##### 1.2.2 Install `pyworld` and `ctc-segmentation`

|

||||

|

||||

> Both packages seem to be unique to this project and are not seen in the original [Real-Time Voice Cloning](https://github.com/CorentinJ/Real-Time-Voice-Cloning) project. When installing with `pip install`, both packages lack wheels so the program tries to directly compile from c code and could not find `Python.h`.

|

||||

|

||||

* Install `pyworld`

|

||||

* `brew install python` `Python.h` can come with Python installed by brew

|

||||

* `export CPLUS_INCLUDE_PATH=/opt/homebrew/Frameworks/Python.framework/Headers` The filepath of brew-installed `Python.h` is unique to M1 MacOS and listed above. One needs to manually add the path to the environment variables.

|

||||

* `pip install pyworld` that should do.

|

||||

|

||||

|

||||

* Install`ctc-segmentation`

|

||||

> Same method does not apply to `ctc-segmentation`, and one needs to compile it from the source code on [github](https://github.com/lumaku/ctc-segmentation).

|

||||

* `git clone https://github.com/lumaku/ctc-segmentation.git`

|

||||

* `cd ctc-segmentation`

|

||||

* `source /PathToMockingBird/venv/bin/activate` If the virtual environment hasn't been deployed, activate it.

|

||||

* `cythonize -3 ctc_segmentation/ctc_segmentation_dyn.pyx`

|

||||

* `/usr/bin/arch -x86_64 python setup.py build` Build with x86 architecture.

|

||||

* `/usr/bin/arch -x86_64 python setup.py install --optimize=1 --skip-build`Install with x86 architecture.

|

||||

|

||||

##### 1.2.3 Other dependencies

|

||||

* `/usr/bin/arch -x86_64 pip install torch torchvision torchaudio` Install `PyTorch` with pip as an example; the `arch` prefix makes explicit that it is installed for the x86 architecture.

|

||||

* `pip install ffmpeg` Install ffmpeg

|

||||

* `pip install -r requirements.txt` Install other requirements.
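
After the installation steps above, it can help to confirm that the packages are visible to the interpreter before launching the toolbox. The following is a minimal sketch, not part of the project: `missing_modules` is a hypothetical helper, and the import names in `required` are assumptions about how the installed packages are imported.

```python
import importlib.util


def missing_modules(names):
    """Return the top-level module names from `names` that cannot be located."""
    return [name for name in names if importlib.util.find_spec(name) is None]


if __name__ == "__main__":
    # Assumed import names for the packages installed in the steps above.
    required = ["PyQt5", "pyworld", "ctc_segmentation", "torch"]
    missing = missing_modules(required)
    print("missing:", ", ".join(missing) if missing else "none")
```

Run it with the same interpreter that will run the toolbox, since a module found by one interpreter may be invisible to another.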

|

||||

|

||||

##### 1.2.4 Run Inference (with Toolbox)

|

||||

> The following steps run the project under the x86 architecture ([ref](https://youtrack.jetbrains.com/issue/PY-46290/Allow-running-Python-under-Rosetta-2-in-PyCharm-for-Apple-Silicon)).

|

||||

* `vim /PathToMockingBird/venv/bin/pythonM1` Create an executable wrapper file `pythonM1` in `/PathToMockingBird/venv/bin` that launches the Python interpreter under the x86 architecture.

|

||||

* Write in the following content:

|

||||

```

|

||||

#!/usr/bin/env zsh

|

||||

mydir=${0:a:h}

|

||||

/usr/bin/arch -x86_64 $mydir/python "$@"

|

||||

```

|

||||

* `chmod +x pythonM1` Set the file as executable.

|

||||

* If using the PyCharm IDE, configure the project interpreter to `pythonM1` ([steps here](https://www.jetbrains.com/help/pycharm/configuring-python-interpreter.html#add-existing-interpreter)). If using command-line Python, run `/PathToMockingBird/venv/bin/pythonM1 demo_toolbox.py`.
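
To check that the `pythonM1` wrapper above really starts Python under x86, one option is to print the architecture the interpreter reports. This is a rough sketch that assumes `platform.machine()` reports `x86_64` when running under Rosetta 2 and `arm64` when running natively; `arch_label` is a hypothetical helper name.

```python
import platform


def arch_label(machine):
    """Map a `platform.machine()` value to a short description."""
    if machine == "x86_64":
        return "x86_64 (Intel, or Rosetta 2 on Apple Silicon)"
    if machine == "arm64":
        return "arm64 (native Apple Silicon)"
    return f"other ({machine})"


if __name__ == "__main__":
    # Run this file once with `python` and once with `pythonM1` to compare.
    print(arch_label(platform.machine()))
```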

|

||||

|

||||

|

||||

> Note that we are using the pretrained encoder/vocoder but synthesizer since the original model is incompatible with the Chinese symbols. It means the demo_cli is not working at this moment.

|

||||

### 2. Prepare your models

|

||||

> Note that we are using the pretrained encoder/vocoder but not synthesizer, since the original model is incompatible with the Chinese symbols. It means the demo_cli is not working at this moment, so additional synthesizer models are required.

|

||||

|

||||

You can either train your models or use existing ones:

|

||||

|

||||

#### 2.1 Train encoder with your dataset (Optional)

|

||||

@@ -118,7 +56,7 @@ You can either train your models or use existing ones:

|

||||

Allowing parameter `--dataset {dataset}` to support aidatatang_200zh, magicdata, aishell3, data_aishell, etc. If this parameter is not passed, the default dataset will be aidatatang_200zh.

|

||||

|

||||

* Train the synthesizer:

|

||||

`python train.py --type=synth mandarin <datasets_root>/SV2TTS/synthesizer`

|

||||

`python synthesizer_train.py mandarin <datasets_root>/SV2TTS/synthesizer`

|

||||

|

||||

* Go to the next step once the attention line appears and the loss meets your needs in the training folder *synthesizer/saved_models/*.
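
The `--dataset` behaviour described above (an optional parameter that falls back to aidatatang_200zh) could be expressed with `argparse` roughly as follows. This is only a sketch under assumptions: `build_parser` and the `datasets_root` positional argument are illustrative and do not reproduce the project's actual preprocessing script.

```python
import argparse


def build_parser():
    """Build an illustrative parser with a defaulted --dataset option."""
    parser = argparse.ArgumentParser(
        description="Illustrative preprocessing CLI (hypothetical).")
    parser.add_argument(
        "datasets_root",
        help="Root folder that contains the dataset directories.")
    parser.add_argument(
        "-d", "--dataset", default="aidatatang_200zh",
        help="Dataset name, e.g. aidatatang_200zh, magicdata, aishell3, data_aishell.")
    return parser


if __name__ == "__main__":
    # Parse an explicit argument list so the example does not depend on sys.argv.
    args = build_parser().parse_args(["<datasets_root>", "--dataset", "magicdata"])
    print(args.dataset)
```

Leaving `--dataset` out of the argument list yields the default value instead.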

|

||||

|

||||

@@ -129,7 +67,7 @@ Allowing parameter `--dataset {dataset}` to support aidatatang_200zh, magicdata,

|

||||

| --- | ----------- | ----- |----- |

|

||||

| @author | https://pan.baidu.com/s/1iONvRxmkI-t1nHqxKytY3g [Baidu](https://pan.baidu.com/s/1iONvRxmkI-t1nHqxKytY3g) 4j5d | | 75k steps trained by multiple datasets

|

||||

| @author | https://pan.baidu.com/s/1fMh9IlgKJlL2PIiRTYDUvw [Baidu](https://pan.baidu.com/s/1fMh9IlgKJlL2PIiRTYDUvw) code:om7f | | 25k steps trained by multiple datasets, only works under version 0.0.1

|

||||

|@FawenYo | https://yisiou-my.sharepoint.com/:u:/g/personal/lawrence_cheng_fawenyo_onmicrosoft_com/EWFWDHzee-NNg9TWdKckCc4BC7bK2j9cCbOWn0-_tK0nOg?e=n0gGgC | [input](https://github.com/babysor/MockingBird/wiki/audio/self_test.mp3) [output](https://github.com/babysor/MockingBird/wiki/audio/export.wav) | 200k steps with local accent of Taiwan, only works under version 0.0.1

|

||||

|@FawenYo | https://drive.google.com/file/d/1H-YGOUHpmqKxJ9FRc6vAjPuqQki24UbC/view?usp=sharing https://u.teknik.io/AYxWf.pt | [input](https://github.com/babysor/MockingBird/wiki/audio/self_test.mp3) [output](https://github.com/babysor/MockingBird/wiki/audio/export.wav) | 200k steps with local accent of Taiwan, only works under version 0.0.1

|

||||

|@miven| https://pan.baidu.com/s/1PI-hM3sn5wbeChRryX-RCQ code: 2021 https://www.aliyundrive.com/s/AwPsbo8mcSP code: z2m0 | https://www.bilibili.com/video/BV1uh411B7AD/ | only works under version 0.0.1

|

||||

|

||||

#### 2.4 Train vocoder (Optional)

|

||||

|

||||

@@ -1,9 +1,9 @@

|

||||

from models.encoder.params_model import model_embedding_size as speaker_embedding_size

|

||||

from encoder.params_model import model_embedding_size as speaker_embedding_size

|

||||

from utils.argutils import print_args

|

||||

from utils.modelutils import check_model_paths

|

||||

from models.synthesizer.inference import Synthesizer

|

||||

from models.encoder import inference as encoder

|

||||

from models.vocoder import inference as vocoder

|

||||

from synthesizer.inference import Synthesizer

|

||||

from encoder import inference as encoder

|

||||

from vocoder import inference as vocoder

|

||||

from pathlib import Path

|

||||

import numpy as np

|

||||

import soundfile as sf

|

||||

|

||||

@@ -1,66 +0,0 @@

|

||||

import sys

|

||||

import torch

|

||||

import argparse

|

||||

import numpy as np

|

||||

from utils.hparams import HpsYaml

|

||||

from models.ppg2mel.train.train_linglf02mel_seq2seq_oneshotvc import Solver

|

||||

|

||||

# For reproducibility, comment these may speed up training

|

||||

torch.backends.cudnn.deterministic = True

|

||||

torch.backends.cudnn.benchmark = False

|

||||

|

||||

def main():

|

||||

# Arguments

|

||||

parser = argparse.ArgumentParser(description=

|

||||

'Training PPG2Mel VC model.')

|

||||

parser.add_argument('--config', type=str,

|

||||

help='Path to experiment config, e.g., config/vc.yaml')

|

||||

parser.add_argument('--name', default=None, type=str, help='Name for logging.')

|

||||

parser.add_argument('--logdir', default='log/', type=str,

|

||||

help='Logging path.', required=False)

|

||||

parser.add_argument('--ckpdir', default='ppg2mel/saved_models/', type=str,

|

||||

help='Checkpoint path.', required=False)

|

||||

parser.add_argument('--outdir', default='result/', type=str,

|

||||

help='Decode output path.', required=False)

|

||||

parser.add_argument('--load', default=None, type=str,

|

||||

help='Load pre-trained model (for training only)', required=False)

|

||||

parser.add_argument('--warm_start', action='store_true',

|

||||

help='Load model weights only, ignore specified layers.')

|

||||

parser.add_argument('--seed', default=0, type=int,

|

||||

help='Random seed for reproducable results.', required=False)

|

||||

parser.add_argument('--njobs', default=8, type=int,

|

||||

help='Number of threads for dataloader/decoding.', required=False)

|

||||

parser.add_argument('--cpu', action='store_true', help='Disable GPU training.')

|

||||

parser.add_argument('--no-pin', action='store_true',

|

||||

help='Disable pin-memory for dataloader')

|

||||

parser.add_argument('--test', action='store_true', help='Test the model.')

|

||||

parser.add_argument('--no-msg', action='store_true', help='Hide all messages.')

|

||||

parser.add_argument('--finetune', action='store_true', help='Finetune model')

|

||||

parser.add_argument('--oneshotvc', action='store_true', help='Oneshot VC model')

|

||||

parser.add_argument('--bilstm', action='store_true', help='BiLSTM VC model')

|

||||

parser.add_argument('--lsa', action='store_true', help='Use location-sensitive attention (LSA)')

|

||||

|

||||

###

|

||||

paras = parser.parse_args()

|

||||

setattr(paras, 'gpu', not paras.cpu)

|

||||

setattr(paras, 'pin_memory', not paras.no_pin)

|

||||

setattr(paras, 'verbose', not paras.no_msg)

|

||||

# Make the config dict dot visitable

|

||||

config = HpsYaml(paras.config)

|

||||

|

||||

np.random.seed(paras.seed)

|

||||

torch.manual_seed(paras.seed)

|

||||

if torch.cuda.is_available():

|

||||

torch.cuda.manual_seed_all(paras.seed)

|

||||

|

||||

print(">>> OneShot VC training ...")

|

||||

mode = "train"

|

||||

solver = Solver(config, paras, mode)

|

||||

solver.load_data()

|

||||

solver.set_model()

|

||||

solver.exec()

|

||||

print(">>> Oneshot VC train finished!")

|

||||

sys.exit(0)

|

||||

|

||||

if __name__ == "__main__":

|

||||

main()

|

||||

@@ -1,31 +0,0 @@

|

||||

{

|

||||

"resblock": "1",

|

||||

"num_gpus": 0,

|

||||

"batch_size": 16,

|

||||

"learning_rate": 0.0002,

|

||||

"adam_b1": 0.8,

|

||||

"adam_b2": 0.99,

|

||||

"lr_decay": 0.999,

|

||||

"seed": 1234,

|

||||

|

||||

"upsample_rates": [5,5,4,2],

|

||||

"upsample_kernel_sizes": [10,10,8,4],

|

||||

"upsample_initial_channel": 512,

|

||||

"resblock_kernel_sizes": [3,7,11],

|

||||

"resblock_dilation_sizes": [[1,3,5], [1,3,5], [1,3,5]],

|

||||

|

||||

"segment_size": 6400,

|

||||

"num_mels": 80,

|

||||

"num_freq": 1025,

|

||||

"n_fft": 1024,

|

||||

"hop_size": 200,

|

||||

"win_size": 800,

|

||||

|

||||

"sampling_rate": 16000,

|

||||

|

||||

"fmin": 0,

|

||||

"fmax": 7600,

|

||||

"fmax_for_loss": null,

|

||||

|

||||

"num_workers": 4

|

||||

}

|

||||

@@ -1,8 +0,0 @@

|

||||

https://openslr.magicdatatech.com/resources/62/aidatatang_200zh.tgz

|

||||

out=download/aidatatang_200zh.tgz

|

||||

https://openslr.magicdatatech.com/resources/68/train_set.tar.gz

|

||||

out=download/magicdata.tgz

|

||||

https://openslr.magicdatatech.com/resources/93/data_aishell3.tgz

|

||||

out=download/aishell3.tgz

|

||||

https://openslr.magicdatatech.com/resources/33/data_aishell.tgz

|

||||

out=download/data_aishell.tgz

|

||||

@@ -1,8 +0,0 @@

|

||||

https://openslr.elda.org/resources/62/aidatatang_200zh.tgz

|

||||

out=download/aidatatang_200zh.tgz

|

||||

https://openslr.elda.org/resources/68/train_set.tar.gz

|

||||

out=download/magicdata.tgz

|

||||

https://openslr.elda.org/resources/93/data_aishell3.tgz

|

||||

out=download/aishell3.tgz

|

||||

https://openslr.elda.org/resources/33/data_aishell.tgz

|

||||

out=download/data_aishell.tgz

|

||||

@@ -1,8 +0,0 @@

|

||||

https://us.openslr.org/resources/62/aidatatang_200zh.tgz

|

||||

out=download/aidatatang_200zh.tgz

|

||||

https://us.openslr.org/resources/68/train_set.tar.gz

|

||||

out=download/magicdata.tgz

|

||||

https://us.openslr.org/resources/93/data_aishell3.tgz

|

||||

out=download/aishell3.tgz

|

||||

https://us.openslr.org/resources/33/data_aishell.tgz

|

||||

out=download/data_aishell.tgz

|

||||

@@ -1,4 +0,0 @@

|

||||

0c0ace77fe8ee77db8d7542d6eb0b7ddf09b1bfb880eb93a7fbdbf4611e9984b /datasets/download/aidatatang_200zh.tgz

|

||||

be2507d431ad59419ec871e60674caedb2b585f84ffa01fe359784686db0e0cc /datasets/download/aishell3.tgz

|

||||

a4a0313cde0a933e0e01a451f77de0a23d6c942f4694af5bb7f40b9dc38143fe /datasets/download/data_aishell.tgz

|

||||

1d2647c614b74048cfe16492570cc5146d800afdc07483a43b31809772632143 /datasets/download/magicdata.tgz

|

||||

@@ -1,8 +0,0 @@

|

||||

https://www.openslr.org/resources/62/aidatatang_200zh.tgz

|

||||

out=download/aidatatang_200zh.tgz

|

||||

https://www.openslr.org/resources/68/train_set.tar.gz

|

||||

out=download/magicdata.tgz

|

||||

https://www.openslr.org/resources/93/data_aishell3.tgz

|

||||

out=download/aishell3.tgz

|

||||

https://www.openslr.org/resources/33/data_aishell.tgz

|

||||

out=download/data_aishell.tgz

|

||||

@@ -1,8 +0,0 @@

|

||||

#!/usr/bin/env bash

|

||||

|

||||

set -Eeuo pipefail

|

||||

|

||||

aria2c -x 10 --disable-ipv6 --input-file /workspace/datasets_download/${DATASET_MIRROR}.txt --dir /datasets --continue

|

||||

|

||||

echo "Verifying sha256sum..."

|

||||

parallel --will-cite -a /workspace/datasets_download/datasets.sha256sum "echo -n {} | sha256sum -c"

|

||||

@@ -1,29 +0,0 @@

|

||||

#!/usr/bin/env bash

|

||||

|

||||

set -Eeuo pipefail

|

||||

|

||||

mkdir -p /datasets/aidatatang_200zh

|

||||

if [ -z "$(ls -A /datasets/aidatatang_200zh)" ] ; then

|

||||

tar xvz --directory /datasets/ -f /datasets/download/aidatatang_200zh.tgz --exclude 'aidatatang_200zh/corpus/dev/*' --exclude 'aidatatang_200zh/corpus/test/*'

|

||||

cd /datasets/aidatatang_200zh/corpus/train/

|

||||

cat *.tar.gz | tar zxvf - -i

|

||||

rm -f *.tar.gz

|

||||

fi

|

||||

|

||||

mkdir -p /datasets/magicdata

|

||||

if [ -z "$(ls -A /datasets/magicdata)" ] ; then

|

||||

tar xvz --directory /datasets/magicdata -f /datasets/download/magicdata.tgz train/

|

||||

fi

|

||||

|

||||

mkdir -p /datasets/aishell3

|

||||

if [ -z "$(ls -A /datasets/aishell3)" ] ; then

|

||||

tar xvz --directory /datasets/aishell3 -f /datasets/download/aishell3.tgz train/

|

||||

fi

|

||||

|

||||

mkdir -p /datasets/data_aishell

|

||||

if [ -z "$(ls -A /datasets/data_aishell)" ] ; then

|

||||

tar xvz --directory /datasets/ -f /datasets/download/data_aishell.tgz

|

||||

cd /datasets/data_aishell/wav/

|

||||

cat *.tar.gz | tar zxvf - -i --exclude 'dev/*' --exclude 'test/*'

|

||||

rm -f *.tar.gz

|

||||

fi

|

||||

@@ -1,5 +1,5 @@

|

||||

from pathlib import Path

|

||||

from control.toolbox import Toolbox

|

||||

from toolbox import Toolbox

|

||||

from utils.argutils import print_args

|

||||

from utils.modelutils import check_model_paths

|

||||

import argparse

|

||||

@@ -17,15 +17,15 @@ if __name__ == '__main__':

|

||||

"supported datasets.", default=None)

|

||||

parser.add_argument("-vc", "--vc_mode", action="store_true",

|

||||

help="Voice Conversion Mode(PPG based)")

|

||||

parser.add_argument("-e", "--enc_models_dir", type=Path, default=f"data{os.sep}ckpt{os.sep}encoder",

|

||||

parser.add_argument("-e", "--enc_models_dir", type=Path, default="encoder/saved_models",

|

||||

help="Directory containing saved encoder models")

|

||||

parser.add_argument("-s", "--syn_models_dir", type=Path, default=f"data{os.sep}ckpt{os.sep}synthesizer",

|

||||

parser.add_argument("-s", "--syn_models_dir", type=Path, default="synthesizer/saved_models",

|

||||

help="Directory containing saved synthesizer models")

|

||||

parser.add_argument("-v", "--voc_models_dir", type=Path, default=f"data{os.sep}ckpt{os.sep}vocoder",

|

||||

parser.add_argument("-v", "--voc_models_dir", type=Path, default="vocoder/saved_models",

|

||||

help="Directory containing saved vocoder models")

|

||||

parser.add_argument("-ex", "--extractor_models_dir", type=Path, default=f"data{os.sep}ckpt{os.sep}ppg_extractor",

|

||||

parser.add_argument("-ex", "--extractor_models_dir", type=Path, default="ppg_extractor/saved_models",

|

||||

help="Directory containing saved extrator models")

|

||||

parser.add_argument("-cv", "--convertor_models_dir", type=Path, default=f"data{os.sep}ckpt{os.sep}ppg2mel",

|

||||

parser.add_argument("-cv", "--convertor_models_dir", type=Path, default="ppg2mel/saved_models",

|

||||

help="Directory containing saved convert models")

|

||||

parser.add_argument("--cpu", action="store_true", help=\

|

||||

"If True, processing is done on CPU, even when a GPU is available.")

|

||||

|

||||

@@ -1,23 +0,0 @@

|

||||

version: '3.8'

|

||||

|

||||

services:

|

||||

server:

|

||||

image: mockingbird:latest

|

||||

build: .

|

||||

volumes:

|

||||

- ./datasets:/datasets

|

||||

- ./synthesizer/saved_models:/workspace/synthesizer/saved_models

|

||||

environment:

|

||||

- DATASET_MIRROR=US

|

||||

- FORCE_RETRAIN=false

|

||||

- TRAIN_DATASETS=aidatatang_200zh magicdata aishell3 data_aishell

|

||||

- TRAIN_SKIP_EXISTING=true

|

||||

ports:

|

||||

- 8080:8080

|

||||

deploy:

|

||||

resources:

|

||||

reservations:

|

||||

devices:

|

||||

- driver: nvidia

|

||||

device_ids: [ '0' ]

|

||||

capabilities: [ gpu ]

|

||||

@@ -1,17 +0,0 @@

|

||||

#!/usr/bin/env bash

|

||||

|

||||

if [ -z "$(ls -A /workspace/synthesizer/saved_models)" ] || [ "$FORCE_RETRAIN" = true ] ; then

|

||||

/workspace/datasets_download/download.sh

|

||||

/workspace/datasets_download/extract.sh

|

||||

for DATASET in ${TRAIN_DATASETS}

|

||||

do

|

||||

if [ "$TRAIN_SKIP_EXISTING" = true ] ; then

|

||||

python pre.py /datasets -d ${DATASET} -n $(nproc) --skip_existing

|

||||

else

|

||||

python pre.py /datasets -d ${DATASET} -n $(nproc)

|

||||

fi

|

||||

done

|

||||

python synthesizer_train.py mandarin /datasets/SV2TTS/synthesizer

|

||||

fi

|

||||

|

||||

python web.py

|

||||

@@ -1,5 +1,5 @@

|

||||

from scipy.ndimage.morphology import binary_dilation

|

||||

from models.encoder.params_data import *

|

||||

from encoder.params_data import *

|

||||

from pathlib import Path

|

||||

from typing import Optional, Union

|

||||

from warnings import warn

|

||||

@@ -39,7 +39,7 @@ def preprocess_wav(fpath_or_wav: Union[str, Path, np.ndarray],

|

||||

|

||||

# Resample the wav if needed

|

||||

if source_sr is not None and source_sr != sampling_rate:

|

||||

wav = librosa.resample(wav, orig_sr = source_sr, target_sr = sampling_rate)

|

||||

wav = librosa.resample(wav, source_sr, sampling_rate)

|

||||

|

||||

# Apply the preprocessing: normalize volume and shorten long silences

|

||||

if normalize:

|

||||

@@ -99,7 +99,7 @@ def trim_long_silences(wav):

|

||||

return ret[width - 1:] / width

|

||||

|

||||

audio_mask = moving_average(voice_flags, vad_moving_average_width)

|

||||

audio_mask = np.round(audio_mask).astype(bool)

|

||||

audio_mask = np.round(audio_mask).astype(np.bool)

|

||||

|

||||

# Dilate the voiced regions

|

||||

audio_mask = binary_dilation(audio_mask, np.ones(vad_max_silence_length + 1))

|

||||

2

encoder/data_objects/__init__.py

Normal file

@@ -0,0 +1,2 @@

|

||||

from encoder.data_objects.speaker_verification_dataset import SpeakerVerificationDataset

|

||||

from encoder.data_objects.speaker_verification_dataset import SpeakerVerificationDataLoader

|

||||

@@ -1,5 +1,5 @@

|

||||

from models.encoder.data_objects.random_cycler import RandomCycler

|

||||

from models.encoder.data_objects.utterance import Utterance

|

||||

from encoder.data_objects.random_cycler import RandomCycler

|

||||

from encoder.data_objects.utterance import Utterance

|

||||

from pathlib import Path

|

||||

|

||||

# Contains the set of utterances of a single speaker

|

||||

@@ -1,6 +1,6 @@

|

||||

import numpy as np

|

||||

from typing import List

|

||||

from models.encoder.data_objects.speaker import Speaker

|

||||

from encoder.data_objects.speaker import Speaker

|

||||

|

||||

class SpeakerBatch:

|

||||

def __init__(self, speakers: List[Speaker], utterances_per_speaker: int, n_frames: int):

|

||||

@@ -1,7 +1,7 @@

|

||||

from models.encoder.data_objects.random_cycler import RandomCycler

|

||||

from models.encoder.data_objects.speaker_batch import SpeakerBatch

|

||||

from models.encoder.data_objects.speaker import Speaker

|

||||

from models.encoder.params_data import partials_n_frames

|

||||

from encoder.data_objects.random_cycler import RandomCycler

|

||||

from encoder.data_objects.speaker_batch import SpeakerBatch

|

||||

from encoder.data_objects.speaker import Speaker

|

||||

from encoder.params_data import partials_n_frames

|

||||

from torch.utils.data import Dataset, DataLoader

|

||||

from pathlib import Path

|

||||

|

||||

@@ -1,8 +1,8 @@

|

||||

from models.encoder.params_data import *

|

||||

from models.encoder.model import SpeakerEncoder

|

||||

from models.encoder.audio import preprocess_wav # We want to expose this function from here

|

||||

from encoder.params_data import *

|

||||

from encoder.model import SpeakerEncoder

|

||||

from encoder.audio import preprocess_wav # We want to expose this function from here

|

||||

from matplotlib import cm

|

||||

from models.encoder import audio

|

||||

from encoder import audio

|

||||

from pathlib import Path

|

||||

import matplotlib.pyplot as plt

|

||||

import numpy as np

|

||||

@@ -1,5 +1,5 @@

|

||||

from models.encoder.params_model import *

|

||||

from models.encoder.params_data import *

|

||||

from encoder.params_model import *

|

||||

from encoder.params_data import *

|

||||

from scipy.interpolate import interp1d

|

||||

from sklearn.metrics import roc_curve

|

||||

from torch.nn.utils import clip_grad_norm_

|

||||

@@ -1,8 +1,8 @@

|

||||

from multiprocess.pool import ThreadPool

|

||||

from models.encoder.params_data import *

|

||||

from models.encoder.config import librispeech_datasets, anglophone_nationalites

|

||||

from encoder.params_data import *

|

||||

from encoder.config import librispeech_datasets, anglophone_nationalites

|

||||

from datetime import datetime

|

||||

from models.encoder import audio

|

||||

from encoder import audio

|

||||

from pathlib import Path

|

||||

from tqdm import tqdm

|

||||

import numpy as np

|

||||

@@ -22,7 +22,7 @@ class DatasetLog:

|

||||

self._log_params()

|

||||

|

||||

def _log_params(self):

|

||||

from models.encoder import params_data

|

||||

from encoder import params_data

|

||||

self.write_line("Parameter values:")

|

||||

for param_name in (p for p in dir(params_data) if not p.startswith("__")):

|

||||

value = getattr(params_data, param_name)

|

||||

@@ -1,7 +1,7 @@

|

||||

from models.encoder.visualizations import Visualizations

|

||||

from models.encoder.data_objects import SpeakerVerificationDataLoader, SpeakerVerificationDataset

|

||||

from models.encoder.params_model import *

|

||||

from models.encoder.model import SpeakerEncoder

|

||||

from encoder.visualizations import Visualizations

|

||||

from encoder.data_objects import SpeakerVerificationDataLoader, SpeakerVerificationDataset

|

||||

from encoder.params_model import *

|

||||

from encoder.model import SpeakerEncoder

|

||||

from utils.profiler import Profiler

|

||||

from pathlib import Path

|

||||

import torch

|

||||

@@ -1,4 +1,4 @@

|

||||

from models.encoder.data_objects.speaker_verification_dataset import SpeakerVerificationDataset

|

||||

from encoder.data_objects.speaker_verification_dataset import SpeakerVerificationDataset

|

||||

from datetime import datetime

|

||||

from time import perf_counter as timer

|

||||

import matplotlib.pyplot as plt

|

||||

@@ -21,7 +21,7 @@ colormap = np.array([

|

||||

[33, 0, 127],

|

||||

[0, 0, 0],

|

||||

[183, 183, 183],

|

||||

], dtype=float) / 255

|

||||

], dtype=np.float) / 255

|

||||

|

||||

|

||||

class Visualizations:

|

||||

@@ -65,8 +65,8 @@ class Visualizations:

|

||||

def log_params(self):

|

||||

if self.disabled:

|

||||

return

|

||||

from models.encoder import params_data

|

||||

from models.encoder import params_model

|

||||

from encoder import params_data

|

||||

from encoder import params_model

|

||||

param_string = "<b>Model parameters</b>:<br>"

|

||||

for param_name in (p for p in dir(params_model) if not p.startswith("__")):

|

||||

value = getattr(params_model, param_name)

|

||||

@@ -1,10 +1,7 @@

|

||||

import argparse

|

||||

from pathlib import Path

|

||||

|

||||

from models.encoder.preprocess import (preprocess_aidatatang_200zh,

|

||||

preprocess_librispeech, preprocess_voxceleb1,

|

||||

preprocess_voxceleb2)

|

||||

from encoder.preprocess import preprocess_librispeech, preprocess_voxceleb1, preprocess_voxceleb2, preprocess_aidatatang_200zh

|

||||

from utils.argutils import print_args

|

||||

from pathlib import Path

|

||||

import argparse

|

||||

|

||||

if __name__ == "__main__":

|

||||

class MyFormatter(argparse.ArgumentDefaultsHelpFormatter, argparse.RawDescriptionHelpFormatter):

|

||||

@@ -1,5 +1,5 @@

|

||||

from utils.argutils import print_args

|

||||

from models.encoder.train import train

|

||||

from encoder.train import train

|

||||

from pathlib import Path

|

||||

import argparse

|

||||

|

||||

14

gen_voice.py

@@ -1,15 +1,23 @@

|

||||

from models.synthesizer.inference import Synthesizer

|

||||

from models.encoder import inference as encoder

|

||||

from models.vocoder.hifigan import inference as gan_vocoder

|

||||

from encoder.params_model import model_embedding_size as speaker_embedding_size

|

||||

from utils.argutils import print_args

|

||||

from utils.modelutils import check_model_paths

|

||||

from synthesizer.inference import Synthesizer

|

||||

from encoder import inference as encoder

|

||||

from vocoder.wavernn import inference as rnn_vocoder

|

||||

from vocoder.hifigan import inference as gan_vocoder

|

||||

from pathlib import Path

|

||||

import numpy as np

|

||||

import soundfile as sf

|

||||

import librosa

|

||||

import argparse

|

||||

import torch

|

||||

import sys

|

||||

import os

|

||||

import re

|

||||

import cn2an

|

||||

import glob

|

||||

|

||||

from audioread.exceptions import NoBackendError

|

||||

vocoder = gan_vocoder

|

||||

|

||||

def gen_one_wav(synthesizer, in_fpath, embed, texts, file_name, seq):

|

||||

|

||||

@@ -2,22 +2,22 @@ from pydantic import BaseModel, Field

|

||||

import os

|

||||

from pathlib import Path

|

||||

from enum import Enum

|

||||

from models.encoder import inference as encoder

|

||||

from encoder import inference as encoder

|

||||

import librosa

|

||||

from scipy.io.wavfile import write

|

||||

import re

|

||||

import numpy as np

|

||||

from control.mkgui.base.components.types import FileContent

|

||||

from models.vocoder.hifigan import inference as gan_vocoder

|

||||

from models.synthesizer.inference import Synthesizer

|

||||

from mkgui.base.components.types import FileContent

|

||||

from vocoder.hifigan import inference as gan_vocoder

|

||||

from synthesizer.inference import Synthesizer

|

||||

from typing import Any, Tuple

|

||||

import matplotlib.pyplot as plt

|

||||

|

||||

# Constants

|

||||

AUDIO_SAMPLES_DIR = f"data{os.sep}samples{os.sep}"

|

||||

SYN_MODELS_DIRT = f"data{os.sep}ckpt{os.sep}synthesizer"

|

||||

ENC_MODELS_DIRT = f"data{os.sep}ckpt{os.sep}encoder"

|

||||

VOC_MODELS_DIRT = f"data{os.sep}ckpt{os.sep}vocoder"

|

||||

AUDIO_SAMPLES_DIR = f"samples{os.sep}"

|

||||

SYN_MODELS_DIRT = f"synthesizer{os.sep}saved_models"

|

||||

ENC_MODELS_DIRT = f"encoder{os.sep}saved_models"

|

||||

VOC_MODELS_DIRT = f"vocoder{os.sep}saved_models"

|

||||

TEMP_SOURCE_AUDIO = f"wavs{os.sep}temp_source.wav"

|

||||

TEMP_RESULT_AUDIO = f"wavs{os.sep}temp_result.wav"

|

||||

if not os.path.isdir("wavs"):

|

||||

@@ -31,7 +31,7 @@ if os.path.isdir(SYN_MODELS_DIRT):

|

||||

synthesizers = Enum('synthesizers', list((file.name, file) for file in Path(SYN_MODELS_DIRT).glob("**/*.pt")))

|

||||

print("Loaded synthesizer models: " + str(len(synthesizers)))

|

||||

else:

|

||||

raise Exception(f"Model folder {SYN_MODELS_DIRT} doesn't exist. 请将模型文件位置移动到上述位置中进行重试!")

|

||||

raise Exception(f"Model folder {SYN_MODELS_DIRT} doesn't exist.")

|

||||

|

||||

if os.path.isdir(ENC_MODELS_DIRT):

|

||||

encoders = Enum('encoders', list((file.name, file) for file in Path(ENC_MODELS_DIRT).glob("**/*.pt")))

|

||||

@@ -46,16 +46,15 @@ else:

|

||||

raise Exception(f"Model folder {VOC_MODELS_DIRT} doesn't exist.")

|

||||

|

||||

|

||||

|

||||

class Input(BaseModel):

|

||||

message: str = Field(

|

||||

..., example="欢迎使用工具箱, 现已支持中文输入!", alias="文本内容"

|

||||

)

|

||||

local_audio_file: audio_input_selection = Field(

|

||||

..., alias="选择语音(本地wav)",

|

||||

..., alias="输入语音(本地wav)",

|

||||

description="选择本地语音文件."

|

||||

)

|

||||

record_audio_file: FileContent = Field(default=None, alias="录制语音",

|

||||