mirror of https://github.com/xhongc/music-tag-web.git

synced 2026-05-04 22:14:24 +08:00

feature: first commit

README.md

@@ -1,50 +0,0 @@

|

||||

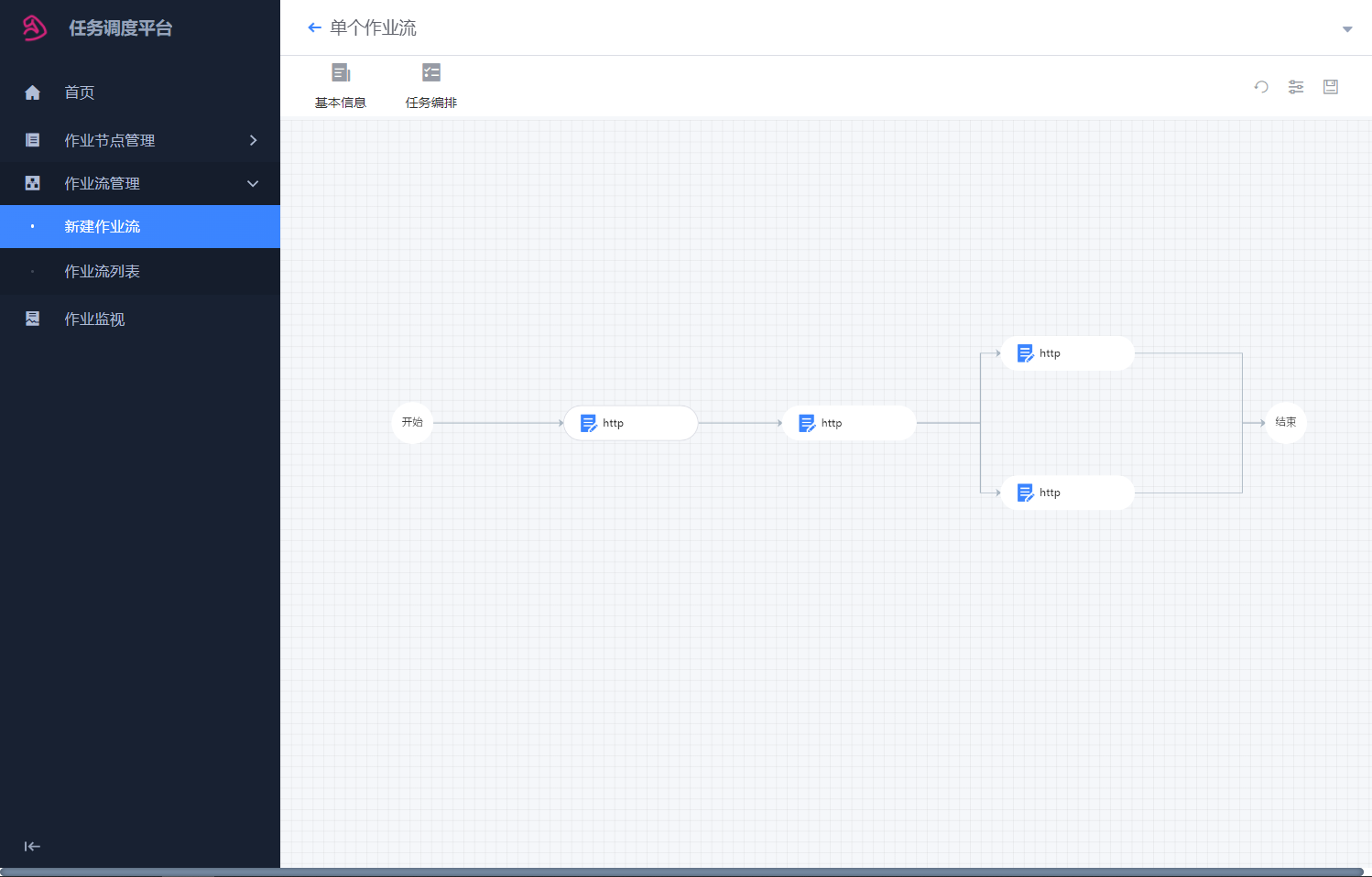

# 任务调度平台

|

||||

|

||||

|

||||

## todo list

|

||||

- [ ] 变量管理-模型设计

|

||||

- [ ] 变量管理-crud接口

|

||||

- [ ] 变量管理-前端页面

|

||||

- [ ] 变量管理-接口对接

|

||||

- [ ] 变量管理-变量集成到任务中,调整引擎中节点变量传递

|

||||

-

|

||||

- [ ] 任务管理-新增删除查看

|

||||

- [ ] 任务管理-考虑运行中的任务,可不可修改

|

||||

|

||||

- [ ] 首页-聚合数据接口,高纬度展示图表

|

||||

|

||||

- [ ] 节点管理- 区分标准节点-和节点模版

|

||||

- [ ] 节点管理- 编辑时的实时预览功能

|

||||

- [ ] 节点管理- 自定节点组建后端代码逻辑的上传,和持久化

|

||||

- [ ] 节点管理- 返回值规范未定义

|

||||

- [ ] 节点管理- 克隆/导入/导出 优先级降低

|

||||

- [ ] 节点管理- Table字段梳理,前端冗余代码删减

|

||||

- [ ] 节点管理- 搜索过滤功能

|

||||

- [ ] 节点管理- 新建作业/导入作业 统一移动到作业列表页

|

||||

|

||||

- [ ] 作业流管理- 新建作业流/导入作业流业 统一移动到作业流列表页

|

||||

- [ ] 作业流管理- 分类接口,作业流关联

|

||||

- [ ] 作业流管理- 克隆/导入/导出 优先级降低

|

||||

- [ ] 作业流管理- 跳转到执行历史

|

||||

- [ ] 作业流管理- 删除调度方式,移至任务里

|

||||

- [ ] 作业流管理- 大流程的作业流创建失败 bug

|

||||

|

||||

- [ ] 任务管理- 新建任务

|

||||

- [ ] 任务管理- 执行任务

|

||||

- [ ] 任务管理- 定时任务和周期任务

|

||||

|

||||

- [ ] 变量管理- 模型设计

|

||||

- [ ] 变量管理- 全局变量,局部变量,可变变量,常量

|

||||

- [ ] 变量管理- 集成进任务里

|

||||

|

||||

- [ ] 作业监视- 暂停,停止,跳过,忽略,等人工干预操作

|

||||

- [ ] 作业监视- 节点重试功能

|

||||

- [ ] 作业监视- Table字段梳理,前端冗余代码删减

|

||||

- [ ] 作业监视- 搜索过滤功能

|

||||

- [ ] 作业监视- 失败状态保存,失败状态判断

|

||||

|

||||

- [ ] 告警管理- 规划中

|

||||

- [ ] 审计管理- 规划中

|

||||

## install tips

sudo apt-get install libmysqlclient-dev python3-dev

@@ -1,3 +0,0 @@

from django.contrib import admin

# Register your models here.

@@ -1,6 +0,0 @@

from django.apps import AppConfig


class FlowConfig(AppConfig):
    default_auto_field = 'django.db.models.BigAutoField'
    name = 'applications.flow'

@@ -1,70 +0,0 @@

FAIL_OFFSET_UNIT_CHOICE = (
    ("seconds", "秒"),  # seconds
    ("hours", "时"),  # hours
    ("minutes", "分"),  # minutes
)

node_type = (
    (0, "开始节点"),  # start node
    (1, "结束节点"),  # end node
    (2, "作业节点"),  # job node
    (3, "子流程"),  # sub-process
    (4, "条件分支"),  # conditional branch
    (5, "汇聚网关"),  # converge gateway
)


class StateType(object):
    CREATED = "CREATED"
    READY = "READY"
    RUNNING = "RUNNING"
    SUSPENDED = "SUSPENDED"
    BLOCKED = "BLOCKED"
    FINISHED = "FINISHED"
    FAILED = "FAILED"
    REVOKED = "REVOKED"


PIPELINE_STATE_TO_FLOW_STATE = {
    StateType.READY: "wait",
    StateType.RUNNING: "run",
    StateType.FAILED: "fail",
    StateType.FINISHED: "success",
    StateType.SUSPENDED: "pause",
    StateType.REVOKED: "cancel",
    StateType.BLOCKED: "stop",
    StateType.CREATED: "positive",
}


class NodeTemplateType:
    # Empty node template
    EmptyTemplate = "0"
    # Node template with content
    ContentTemplate = "2"


# Frontend form spec for the HTTP request node's inputs
# (labels: request URL, request method, header, body, timeout)
a = [
    {"key": "url", "type": "textarea", "label": "请求地址:"},
    {"key": "method", "type": "select", "label": "请求类型:", "choices": [{"label": "GET", "value": "get"}]},
    {"key": "header", "type": "dict_map", "label": "Header"},
    {"key": "body", "type": "textarea", "label": "Body:"},
    {"key": "timeout", "type": "number", "label": "超时时间:"}
]
# Default input values for that form, plus a check_point entry
i = {
    "url": "",
    "method": "get",
    "header": [
        {
            "key": "",
            "value": ""
        }],
    "body": "{}",
    "timeout": 60,
    "check_point": {
        "key": "",
        "condition": "",
        "values": ""
    }
}
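The run serializers in this commit translate a bamboo-engine pipeline state into the frontend flow state through `PIPELINE_STATE_TO_FLOW_STATE`: an unrecognized state maps to `None` (via `dict.get`), and `"error"` is returned when the state record cannot be read at all. A minimal standalone sketch of that lookup follows; `to_flow_state` is a hypothetical helper, and `states` is assumed to stand in for the `.data` of the engine's `get_pipeline_states` result, keyed by `root_id`. Unlike the serializer, it catches only `KeyError`/`TypeError` rather than every `Exception`.

```python
from typing import Any, Dict, Optional


class StateType:
    # Copied from applications.flow.constants so the sketch runs standalone
    CREATED = "CREATED"
    READY = "READY"
    RUNNING = "RUNNING"
    SUSPENDED = "SUSPENDED"
    BLOCKED = "BLOCKED"
    FINISHED = "FINISHED"
    FAILED = "FAILED"
    REVOKED = "REVOKED"


PIPELINE_STATE_TO_FLOW_STATE = {
    StateType.READY: "wait",
    StateType.RUNNING: "run",
    StateType.FAILED: "fail",
    StateType.FINISHED: "success",
    StateType.SUSPENDED: "pause",
    StateType.REVOKED: "cancel",
    StateType.BLOCKED: "stop",
    StateType.CREATED: "positive",
}


def to_flow_state(states: Dict[str, Dict[str, Any]], root_id: str) -> Optional[str]:
    """Hypothetical helper mirroring get_state() in the run serializers."""
    try:
        # Unknown state strings map to None, exactly as dict.get() does
        return PIPELINE_STATE_TO_FLOW_STATE.get(states[root_id]["state"])
    except (KeyError, TypeError):
        # Missing root_id, missing "state" key or a non-dict record
        return "error"


print(to_flow_state({"p1": {"state": "FINISHED"}}, "p1"))  # success
print(to_flow_state({}, "p1"))  # error
```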

@@ -1,5 +0,0 @@

import django_filters as filters


class NodeTemplateFilter(filters.FilterSet):
    template_type = filters.CharFilter(lookup_expr="iexact")

@@ -1,112 +0,0 @@

# Generated by Django 2.2.6 on 2022-02-09 03:29

import datetime
from django.db import migrations, models
import django.db.models.deletion
import django_mysql.models


class Migration(migrations.Migration):

    initial = True

    dependencies = [
    ]

    operations = [
        migrations.CreateModel(
            name='Category',
            fields=[
                ('id', models.AutoField(auto_created=True, primary_key=True, serialize=False, verbose_name='ID')),
                ('name', models.CharField(max_length=255, verbose_name='分类名称')),
            ],
        ),
        migrations.CreateModel(
            name='Process',
            fields=[
                ('id', models.AutoField(auto_created=True, primary_key=True, serialize=False, verbose_name='ID')),
                ('name', models.CharField(max_length=255, verbose_name='作业名称')),
                ('description', models.CharField(blank=True, max_length=255, null=True, verbose_name='作业描述')),
                ('run_type', models.CharField(max_length=32, verbose_name='调度类型')),
                ('total_run_count', models.PositiveIntegerField(default=0, verbose_name='执行次数')),
                ('gateways', django_mysql.models.JSONField(default=dict, verbose_name='网关信息')),
                ('constants', django_mysql.models.JSONField(default=dict, verbose_name='内部变量信息')),
                ('dag', django_mysql.models.JSONField(default=dict, verbose_name='DAG')),
                ('create_by', models.CharField(max_length=64, null=True, verbose_name='创建者')),
                ('create_time', models.DateTimeField(default=datetime.datetime.now, verbose_name='创建时间')),
                ('update_time', models.DateTimeField(auto_now=True, verbose_name='修改时间')),
                ('update_by', models.CharField(max_length=64, null=True, verbose_name='修改人')),
                ('category', models.ManyToManyField(to='flow.Category')),
            ],
        ),
        migrations.CreateModel(
            name='ProcessRun',
            fields=[
                ('id', models.AutoField(auto_created=True, primary_key=True, serialize=False, verbose_name='ID')),
                ('name', models.CharField(max_length=255, verbose_name='作业名称')),
                ('description', models.CharField(blank=True, max_length=255, null=True, verbose_name='作业描述')),
                ('run_type', models.CharField(max_length=32, verbose_name='调度类型')),
                ('gateways', django_mysql.models.JSONField(default=dict, verbose_name='网关信息')),
                ('constants', django_mysql.models.JSONField(default=dict, verbose_name='内部变量信息')),
                ('dag', django_mysql.models.JSONField(default=dict, verbose_name='DAG')),
                ('create_by', models.CharField(max_length=64, null=True, verbose_name='创建者')),
                ('create_time', models.DateTimeField(default=datetime.datetime.now, verbose_name='创建时间')),
                ('update_time', models.DateTimeField(auto_now=True, verbose_name='修改时间')),
                ('update_by', models.CharField(max_length=64, null=True, verbose_name='修改人')),
                ('root_id', models.CharField(max_length=255, verbose_name='根节点uuid')),
                ('process', models.ForeignKey(db_constraint=False, null=True, on_delete=django.db.models.deletion.SET_NULL, related_name='run', to='flow.Process')),
            ],
        ),
        migrations.CreateModel(
            name='NodeRun',
            fields=[
                ('id', models.AutoField(auto_created=True, primary_key=True, serialize=False, verbose_name='ID')),
                ('name', models.CharField(max_length=255, verbose_name='节点名称')),
                ('uuid', models.CharField(max_length=255, unique=True, verbose_name='UUID')),
                ('description', models.CharField(blank=True, max_length=255, null=True, verbose_name='节点描述')),
                ('show', models.BooleanField(default=True, verbose_name='是否显示')),
                ('top', models.IntegerField(default=300)),
                ('left', models.IntegerField(default=300)),
                ('ico', models.CharField(blank=True, max_length=64, null=True, verbose_name='icon')),
                ('fail_retry_count', models.IntegerField(default=0, verbose_name='失败重试次数')),
                ('fail_offset', models.IntegerField(default=0, verbose_name='失败重试间隔')),
                ('fail_offset_unit', models.CharField(choices=[('seconds', '秒'), ('hours', '时'), ('minutes', '分')], max_length=32, verbose_name='重试间隔单位')),
                ('node_type', models.IntegerField(default=2)),
                ('component_code', models.CharField(max_length=255, verbose_name='插件名称')),
                ('is_skip_fail', models.BooleanField(default=False, verbose_name='忽略失败')),
                ('is_timeout_alarm', models.BooleanField(default=False, verbose_name='超时告警')),
                ('inputs', django_mysql.models.JSONField(default=dict, verbose_name='输入参数')),
                ('outputs', django_mysql.models.JSONField(default=dict, verbose_name='输出参数')),
                ('process_run', models.ForeignKey(db_constraint=False, null=True, on_delete=django.db.models.deletion.SET_NULL, related_name='nodes_run', to='flow.ProcessRun')),
            ],
            options={
                'abstract': False,
            },
        ),
        migrations.CreateModel(
            name='Node',
            fields=[
                ('id', models.AutoField(auto_created=True, primary_key=True, serialize=False, verbose_name='ID')),
                ('name', models.CharField(max_length=255, verbose_name='节点名称')),
                ('uuid', models.CharField(max_length=255, unique=True, verbose_name='UUID')),
                ('description', models.CharField(blank=True, max_length=255, null=True, verbose_name='节点描述')),
                ('show', models.BooleanField(default=True, verbose_name='是否显示')),
                ('top', models.IntegerField(default=300)),
                ('left', models.IntegerField(default=300)),
                ('ico', models.CharField(blank=True, max_length=64, null=True, verbose_name='icon')),
                ('fail_retry_count', models.IntegerField(default=0, verbose_name='失败重试次数')),
                ('fail_offset', models.IntegerField(default=0, verbose_name='失败重试间隔')),
                ('fail_offset_unit', models.CharField(choices=[('seconds', '秒'), ('hours', '时'), ('minutes', '分')], max_length=32, verbose_name='重试间隔单位')),
                ('node_type', models.IntegerField(default=2)),
                ('component_code', models.CharField(max_length=255, verbose_name='插件名称')),
                ('is_skip_fail', models.BooleanField(default=False, verbose_name='忽略失败')),
                ('is_timeout_alarm', models.BooleanField(default=False, verbose_name='超时告警')),
                ('inputs', django_mysql.models.JSONField(default=dict, verbose_name='输入参数')),
                ('outputs', django_mysql.models.JSONField(default=dict, verbose_name='输出参数')),
                ('process', models.ForeignKey(db_constraint=False, null=True, on_delete=django.db.models.deletion.SET_NULL, related_name='nodes', to='flow.Process')),
            ],
            options={
                'abstract': False,
            },
        ),
    ]

@@ -1,40 +0,0 @@

# Generated by Django 2.2.6 on 2022-02-10 14:21

from django.db import migrations, models
import django_mysql.models


class Migration(migrations.Migration):

    dependencies = [
        ('flow', '0001_initial'),
    ]

    operations = [
        migrations.CreateModel(
            name='NodeTemplate',
            fields=[
                ('id', models.AutoField(auto_created=True, primary_key=True, serialize=False, verbose_name='ID')),
                ('name', models.CharField(max_length=255, verbose_name='节点名称')),
                ('uuid', models.CharField(max_length=255, unique=True, verbose_name='UUID')),
                ('description', models.CharField(blank=True, max_length=255, null=True, verbose_name='节点描述')),
                ('show', models.BooleanField(default=True, verbose_name='是否显示')),
                ('top', models.IntegerField(default=300)),
                ('left', models.IntegerField(default=300)),
                ('ico', models.CharField(blank=True, max_length=64, null=True, verbose_name='icon')),
                ('fail_retry_count', models.IntegerField(default=0, verbose_name='失败重试次数')),
                ('fail_offset', models.IntegerField(default=0, verbose_name='失败重试间隔')),
                ('fail_offset_unit', models.CharField(choices=[('seconds', '秒'), ('hours', '时'), ('minutes', '分')], max_length=32, verbose_name='重试间隔单位')),
                ('node_type', models.IntegerField(default=2)),
                ('component_code', models.CharField(max_length=255, verbose_name='插件名称')),
                ('is_skip_fail', models.BooleanField(default=False, verbose_name='忽略失败')),
                ('is_timeout_alarm', models.BooleanField(default=False, verbose_name='超时告警')),
                ('inputs', django_mysql.models.JSONField(default=dict, verbose_name='输入参数')),
                ('outputs', django_mysql.models.JSONField(default=dict, verbose_name='输出参数')),
                ('template_type', models.CharField(default='2', max_length=1, verbose_name='节点模板类型')),
            ],
            options={
                'abstract': False,
            },
        ),
    ]

@@ -1,28 +0,0 @@

# Generated by Django 2.2.6 on 2022-02-10 17:37

from django.db import migrations, models


class Migration(migrations.Migration):

    dependencies = [
        ('flow', '0002_nodetemplate'),
    ]

    operations = [
        migrations.AddField(
            model_name='node',
            name='content',
            field=models.IntegerField(default=0, verbose_name='模板id'),
        ),
        migrations.AddField(
            model_name='noderun',
            name='content',
            field=models.IntegerField(default=0, verbose_name='模板id'),
        ),
        migrations.AddField(
            model_name='nodetemplate',
            name='content',
            field=models.IntegerField(default=0, verbose_name='模板id'),
        ),
    ]

@@ -1,24 +0,0 @@

# Generated by Django 2.2.6 on 2022-02-26 12:02

from django.db import migrations
import django_mysql.models


class Migration(migrations.Migration):

    dependencies = [
        ('flow', '0003_auto_20220210_1737'),
    ]

    operations = [
        migrations.AddField(
            model_name='nodetemplate',
            name='inputs_component',
            field=django_mysql.models.JSONField(default=list, verbose_name='前端参数组件'),
        ),
        migrations.AddField(
            model_name='nodetemplate',
            name='outputs_component',
            field=django_mysql.models.JSONField(default=list, verbose_name='前端参数组件'),
        ),
    ]

@@ -1,62 +0,0 @@

# Generated by Django 2.2.6 on 2022-06-16 16:14

import datetime
from django.db import migrations, models
import django.db.models.deletion
import django_mysql.models


class Migration(migrations.Migration):

    dependencies = [
        ('flow', '0004_auto_20220226_1202'),
    ]

    operations = [
        migrations.CreateModel(
            name='SubProcessRun',
            fields=[
                ('id', models.AutoField(auto_created=True, primary_key=True, serialize=False, verbose_name='ID')),
                ('name', models.CharField(max_length=255, verbose_name='作业名称')),
                ('description', models.CharField(blank=True, max_length=255, null=True, verbose_name='作业描述')),
                ('run_type', models.CharField(max_length=32, verbose_name='调度类型')),
                ('gateways', django_mysql.models.JSONField(default=dict, verbose_name='网关信息')),
                ('constants', django_mysql.models.JSONField(default=dict, verbose_name='内部变量信息')),
                ('dag', django_mysql.models.JSONField(default=dict, verbose_name='DAG')),
                ('create_by', models.CharField(max_length=64, null=True, verbose_name='创建者')),
                ('create_time', models.DateTimeField(default=datetime.datetime.now, verbose_name='创建时间')),
                ('update_time', models.DateTimeField(auto_now=True, verbose_name='修改时间')),
                ('update_by', models.CharField(max_length=64, null=True, verbose_name='修改人')),
                ('root_id', models.CharField(max_length=255, verbose_name='根节点uuid')),
                ('process', models.ForeignKey(db_constraint=False, null=True, on_delete=django.db.models.deletion.SET_NULL, related_name='sub_run', to='flow.Process')),
                ('process_run', models.ForeignKey(db_constraint=False, null=True, on_delete=django.db.models.deletion.CASCADE, related_name='sub', to='flow.Process')),
            ],
        ),
        migrations.CreateModel(
            name='SubNodeRun',
            fields=[
                ('id', models.AutoField(auto_created=True, primary_key=True, serialize=False, verbose_name='ID')),
                ('name', models.CharField(max_length=255, verbose_name='节点名称')),
                ('uuid', models.CharField(max_length=255, unique=True, verbose_name='UUID')),
                ('description', models.CharField(blank=True, max_length=255, null=True, verbose_name='节点描述')),
                ('show', models.BooleanField(default=True, verbose_name='是否显示')),
                ('top', models.IntegerField(default=300)),
                ('left', models.IntegerField(default=300)),
                ('ico', models.CharField(blank=True, max_length=64, null=True, verbose_name='icon')),
                ('fail_retry_count', models.IntegerField(default=0, verbose_name='失败重试次数')),
                ('fail_offset', models.IntegerField(default=0, verbose_name='失败重试间隔')),
                ('fail_offset_unit', models.CharField(choices=[('seconds', '秒'), ('hours', '时'), ('minutes', '分')], max_length=32, verbose_name='重试间隔单位')),
                ('node_type', models.IntegerField(default=2)),
                ('component_code', models.CharField(max_length=255, verbose_name='插件名称')),
                ('is_skip_fail', models.BooleanField(default=False, verbose_name='忽略失败')),
                ('is_timeout_alarm', models.BooleanField(default=False, verbose_name='超时告警')),
                ('inputs', django_mysql.models.JSONField(default=dict, verbose_name='输入参数')),
                ('outputs', django_mysql.models.JSONField(default=dict, verbose_name='输出参数')),
                ('content', models.IntegerField(default=0, verbose_name='模板id')),
                ('subprocess_run', models.ForeignKey(db_constraint=False, null=True, on_delete=django.db.models.deletion.SET_NULL, related_name='sub_nodes_run', to='flow.SubProcessRun')),
            ],
            options={
                'abstract': False,
            },
        ),
    ]

@@ -1,24 +0,0 @@

# Generated by Django 2.2.6 on 2022-06-16 16:16

from django.db import migrations, models
import django.db.models.deletion


class Migration(migrations.Migration):

    dependencies = [
        ('flow', '0005_subnoderun_subprocessrun'),
    ]

    operations = [
        migrations.AlterField(
            model_name='noderun',
            name='process_run',
            field=models.ForeignKey(db_constraint=False, null=True, on_delete=django.db.models.deletion.CASCADE, related_name='nodes_run', to='flow.ProcessRun'),
        ),
        migrations.AlterField(
            model_name='subnoderun',
            name='subprocess_run',
            field=models.ForeignKey(db_constraint=False, null=True, on_delete=django.db.models.deletion.CASCADE, related_name='sub_nodes_run', to='flow.SubProcessRun'),
        ),
    ]

@@ -1,142 +0,0 @@

from datetime import datetime

from django.db import models
from django_mysql.models import JSONField

from applications.flow.constants import FAIL_OFFSET_UNIT_CHOICE, NodeTemplateType


class Category(models.Model):
    name = models.CharField("分类名称", max_length=255, blank=False, null=False)


class Process(models.Model):
    name = models.CharField("作业名称", max_length=255, blank=False, null=False)
    description = models.CharField("作业描述", max_length=255, blank=True, null=True)
    category = models.ManyToManyField(Category)
    run_type = models.CharField("调度类型", max_length=32)
    total_run_count = models.PositiveIntegerField("执行次数", default=0)
    gateways = JSONField("网关信息", default=dict)
    constants = JSONField("内部变量信息", default=dict)
    dag = JSONField("DAG", default=dict)

    create_by = models.CharField("创建者", max_length=64, null=True)
    create_time = models.DateTimeField("创建时间", default=datetime.now)
    update_time = models.DateTimeField("修改时间", auto_now=True)
    update_by = models.CharField("修改人", max_length=64, null=True)

    @property
    def clone_data(self):
        return {
            "name": self.name,
            "description": self.description,
            "run_type": self.run_type,
            "gateways": self.gateways,
            "constants": self.constants,
            "dag": self.dag,
        }


class BaseNode(models.Model):
    START_NODE = 0
    END_NODE = 1
    JOB_NODE = 2
    SUB_PROCESS_NODE = 3
    CONDITION_NODE = 4
    CONVERGE_NODE = 5
    PARALLEL_NODE = 6
    CONDITION_PARALLEL_NODE = 7
    name = models.CharField("节点名称", max_length=255, blank=False, null=False)
    uuid = models.CharField("UUID", max_length=255, unique=True)
    description = models.CharField("节点描述", max_length=255, blank=True, null=True)

    show = models.BooleanField("是否显示", default=True)
    top = models.IntegerField(default=300)
    left = models.IntegerField(default=300)
    ico = models.CharField("icon", max_length=64, blank=True, null=True)

    fail_retry_count = models.IntegerField("失败重试次数", default=0)
    fail_offset = models.IntegerField("失败重试间隔", default=0)
    fail_offset_unit = models.CharField("重试间隔单位", choices=FAIL_OFFSET_UNIT_CHOICE, max_length=32)
    # 0: start node, 1: end node, 2: job node, 3: another job flow (sub-process),
    # 4: branch, 5: converge, 6: parallel, 7: conditional parallel
    node_type = models.IntegerField(default=2)
    component_code = models.CharField("插件名称", max_length=255, blank=False, null=False)
    is_skip_fail = models.BooleanField("忽略失败", default=False)
    is_timeout_alarm = models.BooleanField("超时告警", default=False)

    inputs = JSONField("输入参数", default=dict)
    outputs = JSONField("输出参数", default=dict)
    # For a sub-process node, content is the process id; for a node template, it is the node id
    content = models.IntegerField("模板id", default=0)

    class Meta:
        abstract = True


class Node(BaseNode):
    process = models.ForeignKey(Process, on_delete=models.SET_NULL, null=True, db_constraint=False,
                                related_name="nodes")


class ProcessRun(models.Model):
    # new
    process = models.ForeignKey(Process, on_delete=models.SET_NULL, null=True, db_constraint=False,
                                related_name="run")

    name = models.CharField("作业名称", max_length=255, blank=False, null=False)
    description = models.CharField("作业描述", max_length=255, blank=True, null=True)
    run_type = models.CharField("调度类型", max_length=32)
    gateways = JSONField("网关信息", default=dict)
    constants = JSONField("内部变量信息", default=dict)
    dag = JSONField("DAG", default=dict)

    create_by = models.CharField("创建者", max_length=64, null=True)
    create_time = models.DateTimeField("创建时间", default=datetime.now)
    update_time = models.DateTimeField("修改时间", auto_now=True)
    update_by = models.CharField("修改人", max_length=64, null=True)

    root_id = models.CharField("根节点uuid", max_length=255)


class SubProcessRun(models.Model):
    process_run = models.ForeignKey(Process, on_delete=models.CASCADE, null=True, db_constraint=False,
                                    related_name="sub")
    process = models.ForeignKey(Process, on_delete=models.SET_NULL, null=True, db_constraint=False,
                                related_name="sub_run")
    name = models.CharField("作业名称", max_length=255, blank=False, null=False)
    description = models.CharField("作业描述", max_length=255, blank=True, null=True)
    run_type = models.CharField("调度类型", max_length=32)
    gateways = JSONField("网关信息", default=dict)
    constants = JSONField("内部变量信息", default=dict)
    dag = JSONField("DAG", default=dict)

    create_by = models.CharField("创建者", max_length=64, null=True)
    create_time = models.DateTimeField("创建时间", default=datetime.now)
    update_time = models.DateTimeField("修改时间", auto_now=True)
    update_by = models.CharField("修改人", max_length=64, null=True)

    root_id = models.CharField("根节点uuid", max_length=255)


class SubNodeRun(BaseNode):
    subprocess_run = models.ForeignKey(SubProcessRun, on_delete=models.CASCADE, null=True, db_constraint=False,
                                       related_name="sub_nodes_run")

    @staticmethod
    def field_names():
        # Field names of this model (not NodeRun), excluding the primary key
        return [field.name for field in SubNodeRun._meta.get_fields() if field.name not in ["id"]]


class NodeRun(BaseNode):
    process_run = models.ForeignKey(ProcessRun, on_delete=models.CASCADE, null=True, db_constraint=False,
                                    related_name="nodes_run")

    @staticmethod
    def field_names():
        return [field.name for field in NodeRun._meta.get_fields() if field.name not in ["id"]]


class NodeTemplate(BaseNode):
    template_type = models.CharField("节点模板类型", max_length=1, default=NodeTemplateType.ContentTemplate)
    inputs_component = JSONField("前端参数组件", default=list)
    outputs_component = JSONField("前端参数组件", default=list)
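`BaseNode` stores a node's retry policy as `fail_retry_count`, `fail_offset` and `fail_offset_unit`, where the unit is restricted to the `FAIL_OFFSET_UNIT_CHOICE` values `seconds`, `minutes` and `hours`. This commit does not show how the engine consumes these fields, so the sketch below is only one hypothetical way to turn them into a retry delay and a list of retry times; `retry_delay` and `retry_schedule` are illustrative names, not part of the project, and evenly spaced retries are an assumption.

```python
from datetime import datetime, timedelta
from typing import List

# Units allowed by FAIL_OFFSET_UNIT_CHOICE; they double as timedelta keyword names
ALLOWED_UNITS = {"seconds", "minutes", "hours"}


def retry_delay(fail_offset: int, fail_offset_unit: str) -> timedelta:
    """Convert a node's fail_offset/fail_offset_unit pair into a timedelta."""
    if fail_offset_unit not in ALLOWED_UNITS:
        raise ValueError(f"unsupported fail_offset_unit: {fail_offset_unit!r}")
    if fail_offset < 0:
        raise ValueError("fail_offset must be non-negative")
    # timedelta accepts seconds=, minutes= and hours= keyword arguments
    return timedelta(**{fail_offset_unit: fail_offset})


def retry_schedule(failed_at: datetime, fail_retry_count: int,
                   fail_offset: int, fail_offset_unit: str) -> List[datetime]:
    """Hypothetical: one retry every fail_offset units, fail_retry_count times."""
    step = retry_delay(fail_offset, fail_offset_unit)
    return [failed_at + step * (n + 1) for n in range(fail_retry_count)]


for t in retry_schedule(datetime(2022, 2, 9, 3, 29), 3, 5, "minutes"):
    print(t.strftime("%H:%M"))  # 03:34, 03:39, 03:44
```

Validating the unit up front matters because the model field has no default, so a missing or misspelled unit would otherwise surface as an obscure `TypeError` from `timedelta`.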

@@ -1,362 +0,0 @@

import json

from bamboo_engine import api
from django.db import transaction

from pipeline.eri.models import State
from pipeline.eri.runtime import BambooDjangoRuntime
from rest_framework import serializers

from applications.flow.constants import PIPELINE_STATE_TO_FLOW_STATE
from applications.flow.models import Process, Node, ProcessRun, NodeRun, NodeTemplate, SubProcessRun, SubNodeRun
from applications.utils.uuid_helper import get_uuid


class ProcessViewSetsSerializer(serializers.Serializer):
    name = serializers.CharField(required=True)
    description = serializers.CharField(required=False, allow_blank=True)
    category = serializers.ListField(default="null")
    run_type = serializers.CharField(default="null")
    pipeline_tree = serializers.JSONField(required=True)

    def save(self, **kwargs):
        if self.instance is not None:
            self.update(instance=self.instance, validated_data=self.validated_data)
        else:
            self.create(validated_data=self.validated_data)

    def create(self, validated_data):
        node_map = {}
        for node in validated_data["pipeline_tree"]["nodes"]:
            node_map[node["uuid"]] = node
        # Adjacency list: node uuid -> uuids of its direct successors
        dag = {k: [] for k in node_map.keys()}
        for line in validated_data["pipeline_tree"]["lines"]:
            dag[line["from"]].append(line["to"])
        with transaction.atomic():
            # description is optional, so it may be absent from validated_data
            process = Process.objects.create(name=validated_data["name"],
                                             description=validated_data.get("description"),
                                             run_type=validated_data["run_type"],
                                             dag=dag)
            bulk_nodes = []
            for node in node_map.values():
                node_data = node["node_data"]
                # Inputs may arrive either as a dict or as a JSON string
                if isinstance(node_data.get("inputs", {}), dict):
                    node_inputs = node_data.get("inputs", {})
                else:
                    node_inputs = json.loads(node_data["inputs"])
                bulk_nodes.append(Node(process=process,
                                       name=node_data["node_name"],
                                       uuid=node["uuid"],
                                       description=node_data["description"],
                                       fail_retry_count=node_data.get("fail_retry_count", 0) or 0,
                                       fail_offset=node_data.get("fail_offset", 0) or 0,
                                       fail_offset_unit=node_data.get("fail_offset_unit", "seconds"),
                                       node_type=node.get("type", 2),
                                       is_skip_fail=node_data["is_skip_fail"],
                                       is_timeout_alarm=node_data["is_timeout_alarm"],
                                       inputs=node_inputs,
                                       show=node["show"],
                                       top=node["top"],
                                       left=node["left"],
                                       ico=node["ico"],
                                       outputs={},
                                       component_code="http_request",
                                       content=node.get("content", 0) or 0
                                       ))
            Node.objects.bulk_create(bulk_nodes, batch_size=500)
        self._data = {}

    def update(self, instance, validated_data):
        node_map = {}
        for node in validated_data["pipeline_tree"]["nodes"]:
            node_map[node["uuid"]] = node
        # Adjacency list: node uuid -> uuids of its direct successors
        dag = {k: [] for k in node_map.keys()}
        for line in validated_data["pipeline_tree"]["lines"]:
            dag[line["from"]].append(line["to"])
        with transaction.atomic():
            instance.name = validated_data["name"]
            # Keep the stored description when the optional field is omitted
            instance.description = validated_data.get("description", instance.description)
            instance.run_type = validated_data["run_type"]
            instance.dag = dag
            instance.save()
            bulk_update_nodes = []
            bulk_create_nodes = []
            # Existing nodes of this process, keyed by uuid
            node_dict = Node.objects.filter(process_id=instance.id).in_bulk(field_name="uuid")
            for node in node_map.values():
                node_data = node["node_data"]
                node_obj = node_dict.get(node["uuid"], None)
                # Inputs may arrive either as a dict or as a JSON string
                if isinstance(node_data.get("inputs", {}), dict):
                    node_inputs = node_data.get("inputs", {})
                else:
                    node_inputs = json.loads(node_data["inputs"])
                if node_obj:
                    node_obj.content = node.get("content", 0) or 0
                    node_obj.name = node_data["node_name"]
                    node_obj.description = node_data["description"]
                    node_obj.fail_retry_count = node_data.get("fail_retry_count", 0) or 0
                    node_obj.fail_offset = node_data.get("fail_offset", 0) or 0
                    node_obj.fail_offset_unit = node_data.get("fail_offset_unit", "seconds")
                    node_obj.node_type = node.get("type", 3)
                    node_obj.is_skip_fail = node_data["is_skip_fail"]
                    node_obj.is_timeout_alarm = node_data["is_timeout_alarm"]
                    node_obj.inputs = node_inputs
                    node_obj.show = node["show"]
                    node_obj.top = node["top"]
                    node_obj.left = node["left"]
                    node_obj.ico = node["ico"]
                    node_obj.outputs = {}
                    node_obj.component_code = "http_request"
                    bulk_update_nodes.append(node_obj)
                else:
                    node_obj = Node()
                    node_obj.content = node.get("content", 0) or 0
                    node_obj.name = node_data["node_name"]
                    node_obj.description = node_data["description"]
                    node_obj.fail_retry_count = node_data.get("fail_retry_count", 0) or 0
                    node_obj.fail_offset = node_data.get("fail_offset", 0) or 0
                    node_obj.fail_offset_unit = node_data.get("fail_offset_unit", "seconds")
                    node_obj.node_type = node.get("type", 3)
                    node_obj.is_skip_fail = node_data["is_skip_fail"]
                    node_obj.is_timeout_alarm = node_data["is_timeout_alarm"]
                    node_obj.inputs = node_inputs
                    node_obj.show = node["show"]
                    node_obj.top = node["top"]
                    node_obj.left = node["left"]
                    node_obj.ico = node["ico"]
                    node_obj.outputs = {}
                    node_obj.component_code = "http_request"
                    node_obj.uuid = node["uuid"]
                    node_obj.process_id = instance.id
                    bulk_create_nodes.append(node_obj)
            # "content" must be listed here, otherwise its new value is never written
            Node.objects.bulk_update(bulk_update_nodes,
                                     fields=["content", "name", "description", "fail_retry_count", "fail_offset",
                                             "fail_offset_unit", "node_type", "is_skip_fail",
                                             "is_timeout_alarm", "inputs", "show", "top", "left", "ico",
                                             "outputs", "component_code"], batch_size=500)
            Node.objects.bulk_create(bulk_create_nodes, batch_size=500)
        self._data = {}

|

||||

|

||||
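The update branch above is an upsert keyed on `uuid`. Nodes whose `uuid` already exists for the process are modified and collected for `bulk_update()`, and the rest become new `Node` rows for `bulk_create()`. Below is a framework-free sketch of that partition. Plain dicts stand in for model instances, and `partition_by_uuid` is a hypothetical helper, not part of the project.

```python
from typing import Dict, List, Tuple


def partition_by_uuid(incoming: List[dict],
                      existing: Dict[str, dict]) -> Tuple[List[dict], List[dict]]:
    """Split incoming node payloads into (to_update, to_create) by their "uuid" key.

    `existing` maps uuid -> stored record, similar in shape to
    Node.objects.filter(...).in_bulk(field_name="uuid").
    """
    to_update, to_create = [], []
    for node in incoming:
        record = existing.get(node["uuid"])
        if record is not None:
            # Known uuid: overwrite the stored record's fields in place.
            record.update(node)
            to_update.append(record)
        else:
            # Unknown uuid: start a fresh record.
            to_create.append(dict(node))
    return to_update, to_create


existing = {"a": {"uuid": "a", "name": "old"}}
incoming = [{"uuid": "a", "name": "new"}, {"uuid": "b", "name": "fresh"}]
to_update, to_create = partition_by_uuid(incoming, existing)
print([n["name"] for n in to_update], [n["name"] for n in to_create])
# ['new'] ['fresh']
```

Django's `bulk_update()` writes only the columns named in its `fields` argument. Every attribute assigned in the update branch therefore has to appear in that list, or the change is silently dropped.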

class ListProcessViewSetsSerializer(serializers.ModelSerializer):
    class Meta:
        model = Process
        # fields = "__all__"
        exclude = ("dag",)



class ListProcessRunViewSetsSerializer(serializers.ModelSerializer):
    state = serializers.SerializerMethodField()

    class Meta:
        model = ProcessRun
        fields = "__all__"

    def get_state(self, obj):
        runtime = BambooDjangoRuntime()
        process_info = api.get_pipeline_states(runtime, root_id=obj.root_id)
        try:
            process_state = PIPELINE_STATE_TO_FLOW_STATE.get(process_info.data[obj.root_id]["state"])
        except Exception:
            process_state = "error"
        return process_state



class ListSubProcessRunViewSetsSerializer(serializers.ModelSerializer):
    state = serializers.SerializerMethodField()

    class Meta:
        model = SubProcessRun
        fields = "__all__"

    def get_state(self, obj):
        runtime = BambooDjangoRuntime()
        process_info = api.get_pipeline_states(runtime, root_id=obj.root_id)
        try:
            process_state = PIPELINE_STATE_TO_FLOW_STATE.get(process_info.data[obj.root_id]["state"])
        except Exception:
            process_state = "error"
        return process_state



class RetrieveProcessViewSetsSerializer(serializers.ModelSerializer):
    pipeline_tree = serializers.SerializerMethodField()

    # category = serializers.SerializerMethodField()
    #
    # def get_category(self, obj):
    #     return obj.category.all()

    def get_pipeline_tree(self, obj):
        lines = []
        nodes = []
        for _from, to_list in obj.dag.items():
            for _to in to_list:
                lines.append({
                    "from": _from,
                    "to": _to
                })
        node_list = Node.objects.filter(process_id=obj.id).values()
        node_content_id = [node["content"] for node in node_list if node.get("content", 0)]
        content_map = NodeTemplate.objects.filter(id__in=node_content_id).in_bulk()
        for node in node_list:
            node_template = content_map.get(node.get("content", 0), "")
            inputs_component = ""
            if node_template:
                inputs_component = json.dumps(node_template.inputs_component)
            nodes.append({"show": node["show"],
                          "top": node["top"],
                          "left": node["left"],
                          "ico": node["ico"],
                          "type": node["node_type"],
                          "name": node["name"],
                          "content": node["content"],
                          "node_data": {
                              "inputs": json.dumps(node["inputs"]),
                              "inputs_component": inputs_component,
                              "run_mark": 0,
                              "node_name": node["name"],
                              "description": node["description"],
                              "fail_retry_count": node["fail_retry_count"],
                              "fail_offset": node["fail_offset"],
                              "fail_offset_unit": node["fail_offset_unit"],
                              "is_skip_fail": node["is_skip_fail"],
                              "is_timeout_alarm": node["is_timeout_alarm"]},
                          "uuid": node["uuid"]})
        return {"lines": lines, "nodes": nodes}

    class Meta:
        model = Process
        fields = ("id", "name", "description", "category", "run_type", "pipeline_tree")


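`get_pipeline_tree` turns the stored `dag` adjacency mapping into the `lines` list the front end draws. The nested loop is equivalent to the comprehension below, assuming `dag` maps a node uuid to an iterable of successor uuids. `dag_to_lines` is a hypothetical name used only for this sketch.

```python
from typing import Dict, Iterable, List


def dag_to_lines(dag: Dict[str, Iterable[str]]) -> List[Dict[str, str]]:
    """Flatten {from_uuid: [to_uuid, ...]} into [{"from": ..., "to": ...}, ...]."""
    return [{"from": src, "to": dst} for src, targets in dag.items() for dst in targets]


dag = {"start": ["act"], "act": ["end"], "end": []}
print(dag_to_lines(dag))
# [{'from': 'start', 'to': 'act'}, {'from': 'act', 'to': 'end'}]
```

A sink node with an empty successor list, such as `end` here, contributes no edges. Its position and metadata travel in the separate `nodes` list instead.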

class RetrieveProcessRunViewSetsSerializer(serializers.ModelSerializer):
    pipeline_tree = serializers.SerializerMethodField()

    def get_pipeline_tree(self, obj):
        lines = []
        nodes = []
        for _from, to_list in obj.dag.items():
            for _to in to_list:
                lines.append({
                    "from": _from,
                    "to": _to
                })
        runtime = BambooDjangoRuntime()
        process_info = api.get_pipeline_states(runtime, root_id=obj.root_id)
        process_state = PIPELINE_STATE_TO_FLOW_STATE.get(process_info.data[obj.root_id]["state"])
        state_map = process_info.data[obj.root_id]["children"]
        node_list = NodeRun.objects.filter(process_run_id=obj.id).values()
        for node in node_list:
            pipeline_state = state_map.get(node["uuid"], {}).get("state", "READY")
            flow_state = PIPELINE_STATE_TO_FLOW_STATE[pipeline_state]
            outputs = ""
            # print(flow_state)
            if node["node_type"] not in [0, 1] and flow_state not in ["wait"]:
                output_data = api.get_execution_data_outputs(runtime, node_id=node["uuid"])
                outputs = output_data.data.get("outputs", "")
            if node["node_type"] == 3:
                # TODO: for now, simply treat the process as failed if any node has failed
                if State.objects.filter(parent_id=node["uuid"], name="FAILED").exists():
                    flow_state = "fail"
            # TODO: for now, simply treat the process as failed if any node has failed
            if flow_state == "fail":
                process_state = "fail"
            nodes.append({"show": node["show"],
                          "top": node["top"],
                          "left": node["left"],
                          "ico": node["ico"],
                          "type": node["node_type"],
                          "name": node["name"],
                          "state": flow_state,
                          "content": node["content"],
                          "node_data": {
                              "inputs": node["inputs"],
                              "outputs": outputs,
                              "run_mark": 0,
                              "node_name": node["name"],
                              "description": node["description"],
                              "fail_retry_count": node["fail_retry_count"],
                              "fail_offset": node["fail_offset"],
                              "fail_offset_unit": node["fail_offset_unit"],
                              "is_skip_fail": node["is_skip_fail"],
                              "is_timeout_alarm": node["is_timeout_alarm"]},
                          "uuid": node["uuid"]})
        return {"lines": lines, "nodes": nodes, "process_state": process_state}

    class Meta:
        model = ProcessRun
        fields = ("id", "name", "description", "run_type", "pipeline_tree")


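`RetrieveProcessRunViewSetsSerializer.get_pipeline_tree` asks the engine for the run's state and maps each child's engine state through `PIPELINE_STATE_TO_FLOW_STATE`. Nodes without an entry are treated as `READY`, and the whole run is marked `fail` as soon as any node maps to `fail`. That mapping is defined outside this excerpt, so `STATE_MAP` below is a hypothetical placeholder. The sketch also omits the extra `State` table lookup the serializer performs for type-3 nodes.

```python
from typing import Dict, List, Tuple

# Hypothetical placeholder: the real PIPELINE_STATE_TO_FLOW_STATE is defined elsewhere in the project.
STATE_MAP = {"READY": "wait", "RUNNING": "run", "FINISHED": "success", "FAILED": "fail"}


def aggregate_states(process_state: str, children: Dict[str, dict],
                     node_uuids: List[str]) -> Tuple[str, Dict[str, str]]:
    """Map each node's engine state to a flow state; any failed node fails the run."""
    node_states = {}
    for uuid in node_uuids:
        # Nodes the engine has not reached yet have no entry, so default to READY.
        engine_state = children.get(uuid, {}).get("state", "READY")
        flow_state = STATE_MAP[engine_state]
        if flow_state == "fail":
            process_state = "fail"
        node_states[uuid] = flow_state
    return process_state, node_states


children = {"a": {"state": "FINISHED"}, "b": {"state": "FAILED"}}
print(aggregate_states("run", children, ["a", "b", "c"]))
# ('fail', {'a': 'success', 'b': 'fail', 'c': 'wait'})
```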

class RetrieveSubProcessRunViewSetsSerializer(serializers.ModelSerializer):
    pipeline_tree = serializers.SerializerMethodField()

    def get_pipeline_tree(self, obj):
        lines = []
        nodes = []
        for _from, to_list in obj.dag.items():
            for _to in to_list:
                lines.append({
                    "from": _from,
                    "to": _to
                })
        runtime = BambooDjangoRuntime()
        process_info = api.get_pipeline_states(runtime, root_id=obj.root_id)
        process_state = PIPELINE_STATE_TO_FLOW_STATE.get(process_info.data[obj.root_id]["state"])
        state_map = process_info.data[obj.root_id]["children"]
        node_list = SubNodeRun.objects.filter(subprocess_run_id=obj.id).values()
        for node in node_list:
            pipeline_state = state_map.get(node["uuid"], {}).get("state", "READY")
            flow_state = PIPELINE_STATE_TO_FLOW_STATE[pipeline_state]
            outputs = ""
            # print(flow_state)
            if node["node_type"] not in [0, 1] and flow_state not in ["wait"]:
                output_data = api.get_execution_data_outputs(runtime, node_id=node["uuid"])
                outputs = output_data.data.get("outputs", "")
            if node["node_type"] == 3:
                # TODO: for now, simply treat the process as failed if any node has failed
                if State.objects.filter(parent_id=node["uuid"], name="FAILED").exists():
                    flow_state = "fail"
            # TODO: for now, simply treat the process as failed if any node has failed
            if flow_state == "fail":
                process_state = "fail"
            nodes.append({"show": node["show"],
                          "top": node["top"],
                          "left": node["left"],
                          "ico": node["ico"],
                          "type": node["node_type"],
                          "name": node["name"],
                          "state": flow_state,
                          "content": node["content"],
                          "node_data": {
                              "inputs": node["inputs"],
                              "outputs": outputs,
                              "run_mark": 0,
                              "node_name": node["name"],
                              "description": node["description"],
                              "fail_retry_count": node["fail_retry_count"],
                              "fail_offset": node["fail_offset"],
                              "fail_offset_unit": node["fail_offset_unit"],
                              "is_skip_fail": node["is_skip_fail"],
                              "is_timeout_alarm": node["is_timeout_alarm"]},
                          "uuid": node["uuid"]})
        return {"lines": lines, "nodes": nodes, "process_state": process_state}

    class Meta:
        model = SubProcessRun
        fields = ("id", "name", "description", "run_type", "pipeline_tree")



class ExecuteProcessSerializer(serializers.Serializer):
    process_id = serializers.IntegerField(required=True)


class NodeTemplateSerializer(serializers.ModelSerializer):

    def validate(self, attrs):
        attrs["uuid"] = get_uuid()
        return attrs

    class Meta:
        model = NodeTemplate
        exclude = ("uuid",)

@@ -1,3 +0,0 @@

from django.test import TestCase

# Create your tests here.

@@ -1,12 +0,0 @@

from rest_framework.routers import DefaultRouter

from . import views

flow_router = DefaultRouter()
flow_router.register(r"flow", viewset=views.ProcessViewSets, base_name="flow")
flow_router.register(r"run", viewset=views.ProcessRunViewSets, base_name="run")
flow_router.register(r"sub_run", viewset=views.SubProcessRunViewSets, base_name="sub_run")
flow_router.register(r"test", viewset=views.TestViewSets, base_name="test")

node_router = DefaultRouter()
node_router.register(r"template", viewset=views.NodeTemplateViewSet, base_name="template")

@@ -1,43 +0,0 @@

from applications.flow.models import ProcessRun, NodeRun, Process, Node, SubProcessRun, SubNodeRun
from applications.utils.dag_helper import PipelineBuilder, instance_dag


def build_and_create_process(process_id):
    """Build the pipeline and create its runtime data."""
    p_builder = PipelineBuilder(process_id)
    pipeline = p_builder.build()

    process = p_builder.process
    node_map = p_builder.node_map
    process_run_uuid = p_builder.instance

    # Instance data to be saved
    process_run_data = process.clone_data
    process_run_data["dag"] = instance_dag(process_run_data["dag"], process_run_uuid)
    process_run = ProcessRun.objects.create(process_id=process.id, root_id=pipeline["id"], **process_run_data)
    node_run_bulk = []
    for pipeline_id, node in node_map.items():
        _node = {k: v for k, v in node.__dict__.items() if k in NodeRun.field_names()}
        _node["uuid"] = process_run_uuid[pipeline_id].id
        node_run_bulk.append(NodeRun(process_run=process_run, **_node))
        if node.node_type == Node.SUB_PROCESS_NODE:
            create_subprocess(node.content, process_run.id, process_run_uuid, pipeline["id"])
    NodeRun.objects.bulk_create(node_run_bulk, batch_size=500)
    return pipeline


def create_subprocess(process_id, process_run_id, process_run_uuid, root_id):
    process = Process.objects.filter(id=process_id).first()
    process_run_data = process.clone_data
    process_run_data["dag"] = instance_dag(process_run_data["dag"], process_run_uuid)
    process_run = SubProcessRun.objects.create(
        process_id=process_id, process_run_id=process_run_id, root_id=root_id, **process_run_data
    )
    subprocess_node_map = Node.objects.filter(process_id=process_id).in_bulk(field_name="uuid")
    node_run_bulk = []
    for pipeline_id, node in subprocess_node_map.items():
        _node = {k: v for k, v in node.__dict__.items() if k in NodeRun.field_names()}
        _node["uuid"] = process_run_uuid[pipeline_id].id
        node_run_bulk.append(SubNodeRun(subprocess_run=process_run, **_node))
        if node.node_type == Node.SUB_PROCESS_NODE:
            create_subprocess(node.content, process_run_id, process_run_uuid, root_id)
    SubNodeRun.objects.bulk_create(node_run_bulk, batch_size=500)
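`build_and_create_process` and `create_subprocess` pass the template's `dag` through `instance_dag(...)`. They then give each `NodeRun`/`SubNodeRun` the id found in `process_run_uuid[pipeline_id].id`, so a run's nodes presumably get ids distinct from the template's. `instance_dag` lives in `applications.utils.dag_helper` and is not part of this excerpt. The sketch below is a hypothetical stand-in (`remap_dag`). It assumes the function simply renames every key and successor through an old-id to new-id mapping, and it uses plain strings rather than the objects with an `.id` attribute that the source uses.

```python
from typing import Dict, List


def remap_dag(dag: Dict[str, List[str]], id_map: Dict[str, str]) -> Dict[str, List[str]]:
    """Return a new adjacency mapping with every node id replaced via `id_map`.

    The input dag is not modified. Raises KeyError if the dag mentions a node id
    that `id_map` does not cover.
    """
    return {id_map[src]: [id_map[dst] for dst in targets] for src, targets in dag.items()}


template_dag = {"start": ["act"], "act": ["end"], "end": []}
run_ids = {"start": "run-1-start", "act": "run-1-act", "end": "run-1-end"}
print(remap_dag(template_dag, run_ids))
# {'run-1-start': ['run-1-act'], 'run-1-act': ['run-1-end'], 'run-1-end': []}
```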

@@ -1,144 +0,0 @@

from datetime import datetime
import random
from django.db.models import F

from applications.flow.utils import build_and_create_process
from bamboo_engine import api
from bamboo_engine.builder import *
from django.http import JsonResponse
from pipeline.eri.runtime import BambooDjangoRuntime
from rest_framework import mixins
from rest_framework.decorators import action
from rest_framework.response import Response

from applications.flow.filters import NodeTemplateFilter
from applications.flow.models import Process, Node, ProcessRun, NodeRun, NodeTemplate, SubProcessRun
from applications.flow.serializers import ProcessViewSetsSerializer, ListProcessViewSetsSerializer, \
    RetrieveProcessViewSetsSerializer, ExecuteProcessSerializer, ListProcessRunViewSetsSerializer, \
    RetrieveProcessRunViewSetsSerializer, NodeTemplateSerializer, ListSubProcessRunViewSetsSerializer, \
    RetrieveSubProcessRunViewSetsSerializer
from applications.utils.dag_helper import DAG, instance_dag, PipelineBuilder
from component.drf.viewsets import GenericViewSet



class ProcessViewSets(mixins.ListModelMixin,
                      mixins.CreateModelMixin,
                      mixins.RetrieveModelMixin,
                      mixins.DestroyModelMixin,
                      mixins.UpdateModelMixin,
                      GenericViewSet):
    queryset = Process.objects.order_by("-update_time")

    def get_serializer_class(self):
        if self.action == "list":
            return ListProcessViewSetsSerializer
        elif self.action == "retrieve":
            return RetrieveProcessViewSetsSerializer
        elif self.action == "execute":
            return ExecuteProcessSerializer
        return ProcessViewSetsSerializer

    @action(methods=["POST"], detail=False)
    def execute(self, request, *args, **kwargs):
        validated_data = self.is_validated_data(request.data)
        process_id = validated_data["process_id"]
        pipeline = build_and_create_process(process_id)
        # Run the pipeline
        runtime = BambooDjangoRuntime()
        api.run_pipeline(runtime=runtime, pipeline=pipeline)

        Process.objects.filter(id=process_id).update(total_run_count=F("total_run_count") + 1)

        return Response({})



class ProcessRunViewSets(mixins.ListModelMixin,
                         mixins.RetrieveModelMixin,
                         GenericViewSet):
    queryset = ProcessRun.objects.order_by("-update_time")

    def get_serializer_class(self):
        if self.action == "list":
            return ListProcessRunViewSetsSerializer
        elif self.action == "retrieve":
            return RetrieveProcessRunViewSetsSerializer
        elif self.action == "execute":
            return ExecuteProcessSerializer



class SubProcessRunViewSets(mixins.ListModelMixin,
                            mixins.RetrieveModelMixin,
                            GenericViewSet):
    queryset = SubProcessRun.objects.order_by("-update_time")

    def get_serializer_class(self):
        if self.action == "list":
            return ListSubProcessRunViewSetsSerializer
        elif self.action == "retrieve":
            return RetrieveSubProcessRunViewSetsSerializer



class TestViewSets(GenericViewSet):
    def list(self, request, *args, **kwargs):
        random_list = [1, 1, 1, 1, 1, 1, 1, 1, 1, 0]
        sign = random.choice(random_list)
        if sign:
            return Response({"now": datetime.now().strftime("%Y-%m-%d %H:%M:%S"), "data": request.query_params})
        else:
            raise Exception("Randomly raised exception")



class NodeTemplateViewSet(mixins.ListModelMixin,
                          mixins.CreateModelMixin,
                          mixins.UpdateModelMixin,
                          mixins.DestroyModelMixin,
                          mixins.RetrieveModelMixin,
                          GenericViewSet):
    queryset = NodeTemplate.objects.order_by("-id")
    serializer_class = NodeTemplateSerializer
    filterset_class = NodeTemplateFilter



# Create your views here.
def flow(request):
    # Use the builder to construct the pipeline description structure
    start = EmptyStartEvent()
    act = ServiceActivity(component_code="http_request")

    act2 = ServiceActivity(component_code="http_request")
    act2.component.inputs.n = Var(type=Var.PLAIN, value=50)

    act3 = ServiceActivity(component_code="http_request")
    act3.component.inputs.n = Var(type=Var.PLAIN, value=5)

    act4 = ServiceActivity(component_code="http_request")
    act5 = ServiceActivity(component_code="http_request")
    eg = ExclusiveGateway(
        conditions={
            0: '${exe_res} >= 0',
            1: '${exe_res} < 0'
        },
        name='act_2 or act_3'
    )
    pg = ParallelGateway()
    cg = ConvergeGateway()

    end = EmptyEndEvent()

    start.extend(act).extend(eg).connect(act2, act3).to(act2).extend(act4).extend(act5).to(eg).converge(end)
    # Global variables
    pipeline_data = Data()
    pipeline_data.inputs['${exe_res}'] = NodeOutput(type=Var.PLAIN, source_act=act.id, source_key='exe_res')

    pipeline = builder.build_tree(start, data=pipeline_data)
    print(pipeline)
    # Run the pipeline object
    runtime = BambooDjangoRuntime()

    api.run_pipeline(runtime=runtime, pipeline=pipeline)

    result = api.get_pipeline_states(runtime=runtime, root_id=pipeline["id"])

    result_output = api.get_execution_data_outputs(runtime, act.id).data
    # api.pause_pipeline(runtime=runtime, pipeline_id=pipeline["id"])
    return JsonResponse({})

@@ -1,5 +1,5 @@

from django.apps import AppConfig


class TaskConfig(AppConfig):
class ProjectConfig(AppConfig):
    name = 'task'

@@ -1,30 +0,0 @@

|

||||

# Generated by Django 2.2.6 on 2022-06-17 15:02

|

||||

|

||||

from django.db import migrations, models

|

||||

import django.db.models.deletion

|

||||

|

||||

|

||||

class Migration(migrations.Migration):

|

||||

|

||||

initial = True

|

||||

|

||||

dependencies = [

|

||||

('flow', '0006_auto_20220616_1616'),

|

||||

]

|

||||

|

||||

operations = [

|

||||

migrations.CreateModel(

|

||||

name='Task',

|

||||

fields=[

|

||||

('id', models.AutoField(auto_created=True, primary_key=True, serialize=False, verbose_name='ID')),

|

||||

('name', models.CharField(max_length=255, verbose_name='任务名称')),

|

||||

('run_type', models.CharField(choices=[('hand', '手动'), ('now', '立即'), ('time', '定时'), ('cycle', '周期'), ('cron', 'cron表达式')], max_length=64, verbose_name='执行方式')),

|

||||

('when_start', models.CharField(max_length=100, verbose_name='执行时间')),

|

||||

('cycle_time', models.CharField(max_length=20, null=True, verbose_name='周期时间')),

|

||||

('cycle_type', models.CharField(choices=[('min', '分钟'), ('hour', '小时'), ('day', '天')], max_length=20, null=True, verbose_name='周期间隔(min,hour,day)')),

|

||||

('cron_time', models.TextField(default='', verbose_name='cron表达式')),

|

||||

('celery_task_id', models.CharField(max_length=64, null=True, verbose_name='celery的任务ID')),

|

||||

('process_run', models.ForeignKey(db_constraint=False, null=True, on_delete=django.db.models.deletion.CASCADE, related_name='tasks', to='flow.ProcessRun')),

|

||||

],

|

||||

),

|

||||

]

|

||||

@@ -1,29 +1,3 @@

|

||||

from django.db import models

|

||||

|

||||

from applications.flow.models import ProcessRun

|

||||

|

||||

|

||||

class Task(models.Model):

|

||||

TypeChoices = (

|

||||

("hand", "手动"),

|

||||

("now", "立即"),

|

||||

("time", "定时"),

|

||||

("cycle", "周期"),

|

||||

("cron", "cron表达式"),

|

||||

)

|

||||

CycleChoices = (

|

||||

("min", "分钟"),

|

||||

("hour", "小时"),

|

||||

("day", "天"),

|

||||

)

|

||||

name = models.CharField("任务名称", max_length=255, blank=False, null=False)

|

||||

|

||||

process_run = models.ForeignKey(ProcessRun, on_delete=models.CASCADE, null=True, db_constraint=False,

|

||||

related_name="tasks")

|

||||

run_type = models.CharField("执行方式", choices=TypeChoices,max_length=64)

|

||||

when_start = models.CharField(max_length=100, verbose_name="执行时间")

|

||||

cycle_time = models.CharField(max_length=20, null=True, verbose_name="周期时间")

|

||||

cycle_type = models.CharField(max_length=20, null=True, verbose_name="周期间隔(min,hour,day)", choices=CycleChoices)

|

||||

cron_time = models.TextField(default="", verbose_name="cron表达式")

|

||||

|

||||

celery_task_id = models.CharField(max_length=64, null=True, verbose_name="celery的任务ID")

|

||||

# Create your models here.

|

||||

|

||||
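The `Task` model stores a periodic schedule as a string `cycle_time` plus a `cycle_type` unit from `CycleChoices` (`min`, `hour`, `day`), alongside `when_start`. This commit does not show how the scheduler consumes these fields. The sketch below assumes that `cycle_time` holds a positive integer count and that `when_start` has already been parsed into a `datetime`. It uses the hypothetical helpers `cycle_interval` and `next_run` to compute the next start time.

```python
from datetime import datetime, timedelta

# Units taken from Task.CycleChoices; mapping them to timedelta keywords is an assumption.
_UNIT_TO_KWARG = {"min": "minutes", "hour": "hours", "day": "days"}


def cycle_interval(cycle_time: str, cycle_type: str) -> timedelta:
    """Convert the stored (cycle_time, cycle_type) pair into a positive timedelta."""
    if cycle_type not in _UNIT_TO_KWARG:
        raise ValueError(f"unknown cycle_type: {cycle_type!r}")
    step = timedelta(**{_UNIT_TO_KWARG[cycle_type]: int(cycle_time)})
    if step <= timedelta(0):
        raise ValueError("cycle_time must be a positive integer")
    return step


def next_run(when_start: datetime, cycle_time: str, cycle_type: str, now: datetime) -> datetime:
    """First start time at or after `now`, stepping from `when_start` by the cycle interval."""
    step = cycle_interval(cycle_time, cycle_type)
    if now <= when_start:
        return when_start
    # Whole intervals elapsed, rounded up so the result is never in the past.
    periods = -(-(now - when_start) // step)
    return when_start + periods * step


print(next_run(datetime(2022, 6, 17, 8, 0), "30", "min", datetime(2022, 6, 17, 9, 10)))
# 2022-06-17 09:30:00
```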

@@ -1,9 +0,0 @@

|

||||

from rest_framework import serializers

|

||||

|

||||

from applications.task.models import Task

|

||||

|

||||

|

||||

class TaskSerializer(serializers.ModelSerializer):

|

||||

class Meta:

|

||||

model = Task

|

||||

fields = "__all__"

|

||||

@@ -1,6 +0,0 @@

from rest_framework.routers import DefaultRouter

from . import views

task_router = DefaultRouter()
task_router.register(r"task", viewset=views.TaskViewSets, base_name="task")

@@ -1,12 +1,3 @@

from django.shortcuts import render

from applications.task.models import Task
from applications.task.serializers import TaskSerializer
from component.drf.viewsets import GenericViewSet
from rest_framework import mixins


class TaskViewSets(mixins.ListModelMixin,
                   GenericViewSet):
    queryset = Task.objects.order_by("-id")
    serializer_class = TaskSerializer
# Create your views here.

@@ -1,291 +0,0 @@

from collections import OrderedDict, defaultdict
from copy import copy, deepcopy

from applications.flow.models import Process, Node
from bamboo_engine.builder import EmptyStartEvent, EmptyEndEvent, ExclusiveGateway, ServiceActivity, Var, builder, Data, \
    ParallelGateway, ConvergeGateway, ConditionalParallelGateway, SubProcess


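In the `DAG` class in this file, `add_edge` first adds the edge to a deep copy of the graph. It commits the edge only when `self.validate(...)` accepts that copy, and `validate` itself is outside this excerpt. One standard way to perform such an acyclicity check is Kahn's algorithm, sketched here on the same `{node: set(successors)}` shape. This is an illustration, not necessarily what `validate` does, and `topological_order` is a hypothetical name.

```python
from collections import deque
from typing import Dict, Hashable, List, Set, Tuple


def topological_order(graph: Dict[Hashable, Set[Hashable]]) -> Tuple[bool, List[Hashable]]:
    """Kahn's algorithm on a {node: set(successors)} graph.

    Returns (is_acyclic, order). `order` covers every node only when the graph is acyclic.
    Assumes every successor is itself a key of `graph`, as add_edge guarantees.
    """
    indegree = {node: 0 for node in graph}
    for targets in graph.values():
        for dst in targets:
            indegree[dst] += 1
    # Start from nodes nothing depends on.
    queue = deque(node for node, deg in indegree.items() if deg == 0)
    order = []
    while queue:
        node = queue.popleft()
        order.append(node)
        for dst in graph[node]:
            indegree[dst] -= 1
            if indegree[dst] == 0:
                queue.append(dst)
    # Nodes left unvisited sit on (or behind) a cycle.
    return len(order) == len(graph), order


print(topological_order({"a": {"b"}, "b": {"c"}, "c": set()}))
# (True, ['a', 'b', 'c'])
```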

class DAG(object):
    """ Directed acyclic graph implementation. """

    def __init__(self):
        """ Construct a new DAG with no nodes or edges. """
        self.reset_graph()

    def add_node(self, node_name, graph=None):
        """ Add a node if it does not exist yet, or error out. """
        if not graph:
            graph = self.graph
        if node_name in graph:
            raise KeyError('node %s already exists' % node_name)
        graph[node_name] = set()

    def add_node_if_not_exists(self, node_name, graph=None):
        try:
            self.add_node(node_name, graph=graph)
        except KeyError:
            pass

    def delete_node(self, node_name, graph=None):
        """ Deletes this node and all edges referencing it. """
        if not graph:
            graph = self.graph
        if node_name not in graph:
            raise KeyError('node %s does not exist' % node_name)
        graph.pop(node_name)

        for node, edges in graph.items():
            if node_name in edges:
                edges.remove(node_name)

    def delete_node_if_exists(self, node_name, graph=None):
        try:
            self.delete_node(node_name, graph=graph)
        except KeyError:
            pass

    def add_edge(self, ind_node, dep_node, graph=None):
        """ Add an edge (dependency) between the specified nodes. """
        if not graph:
            graph = self.graph
        if ind_node not in graph or dep_node not in graph:
            raise KeyError('one or more nodes do not exist in graph')
        test_graph = deepcopy(graph)
        test_graph[ind_node].add(dep_node)
        is_valid, message = self.validate(test_graph)
        if is_valid:
            graph[ind_node].add(dep_node)
        else:
            # Surface the validator's reason instead of a bare Exception.
            raise Exception(message)

    def delete_edge(self, ind_node, dep_node, graph=None):
        """ Delete an edge from the graph. """
        if not graph:
            graph = self.graph
        if dep_node not in graph.get(ind_node, []):
            raise KeyError('this edge does not exist in graph')
        graph[ind_node].remove(dep_node)

    def rename_edges(self, old_task_name, new_task_name, graph=None):
        """ Change references to a task in existing edges. """
        if not graph:
            graph = self.graph
        # Iterate over a snapshot: the loop body adds and deletes keys of `graph`.
        for node, edges in list(graph.items()):

            if node == old_task_name:
                graph[new_task_name] = copy(edges)
                del graph[old_task_name]

            else:
                if old_task_name in edges:
                    edges.remove(old_task_name)
                    edges.add(new_task_name)

    def predecessors(self, node, graph=None):